SAST and DAST are the two foundational pillars of application security testing. While both aim to identify vulnerabilities, they operate in fundamentally different ways.

They differ in:

How they analyze applications

What types of vulnerabilities they detect

When they run in the software development lifecycle

How they integrate into DevSecOps workflows

Choosing between SAST and DAST is not an either-or decision.

The real questions are:

Where does each method fit within your security program?

At what stage should each be deployed?

Can modern AI-native security tools reduce the traditional gaps between them?

This guide provides:

A clear explanation of how SAST (Static Application Security Testing) works

A breakdown of how DAST (Dynamic Application Security Testing) works

A comparison that includes IAST (Interactive Application Security Testing) and SCA (Software Composition Analysis)

A practical decision matrix to help you choose the right approach based on your:

Team size

Development maturity

Compliance requirements

Risk profile

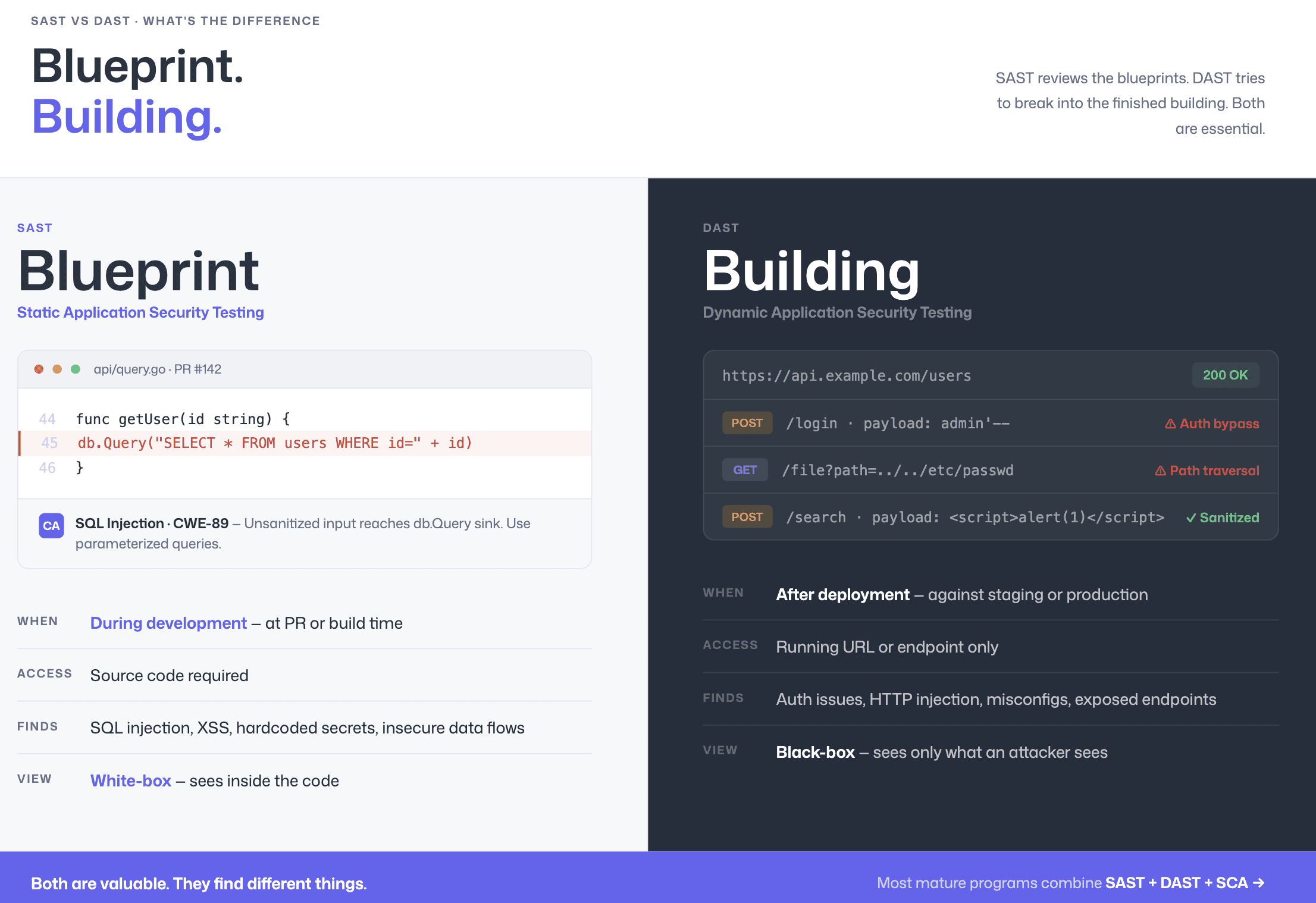

SAST vs DAST: What’s the Difference?

The core difference between SAST and DAST is simple:

SAST analyzes code during development without running the application (white-box testing).

DAST tests a running application from the outside to find exploitable weaknesses (black-box testing).

What is SAST?

Static Application Security Testing (SAST) scans source code, bytecode, or binaries to detect vulnerabilities before deployment.

It:

Reads and parses code

Traces data flows

Identifies insecure patterns

Flags issues like SQL injection, XSS, hardcoded secrets, and insecure cryptography

SAST runs early in the SDLC, typically at the commit, pull request, or CI stage, making it a shift-left security control.
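As a minimal illustration of the kind of pattern a SAST tool is designed to flag, the sketch below contrasts a query built by string concatenation with a parameterized one. The `users` table, the function names, and the sample data are hypothetical and exist only for this example.

```python
import sqlite3

def find_user_unsafe(conn, username):
    # Untrusted input is concatenated into the SQL text: a classic
    # injection sink that static analysis is designed to flag.
    query = "SELECT id, name FROM users WHERE name = '" + username + "'"
    return conn.execute(query).fetchall()

def find_user_safe(conn, username):
    # The value is passed as a bound parameter, so it is never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()

# Hypothetical in-memory database used only for the demonstration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "nobody' OR '1'='1"
print(find_user_unsafe(conn, payload))  # the payload rewrites the WHERE clause
print(find_user_safe(conn, payload))    # the payload is treated as a literal name
```

With the unsafe version the payload matches every row; with the parameterized version it matches none.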

What is DAST?

Dynamic Application Security Testing (DAST) evaluates a live, running application by sending crafted HTTP requests and analyzing responses.

It:

Requires no access to source code

Simulates external attack behavior

Detects runtime vulnerabilities such as authentication flaws, misconfigurations, and exposed endpoints

DAST runs after deployment, usually in staging or production environments.

Both are valuable. They operate at different stages and uncover different classes of risk.

| Dimension | SAST (Static) | DAST (Dynamic) |
| --- | --- | --- |
| Testing approach | White-box; analyzes source code from the inside | Black-box; tests running application from the outside |
| When it runs | During development, at commit, PR, or build time | After deployment, against staging or production |
| Input required | Source code access | Running URL / endpoint |
| What it finds | SQL injection, XSS, hardcoded secrets, insecure crypto, buffer overflows, business-logic flaws in code | Authentication bypasses, server misconfigurations, exposed endpoints, runtime injection, CORS issues |
| What it misses | Runtime and configuration issues, environment-specific vulnerabilities | The specific code causing the vulnerability, issues not reachable via tested endpoints |
| False positive rate | Higher in rule-based tools; lowest in AI-native tools like CodeAnt AI | Lower overall, but limited to tested paths |
| Feedback speed | Seconds to minutes (inline in PR) | Minutes to hours (after deployment) |
| Fix cost | Low; found during development, before code is merged | High; found after deployment, requires context-switching back to code |

The comparison above explains why security teams that rely on only one approach have blind spots.

SAST catches code-level vulnerabilities early but cannot detect runtime misconfigurations.

DAST finds deployment-specific issues but cannot tell you which line of code is responsible.

For a comparison of 15 tools that implement these approaches, see our complete SAST tools comparison for 2026.

How SAST Works (White-Box Testing)

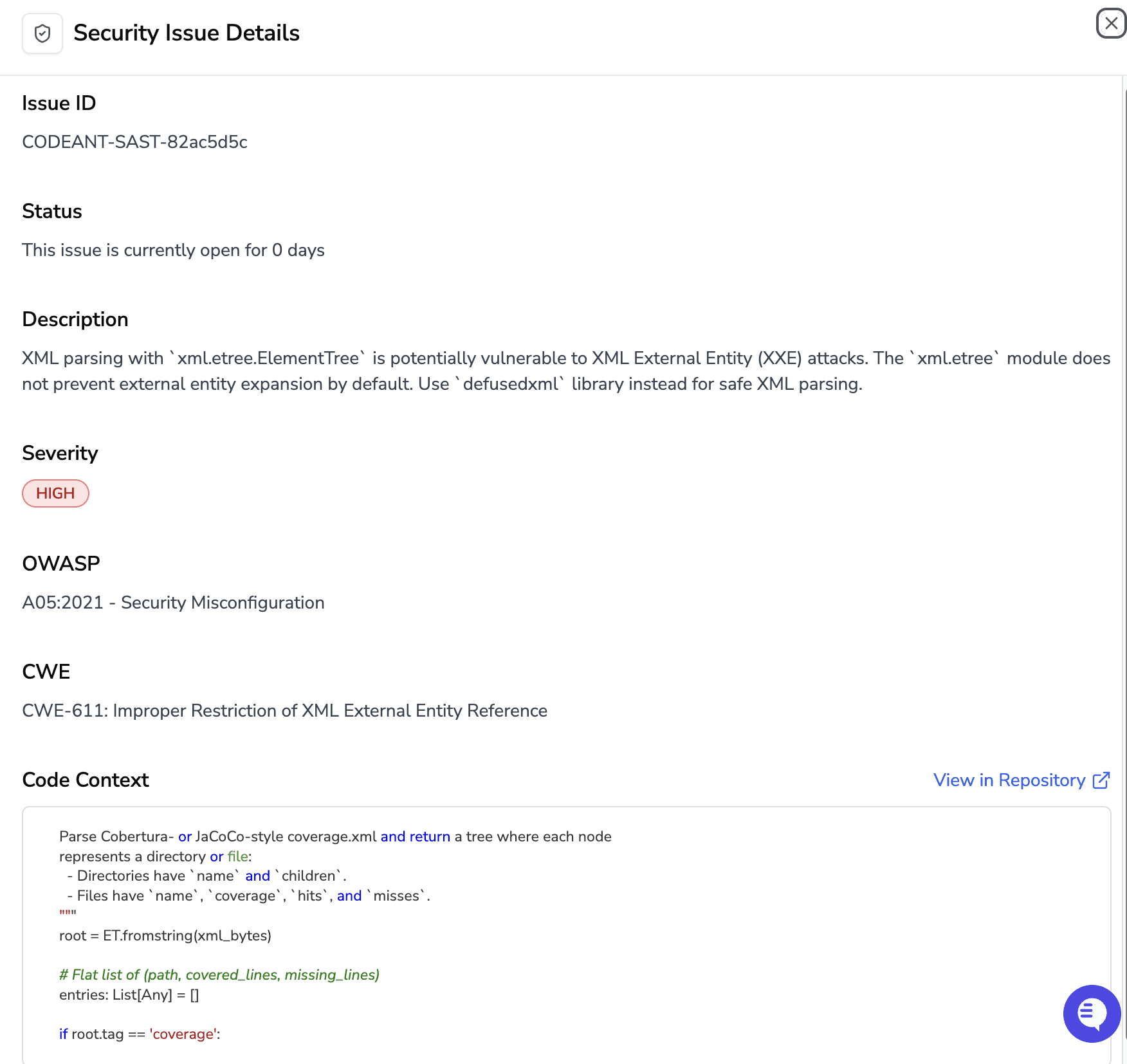

SAST tools parse source code into abstract syntax trees (ASTs), apply security rules or AI-powered analysis to detect vulnerable patterns, prioritize findings by severity and exploitability, and deliver results with remediation guidance. The process happens entirely during development, with no running application needed.

The technical process follows four steps:

code parsing and AST construction

rule-based or AI-powered analysis (including taint analysis that traces untrusted input from source to sink)

vulnerability correlation and prioritization

developer feedback through PR comments, IDE warnings, or dashboard alerts
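The parsing and taint-analysis steps above can be sketched with Python's standard `ast` module. This is a deliberately small model, not how any particular product works: the `SOURCES` and `SINKS` sets, the `TaintVisitor` class, and the sample snippet are all assumptions made for illustration. The analysis ignores control flow, function boundaries, and sanitizers.

```python
import ast

SOURCES = {"input"}             # calls treated as untrusted input (assumption)
SINKS = {"execute", "system"}   # call names treated as dangerous sinks (assumption)

class TaintVisitor(ast.NodeVisitor):
    def __init__(self):
        self.tainted = set()    # variable names known to hold untrusted data
        self.findings = []      # (line number, sink name) pairs

    def is_tainted(self, node):
        # An expression is tainted if it contains a source call or a tainted name.
        for sub in ast.walk(node):
            if isinstance(sub, ast.Name) and sub.id in self.tainted:
                return True
            if (isinstance(sub, ast.Call) and isinstance(sub.func, ast.Name)
                    and sub.func.id in SOURCES):
                return True
        return False

    def visit_Assign(self, node):
        # Propagate taint from the right-hand side to simple variable targets.
        if self.is_tainted(node.value):
            for target in node.targets:
                if isinstance(target, ast.Name):
                    self.tainted.add(target.id)
        self.generic_visit(node)

    def visit_Call(self, node):
        # Report a finding when tainted data reaches a sink call.
        func = node.func
        name = func.attr if isinstance(func, ast.Attribute) else getattr(func, "id", None)
        if name in SINKS and any(self.is_tainted(arg) for arg in node.args):
            self.findings.append((node.lineno, name))
        self.generic_visit(node)

def scan(source):
    visitor = TaintVisitor()
    visitor.visit(ast.parse(source))
    return visitor.findings

SAMPLE = """
name = input()
query = "SELECT * FROM users WHERE name = '" + name + "'"
cursor.execute(query)
cursor.execute("SELECT 1")
"""

print(scan(SAMPLE))
```

Only the `execute` call that receives data derived from `input()` is reported; the constant query is not.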

For a complete walkthrough with code examples, see What Is SAST?.

Where SAST excels

SAST provides the earliest possible detection, catching vulnerabilities at the pull request level, where remediation takes minutes rather than weeks.

It achieves full code coverage (every line is analyzed, not just paths exercised by tests), and it integrates directly into the developer’s workflow. The shift-left security approach embeds SAST scanning early in the SDLC precisely because the cost of fixing a vulnerability is commonly estimated to increase by 10–100x as it moves from development to production.

Where SAST falls short

Traditional SAST tools have clear limitations. Because they analyze code without executing it, they cannot detect:

Runtime configuration issues

Server misconfigurations

Authentication logic dependent on session state

Environment-specific vulnerabilities that appear only in live systems

These gaps exist because static analysis lacks runtime context.

Rule-based SAST tools also struggle with false positives. For example, a scanner may flag a SQL injection pattern even if the application framework automatically sanitizes that input. Without understanding framework-specific behavior, the tool cannot determine whether the code path is actually exploitable.
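To make the false-positive problem concrete, here is a hedged sketch of a naive pattern rule. The regular expression, the variable name `user_input`, and the two sample lines are hypothetical; real rule engines are more sophisticated, but the failure mode is the same in kind.

```python
import re

# Hypothetical rule: flag any execute() call that mentions user_input,
# without checking how the value is actually used.
RULE = re.compile(r"execute\(.*user_input")

LINES = [
    # Real issue: the value is concatenated into the SQL text.
    'cur.execute("SELECT * FROM t WHERE id = " + user_input)',
    # Safe: the value is passed as a bound parameter.
    'cur.execute("SELECT * FROM t WHERE id = ?", (user_input,))',
]

flagged = [line for line in LINES if RULE.search(line)]
for line in flagged:
    print("flagged:", line)
```

Both lines match the rule, even though the second one is not exploitable. That second match is the noise a developer has to dismiss by hand.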

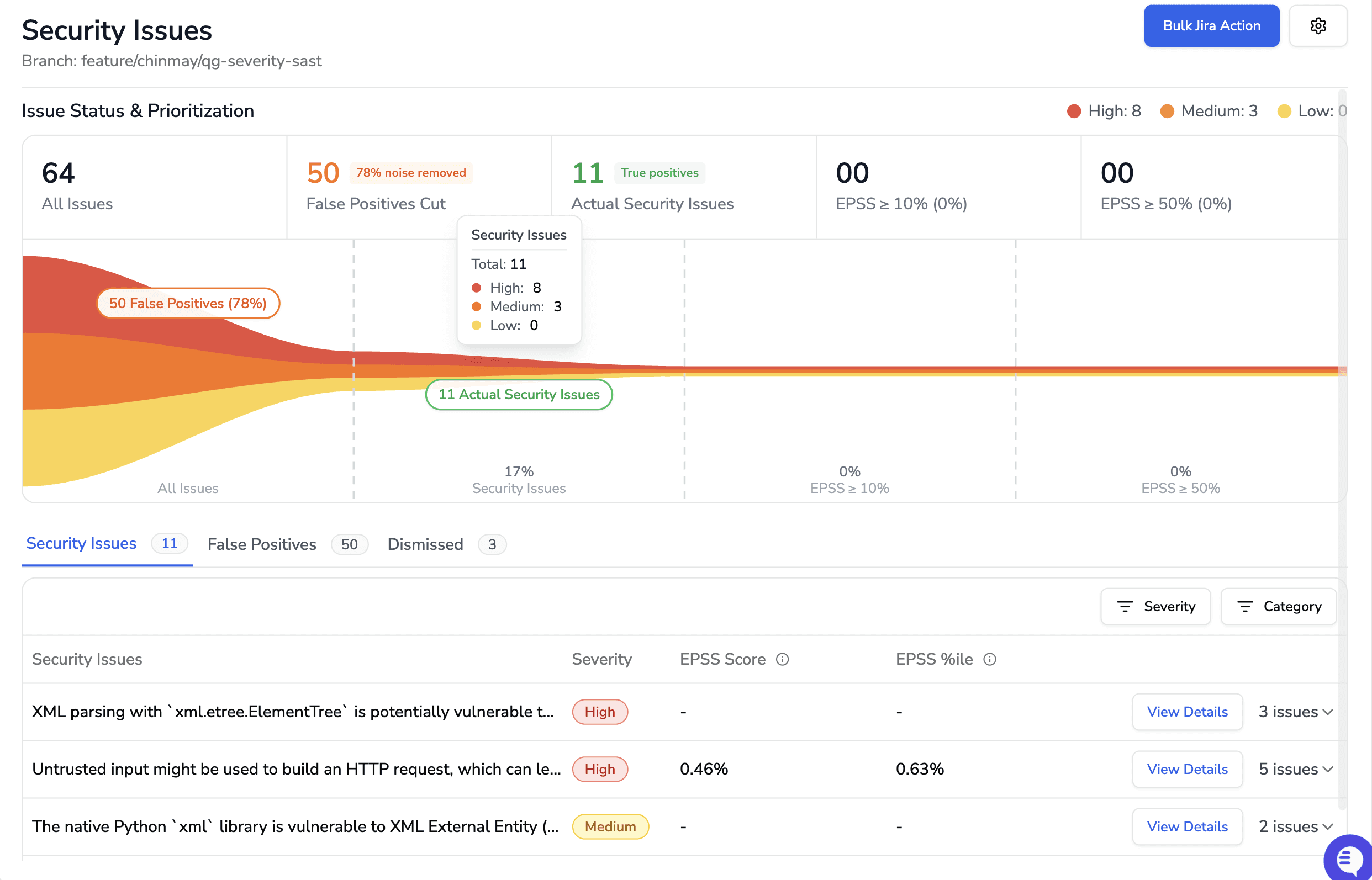

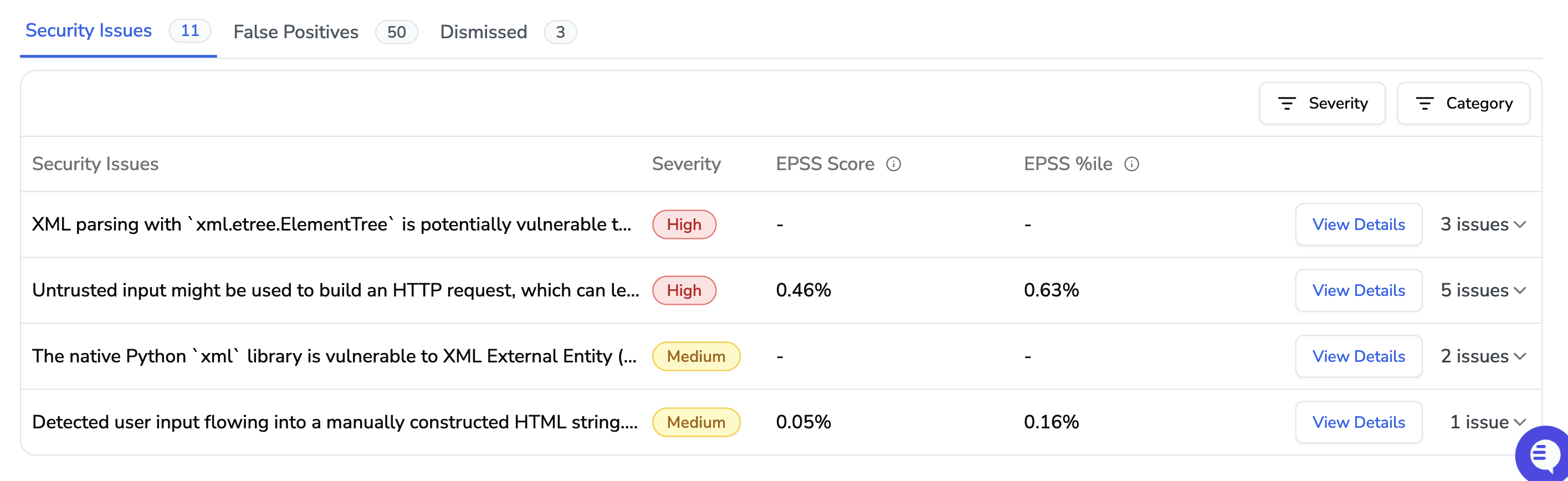

This is where AI-native SAST changes the equation. Tools like CodeAnt AI use LLM-powered reasoning to:

Understand framework-level sanitization

Analyze real data flows instead of simple pattern matches

Determine exploitability, not just pattern similarity

The result is significantly fewer false positives. Developers spend their time fixing real vulnerabilities instead of dismissing noise.

CodeAnt AI also enhances prioritization with EPSS (Exploit Prediction Scoring System) for every finding. Instead of relying only on severity labels, teams can prioritize based on real-world exploit probability, focusing first on vulnerabilities most likely to be targeted in the wild.
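A simple way to picture probability-based prioritization is to sort findings by exploit probability before severity. The sketch below is not CodeAnt AI's implementation; the `Finding` class, the identifiers, and the scores are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    identifier: str
    severity: str   # "critical", "high", "medium", or "low"
    epss: float     # estimated exploit probability, 0.0 to 1.0

SEVERITY_RANK = {"critical": 3, "high": 2, "medium": 1, "low": 0}

def prioritize(findings):
    # Highest exploit probability first; severity only breaks ties.
    return sorted(
        findings,
        key=lambda f: (f.epss, SEVERITY_RANK[f.severity]),
        reverse=True,
    )

# Illustrative values only, not real scores.
findings = [
    Finding("F-1", "critical", 0.02),
    Finding("F-2", "medium", 0.91),
    Finding("F-3", "high", 0.40),
]

for f in prioritize(findings):
    print(f.identifier, f.severity, f.epss)
```

In this made-up data the medium-severity finding is handled first because it is far more likely to be exploited than the critical one.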

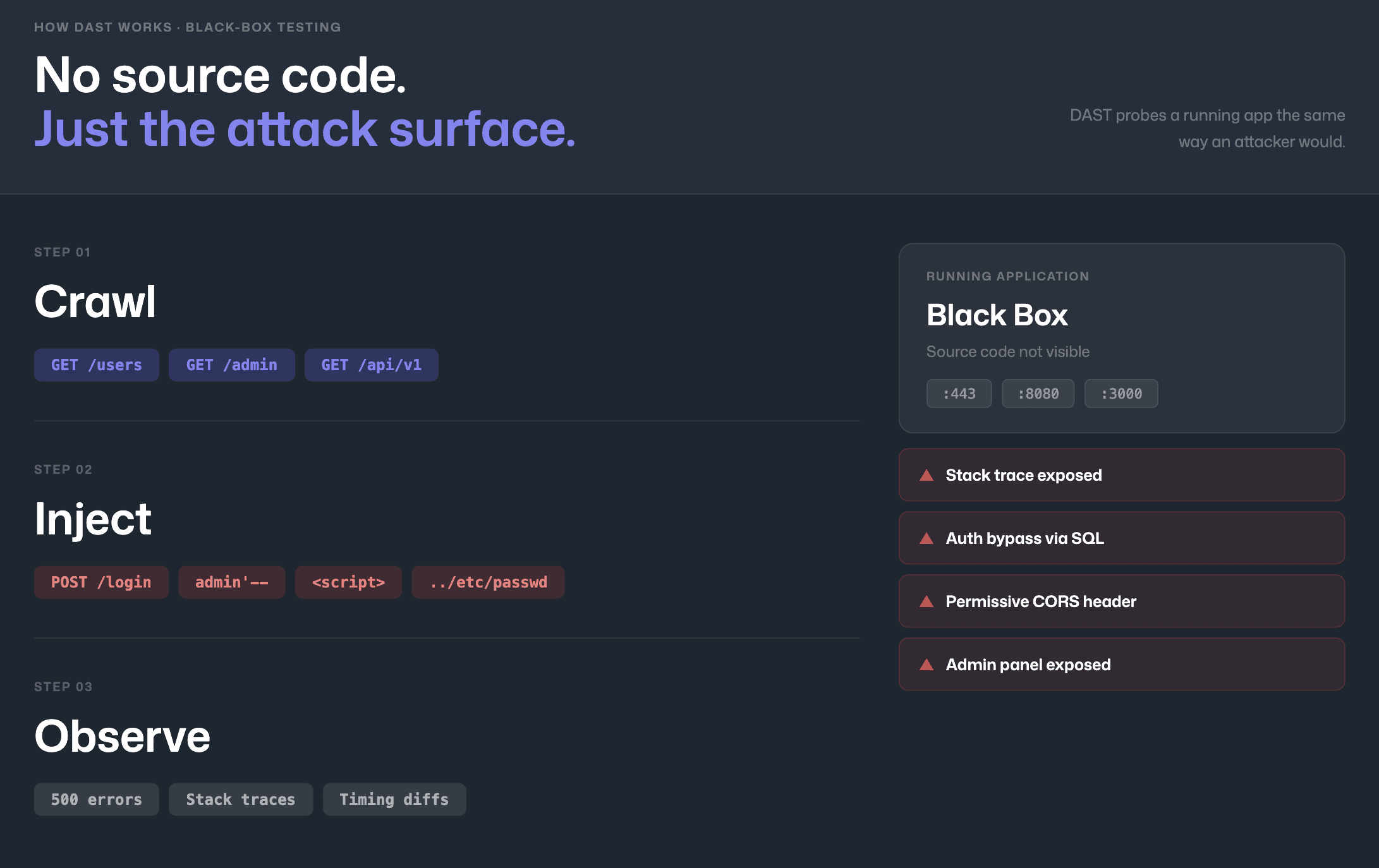

How DAST Works (Black-Box Testing)

DAST tools work by sending crafted HTTP requests to a running application and analyzing the responses for signs of vulnerabilities. The scanner acts like an automated attacker, probing endpoints, submitting malicious payloads, and observing whether the application behaves in unexpected ways.

A typical DAST scan follows this sequence:

The tool crawls the application to discover endpoints and input fields

It generates attack payloads targeting common vulnerability classes (injection, authentication bypass, XSS)

It submits those payloads

It analyzes responses for indicators of successful exploitation: error messages revealing stack traces, reflected input in HTML, unexpected redirects, or timing differences that suggest blind injection
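The payload-and-response part of that sequence can be sketched using only the Python standard library. The example starts a deliberately vulnerable local server (`VulnerableHandler`, a hypothetical demo target), sends one reflected-XSS payload, and checks whether it comes back unescaped. Crawling is omitted, and the parameter name `q` is an assumption.

```python
import threading
import urllib.parse
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

class VulnerableHandler(BaseHTTPRequestHandler):
    """Deliberately vulnerable demo target: echoes the q parameter unescaped."""

    def do_GET(self):
        params = urllib.parse.parse_qs(urllib.parse.urlparse(self.path).query)
        q = params.get("q", [""])[0]
        body = f"<html><body>Results for {q}</body></html>".encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/html")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the demo output quiet

def probe_reflected_xss(base_url, param="q"):
    # Send a marker payload and report whether it is reflected verbatim.
    payload = "<script>alert(1)</script>"
    url = base_url + "/?" + urllib.parse.urlencode({param: payload})
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(url, timeout=5) as resp:
        body = resp.read().decode("utf-8")
    return payload in body

# Bind to an ephemeral local port so the example does not touch real systems.
server = HTTPServer(("127.0.0.1", 0), VulnerableHandler)
threading.Thread(target=server.serve_forever, daemon=True).start()
base_url = f"http://127.0.0.1:{server.server_port}"

reflected = probe_reflected_xss(base_url)
server.shutdown()
server.server_close()
print("reflected XSS suspected:", reflected)
```

The probe knows nothing about the handler's source code. It infers the weakness purely from the response, which is also why it cannot point to the responsible line.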

Where DAST excels

DAST identifies issues that static analysis cannot detect because it tests a live, running application.

It is particularly strong at uncovering:

Server misconfigurations, such as exposed admin panels, permissive CORS policies, or missing security headers

Authentication and session-handling vulnerabilities that depend on runtime state

Environment-specific weaknesses that only appear after deployment

Because DAST operates externally, it does not require access to source code. This makes it especially valuable for:

Testing third-party components

Assessing legacy systems

Validating production or staging environments
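Header and CORS checks of the kind listed above reduce to inspecting a response. The sketch below works on a plain dictionary of response headers; the `EXPECTED_HEADERS` list and the `audit_headers` function are assumptions for illustration, not a complete or authoritative policy.

```python
# Headers this sketch expects on every response (an illustrative subset).
EXPECTED_HEADERS = (
    "Content-Security-Policy",
    "Strict-Transport-Security",
    "X-Content-Type-Options",
    "X-Frame-Options",
)

def audit_headers(headers):
    # Header names are case-insensitive, so normalize before comparing.
    normalized = {name.lower(): value for name, value in headers.items()}
    findings = [
        f"missing {name}" for name in EXPECTED_HEADERS
        if name.lower() not in normalized
    ]
    if normalized.get("access-control-allow-origin") == "*":
        findings.append("permissive CORS: Access-Control-Allow-Origin is *")
    return findings

# Hypothetical response headers captured from a staging endpoint.
sample = {
    "Content-Type": "text/html",
    "X-Frame-Options": "DENY",
    "Access-Control-Allow-Origin": "*",
}

for finding in audit_headers(sample):
    print(finding)
```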

Where DAST falls short

DAST runs late in the software development lifecycle, typically against staging or production environments. By the time it identifies a vulnerability, the developer who wrote the code has often moved on to other work.

Remediation is slower because:

The external symptom must be traced back to the exact line of code

Debugging often involves a different engineer than the original author

Fixes may require redeployment cycles

DAST is also limited to what it can reach. Its effectiveness depends on crawling logic and payload generation, which means:

Business logic behind authenticated workflows may not be fully tested

API endpoints not exposed through the UI can be missed

Complex single-page application states may remain unexamined

The fundamental tradeoff is timing. DAST catches runtime issues that SAST cannot, but it discovers them later, when fixes are more expensive and disruptive.

This is why modern security programs prioritize strong SAST coverage early in development, reducing the number of issues that need to be caught downstream by DAST.

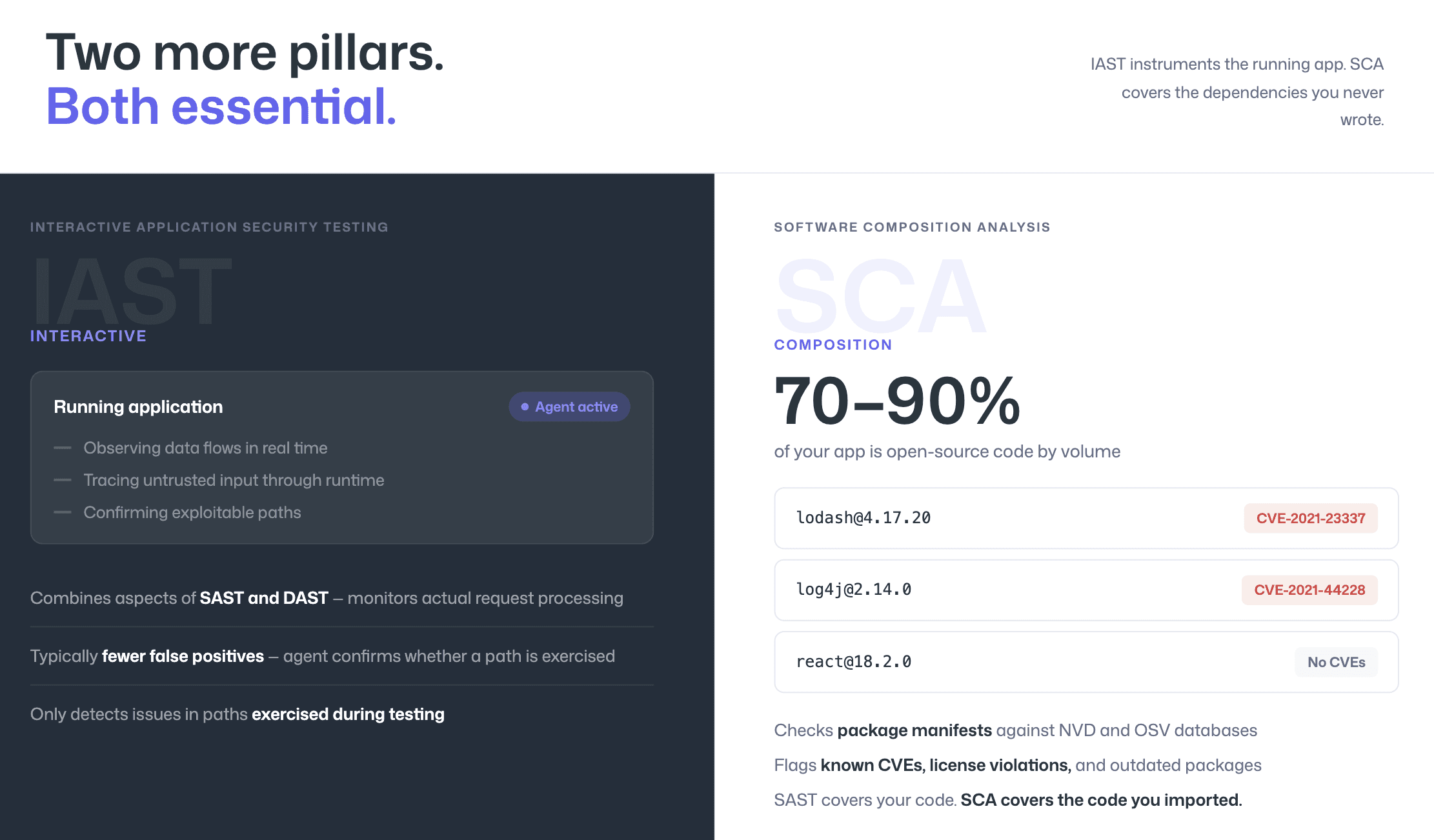

IAST and SCA: The Other Two Pillars

SAST and DAST are the most frequently compared testing methods, but two additional approaches complete a modern application security strategy: IAST and SCA.

Interactive Application Security Testing (IAST)

IAST instruments a running application with an agent that observes data flows in real time during testing. It combines elements of both SAST and DAST.

IAST:

Monitors actual request processing

Tracks how untrusted input flows through the application at runtime

Identifies vulnerabilities with real execution context

Because it validates issues during execution, IAST typically produces fewer false positives than static analysis alone. The agent can confirm whether a vulnerable code path is actually exercised.

The tradeoffs:

It requires a deployed instrumentation agent

It only detects vulnerabilities in code paths that are triggered during testing

If a path is never exercised, it remains untested.
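The idea of an agent observing tainted data at runtime can be modeled in a few lines. This is a toy, not a real IAST agent: the `Tainted` string type, the `instrument_sink` decorator, and the handler functions are all hypothetical.

```python
class Tainted(str):
    """Marks a string as originating from an untrusted request."""

    def __add__(self, other):
        return Tainted(str.__add__(self, other))

    def __radd__(self, other):
        # Keeps the marker when a plain string is on the left-hand side.
        return Tainted(str.__add__(other, self))

observed = []  # what the "agent" saw reach instrumented sinks

def instrument_sink(func):
    # Wrap a sensitive function so tainted arguments are recorded at call time.
    def wrapper(*args, **kwargs):
        for arg in args:
            if isinstance(arg, Tainted):
                observed.append((func.__name__, str(arg)))
        return func(*args, **kwargs)
    return wrapper

@instrument_sink
def run_query(sql):
    return f"executed: {sql}"

def handle_search(user_value):
    name = Tainted(user_value)  # request data is marked at the entry point
    return run_query("SELECT * FROM users WHERE name = '" + name + "'")

def handle_export(user_value):
    # Equally vulnerable, but never called below, so the agent never sees it.
    return run_query("SELECT * FROM exports WHERE owner = '" + Tainted(user_value) + "'")

handle_search("alice")
print(observed)
```

Only the path that a test actually exercised is recorded. `handle_export` has the same flaw but stays invisible until something calls it.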

Software Composition Analysis (SCA)

SCA focuses on third-party risk rather than first-party code.

It identifies vulnerabilities in:

Open-source libraries

Frameworks

External packages and dependencies

While SAST scans the code your team writes, SCA checks your dependencies against vulnerability databases such as NVD and OSV to detect:

Known CVEs

License violations

Outdated or unsupported packages

SCA is critical because modern applications are typically 70–90% open-source code by volume. Without SCA, teams may secure their own code while remaining exposed through inherited supply-chain vulnerabilities.
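At its core, the dependency check compares pinned versions against advisory data. The sketch below uses an invented package name, an invented advisory ID, and a hard-coded `ADVISORIES` table. A real tool would query a database such as NVD or OSV and handle full version-specifier syntax.

```python
# Hypothetical advisory data: package -> advisories with the first fixed version.
ADVISORIES = {
    "examplelib": [{"id": "EXAMPLE-2024-0001", "fixed_in": (2, 4, 0)}],
}

def parse_version(text):
    # Handles simple dotted numeric versions only (assumption).
    return tuple(int(part) for part in text.split("."))

def parse_requirements(text):
    # Reads "name==version" pins and ignores blanks and comments.
    deps = {}
    for line in text.splitlines():
        line = line.strip()
        if line and not line.startswith("#") and "==" in line:
            name, version = line.split("==", 1)
            deps[name.strip().lower()] = parse_version(version.strip())
    return deps

def audit(deps):
    # A dependency is affected if its version is below the fixed version.
    hits = []
    for name, version in deps.items():
        for advisory in ADVISORIES.get(name, []):
            if version < advisory["fixed_in"]:
                hits.append((name, advisory["id"]))
    return hits

REQUIREMENTS = """
# pinned dependencies (hypothetical)
examplelib==2.3.1
otherlib==1.0.0
"""

print(audit(parse_requirements(REQUIREMENTS)))
```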

Together, SAST, DAST, IAST, and SCA address different layers of risk. Mature security programs use them in combination rather than isolation.

| Dimension | SAST | DAST | IAST | SCA |
| --- | --- | --- | --- | --- |
| What it tests | First-party source code | Running application (black-box) | Running application (instrumented) | Third-party dependencies |
| When it runs | During development (PR / build) | After deployment (staging / production) | During testing (with agent) | During development (dependency resolution) |
| What it finds | Injection, XSS, insecure crypto, secrets, buffer overflows | Auth bypasses, misconfigs, exposed endpoints | Runtime injection, confirmed data flow issues | Known CVEs, license violations, outdated packages |
| Strengths | Earliest detection; full code coverage | Finds deployment-specific issues; no source needed | Low false positives; real runtime context | Addresses supply-chain risk; automated patching |
| Limitations | Cannot find runtime/config issues | Cannot identify causing code; late-stage | Requires running app; limited to tested paths | Only third-party code, not your code |
| Best for | Catching vulnerabilities before merge | Validating deployed apps are secure | Confirming findings in QA/staging | Managing dependency risk |

CodeAnt AI consolidates SAST, SCA, secrets detection, and Infrastructure-as-Code scanning into a single PR-native platform.

Instead of running multiple tools, each with its own dashboard, alert stream, and pricing model, teams get a unified security view directly inside the pull request.

In one review workflow, developers can see:

First-party code vulnerabilities

Open-source dependency risks

Hardcoded secrets and credentials

Infrastructure misconfigurations

This reduces tool sprawl, eliminates context switching, and simplifies security adoption across engineering teams.

The result is a single platform that scales from small startups to enterprises with hundreds of developers, without requiring separate scanning systems for each layer of application risk.

When to Use SAST vs DAST vs Both

Decision Matrix by Use Case

The right testing approach depends on your team’s stage, risk profile, and development workflow. The matrix below maps common scenarios to recommended approaches.

| Your Situation | Recommended Approach | Why |
| --- | --- | --- |
| Early-stage startup, small team, shipping fast | SAST first | Catches vulnerabilities before merge with minimal setup; no running environment needed for scanning |
| Growing team adopting DevSecOps for the first time | SAST + SCA | Covers both first-party code and dependency risk, the two largest attack surfaces for most applications |
| Mid-market company with staging environments | SAST + SCA + DAST | SAST catches code issues early; DAST validates deployment configuration and catches runtime issues |
| Enterprise with compliance requirements (SOC 2, PCI DSS, ISO 27001) | SAST + SCA + DAST + IAST | Full coverage across all testing methods; IAST confirms SAST findings in QA; compliance frameworks often require multiple testing approaches |
| Team using AI coding assistants (Copilot, Cursor) | SAST at every PR, mandatory | AI-generated code introduces vulnerabilities at the same rate as human-written code; SAST catches them before merge regardless of origin |
| Evaluating third-party vendor applications | DAST only | No source code access available; DAST probes the application externally |
| Replacing SonarQube or legacy SAST | AI-native SAST (CodeAnt AI) | Modern AI-native detection with Steps of Reproduction, lower false positives, and a PR-native workflow eliminates the noise that drove teams away from legacy SAST |
| Consolidating multiple security tools | Unified platform (CodeAnt AI) | Replaces 3-4 separate tools (code review, quality, SAST, SCA) with one PR-native platform, reducing cost, complexity, and context-switching |

The pattern is clear: SAST is the foundation. It runs early, catches the most common vulnerability classes, and integrates directly into the developer workflow.

DAST, IAST, and SCA add coverage for runtime, behavioral, and supply-chain risks, but they complement SAST rather than replace it. If you are evaluating SAST tools specifically, review our pricing comparison to see which options fit your budget.

Quick Decision Guide: What Should You Implement First?

If you need a fast answer instead of reading the full comparison, here is the practical guidance.

If you are an early-stage team shipping quickly, implement SAST first. It requires no running environment and catches vulnerabilities at the pull request level when fixes take minutes.

If you are scaling and adopting DevSecOps formally, implement SAST and SCA together. Most real-world risk lives in first-party code and open-source dependencies.

If you already have staging environments and compliance pressure, add DAST. It validates runtime configuration and deployment behavior.

If you operate in a regulated enterprise environment, combine SAST, SCA, DAST, and IAST for layered coverage.

If you use AI coding assistants such as Copilot or Cursor, enforce SAST at every pull request. AI-generated code introduces vulnerabilities at the same rate as human-written code.

The pattern across all scenarios is consistent: start with strong SAST coverage, then layer additional testing methods based on maturity and risk profile.
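For teams that prefer rules to prose, the guidance above can be encoded as a small function. The parameter names are hypothetical simplifications of the scenarios in this guide, and the function is a sketch of the layering logic rather than a substitute for a risk assessment.

```python
def recommend(has_source=True, scaling_devsecops=False,
              has_staging=False, regulated=False):
    # Without source access (for example, a vendor application) only DAST applies.
    if not has_source:
        return ["DAST"]
    methods = ["SAST"]  # SAST is the starting point in every other scenario
    if scaling_devsecops or has_staging or regulated:
        methods.append("SCA")
    if has_staging or regulated:
        methods.append("DAST")
    if regulated:
        methods.append("IAST")
    return methods

print(recommend())                        # early-stage team
print(recommend(scaling_devsecops=True))  # formal DevSecOps adoption
print(recommend(has_staging=True))        # staging environments available
print(recommend(regulated=True))          # regulated enterprise
print(recommend(has_source=False))        # third-party vendor application
```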

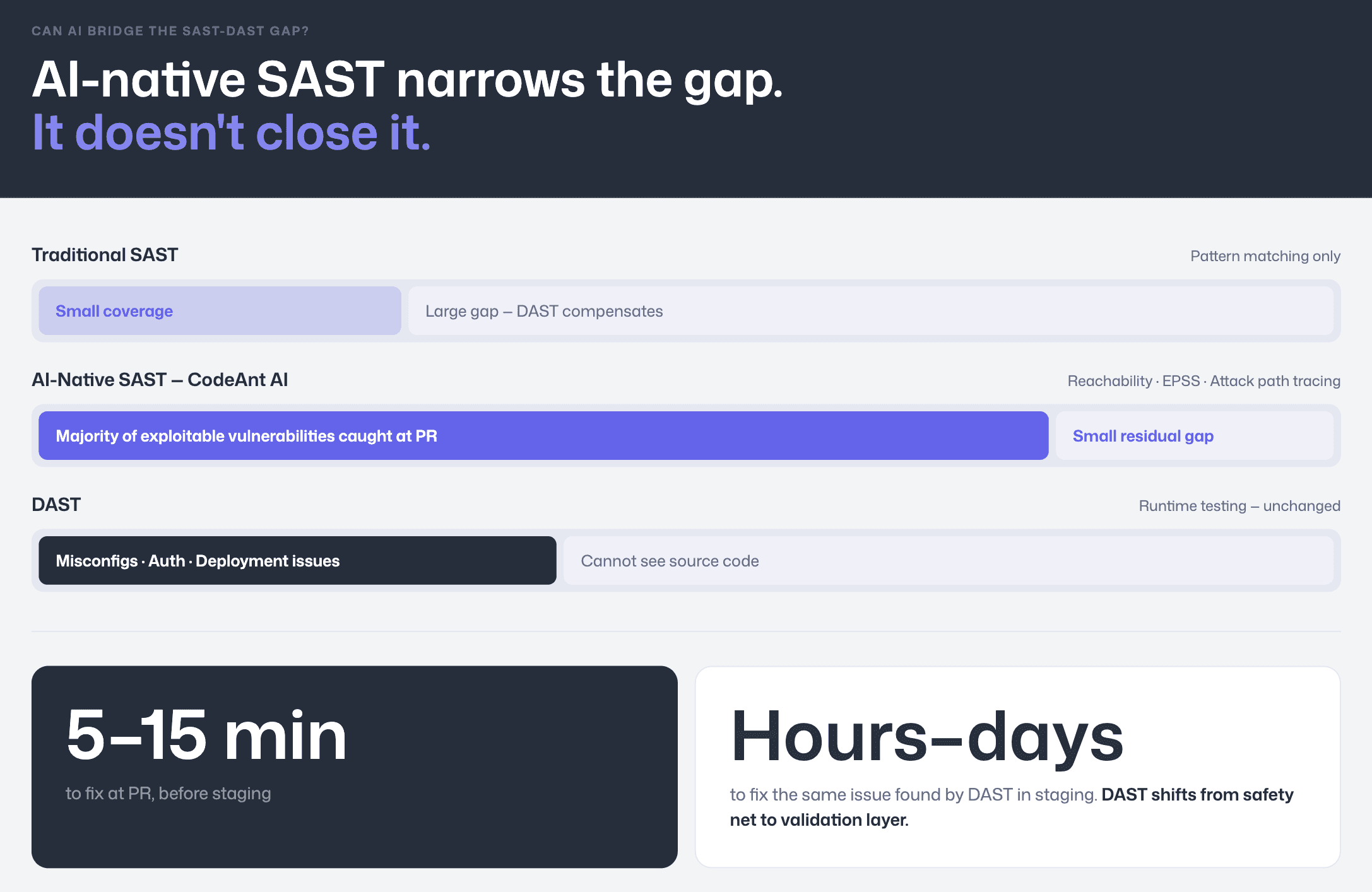

Can AI Bridge the SAST-DAST Gap?

Traditionally, SAST and DAST complemented each other.

SAST’s weakness: high false positives and limited runtime context.

DAST’s strength: testing real running applications for exploitable weaknesses.

AI-native SAST is narrowing that gap. Modern tools are incorporating capabilities that previously required runtime testing, reducing the category of issues that only DAST could detect.

Reachability Analysis and Runtime Context

One major advancement is reachability analysis.

Reachability determines whether flagged code is actually accessible through real execution paths. Traditional rule-based SAST flags every pattern match, even if the vulnerable code can never be triggered in practice.

AI-native tools filter out these theoretical risks.
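Reachability filtering can be approximated as a graph search: start from the application's entry points, follow calls, and keep only findings in functions that were visited. The call graph, function names, and findings below are hypothetical. A production tool would build the graph from the code and would have to deal with dynamic dispatch and reflection.

```python
from collections import deque

# Hypothetical call graph: caller -> list of callees.
CALL_GRAPH = {
    "handle_login": ["validate", "query_user"],
    "query_user": ["build_sql"],
    "legacy_export": ["build_legacy_sql"],  # no entry point leads here
}
ENTRY_POINTS = ["handle_login"]

def reachable_functions(graph, entry_points):
    # Breadth-first search over the call graph from every entry point.
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        current = queue.popleft()
        for callee in graph.get(current, []):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen

# Raw pattern matches before filtering (illustrative).
findings = [
    {"rule": "sql-injection", "function": "build_sql"},
    {"rule": "sql-injection", "function": "build_legacy_sql"},
]

reachable = reachable_functions(CALL_GRAPH, ENTRY_POINTS)
actionable = [f for f in findings if f["function"] in reachable]
print(actionable)
```

The finding in `build_legacy_sql` is dropped because no entry point leads to it; the one in `build_sql` is kept.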

CodeAnt AI extends this further with:

Reachability filtering to reduce non-exploitable findings

Full attack path tracing from entry point to vulnerable sink

EPSS scoring, estimating the probability of exploitation in the next 30 days

This provides runtime-like context during the pull request, before code is merged.

Developers see:

Where untrusted input enters

How it flows through the code

Why it is exploitable

How likely it is to be attacked

How to fix it immediately

This level of contextual evidence previously required IAST or DAST.

What AI-Native SAST Still Cannot Replace

AI-native SAST does not eliminate DAST entirely.

Runtime-only issues still require dynamic testing:

Server misconfigurations

Deployment-specific security headers

Authentication logic tied to session state

Environment-specific behavior

However, AI-native SAST dramatically reduces what escapes into staging or production. Most exploitable vulnerabilities can now be caught at the earliest and cheapest stage: the pull request.

How PR-Native SAST Reduces Late-Stage DAST Dependency

The economic argument is simple:

Fixing a vulnerability in a pull request takes minutes.

Fixing the same issue after a DAST scan in staging can take hours or days.

Late discovery requires:

Tracing symptoms back to source code

Context-switching the original developer

Rebuilding and redeploying for validation

PR-native SAST eliminates that delay.

Tools like CodeAnt AI deliver:

Inline PR findings

Full attack path and exploit explanation

EPSS prioritization

One-click AI-generated fixes

Developers fix issues immediately, inside their existing workflow.

The practical result: DAST scans begin surfacing fewer findings. DAST shifts from being the primary safety net to becoming a validation layer, confirming that early detection is working.

The End-to-End Security Model

This shift is strongest when SAST integrates across the entire workflow:

CLI pre-commit hooks

IDE integrations (VS Code, JetBrains, Cursor, Windsurf)

PR-native AI code review with Steps of Reproduction

CI/CD policy gates

SecOps dashboards with Jira and Azure Boards integration

When detection, prioritization, and remediation all happen early, DAST becomes confirmation, not rescue.

That is how AI-native SAST closes the historical gap between static and dynamic testing.

Recommended Modern Security Architecture

For most teams in 2026, the practical model looks like this:

Use AI-native SAST at every pull request to catch exploitable vulnerabilities early.

Use SCA during dependency resolution to manage open-source risk.

Enforce CI/CD policy gates for critical findings.

Run DAST in staging as validation for runtime misconfigurations.

Use IAST selectively in QA for high-risk systems.

This layered approach balances early detection, runtime validation, and supply-chain visibility without overwhelming engineering teams with redundant tools.
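A CI/CD policy gate from the list above can be as small as a function that turns scanner findings into an exit code. The finding format, the severity names, and the `fail_at` threshold are assumptions for this sketch; real scanners each emit their own report schema.

```python
SEVERITY_ORDER = ["low", "medium", "high", "critical"]

def gate(findings, fail_at="high"):
    # Returns (exit_code, blocking_findings); a non-zero code fails the pipeline.
    threshold = SEVERITY_ORDER.index(fail_at)
    blocking = [
        f for f in findings
        if SEVERITY_ORDER.index(f["severity"]) >= threshold
    ]
    return (1 if blocking else 0), blocking

# Hypothetical findings as they might be loaded from a scanner report.
report = [
    {"id": "F-1", "severity": "medium"},
    {"id": "F-2", "severity": "critical"},
]

exit_code, blocking = gate(report)
print("exit code:", exit_code, "blocking:", [f["id"] for f in blocking])
# In a pipeline step, the script would end with sys.exit(exit_code).
```

Restricting the gate to high and critical findings keeps the pipeline strict about serious issues without blocking merges on every low-severity note.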

Conclusion

SAST and DAST are complementary, not competing. SAST catches code-level vulnerabilities early when fixes are cheap. DAST catches deployment-specific issues that static analysis cannot reach. The strongest security programs use both.

But the balance is shifting. AI-native SAST tools like CodeAnt AI now deliver the precision that previously required runtime testing, through reachability analysis, EPSS exploit probability scoring, full attack path tracing, and Steps of Reproduction that give developers the evidence they need to trust and act on findings immediately. The result is fewer vulnerabilities reaching production, fewer DAST findings in staging, and faster remediation across the board.

Start scanning: Try CodeAnt AI’s static analysis free for 14 days

FAQs

What is the primary difference between SAST and DAST?

Should startups use SAST or DAST first?

Can AI-native SAST replace DAST completely?

Why do traditional SAST tools generate high false positives?

How should enterprises combine SAST, DAST, IAST, and SCA?