Blogs

AI Pentesting

How AI Penetration Testing Works: From Continuous Attack Surface Mapping to Proven Data Leaks

A technical walkthrough of an autonomous AI pentest pipeline that performs recon, source review, exploitation, deep-dive extraction, reporting, and self-evaluation in 30 to 90 minutes.

AI Pentesting

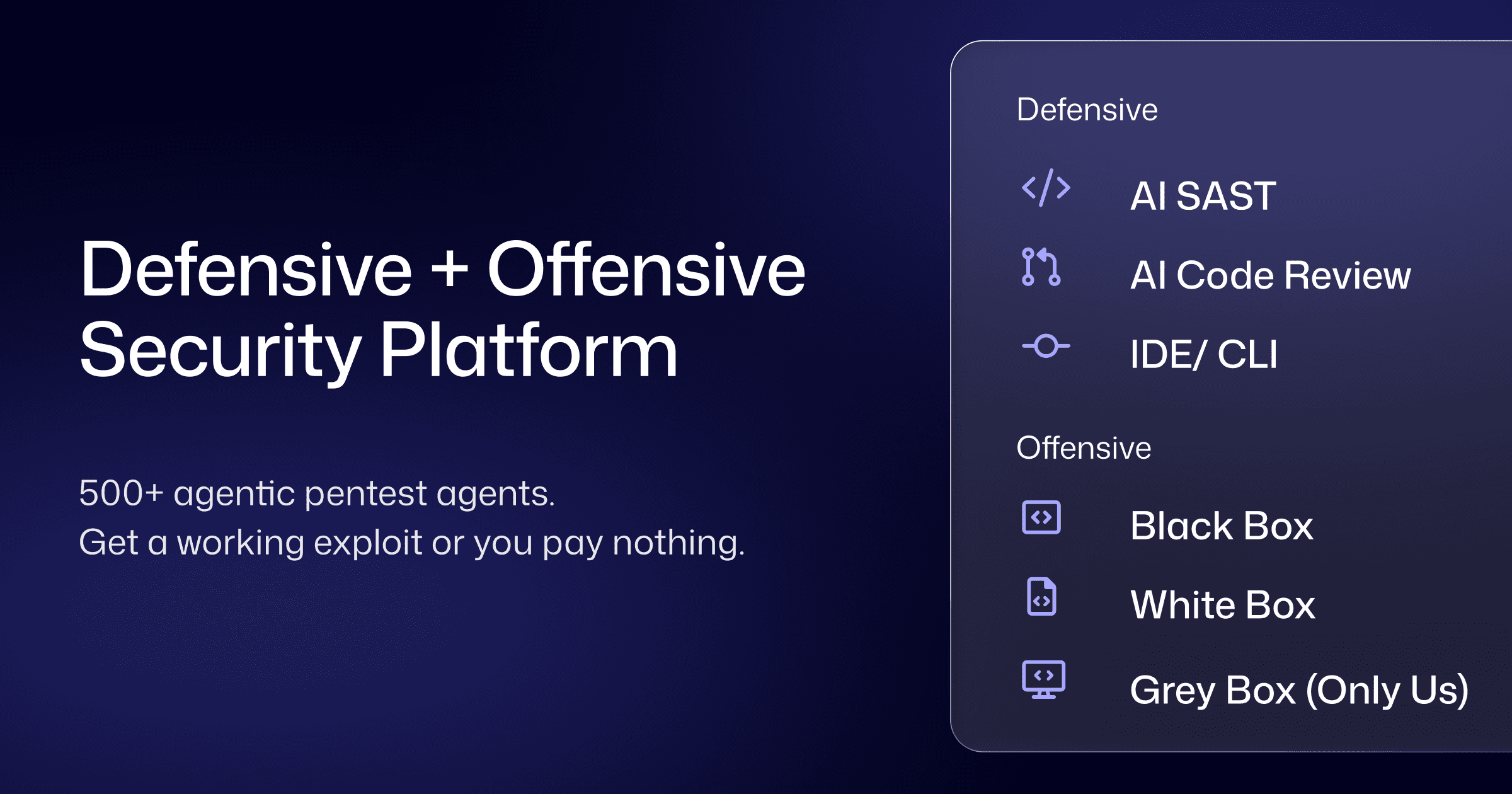

Defensive and Offensive Security Platform | AI Pentesting with Code Intelligence

Most penetration tests fail because they don't know your code. CodeAnt AI combines AI SAST, black box, white box, and gray box penetration testing on one intelligence layer. Every line reviewed. Every endpoint tested.

AI Code Review

Mini Shai-Hulud Strikes Again

631 malicious npm versions. 22 minutes. 16M weekly downloads at risk. Here's how TeamPCP's Mini Shai-Hulud worm compromised @antv, echarts-for-react, and timeago.js, and exactly what to do if you're affected.

AI Pentesting

AI Penetration Testing For Cloud Security

A complete enterprise guide to AI penetration testing for cloud security, covering AWS, Azure, GCP, IAM escalation, exposed services, CI/CD risks, compliance evidence, and retesting.

AI Pentesting

10 AI Penetration Testing Tools Compared

Not all AI penetration testing tools are the same. Compare 10 platforms across automated penetration testing, exploit validation, and real-world security coverage.

AI Pentesting

6 Top AI Pentesting Tools to Try in 2026

A deep technical comparison of AI pentesting tools based on methodology, exploit detection, and real-world coverage across modern SaaS systems.

AI Pentesting

AWS Penetration Testing: Complete Guide to IAM, S3, Lambda, and Cloud Attack Paths

Learn what AWS penetration testing actually covers, from IAM privilege escalation and SSRF-to-IMDS to S3, Lambda, and CloudTrail testing, with methodology, tools, and compliance guidance.

AI Code Review

Why Spring Security Misconfigurations Cause Critical Auth Bypasses and How To Test Them

Learn how Spring Security misconfigurations create authentication bypasses, how attackers exploit them, and how automated penetration testing plus code review finds what scanners miss.

AI Pentesting

GraphQL Penetration Testing With White Box Analysis

See how GraphQL penetration testing combined with white box analysis uncovers resolver-level authorization flaws, attack chains, and vulnerabilities external testing alone often misses.

AI Pentesting

Why Annual Pentests Fail: The Rise of PTaaS Explained

Annual penetration testing is outdated. Learn how PTaaS closes the 180-day security gap with continuous testing and real-time findings.

AI Pentesting

Defensive vs Offensive Security: What’s the Difference and Why It Matters

SaaS teams often run security in silos. Learn why unifying defensive and offensive security is key to finding real vulnerabilities.

AI Pentesting

3 Types of AI Pentesting: Black Box, White Box, and Gray Box

Not all penetration tests are the same. Learn how black box, white box, and gray box testing differ, and which one your application actually needs to stay secure.