CodeAnt AI and SonarQube take fundamentally different approaches to SAST (Static Application Security Testing).

SonarQube began as a code quality platform focused on code smells, duplication, and technical debt. Over time, it added rule-based SAST security scanning on top of its quality engine.

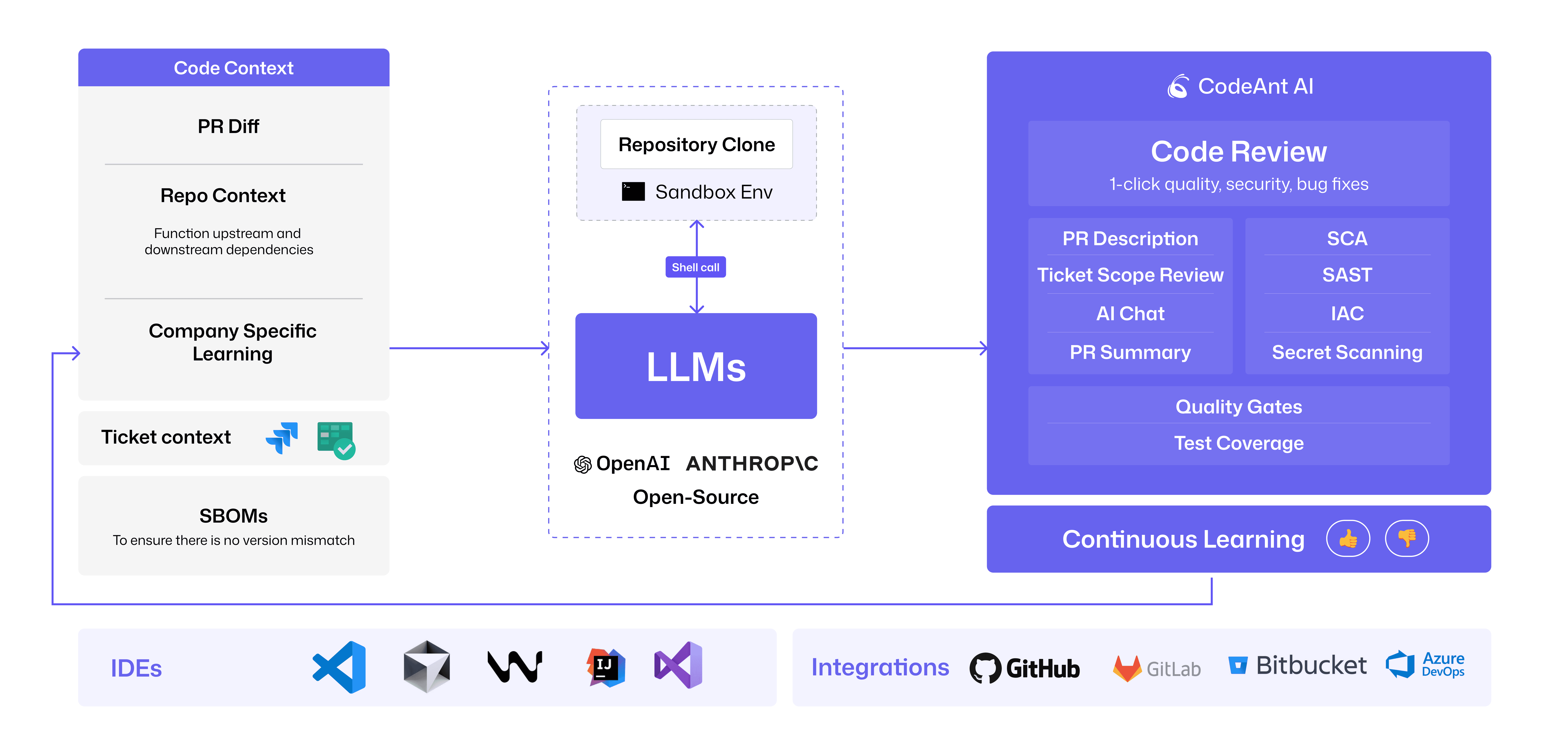

CodeAnt AI was built as an AI-native SAST platform from day one. It combines AI code review, semantic vulnerability detection, code quality analysis, SCA, secrets scanning, and IaC security into a single workflow that spans the full development lifecycle, from pre-commit hooks to pull requests, CI/CD pipelines, and a SecOps dashboard.

This comparison evaluates CodeAnt AI vs SonarQube SAST across six critical dimensions:

Detection accuracy

AI capabilities

Developer experience

Integrations

Pricing model

Enterprise readiness

The goal is simple: clearly explain how rule-based SAST differs from AI-native SAST, where SonarQube excels, where CodeAnt AI goes further, and which tool is better suited for modern application security in 2026.

CodeAnt AI vs SonarQube: Quick Summary

Comparison verified against SonarQube documentation and CodeAnt AI documentation as of February 2026. Features change, so verify with both vendors before purchasing.

Where SonarQube Excels in SAST and Code Quality

SonarQube is one of the most widely adopted code analysis platforms globally, used by more than 7 million developers. Any serious SAST comparison must first acknowledge where SonarQube is strongest.

1. Established Ecosystem and Long Market Presence

Founded in 2007, SonarQube benefits from nearly two decades of ecosystem maturity. That longevity translates into:

Support for 30+ programming languages (more in paid editions)

A mature plugin ecosystem

Deep CI/CD integration across:

Jenkins

GitHub Actions

GitLab CI

CircleCI

For teams using uncommon build systems or legacy enterprise languages such as COBOL, PL/I, or ABAP (Enterprise and Data Center editions), SonarQube is one of the few SAST tools that offers coverage.

The Community Build is open-source and free. This lowers the barrier to entry for teams that need basic code quality and rule-based SAST scanning.

Because of its open-source roots, SonarQube also benefits from:

Extensive documentation

Large community support

Thousands of troubleshooting threads on Stack Overflow

For onboarding and long-term maintenance, this ecosystem depth is a practical advantage.

2. Deep Code Quality Analysis (Core Strength)

Code quality is SonarQube’s original foundation. Even in 2026, this remains its most mature capability.

SonarQube tracks:

Code smells (maintainability issues)

Code duplication

Cyclomatic complexity

Technical debt across the full codebase

The Quality Gate mechanism is particularly strong. It allows teams to block merges when predefined quality thresholds are not met. This pattern has become a standard across modern SAST and code quality tools.
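As a rough illustration of the Quality Gate pattern, the sketch below compares scan metrics against thresholds and reports which conditions would block a merge. The metric names, thresholds, and the `Condition` and `evaluate_gate` helpers are hypothetical, chosen for illustration; this is not SonarQube's configuration format or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    """One gate condition: the metric must not exceed `max_allowed`."""
    metric: str
    max_allowed: float


def evaluate_gate(metrics, conditions):
    """Return a list of failed conditions; an empty list means the gate passes."""
    failures = []
    for cond in conditions:
        value = metrics.get(cond.metric)
        if value is None:
            # A missing metric fails the gate so an incomplete scan cannot pass silently.
            failures.append(f"{cond.metric}: missing from scan report")
        elif value > cond.max_allowed:
            failures.append(f"{cond.metric}: {value} exceeds {cond.max_allowed}")
    return failures


# Hypothetical thresholds and scan results.
GATE = [
    Condition("duplicated_lines_pct", 3.0),
    Condition("new_critical_issues", 0),
    Condition("max_cyclomatic_complexity", 15),
]
scan = {
    "duplicated_lines_pct": 4.2,
    "new_critical_issues": 0,
    "max_cyclomatic_complexity": 12,
}

failed = evaluate_gate(scan, GATE)
print("BLOCK MERGE" if failed else "PASS", failed)
```

In a pipeline, a non-empty failure list would typically translate into a failing status check on the merge request.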

For organizations primarily focused on maintainability rather than application security depth, SonarQube’s quality metrics are more granular than most security-first SAST platforms.

One standout feature is technical debt estimation, expressed in time-to-fix. This gives engineering leaders a concrete metric for communicating code health to executives and stakeholders.

3. Mature On-Premises Deployment Model

SonarQube offers full self-hosted deployment across all editions.

Deployment options include:

Community Build: runs on any Linux server with a database

Developer Edition: adds branch analysis and additional language coverage

Enterprise Edition: advanced governance features

Data Center Edition: high availability for large organizations

For regulated industries that require strict data residency or air-gapped infrastructure, SonarQube’s on-prem model is well-established. It has years of production deployments in finance, government, and enterprise environments.

If long-term track record in self-hosted SAST deployment is a top priority, SonarQube has a proven history.

Summary of Where SonarQube Is Strongest

SonarQube excels in:

Mature code quality analysis

Rule-based SAST scanning

Enterprise-grade on-prem deployment

Legacy language support

Large ecosystem and community support

Its strengths are most pronounced when code maintainability and on-prem control are higher priorities than AI-native detection or workflow-native security integration.

Where CodeAnt AI Goes Further in SAST

CodeAnt AI addresses the gaps teams encounter as they scale beyond traditional rule-based SAST, particularly in detection depth, developer workflow, and security consolidation.

See also: Top 13 Static Application Security Testing (SAST) Tools

1. AI-Native Detection vs. Rule-Based Scanning

SonarQube relies on a rule-based detection engine. It matches code patterns against a database of predefined vulnerability signatures.

This works well for known vulnerability classes such as:

Cross-site scripting (XSS)

Hardcoded credentials

However, rule-based SAST struggles with:

Novel vulnerability patterns

Multi-file taint flows

Business logic vulnerabilities

AI-generated code patterns not yet cataloged

CodeAnt AI uses an AI-native detection engine. AI is the primary analysis mechanism, not a post-processing layer.

Instead of matching patterns, the scanner reasons about:

Code semantics

Data flow across functions

Reachability

User-controlled inputs

Exploitable sinks

This enables detection of vulnerabilities that require understanding intent, not just syntax.

The distinction matters most in two scenarios:

AI-generated code, where tools like Copilot produce new patterns not covered by rule databases

Complex application logic, where exploitation depends on business context

In modern development environments, these scenarios are increasingly common.

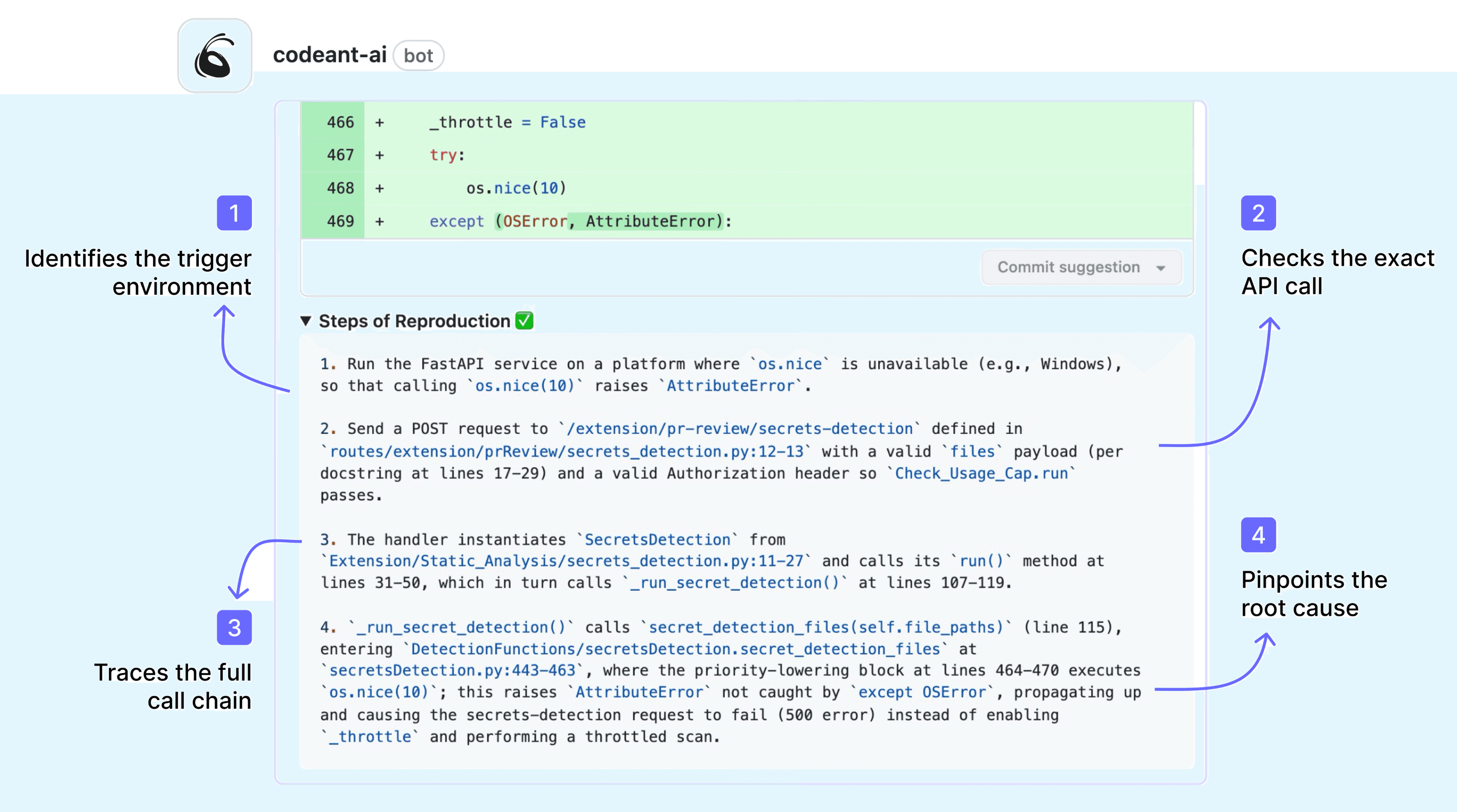

2. Steps of Reproduction for Every Finding

When SonarQube flags a vulnerability, it provides:

CWE classification

Severity rating

File and line location

The developer must then manually verify exploitability by tracing data flows and checking for sanitization.

CodeAnt AI generates Steps of Reproduction for every finding.

Each result includes:

Entry point

Taint flow path

Intermediate propagation steps

Vulnerable sink

Concrete exploitation scenario
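To make the shape of such a finding concrete, here is a minimal sketch of how the elements listed above could be modeled and rendered as numbered steps. The `ReproductionSteps` class, its field names, and the example values are assumptions for illustration, not CodeAnt AI's actual output schema.

```python
from dataclasses import dataclass


@dataclass
class ReproductionSteps:
    """Hypothetical container for an evidence-backed finding."""
    entry_point: str
    taint_path: list          # intermediate propagation steps, in order
    sink: str
    exploitation_scenario: str

    def render(self) -> str:
        """Render the finding as a numbered walkthrough from entry point to exploit."""
        lines = [f"1. Entry point: {self.entry_point}"]
        for i, hop in enumerate(self.taint_path, start=2):
            lines.append(f"{i}. Propagates through: {hop}")
        n = len(self.taint_path) + 2
        lines.append(f"{n}. Vulnerable sink: {self.sink}")
        lines.append(f"{n + 1}. Exploit scenario: {self.exploitation_scenario}")
        return "\n".join(lines)


# Illustrative values only.
steps = ReproductionSteps(
    entry_point="GET /users?id=",
    taint_path=["handlers.get_user(user_id)", "queries.build_query(uid)"],
    sink="cursor.execute(query)",
    exploitation_scenario="id=1 OR 1=1 returns every row",
)
print(steps.render())
```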

This shifts the developer’s task from:

“Is this real?”

to:

“Review the evidence and apply the fix.”

Evidence-backed findings are:

Resolved faster

Suppressed less often

Less likely to cause alert fatigue

This directly improves SAST adoption within engineering teams.

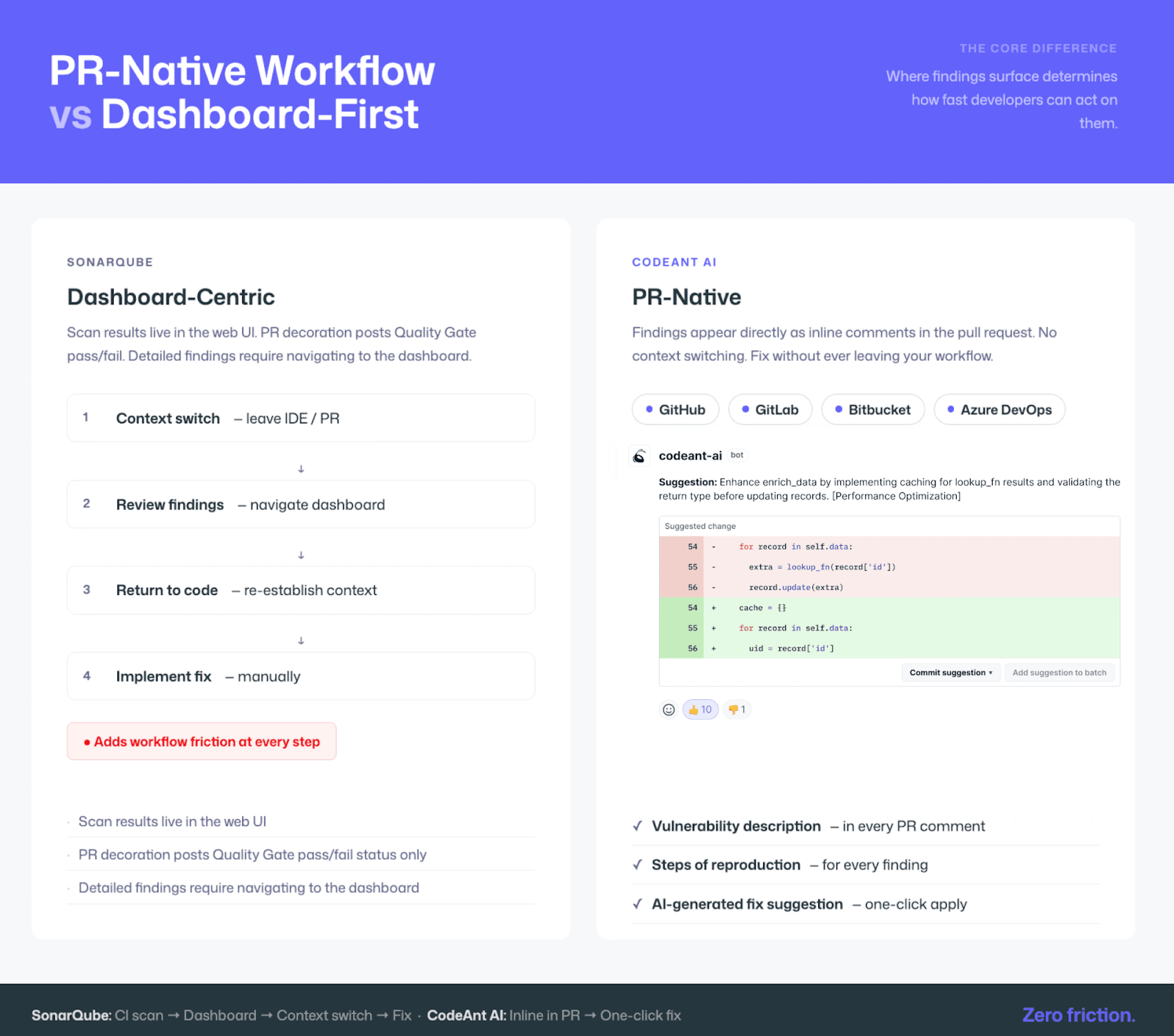

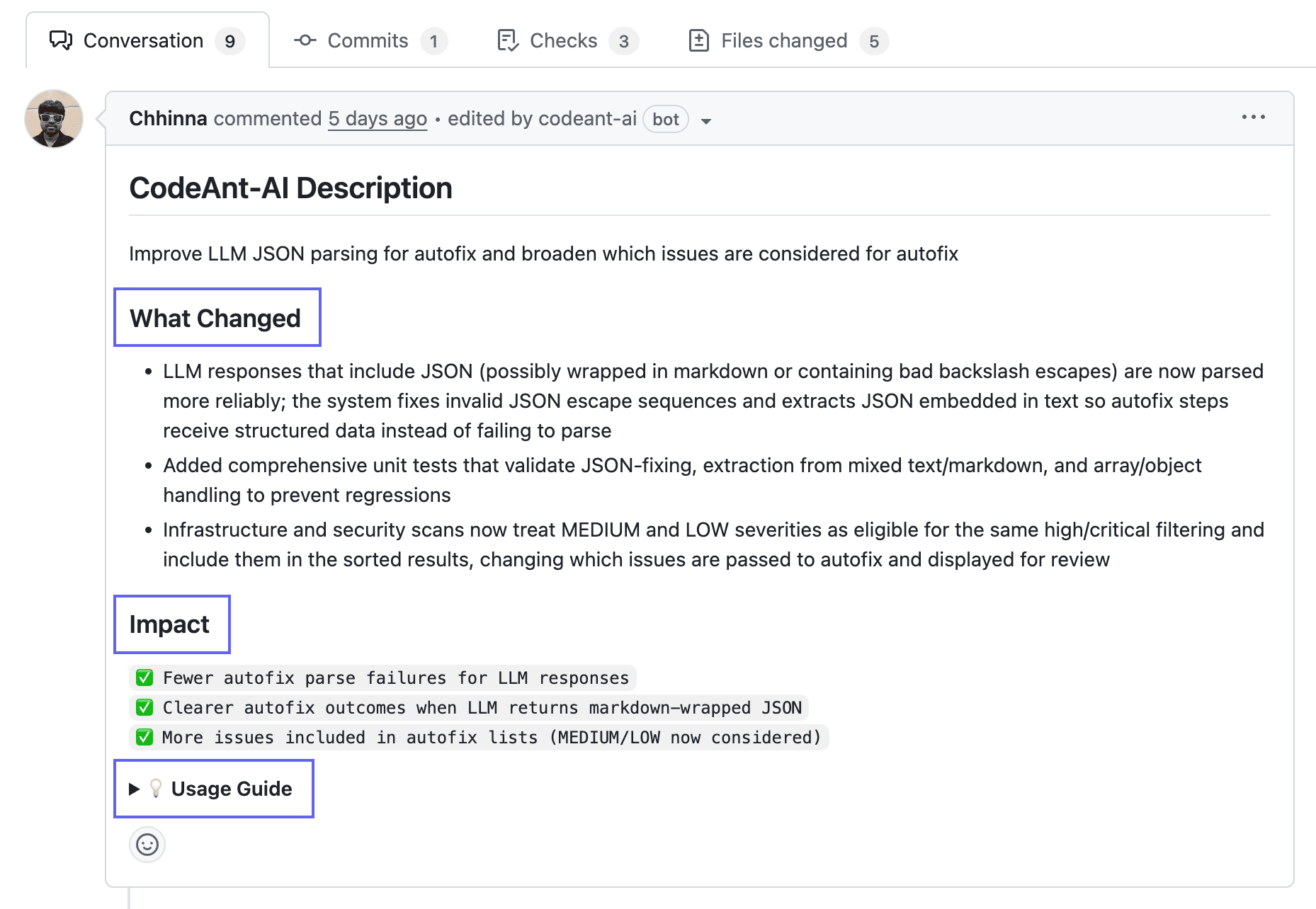

3. PR-Native Workflow Instead of Dashboard-First

SonarQube is primarily dashboard-centric.

Scan results live in the web UI

PR decoration usually posts Quality Gate pass/fail status

Detailed findings require navigating to the dashboard

This adds workflow friction:

Context switch → Review findings → Return to code → Implement fix

CodeAnt AI is PR-native.

Findings appear directly as inline comments in the pull request.

Each PR comment includes:

Vulnerability description

Steps of Reproduction

AI-generated fix suggestion

One-click commit option

The developer never leaves the pull request. This is an architectural difference, not a cosmetic one. PR-native SAST eliminates workflow context switching.

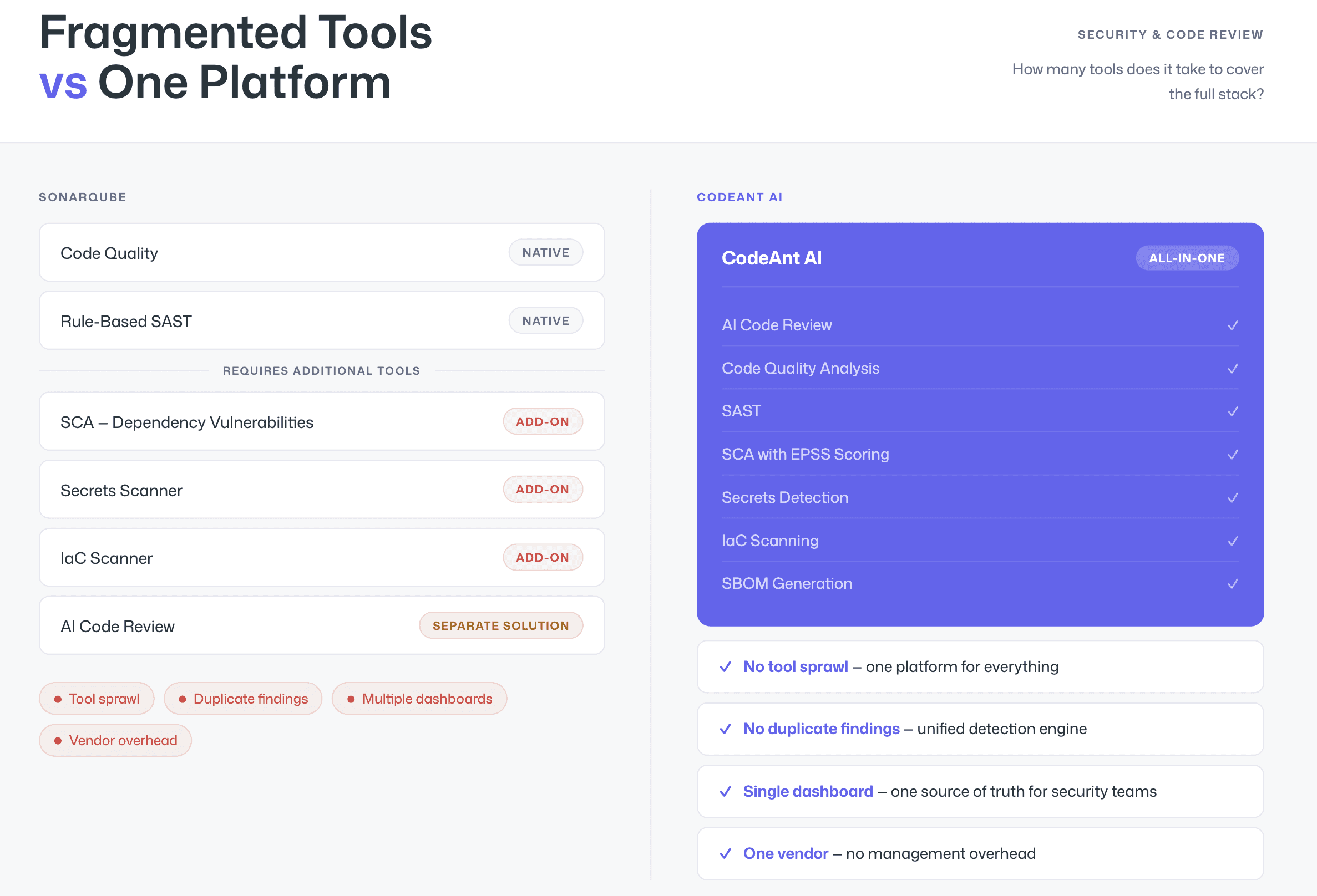

4. Unified Platform Across Security and Code Review

SonarQube covers:

Code quality

Rule-based SAST

For full application security coverage, teams often add:

SCA tool (for dependency vulnerabilities)

Secrets scanner

IaC scanner

Separate AI code review solution

CodeAnt AI consolidates:

AI code review

Code quality analysis

SAST

SCA with EPSS scoring

Secrets detection

IaC scanning

SBOM generation

All within one platform.

This reduces:

Tool sprawl

Duplicate findings

Multiple dashboards

Vendor management overhead

Security teams gain a single source of truth.

5. Pricing at Scale

SonarQube Server editions are priced by lines of code (LOC). As codebases grow, teams may cross pricing tiers even without adding developers.

Example pricing (as of 2026):

Developer Edition (2M LOC): ~$10,000/year

Enterprise Edition (5M LOC): ~$35,700/year

Community Build: free but limited

LOC-based pricing can create unpredictable scaling costs.

CodeAnt AI uses per-user pricing:

$20 per user per month (Code Security)

No per-repository fees

No per-scan fees

No LOC tiers

Check the full pricing here.

Example:

50 developers → ~$12,000/year

Same scale in SonarQube Enterprise → ~$35,700/year before negotiation

CodeAnt AI pricing scales with headcount, not codebase growth. For organizations adding features rapidly, this can create more predictable budgeting.
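The arithmetic behind this comparison can be sketched as follows. The per-user price and the two LOC price points are the example figures quoted above. Treating those two price points as a single ladder is a simplification (they belong to different editions), and the helper names are hypothetical.

```python
PER_USER_MONTHLY_USD = 20  # Code Security price quoted above

# (max lines of code, approximate annual price in USD), from the examples above.
# Simplification: real LOC pricing has more tiers and depends on the edition.
LOC_PRICE_POINTS = [(2_000_000, 10_000), (5_000_000, 35_700)]


def per_user_annual(developers, monthly_price=PER_USER_MONTHLY_USD):
    """Annual cost when price depends only on headcount."""
    return developers * monthly_price * 12


def loc_annual(lines_of_code):
    """Annual cost at the first quoted price point that covers the codebase."""
    for max_loc, price in LOC_PRICE_POINTS:
        if lines_of_code <= max_loc:
            return price
    return None  # larger than any price point quoted in this article


# Same 50-developer team, codebase growing from 1.8M to 2.4M LOC.
for loc in (1_800_000, 2_400_000):
    print(loc, "LOC:", "per-user", per_user_annual(50), "| LOC-based", loc_annual(loc))
```

Under these assumptions the per-user figure stays at $12,000 while the LOC-based figure moves from about $10,000 to about $35,700 once the codebase passes 2M lines, with no change in headcount.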

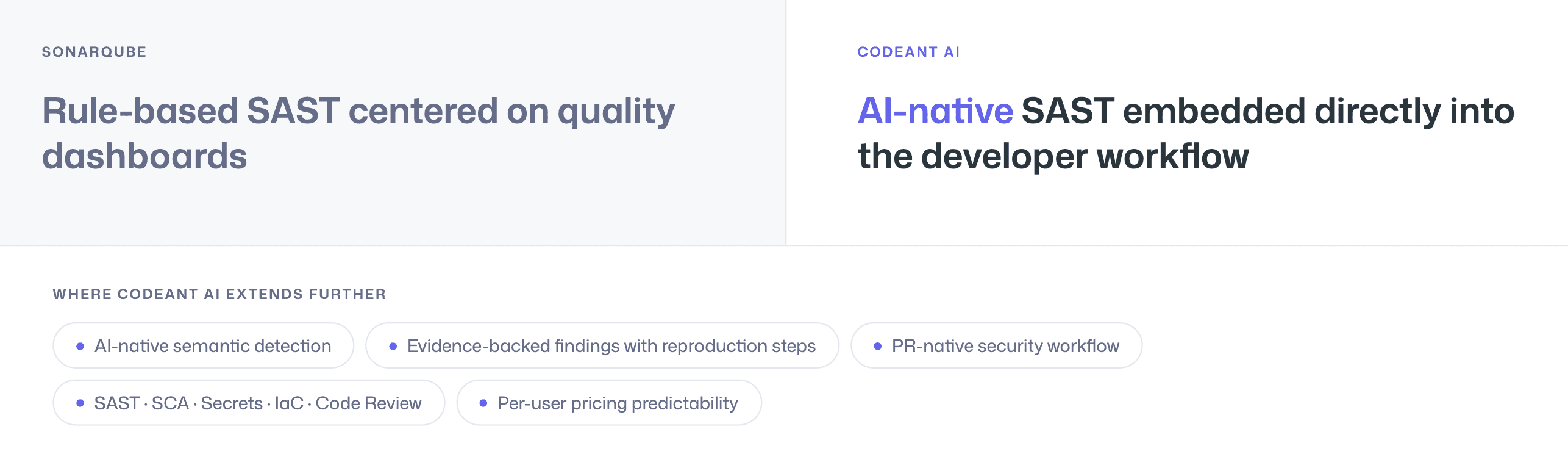

Summary of Where CodeAnt AI Extends SAST Capabilities

CodeAnt AI goes further in:

AI-native semantic detection

Evidence-backed findings with reproduction steps

PR-native security workflow

Tool consolidation across SAST, SCA, secrets, IaC, and code review

Per-user pricing predictability

The contrast is not just feature-based. It reflects two different architectural philosophies:

Rule-based SAST centered on quality dashboards versus AI-native SAST embedded directly into the developer workflow.

Feature-by-Feature Comparison

The table below applies the six-dimension evaluation framework from the SAST pillar comparison, one dimension at a time.

| Feature | SonarQube | CodeAnt AI |
| --- | --- | --- |
| Detection Accuracy | | |
| SAST (first-party code) | ✓ (OWASP Top 10, CWE coverage) | ✓ (AI-native with semantic analysis) |
| SCA (open-source dependencies) | Partial (basic dependency checks in Cloud) | ✓ (full SCA with EPSS scoring) |
| Secrets detection | ✓ (limited set of patterns) | ✓ (comprehensive) |
| IaC scanning | Partial (Terraform, CloudFormation in newer editions) | ✓ (AWS, GCP, Azure) |
| SBOM generation | ✗ | ✓ |
| Steps of Reproduction | ✗ | ✓ (every finding) |
| AI Capabilities | | |
| AI tier | Rule-Based | AI-Native |
| AI-powered code review | ✗ | ✓ (line-by-line PR review) |
| AI auto-fix | Limited (AI CodeFix in SonarCloud) | ✓ (one-click fixes in PR) |
| AI triage / false positive reduction | ✗ | ✓ (AI-native detection + reachability) |
| AI Code Assurance (AI-code detection) | ✓ (flags AI-generated code) | ✓ (scans AI-generated code at same depth) |
| Developer Experience | | |
| Primary interface | Dashboard (web UI) | PR-native (inline comments) |
| CLI scanning | ✗ (CI/CD scanner only) | ✓ |
| Pre-commit hooks (secret/credential/SAST blocking) | ✗ | ✓ (blocks before commit) |
| IDE integration | ✓ (SonarLint) | ✓ (VS Code, JetBrains, Visual Studio, Cursor, Windsurf) |
| AI prompt generation for IDE fixes | ✗ | ✓ (generates prompts for Claude Code/Cursor) |
| Inline PR comments | Partial (Quality Gate decoration) | ✓ (findings + Steps of Reproduction + fix suggestions) |
| PR summaries | ✗ | ✓ |
| One-click fix application | ✗ | ✓ (committable suggestions in PR) |
| Integrations | | |
| GitHub | ✓ | ✓ |
| GitLab | ✓ | ✓ |
| Bitbucket | ✓ | ✓ |
| Azure DevOps | ✓ | ✓ |
| CI/CD pipelines | ✓ (broad) | ✓ (GitHub Actions, Jenkins, GitLab CI, Bitbucket Pipelines, Azure DevOps Pipelines) |
| Pricing | | |
| Free tier | Community Build (free, open source); Cloud free to 50K LOC | 14-day free trial |
| Pricing model | Per LOC (Server); Per LOC (Cloud) | Per user |
| Starter price | $720/yr (Developer, 100K LOC); €30/mo (Cloud, 100K LOC) | $20/user/month (Code Security) |
| Small team (10 devs, ~500K LOC) | ~$2,500/yr (Developer Edition) | $2,400/yr |
| Mid-market (50 devs, ~2M LOC) | ~$40,000/yr (Enterprise Edition) | $24,000/yr (code quality + code security) |
| Enterprise (200 devs, ~10M LOC) | ~$200,000/yr (Enterprise Edition) | $96,000/yr (code quality + code security) |
| Enterprise Readiness | | |
| SOC 2 | ✓ | ✓ (Type II) |
| HIPAA | ✗ | ✓ |
| Quality gates | ✓ (mature, highly configurable) | ✓ |
| Language support | 30+ (Enterprise: COBOL, ABAP, PL/I) | 30+ languages, 85+ frameworks |
| SecOps dashboard | ✗ (quality dashboard only) | ✓ (vulnerability trends, fix rates, team risk, OWASP/CWE/CVE mapping) |
| Ticketing integration | ✗ | ✓ (Jira, Azure Boards; native) |
| Audit-ready reporting | Basic | ✓ (PDF/CSV exports for SOC 2, ISO 27001) |
| Attribution / risk distribution | ✗ | ✓ (repo-level and developer-level risk) |
| Zero data retention | ✗ | ✓ (across all deployment models) |
| Developer productivity metrics | ✗ | ✓ (DORA metrics, PR cycle time, SLA tracking) |

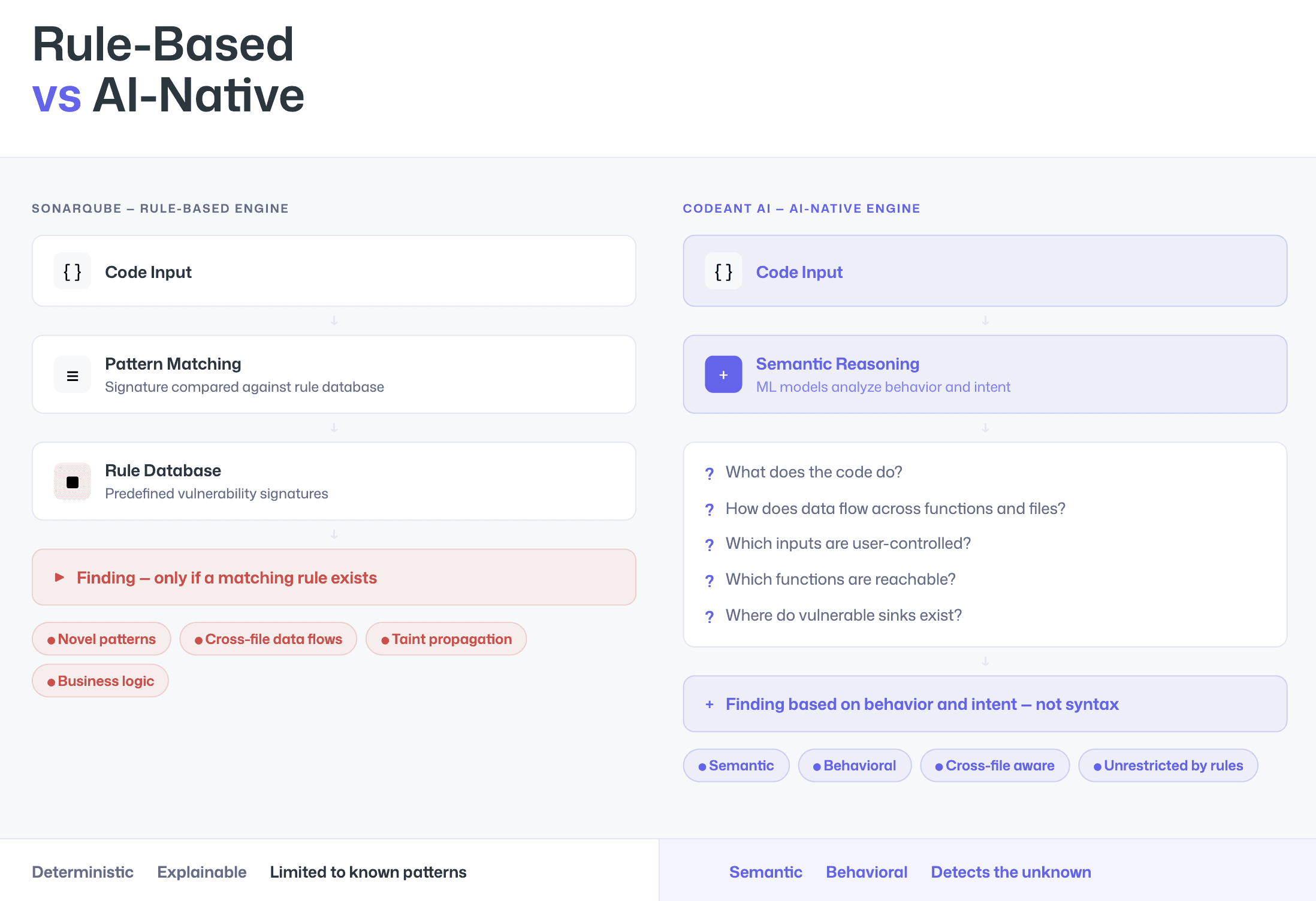

Detection Approach: Rule-Based vs. AI-Native

The most consequential difference between SonarQube and CodeAnt AI is how each tool analyzes code. At its core, this is a difference between rule-based SAST and AI-native SAST.

How Rule-Based SAST Works (SonarQube)

SonarQube uses a rule-based detection engine.

Each vulnerability type has a predefined pattern, a signature stored in a rule database. When code matches that pattern, the rule fires and generates a finding.

This approach is:

Deterministic

Explainable

Customizable

Easy to audit

You can inspect the rule, understand why it triggered, and modify it if necessary. However, rule-based SAST can only detect what its rules define.

It struggles with:

Novel vulnerability patterns

Complex cross-file data flows

Multi-step taint propagation

Business-logic vulnerabilities

Patterns not yet documented in rule libraries

If no rule exists, no finding is generated.
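A deliberately simplified sketch of signature matching makes this limitation visible. The single regular expression below is a toy stand-in for a rule; production rule engines, SonarQube's included, work on syntax trees and data flow rather than one-line regexes, so this illustrates the general idea only. The rule, the sample code lines, and `rule_fires` are all hypothetical.

```python
import re

# Toy signature: a string literal concatenated with request data inside execute(...).
RULE_SQL_CONCAT = re.compile(r"execute\(\s*[\"'].*[\"']\s*\+\s*request\.")


def rule_fires(line):
    """Return True when the line matches the predefined signature."""
    return bool(RULE_SQL_CONCAT.search(line))


# The vulnerable pattern written exactly as the signature expects.
direct = 'cursor.execute("SELECT * FROM users WHERE id = " + request.args["id"])'

# The same flaw spread over three steps: no single line matches the signature.
indirect = [
    'user_id = request.args["id"]',
    "query = build_query(user_id)",
    "cursor.execute(query)",
]

print("direct:", rule_fires(direct))
print("indirect:", any(rule_fires(line) for line in indirect))
```

The direct form triggers the rule, while the indirect form passes untouched even though the same user input reaches the same database call.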

How AI-Native SAST Works (CodeAnt AI)

CodeAnt AI uses an AI-native detection engine. Machine learning models are the primary analysis mechanism, not a layer applied on top of rules.

Instead of matching signatures, the scanner reasons about:

What the code does

How data flows across functions and files

Which inputs are user-controlled

Which functions are reachable

Where vulnerable sinks exist

This is semantic analysis rather than pattern matching.

The result: vulnerabilities can be detected based on behavior and intent, not just syntax.
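The questions in the list above (which inputs are user-controlled, how data flows, what is reachable, where the sinks are) can be illustrated with a small taint-reachability search. To be clear about what this is: a classical breadth-first search over a hand-written data-flow graph, not a machine learning model and not CodeAnt AI's engine. The graph, node names, and `find_taint_path` are assumptions made for the example.

```python
from collections import deque


def find_taint_path(flows, sources, sinks, sanitizers=frozenset()):
    """Return the first path from a user-controlled source to a sink, or None.

    `flows` maps each node to the nodes its data flows into. Paths that pass
    through a sanitizer are not followed.
    """
    queue = deque([s] for s in sources)
    seen = set(sources)
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node in sinks:
            return path
        for nxt in flows.get(node, ()):
            if nxt in seen or nxt in sanitizers:
                continue
            seen.add(nxt)
            queue.append(path + [nxt])
    return None


# Hypothetical flow spanning several functions and files.
flows = {
    "request.args['id']": ["get_user(user_id)"],
    "get_user(user_id)": ["build_query(uid)"],
    "build_query(uid)": ["run(query)"],
    "run(query)": ["cursor.execute"],
}
sources = ["request.args['id']"]
sinks = {"cursor.execute"}

path = find_taint_path(flows, sources, sinks)
print(" -> ".join(path) if path else "no reachable sink")
```

Marking `build_query(uid)` as a sanitizer in this toy graph makes the search return `None`, which is the reachability distinction the list above describes.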

Where the Gap Becomes Visible

The difference between rule-based and AI-native SAST is most visible in three scenarios:

1. AI-Generated Code

Tools like GitHub Copilot and Claude Code generate patterns that may not exist in traditional rule databases. Rule-based engines miss these patterns until new rules are written. AI-native engines analyze behavior directly.

2. Complex Taint Flows

Some vulnerabilities require tracking data across multiple files, functions, and execution paths before reaching a sink.

Pattern matching is insufficient.

Semantic reasoning is required.

3. Emerging Vulnerability Classes

When a new vulnerability class appears, such as prompt injection in LLM-based applications, rule-based tools cannot detect it until signatures are created. AI-native systems can detect suspicious behavior before formal rules exist.

SonarQube’s AI Additions

SonarQube is introducing AI-powered features, including:

AI CodeFix in SonarCloud (automated fix suggestions)

AI Code Assurance (flags AI-generated code)

These additions improve developer experience. However, they are AI features layered on top of a rule-based engine. They do not replace the underlying pattern-matching architecture.

Architectural Difference in One Sentence

Rule-based SAST finds what it has been taught to recognize. AI-native SAST analyzes how the code behaves. That architectural difference shapes detection depth, false positive rates, and future adaptability.

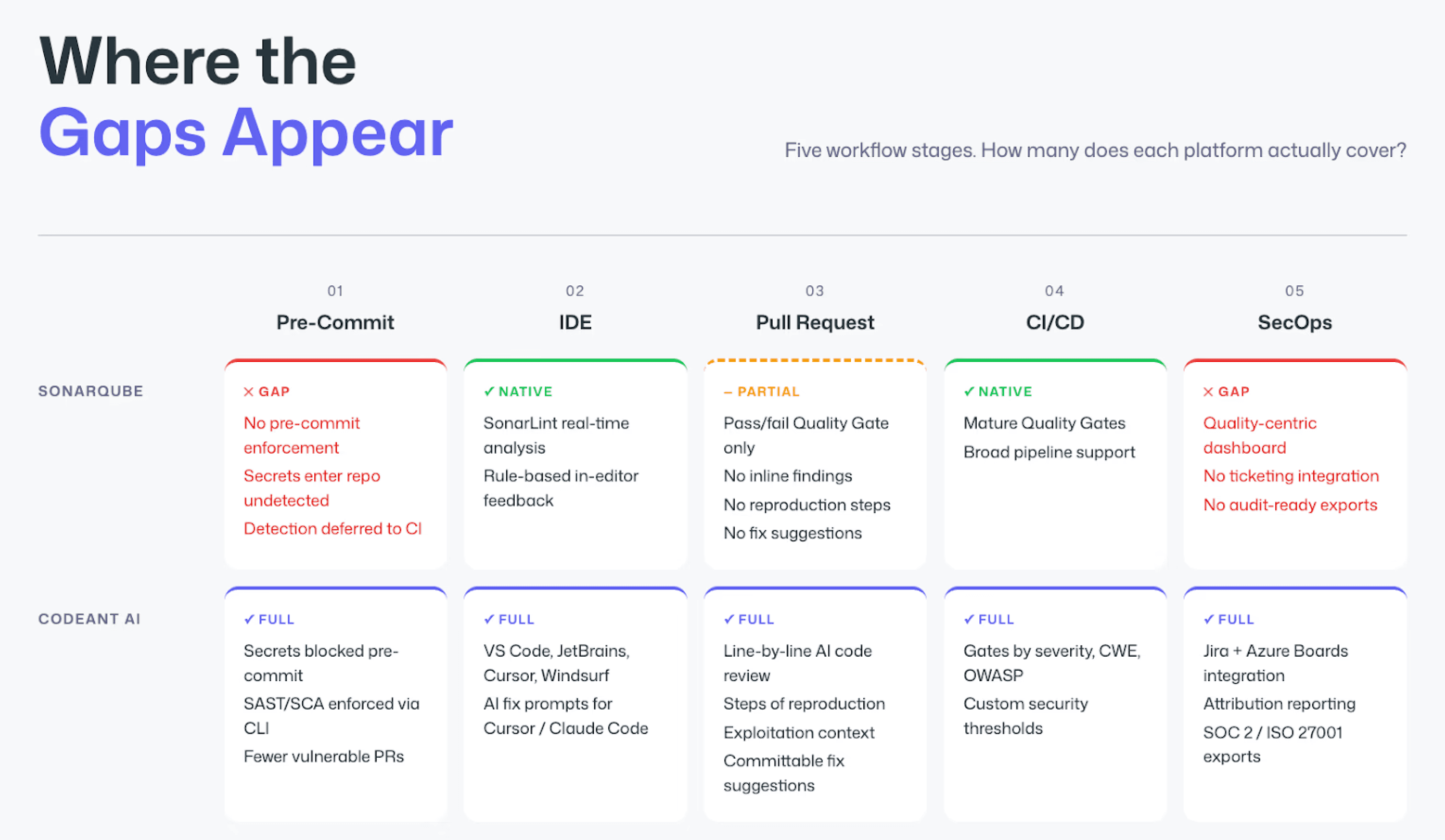

End-to-End Workflow Comparison (CLI → IDE → PR → CI/CD → SecOps)

Security tooling fails when it covers only one stage of the developer workflow. A scanner that only runs in CI means every vulnerability makes it into a pull request before being caught. A tool that only runs in the IDE means there is no enforcement at merge time. The right comparison is not just “which tool detects more” but “at each stage of my workflow, what does each tool actually do?”

| Workflow Stage | SonarQube | CodeAnt AI |
| --- | --- | --- |
| CLI + Pre-Commit | ✗ No CLI for pre-commit scanning. Secrets, credentials, and SAST issues can be committed freely. | ✓ CLI blocks secrets, credentials, API keys, tokens, and high-risk SAST/SCA issues before commit. |
| IDE | ✓ SonarLint (VS Code, IntelliJ, Eclipse, Visual Studio). Sync rules from SonarQube server. Real-time feedback on quality and some security rules. | ✓ VS Code, JetBrains (IntelliJ, PyCharm, WebStorm), Visual Studio, Cursor, Windsurf. In-context scanning with guided remediation and one-click fixes. AI prompt generation triggers Claude Code or Cursor to auto-fix specific vulnerabilities. |
| Pull Request | Partial. Quality Gate pass/fail posted as PR comment. Individual findings live in the SonarQube dashboard, not inline in the PR. The developer must navigate to the dashboard to review. | ✓ AI code review + security analysis runs on every PR. Findings appear as inline comments with Steps of Reproduction and one-click AI-generated fixes. PR summaries. Line-by-line review. Developers never leave the PR. |
| CI/CD | ✓ Broad CI/CD integration (Jenkins, GitHub Actions, GitLab CI, Azure DevOps, CircleCI). Quality Gates block merges based on configurable thresholds. | ✓ GitHub Actions, Jenkins, GitLab CI, Bitbucket Pipelines, Azure DevOps Pipelines. Configurable policy gates by severity, CWE category, OWASP classification, and custom rules. |
| SecOps / Compliance | Quality dashboard tracking code smells, duplication, complexity, and technical debt. Security findings visible but not the primary focus. No ticketing integration. No audit-ready export. | ✓ Unified SecOps dashboard: vulnerability trends over time, true positive vs. false positive rates, fix rates, EPSS exploit prediction scoring, OWASP/CWE/CVE mapping, team and repo risk distribution. Native Jira and Azure Boards integration. Audit-ready PDF/CSV reports for SOC 2, ISO 27001. Attribution reporting identifies which repos and developers introduce more risk. |

Workflow Coverage: Where the Gaps Appear

SonarQube covers two of the five workflow stages well:

CI/CD integration: broad pipeline support and mature, well-designed Quality Gates

IDE feedback: SonarLint provides real-time rule-based analysis inside the editor

These are real strengths, not marketing claims. However, gaps appear at the edges of the workflow.

Before the Commit

SonarQube does not provide pre-commit secret or SAST enforcement. Developers can commit credentials, tokens, or high-risk patterns. Detection happens later in CI, if it happens at all.

During the Pull Request

PR decoration typically provides a pass/fail Quality Gate status. Developers do not receive:

Inline findings

Evidence-backed reproduction steps

One-click fixes

The workflow becomes: Check dashboard → Investigate → Return to code → Fix manually.

After the Scan

SonarQube does not provide a SecOps-focused layer with:

Native ticketing integration

Attribution reporting

Audit-ready compliance exports

Its dashboard is quality-centric, not security-operations–centric.

How CodeAnt AI Covers All Five Stages

CodeAnt AI closes these gaps across the full lifecycle.

Pre-Commit (Shift Left)

Secrets and high-risk SAST/SCA issues are blocked before they enter Git.

This means:

Fewer vulnerable pull requests

Less remediation later in CI

Reduced accidental credential exposure

The PR never has to catch what the CLI already prevented.
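The general mechanism behind pre-commit blocking can be sketched as a Git hook that scans staged changes and exits non-zero when something looks like a secret, since Git aborts the commit if the pre-commit hook fails. This is a minimal sketch of that mechanism, not CodeAnt AI's CLI. The two patterns and the function names are assumptions, and a real scanner would cover far more secret types.

```python
import re
import subprocess
import sys

# Two illustrative patterns; a real scanner ships many more.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def find_secrets(diff_text):
    """Return (pattern name, line) pairs for added lines that look like secrets."""
    hits = []
    for line in diff_text.splitlines():
        # Only inspect added lines; skip the "+++ b/file" header lines.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((name, line[1:].strip()))
    return hits


def main():
    """Entry point for a .git/hooks/pre-commit script: exit 1 to abort the commit."""
    staged = subprocess.run(
        ["git", "diff", "--cached", "-U0"],
        capture_output=True, text=True, check=True,
    ).stdout
    found = find_secrets(staged)
    for name, _ in found:
        print(f"Commit blocked: possible {name} in staged changes", file=sys.stderr)
    sys.exit(1 if found else 0)
```

To try it, an executable `.git/hooks/pre-commit` script would import this module and call `main()`.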

IDE

Beyond rule syncing, CodeAnt AI integrates with AI coding environments. It can generate targeted prompts for tools like Claude Code or Cursor to automatically fix specific vulnerabilities. The IDE becomes a remediation surface, not just a warning surface.

Pull Request

Instead of a pass/fail summary, CodeAnt AI provides:

Line-by-line AI code review

Steps of Reproduction

Exploitation context

Committable fix suggestions

Developers stay inside the PR workflow. No dashboard context switching.

CI/CD

Policy gates can be enforced by:

Severity

CWE category

OWASP classification

Custom security thresholds

This ensures enforceable security standards at merge time.
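A policy gate of this kind reduces to a filter over findings. The sketch below blocks on a minimum severity or on specific CWE categories. The `Finding` and `Policy` types, the severity scale, and the sample findings are hypothetical and do not reflect CodeAnt AI's actual policy format.

```python
from dataclasses import dataclass

SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2, "critical": 3}


@dataclass(frozen=True)
class Finding:
    rule: str
    severity: str
    cwe: str


@dataclass(frozen=True)
class Policy:
    min_blocking_severity: str
    blocked_cwes: frozenset = frozenset()


def blocking_findings(findings, policy):
    """Return findings that should fail the pipeline under the given policy."""
    threshold = SEVERITY_ORDER[policy.min_blocking_severity]
    return [
        f for f in findings
        if SEVERITY_ORDER[f.severity] >= threshold or f.cwe in policy.blocked_cwes
    ]


# Illustrative scan output and policy.
findings = [
    Finding("sql-injection", "critical", "CWE-89"),
    Finding("hardcoded-credential", "medium", "CWE-798"),
    Finding("weak-hash", "low", "CWE-328"),
]
policy = Policy(min_blocking_severity="high", blocked_cwes=frozenset({"CWE-798"}))

blocked = blocking_findings(findings, policy)
print("FAIL" if blocked else "PASS", [f.rule for f in blocked])
```

Here the hardcoded credential blocks the merge despite its medium severity, because its CWE category is listed explicitly in the policy.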

SecOps and Compliance

At the post-scan stage, CodeAnt AI adds:

Vulnerability trend dashboards

Ticketing integration (Jira, Azure Boards)

Attribution reporting

Audit-ready exports for SOC 2 / ISO 27001

This gives security teams measurable remediation proof, something quality dashboards were not originally designed to provide.

The Practical Difference

The real distinction is not just feature depth. It is how many security gaps exist between workflow stages.

With SonarQube:

Secrets can enter the repository before detection

PRs receive summary status instead of evidence-backed findings

Compliance workflows require additional tooling

With CodeAnt AI:

Security begins before commit

Findings appear directly in the PR

Remediation is measurable and auditable

Fewer gaps between stages means fewer opportunities for vulnerabilities to slip through.

Deployment and Data Residency

For enterprises with strict data residency, regulatory, or air-gapped requirements, deployment architecture matters as much as SAST detection capability.

This is often a deciding factor in regulated industries such as finance, government, healthcare, and defense.

SonarQube Deployment Options

SonarQube offers full self-hosted deployment across all editions, including the free Community Build.

Self-Hosted (On-Premises)

Community Build runs on any Linux server with a database

Developer Edition adds branch analysis and expanded language support

Enterprise Edition adds governance features

Data Center Edition supports high availability for large organizations

SonarQube has years, in some cases more than a decade, of production deployments in:

Air-gapped environments

Financial institutions

Government agencies

Defense organizations

This long track record makes self-hosted SAST deployment one of SonarQube’s strongest dimensions.

Managed Cloud

SonarQube Cloud (formerly SonarCloud) is the SaaS option for teams that prefer managed infrastructure without maintaining servers.

CodeAnt AI Deployment Models

CodeAnt AI offers three deployment models, providing flexibility across infrastructure types.

1. Customer Data Center (Air-Gapped)

CodeAnt AI can deploy fully within a customer’s on-premises infrastructure, including completely air-gapped environments with zero external connectivity.

In this model:

No code leaves the network

No metadata leaves the network

No telemetry leaves the network

The full workflow operates internally:

CLI pre-commit scanning

IDE integration

PR-native findings

CI/CD policy gates

SecOps dashboard

Everything runs inside the customer’s environment.

2. Customer Cloud (AWS, GCP, Azure)

CodeAnt AI can deploy within a customer’s own cloud environment (VPC).

In this model:

The customer retains infrastructure control

Network boundaries remain customer-defined

Data stays inside the customer’s cloud account

This is suitable for enterprises that prefer cloud flexibility but require full control over security boundaries.

3. CodeAnt Cloud (Managed SaaS)

Teams can use CodeAnt AI’s hosted infrastructure.

This model is:

SOC 2 Type II certified

HIPAA compliant

Fully managed

It provides the fastest deployment option with no infrastructure overhead.

Zero Data Retention

Across all three models, CodeAnt AI offers zero data retention. Code is analyzed in memory and is never persisted to disk, even temporarily. This can be significant for organizations with strict compliance requirements.

Pricing Comparison

| Dimension | SonarQube | CodeAnt AI |
| --- | --- | --- |
| Pricing model | Per lines of code (Server) / per LOC (Cloud) | Per user |
| Free option | Community Build (free, open-source); Cloud free to 50K LOC | 14-day free trial |
| Small team (10 devs, ~500K LOC) | ~$2,500/yr (Developer Edition) | $2,400/yr |
| Mid-market (50 devs, ~2M LOC) | ~$40,000/yr (Enterprise Edition) | $24,000/yr (code quality + code security) |
| Enterprise (200 devs, ~10M LOC) | ~$200,000/yr (Enterprise Edition) | $96,000/yr (code quality + code security) |
| Includes SCA | Partial (Cloud only) | ✓ |
| Includes AI Code Review | ✗ | ✓ |
| Includes Secrets + IaC | Partial | ✓ |
| Includes SecOps Dashboard + Jira/Azure Boards | ✗ | ✓ |


That said, SonarQube is cost-effective for basic code quality and rule-based SAST, especially with its free Community Build.

However, when teams require deeper security coverage, including SCA, secrets detection, AI code review, and compliance reporting, additional tools are often needed, increasing total annual cost.

CodeAnt AI consolidates SAST, SCA, secrets, IaC scanning, AI code review, and SecOps reporting into a single per-user price.

The key pricing difference is structural:

SonarQube scales with lines of code (LOC)

CodeAnt AI scales with users

For fast-growing teams with expanding repositories, per-user pricing offers more predictable long-term budgeting.

Migration Path: SonarQube to CodeAnt AI

Teams considering a transition from SonarQube to CodeAnt AI can follow a structured, low-risk migration approach.

The process typically unfolds in four phases:

Phase 1: Export Existing Configuration

Export SonarQube quality profiles

Document custom rules and thresholds

Capture existing Quality Gate logic

Phase 2: Run in Parallel (Shadow Mode)

Connect repositories to CodeAnt AI

Run scans alongside SonarQube

Compare detection output side by side

Evaluate false positives and reproduction depth

This phase ensures objective validation before any switch.
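A side-by-side comparison during shadow mode can be as simple as set arithmetic over exported findings. The sketch below assumes each tool's results have already been normalized to `(file, line, CWE)` tuples. That normalization, the sample data, and `compare_findings` are assumptions, and exact line matching is a simplification, since two tools may report the same issue on slightly different lines.

```python
def compare_findings(sonarqube, codeant):
    """Split two sets of (file, line, cwe) findings into shared and tool-specific groups."""
    return {
        "both": sorted(sonarqube & codeant),
        "only_sonarqube": sorted(sonarqube - codeant),
        "only_codeant": sorted(codeant - sonarqube),
    }


# Illustrative, hand-written findings.
sonarqube = {
    ("app/db.py", 42, "CWE-89"),
    ("app/auth.py", 10, "CWE-798"),
}
codeant = {
    ("app/db.py", 42, "CWE-89"),
    ("app/api.py", 77, "CWE-918"),
}

report = compare_findings(sonarqube, codeant)
for group, items in report.items():
    print(group, items)
```

Findings that appear in only one group are the ones worth triaging by hand, since they are either missed detections or false positives for one of the tools.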

Phase 3: Map Quality Gates

Align SonarQube Quality Gates with CodeAnt AI policy gates

Map severity thresholds and CWE categories

Validate enforcement behavior in CI/CD

Phase 4: Transition Teams

Confirm findings parity

Enable PR-native workflow

Conduct lightweight developer onboarding

Most teams complete onboarding in days, not weeks, since the workflow shift is from dashboard-centric review to PR-native integration.

Key Migration Considerations

Before decommissioning SonarQube, teams should evaluate:

1. Custom Rules

If your organization has invested heavily in custom SonarQube rules, verify that CodeAnt AI’s detection covers the same vulnerability classes.

2. Historical Data

Export historical SonarQube quality trends before shutdown. CodeAnt AI tracks its own trends but does not import SonarQube history.

3. Developer Workflow Change

The primary shift is architectural:

Dashboard review → PR-native security workflow

This typically requires a short onboarding session, not extended retraining.

Real-World Migration Examples

Migration from SonarQube to CodeAnt AI spans industries and company sizes.

In fintech, Bajaj Finserv Health migrated its 300-developer team from SonarCloud and manual review processes to CodeAnt AI. The result: PR review time fell from hours to seconds, and unpredictable LOC-based pricing was eliminated. Their CISO described the outcome as “vulnerability-free code and reducing code review time from hours to seconds.”

In cybersecurity, Commvault, a US public company, migrated across 800 developers and more than 17,000 merge requests. Approximately 25% of reviews were handled entirely by AI.

In aviation, Akasa Air evaluated SonarQube and selected CodeAnt AI. A $30 billion public automotive company also transitioned from SonarQube to CodeAnt AI.

Multiple US cybersecurity startups have consolidated SonarQube and other tools into a single CodeAnt AI platform.

Across these migrations, the common themes were:

Security depth limitations in rule-based SAST

Friction from LOC-based pricing

Need for AI-native detection capabilities

Desire to consolidate SAST, SCA, secrets, and code review

Check out more real use cases here.

Start Your Evaluation

You can import your SonarQube repositories into CodeAnt AI in minutes. Run both tools in parallel, compare findings, and validate detection depth with the free 14-day trial.

CodeAnt AI vs SonarQube: Which SAST Tool Should You Choose in 2026

There is no universally “better” tool. The right choice depends on your team’s priorities, existing infrastructure, and security requirements.

SonarQube is mature, widely adopted, and strong in code quality analysis.

CodeAnt AI is built for modern application security workflows. Its AI-native SAST engine, evidence-backed findings with Steps of Reproduction, PR-native integration, and consolidated security platform reflect a shift from dashboard-centric analysis to developer-embedded security.

The decision ultimately depends on what you prioritize:

If you want rule-based SAST with deep code quality tracking and a free entry point, SonarQube may be sufficient.

If you need AI-native detection, workflow-native security, SCA + secrets + IaC in one platform, and pricing that scales with users instead of lines of code, CodeAnt AI offers a structurally different model.

In 2026, the gap between rule-based and AI-native SAST is no longer incremental. It is architectural. For a broader view of the landscape beyond these two tools, see our full 13-tool SAST comparison.

Now, if you are ready to see the difference in your own code, book a 30-minute call with our security engineers and:

Run CodeAnt AI in parallel with SonarQube

Compare findings side by side

FAQs

Is CodeAnt AI better than SonarQube for SAST?

What is the difference between rule-based and AI-native SAST?

Does SonarQube include SCA and secrets scanning?

How does pricing scale between SonarQube and CodeAnt AI?

Can CodeAnt AI replace SonarQube entirely?