Most AI code review evaluations start with a demo, a free trial, and a Slack poll of the dev team. That works fine for a 15-person startup. For an enterprise with 300+ developers across multiple business units, four git platforms, a security team with opinions, and a procurement process that takes 90 days, it's the wrong starting point.

Enterprise AI code review has a different problem set. It's not "does this tool catch bugs?" It's "can this tool work at scale across every team, every platform, every compliance boundary, without becoming a bottleneck or a liability?"

This guide covers what enterprise engineering orgs actually need from an AI code review platform, the requirements that matter at scale, the evaluation criteria that separate production-ready from proof-of-concept, and how to build a rollout that sticks.

If you're still in the evaluation phase, our best AI code review tools comparison covers the full landscape with verified pricing and platform support.

TL;DR

Enterprise AI code review requires multi-platform support, compliance certifications, role-based access, and centralized policy management — capabilities most SMB-focused tools lack

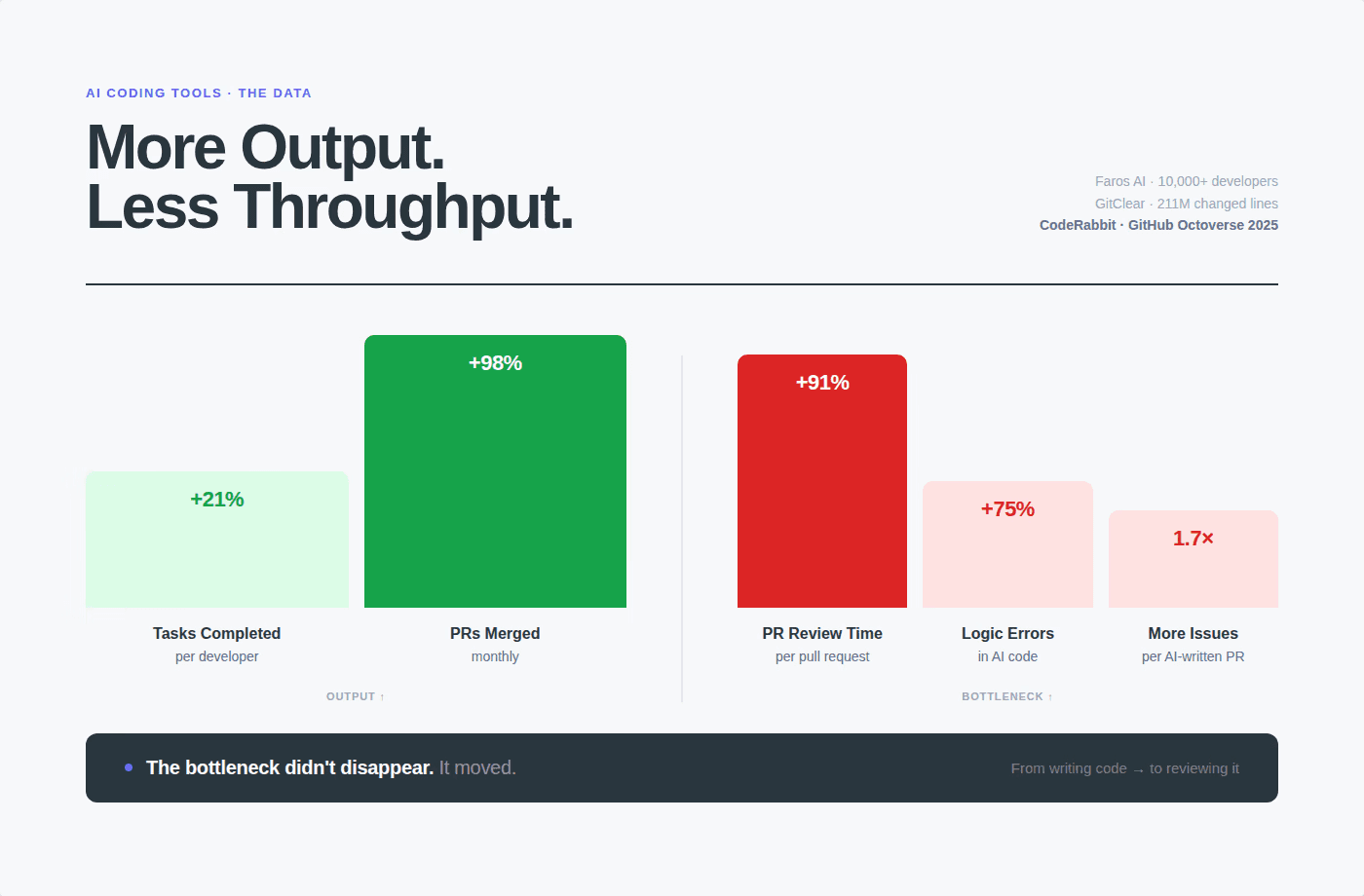

AI-generated code is raising the stakes: teams with high AI adoption merge 98% more PRs, review time increases 91%, and AI PRs produce 1.7× more issues per PR

The right enterprise platform combines SAST + secrets detection + IaC + SCA + AI-native review in one tool, eliminating the 4–6 point solutions most large orgs currently operate

Deployment model matters: SaaS, hybrid, and on-premises options each have different compliance implications

CodeAnt AI is SOC 2 Type II and HIPAA certified, supports all four major git platforms, and is deployed across enterprises including Commvault (800+ developers) and Bajaj Finserv (300+ developers)

Why Enterprise AI Code Review is a Different Problem

Scale changes everything.

A 15-person team adopts a code review tool by installing a GitHub App and turning it on. A 500-person engineering org has to answer questions like: Which teams get access first? How do we handle the GitLab teams in EMEA and the Azure DevOps teams in the US? When the security team flags a finding, who owns remediation? How do we prove to auditors that our review process meets SOC 2 requirements? Meanwhile, high-AI-adoption teams merge 98% more PRs while review time increases 91%.

At enterprise scale, that's not a productivity story; it's a risk story. More code is merging faster, with fewer humans able to review it meaningfully, across a codebase that may span millions of lines and dozens of teams.

The enterprise need isn't just review automation. It's a code quality control layer that works at the speed of AI-assisted development, across every team, on every platform, without requiring manual configuration for each repo.

7 Requirements That Separate Enterprise-Grade from SMB Tools

1. Multi-platform git support

Enterprise engineering orgs rarely live on a single git platform. Acquisitions bring different toolchains. Platform migrations take years. Different teams have different preferences and historical commitments.

The reality at most large orgs: GitHub for product teams, GitLab for DevSecOps, Bitbucket for legacy Java services, Azure DevOps for .NET and Windows workloads. A code review tool that only supports GitHub covers maybe 40–60% of your codebase.

What to look for: Native, full-featured support for GitHub, GitLab (cloud and self-hosted), Bitbucket, and Azure DevOps, not partial integrations that work on some features but not others.

| Platform | Full support needed | Why it matters |
|---|---|---|
| GitHub | ✅ | Most modern product teams |
| GitLab | ✅ | DevSecOps, EMEA teams, self-hosted requirements |
| Bitbucket | ✅ | Legacy Java/enterprise services, Atlassian shops |
| Azure DevOps | ✅ | .NET stacks, Microsoft shops, regulated industries |

CodeAnt AI is one of four tools in the market that natively support all four platforms at feature parity.

Most tools support GitHub well and treat the others as second-class.
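One way a multi-platform tool avoids treating non-GitHub platforms as second-class is to normalize every incoming pull/merge request webhook into a single internal shape before any review logic runs. The sketch below shows only that detection step, keyed on the event header or payload field each platform sends with pull/merge request webhooks. The `PullRequestEvent` type and `detect_pr_event` function are hypothetical names, and a production integration would also verify each platform's webhook signature or secret.

```python
from dataclasses import dataclass
from typing import Any, Mapping, Optional


@dataclass(frozen=True)
class PullRequestEvent:
    """Hypothetical platform-neutral shape that downstream review logic consumes."""
    platform: str
    action: str


def detect_pr_event(headers: Mapping[str, str], body: Mapping[str, Any]) -> Optional[PullRequestEvent]:
    """Identify which git platform sent a pull/merge request webhook."""
    # HTTP header names are case-insensitive, so compare them lowercased.
    h = {k.lower(): v for k, v in headers.items()}

    if h.get("x-github-event") == "pull_request":
        return PullRequestEvent("github", str(body.get("action", "")))
    if h.get("x-gitlab-event") == "Merge Request Hook":
        attrs = body.get("object_attributes") or {}
        return PullRequestEvent("gitlab", str(attrs.get("action", "")))
    if h.get("x-event-key", "").startswith("pullrequest:"):
        # Bitbucket Cloud puts the action in the header, e.g. "pullrequest:created".
        return PullRequestEvent("bitbucket", h["x-event-key"].split(":", 1)[1])
    event_type = str(body.get("eventType", ""))
    if event_type.startswith("git.pullrequest."):
        # Azure DevOps service hooks put it in the body, e.g. "git.pullrequest.updated".
        return PullRequestEvent("azure_devops", event_type.rsplit(".", 1)[1])
    return None  # not a pull/merge request event this sketch handles
```

Once events share one shape, quality gates, comment formatting, and policy lookup can be written once instead of four times. That is the practical meaning of "feature parity".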

2. Security and compliance certifications

Enterprise security teams don't care about demos. They care about trust frameworks. Before any tool touches production code, procurement and InfoSec will ask:

Is the vendor SOC 2 Type II certified?

Are we HIPAA-compliant if we're in healthcare?

What is the data retention and processing policy?

Is our source code used for model training?

Where is data processed: US, EU, or elsewhere?

Can we get a Business Associate Agreement (BAA)?

These aren't edge cases. They're table stakes for any enterprise software procurement. A tool without SOC 2 Type II certification simply won't pass InfoSec review at most large organizations.

CodeAnt AI is SOC 2 Type II certified and HIPAA compliant. Source code is never used for model training. Data processing agreements and BAAs are available for regulated industries.

3. Flexible deployment models

Not all enterprise codebases can go to SaaS. Financial services firms, defense contractors, and healthcare organizations often have data residency requirements that prevent source code from leaving their infrastructure.

Three deployment models matter:

SaaS (cloud-hosted): Fastest time to value, lowest operational overhead. Appropriate for most teams without strict data residency requirements. CodeAnt AI's cloud offering runs on isolated infrastructure with no cross-tenant data access.

Hybrid: The vendor's cloud coordinates review processing, but code never leaves your network. A local agent handles code access, and only metadata and findings traverse the network. Appropriate for organizations with data residency concerns but cloud-first infrastructure.

On-premises: Full deployment within your own infrastructure. Highest operational overhead, but complete data sovereignty. Required for air-gapped environments, classified systems, or organizations with strict regulatory requirements.

What to evaluate: Does the vendor offer genuine on-premises deployment, or is "on-premises" a marketing term for a VPC deployment? Can you bring your own LLM (BYOLLM) to avoid sending code to external AI providers?
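Here is a minimal sketch of the hybrid boundary. It assumes a hypothetical local agent that analyzes code inside your network and uploads only finding metadata. The `Finding` fields and the `UPLOADABLE_FIELDS` allowlist are illustrative assumptions, not any vendor's actual wire format.

```python
from dataclasses import asdict, dataclass

# Hypothetical allowlist: the only fields the local agent may send to the vendor cloud.
UPLOADABLE_FIELDS = {"rule_id", "severity", "file_path", "line", "message"}


@dataclass
class Finding:
    rule_id: str
    severity: str
    file_path: str
    line: int
    message: str
    snippet: str  # surrounding source code; must stay inside your network


def to_upload_payload(findings: list[Finding]) -> list[dict]:
    """Drop every field not on the allowlist before anything crosses the network."""
    return [
        {key: value for key, value in asdict(f).items() if key in UPLOADABLE_FIELDS}
        for f in findings
    ]
```

The explicit allowlist is the design choice worth looking for. Any field not named in it, including code snippets, stays on your side of the boundary. Free-text fields such as `message` deserve the same scrutiny, because a careless rule could echo code into them.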

4. Centralized policy management

At enterprise scale, you can't configure review rules repo by repo. You need a way to define policies once and enforce them across all repositories, all teams, and all platforms.

This means:

Organization-level quality gates: define what blocks a merge across your entire org, not just per-repo

Team-level overrides: allow teams to set stricter rules within the organizational baseline

Rule inheritance: new repos automatically inherit org policies without manual setup

Audit logs: every policy change, every override, every exception is logged and attributable

Without centralized policy management, enterprise rollouts devolve into configuration sprawl: different rules on different repos, no consistent security baseline, and no way to demonstrate to auditors that your review process is coherent.
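Rule inheritance with tighten-only team overrides fits in a small merge function. In this sketch, all names are hypothetical. Each policy maps a finding severity to the action it triggers. A team override is accepted only if it is at least as strict as the org baseline, and every attempted change, accepted or rejected, is appended to an audit log.

```python
from datetime import datetime, timezone

# Actions ordered from least to most strict.
STRICTNESS = {"log": 0, "warn": 1, "block": 2}

# Hypothetical org-wide baseline: what each severity does to a merge.
ORG_BASELINE = {"critical": "block", "high": "block", "medium": "warn", "low": "log"}


def effective_policy(team: str, overrides: dict[str, str], actor: str,
                     audit_log: list[dict]) -> dict[str, str]:
    """Return the team's policy: org baseline plus any overrides that only tighten it."""
    policy = dict(ORG_BASELINE)  # new teams and repos inherit the baseline automatically
    for severity, action in overrides.items():
        accepted = STRICTNESS[action] >= STRICTNESS[ORG_BASELINE[severity]]
        if accepted:
            policy[severity] = action
        # Every attempted change is attributable, including rejected ones.
        audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "team": team,
            "change": f"{severity} -> {action}",
            "accepted": accepted,
        })
    return policy
```

For example, a payments team can promote medium findings to "block", but an attempt to downgrade high findings to "warn" is rejected and still logged.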

5. Role-based access control (RBAC)

Enterprise engineering orgs have complex permission structures. A junior developer shouldn't be able to modify security quality gates. A team lead should be able to view findings across their team but not other teams. A CISO needs org-wide visibility without being able to change individual team configurations.

What to look for: Granular RBAC that maps to your org's actual structure (org admin, team lead, developer, security reviewer, read-only auditor), with permission inheritance that doesn't require manual configuration for every new hire.
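Permission inheritance can be as simple as roles that extend other roles, so onboarding a new hire is a single role assignment. The role names below come from the paragraph above. The permission strings, the `:org`/`:team` scoping convention, and the helper functions are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Each role lists its own permissions plus the roles it inherits from.
ROLES = {
    "auditor": {"inherits": [], "perms": {"findings:read:org", "audit_log:read:org"}},
    "developer": {"inherits": [], "perms": {"findings:read:team", "findings:acknowledge:team"}},
    "team_lead": {"inherits": ["developer"], "perms": {"policy:edit:team"}},
    "security_reviewer": {"inherits": ["developer"], "perms": {"findings:read:org", "exceptions:approve:org"}},
    "org_admin": {"inherits": ["team_lead", "security_reviewer", "auditor"],
                  "perms": {"policy:edit:org", "gates:edit:org"}},
}


def permissions(role: str) -> set[str]:
    """Resolve a role's permissions, including everything it inherits."""
    spec = ROLES[role]
    perms = set(spec["perms"])
    for parent in spec["inherits"]:
        perms |= permissions(parent)
    return perms


@dataclass
class User:
    name: str
    role: str
    team: str


def can(user: User, action: str, target_team: Optional[str] = None) -> bool:
    """Org-scoped permissions apply everywhere; team-scoped ones only to the user's team."""
    perms = permissions(user.role)
    if f"{action}:org" in perms:
        return True
    return f"{action}:team" in perms and target_team == user.team
```

This matches the examples in the text. A CISO with the auditor role can read findings org-wide but cannot edit policy. A team lead can read only their own team's findings. A developer cannot touch quality gates.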

6. SAST + AI-native review in one platform

Most large orgs currently run 4–6 separate tools in their code quality stack: a SAST scanner, a secrets detection tool, a dependency checker, an IaC scanner, a code review bot, and maybe a DORA metrics platform. Each has its own integration, its own alert format, its own vendor relationship, and its own renewal cycle.

The enterprise case for consolidation is strong:

Developer experience: One tool, one comment format, one place to see all findings

Reduced noise: Correlated findings across tools reduce duplicate alerts

Vendor management: One contract, one renewal, one support relationship

Cost: Consolidating 4 tools into 1 typically reduces total spend by 30–50%

CodeAnt AI bundles the following in a single platform:

SAST (30+ languages)

IaC scanning (Kubernetes, Terraform, Docker)

SCA (vulnerable dependency detection)

DORA metrics
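The "reduced noise" benefit comes from correlating findings that different engines report for the same problem. Here is a minimal sketch. It assumes each finding carries a file, line, and CWE identifier, which is a common but not universal convention, and it lets the highest severity win when duplicates collide. The engine names are hypothetical.

```python
from dataclasses import dataclass

SEVERITY_RANK = {"low": 0, "medium": 1, "high": 2, "critical": 3}


@dataclass(frozen=True)
class Finding:
    tool: str
    file: str
    line: int
    cwe: str
    severity: str


def correlate(findings: list[Finding]) -> list[dict]:
    """Collapse findings that point at the same file, line, and CWE into one alert."""
    merged: dict[tuple[str, int, str], dict] = {}
    for f in findings:
        key = (f.file, f.line, f.cwe)
        alert = merged.setdefault(key, {"file": f.file, "line": f.line, "cwe": f.cwe,
                                        "severity": f.severity, "tools": []})
        alert["tools"].append(f.tool)  # keep provenance for triage
        if SEVERITY_RANK[f.severity] > SEVERITY_RANK[alert["severity"]]:
            alert["severity"] = f.severity
    return list(merged.values())
```

A SAST rule and the AI reviewer flagging the same SQL injection then produce one PR comment instead of two, with both sources listed.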

7. Enterprise support and SLA commitments

When a code review tool goes down during a major release, it's a business-critical incident. Enterprise customers need:

Dedicated support channels (not a shared Slack community)

Committed SLA for response and resolution

Named customer success manager for large accounts

Professional services for complex rollouts

Executive escalation path for critical issues

Verify these commitments in the MSA, not just the sales pitch.

Building the Enterprise Review Pipeline

A well-designed enterprise review pipeline has five layers. Each layer catches different issues; together they achieve the 93–94% accuracy that research shows is possible with hybrid SAST + AI-native approaches.

Layer 1: Pre-commit hooks (optional but recommended)

Catch the most obvious issues before code is even pushed. Pre-commit hooks run SAST rules and secret detection locally, giving developers instant feedback without waiting for a PR review cycle.

Pre-commit hooks reduce the volume of obvious issues that reach PR review — making the AI-native review layer more signal-dense when it runs.
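A local secrets check can be a short script called from a git `pre-commit` hook. This sketch scans only the lines being added in the staged diff (`git diff --cached`) for two illustrative patterns: the `AKIA` prefix used by AWS access key IDs and a generic hard-coded-password assignment. Real secret scanners ship far larger, tuned rule sets plus entropy checks, so treat these regexes as placeholders.

```python
import re
import subprocess
import sys

# Illustrative patterns only; production scanners use much larger, tuned rule sets.
SECRET_PATTERNS = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded-password": re.compile(r"(?i)\bpassword\s*[:=]\s*['\"][^'\"]{8,}['\"]"),
}


def scan_diff(diff_text: str) -> list[tuple[str, str]]:
    """Return (rule, line) pairs for added lines in a unified diff that match a pattern."""
    hits = []
    for line in diff_text.splitlines():
        # Inspect only added lines, skipping the "+++ b/path" file headers.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((rule, line[1:].strip()))
    return hits


def main() -> int:
    """Scan the staged changes; a non-zero return value should abort the commit."""
    diff = subprocess.run(["git", "diff", "--cached", "-U0"],
                          capture_output=True, text=True, check=True).stdout
    hits = scan_diff(diff)
    for rule, _line in hits:
        print(f"possible secret ({rule}) in staged changes", file=sys.stderr)
    return 1 if hits else 0
```

To use it as a hook, call `sys.exit(main())` from the hook script's entry point. Git aborts the commit when a pre-commit hook exits with a non-zero status.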

Layer 2: PR-triggered automated review

The core of the pipeline. When a PR is opened or updated:

SAST scan across all changed files and their dependencies

Secrets detection on every line of the diff

SCA scan on any new or modified dependency declarations

IaC scan on infrastructure configuration files

AI-native review with full codebase context, covering logic errors, hallucinated APIs, architectural violations, and convention drift

All findings post as inline PR comments within 2 minutes for most codebases.
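Deciding which engine runs on which file is mostly path matching. Below is a sketch of that routing step. The scanner names are hypothetical, and the manifest and IaC patterns are a deliberately short sample. Every changed file goes to SAST, secrets detection, and AI review, while dependency manifests also go to SCA and infrastructure files also go to IaC scanning. Routing here works per file rather than per diff line.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath

# Illustrative patterns; a real router would cover many more ecosystems.
DEPENDENCY_MANIFESTS = {"package.json", "package-lock.json", "requirements.txt",
                        "pyproject.toml", "pom.xml", "go.mod", "Cargo.toml"}
IAC_PATTERNS = ["*.tf", "Dockerfile", "*.Dockerfile", "k8s/*.yaml", "k8s/*.yml"]


def route(changed_files: list[str]) -> dict[str, list[str]]:
    """Map each (hypothetical) scanner name to the changed files it should inspect."""
    plan: dict[str, list[str]] = {"sast": [], "secrets": [], "sca": [], "iac": [], "ai_review": []}
    for path in changed_files:
        name = PurePosixPath(path).name
        # Every changed file gets SAST, secrets detection, and AI-native review.
        for scanner in ("sast", "secrets", "ai_review"):
            plan[scanner].append(path)
        if name in DEPENDENCY_MANIFESTS:
            plan["sca"].append(path)
        if any(fnmatch(path, p) or fnmatch(name, p) for p in IAC_PATTERNS):
            plan["iac"].append(path)
    return plan
```

Running the engines in parallel on their routed subsets, rather than every engine on every file, helps keep feedback inside a couple of minutes on large PRs.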

Layer 3: Quality gates as branch protection

Configure quality gates as branch protection rules that block merges on critical findings.

The key decision is what blocks, what warns, and what requires human review. For AI-generated code, err toward stricter gates. Its defect rate is higher than that of human-written code, and the cost of a security vulnerability reaching production is orders of magnitude higher than the cost of a PR that takes an extra hour to fix.
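On GitHub, a quality gate enforced through branch protection usually means making the review tool's status check required on the protected branch. The sketch below builds the JSON body for GitHub's REST endpoint `PUT /repos/{owner}/{repo}/branches/{branch}/protection`. The check name `ai-review/quality-gate` is a hypothetical placeholder for whatever context your tool actually reports. GitLab, Bitbucket, and Azure DevOps expose equivalent settings through their own APIs. Only the payload is built here, so the code runs without credentials.

```python
import json


def branch_protection_payload(check_name: str, approvals: int = 1) -> dict:
    """Body for GitHub's 'update branch protection' REST call.

    The review tool's check must pass before merge, and human approval is still required.
    """
    return {
        "required_status_checks": {
            "strict": True,  # branch must be up to date with the base before merging
            "checks": [{"context": check_name}],
        },
        "enforce_admins": True,  # admins cannot bypass the gate
        "required_pull_request_reviews": {"required_approving_review_count": approvals},
        "restrictions": None,  # no additional push restrictions
    }


print(json.dumps(branch_protection_payload("ai-review/quality-gate"), indent=2))
```

To apply it, send the body as an authenticated PUT request to that endpoint with a token that has admin rights on the repository. Whether a finding blocks or only warns is then decided by the tool when it reports the check as failed or passed.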

Layer 4: Security review workflow for escalations

Not every security finding should block the PR and require a developer fix. Some findings need a security engineer's judgment: Is this a true positive? Is there a compensating control? Does the risk profile warrant an exception?

Build an escalation path:

Critical findings: Auto-block, notify security team Slack channel, require security sign-off to merge

High findings: Block, assign to the developer with a 48-hour SLA to resolve or dispute

Medium findings: Warn, developer can acknowledge and merge with documented rationale

Low findings: Log, visible in dashboard, no merge impact

This prevents both under-reaction (ignoring real vulnerabilities) and over-reaction (blocking every PR on false positives until developers stop trusting the tool).
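The escalation path above amounts to a lookup table from severity to merge effect, notification, and deadline. The sketch below uses hypothetical names throughout. The tiers and the 48-hour SLA come from the list. It also assumes that "acknowledge and merge" for medium findings means the acknowledgement must be recorded before the merge is allowed.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class Escalation:
    merge_effect: str                    # "block", "warn", or "none"
    notify_security: bool                # post to the security team's channel
    needs_security_signoff: bool         # block lifts only with security sign-off
    resolve_within: Optional[timedelta]  # developer SLA, if any


# Hypothetical encoding of the four-tier escalation path.
ESCALATION_POLICY = {
    "critical": Escalation("block", True, True, None),
    "high": Escalation("block", False, False, timedelta(hours=48)),
    "medium": Escalation("warn", False, False, None),
    "low": Escalation("none", False, False, None),
}


def can_merge(open_severities: list[str], security_signoff: bool, acknowledged: bool) -> bool:
    """Decide mergeability from the severities of a PR's still-open findings."""
    for severity in open_severities:
        rule = ESCALATION_POLICY[severity]
        if rule.merge_effect == "block":
            # High findings must be resolved or disputed (closed) first;
            # critical ones can merge only with a security sign-off.
            if not (rule.needs_security_signoff and security_signoff):
                return False
        elif rule.merge_effect == "warn" and not acknowledged:
            return False  # medium: merge after a documented acknowledgement
    return True


def sla_deadline(severity: str, opened_at: datetime) -> Optional[datetime]:
    """When the developer must resolve or dispute the finding, if an SLA applies."""
    window = ESCALATION_POLICY[severity].resolve_within
    return opened_at + window if window is not None else None
```

Keeping the policy as data rather than scattered `if` statements makes it reviewable by the security team and easy to log when it changes.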

Layer 5: Org-level visibility and reporting

The CISO and engineering leadership need aggregate visibility — not individual PR findings, but trend data:

What is the mean time to remediate security findings?

Which teams have the highest critical finding rates?

Is our change failure rate improving as AI review matures?

How many findings were auto-fixed vs. manually resolved?

What is the false positive rate, and is it trending down?

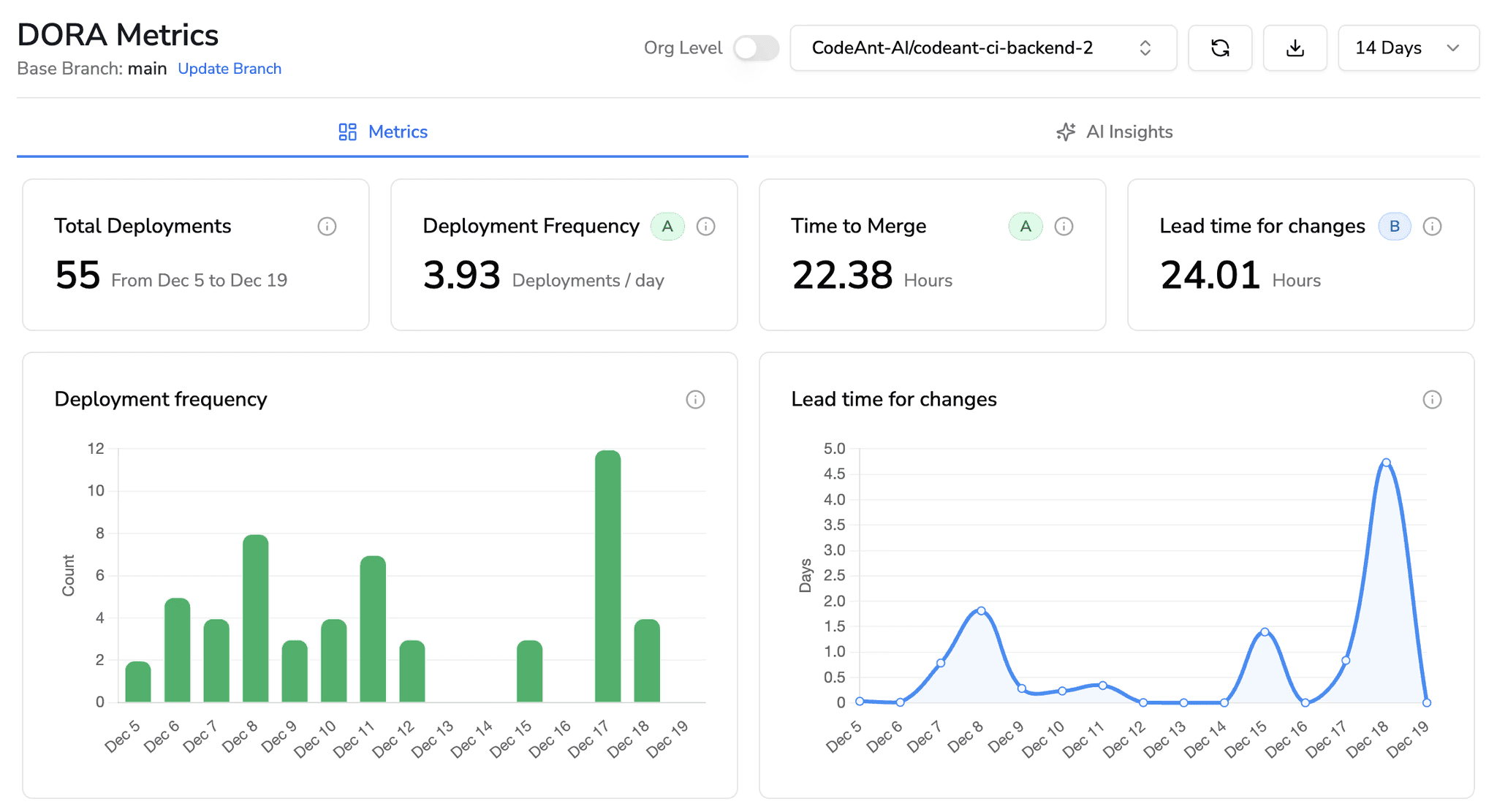

CodeAnt AI's DORA metrics dashboard surfaces these at the org level, team level, and individual developer level, giving engineering leaders the data to have informed conversations about code quality without digging through individual PR histories.
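Most of the Layer 5 questions reduce to simple aggregations over a log of closed findings. The sketch below assumes each record stores its team, severity, open and resolve timestamps, and how it was closed. All of these field names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class ClosedFinding:
    team: str
    severity: str
    opened: datetime
    resolved: datetime
    resolution: str  # "auto_fix", "manual_fix", or "false_positive"


def review_metrics(findings: list[ClosedFinding]) -> dict:
    """Leadership-level aggregates; assumes at least one finding was actually fixed."""
    fixed = [f for f in findings if f.resolution != "false_positive"]
    return {
        # Mean time to remediate, in hours, over findings that were real and fixed.
        "mttr_hours": mean((f.resolved - f.opened) / timedelta(hours=1) for f in fixed),
        "false_positive_rate": 1 - len(fixed) / len(findings),
        "auto_fix_share": sum(f.resolution == "auto_fix" for f in fixed) / len(fixed),
        "critical_by_team": Counter(f.team for f in findings if f.severity == "critical"),
    }
```

Running the same function over each week's or quarter's findings gives the trend lines, for example whether the false positive rate is actually falling.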

The AI-Generated Code Problem at Enterprise Scale

The stakes are higher for enterprises. A 500-developer org with 30% AI adoption has 150 developers working with AI assistants; at roughly one PR each per day, that is about 150 AI-assisted PRs per day. If those PRs average 1.7× more issues than human PRs, and your review process isn't calibrated for AI-specific failure modes, you're accumulating technical debt and security risk at a rate that manual review can't address.
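The arithmetic behind that estimate, made explicit. The rate of one PR per AI-assisted developer per day is what the 150 figure implies. The sketch reads "1.7× more issues" as 1.7 times the human rate. The baseline of 2 issues per human PR is a hypothetical parameter that you would replace with your own data.

```python
def extra_issues_per_day(developers: int, ai_adoption: float, prs_per_dev_per_day: float,
                         human_issues_per_pr: float,
                         ai_issue_multiplier: float = 1.7) -> tuple[float, float]:
    """Return (AI-assisted PRs per day, additional issues per day vs. human-written PRs)."""
    ai_prs = developers * ai_adoption * prs_per_dev_per_day
    # Each AI PR carries multiplier x baseline issues, so the excess per PR
    # is (multiplier - 1) x baseline.
    extra = ai_prs * (ai_issue_multiplier - 1) * human_issues_per_pr
    return ai_prs, extra


prs, extra = extra_issues_per_day(500, 0.30, 1.0, human_issues_per_pr=2.0)
print(f"{prs:.0f} AI-assisted PRs/day, ~{extra:.0f} extra issues/day")
```

Under these assumptions, that is roughly 210 additional issues a day for reviewers to catch. The figure scales linearly with whichever input you adjust.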

The specific enterprise risks of AI-generated code at scale:

Copy-paste amplification across repositories. When an AI generates a buggy pattern and developers accept it, the same pattern may appear in dozens of repos within weeks, especially if the AI is trained on or has context from your internal codebase. Industry analyses reported a rise in duplicated code blocks (5+ lines) during 2024.

Hallucinated dependencies creating supply chain risk. ~34% of AI-suggested package names don't exist in public registries. Attackers increasingly register packages under AI-hallucinated names, waiting for organizations to inadvertently install them. At enterprise scale, one developer accepting a hallucinated import can become a supply chain vulnerability before anyone notices.

Compliance drift in regulated code. AI-generated code doesn't know your regulatory obligations. It doesn't know which fields contain PII, which services are in scope for PCI, or which data flows require audit logging. Without review rules that enforce these requirements, compliance gaps accumulate silently.

Inconsistent security posture across teams. Different teams adopt different AI tools and have different review rigor. Without centralized policy, the security baseline of your codebase becomes the lowest-common-denominator of your least-careful team.
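One review rule that addresses the hallucinated-dependency risk is to check every newly added package name against the public registry and against an internal allowlist before merge. Existence alone is not enough, because attackers register hallucinated names. This sketch therefore separates packages that don't exist from packages that exist but haven't been vetted. It queries PyPI's JSON API (`https://pypi.org/pypi/<name>/json`, which returns HTTP 404 for unknown projects). The `approved` set is a hypothetical stand-in for your organization's approved-package list, and the lookup function can be swapped out so the logic can be tested offline.

```python
import re
import urllib.error
import urllib.request
from typing import Callable, Iterable


def exists_on_pypi(name: str) -> bool:
    """True if PyPI knows the project; its JSON API answers 404 for unknown names."""
    try:
        with urllib.request.urlopen(f"https://pypi.org/pypi/{name}/json", timeout=10):
            return True
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return False
        raise  # other HTTP errors are not evidence either way


def normalize(name: str) -> str:
    """PEP 503 name normalization: lowercase, runs of '-', '_', '.' become '-'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def check_new_dependencies(names: Iterable[str], approved: set[str],
                           exists: Callable[[str], bool] = exists_on_pypi) -> dict[str, list[str]]:
    """Sort newly added dependencies into approved, needs-review, and nonexistent."""
    report: dict[str, list[str]] = {"approved": [], "needs_review": [], "does_not_exist": []}
    for name in names:
        if normalize(name) in approved:  # approved entries assumed already normalized
            report["approved"].append(name)
        elif exists(name):
            report["needs_review"].append(name)  # real package, but not vetted
        else:
            report["does_not_exist"].append(name)  # likely hallucinated: block the PR
    return report
```

Wired into the SCA step, the `does_not_exist` bucket would map to a blocking finding, and `needs_review` would route to the security escalation path.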

Enterprise Evaluation Checklist

Use this when evaluating AI code review vendors for enterprise deployment:

Security and compliance

[ ] SOC 2 Type II certified (not just in progress)

[ ] HIPAA compliant (if healthcare or handling PHI)

[ ] Clear data processing agreement available

[ ] Source code not used for model training

[ ] On-premises or hybrid deployment available

[ ] BYOLLM option for organizations with LLM provider requirements

Platform and integration

[ ] GitHub support (cloud and enterprise server)

[ ] GitLab support (cloud and self-hosted)

[ ] Bitbucket support

[ ] Azure DevOps support

[ ] CI/CD pipeline integration (GitHub Actions, GitLab CI, Jenkins, Azure Pipelines)

[ ] SIEM integration for security finding export (Splunk, Datadog, etc.)

[ ] Jira/Linear/Azure Boards integration for ticket creation

Policy and administration

[ ] Org-level quality gate configuration

[ ] Team-level policy inheritance and override

[ ] RBAC with granular permission levels

[ ] Audit logs for all configuration changes

[ ] Bulk repository onboarding (not one-by-one)

[ ] API access for programmatic configuration

Review capabilities

[ ] SAST across all languages in your stack

[ ] Secrets detection

[ ] IaC scanning (Terraform, K8s, Docker)

[ ] SCA / vulnerable dependency detection

[ ] AI-native review with full codebase context

[ ] Steps of Reproduction for flagged issues

[ ] Auto-fix suggestions

Commercial terms

[ ] Per-user pricing (predictable at scale vs. per-LoC)

[ ] Enterprise MSA with appropriate indemnification

[ ] SLA commitments in writing

[ ] Dedicated CSM for accounts above threshold

[ ] Multi-year pricing available

How Enterprises are Deploying CodeAnt AI

Three customer deployments illustrate different enterprise use cases:

Commvault: 800+ developers, 3.5 days → under 1 minute review turnaround. Commvault deployed CodeAnt AI across a large engineering org and reduced mean PR review time from 3.5 days to under 1 minute. The platform automated the first-pass review across their entire codebase, freeing senior engineers to focus on architectural and business-logic review instead of mechanical issue-finding. 25% of merge requests now complete with AI-only review and no human intervention required.

Bajaj Finserv: 300+ developers, replaced SonarQube. Bajaj Finserv Health evaluated CodeAnt AI as a replacement for SonarQube. The decision was driven by two factors: SonarQube's lines-of-code pricing model was becoming unpredictable as AI-generated code increased codebase volume, and SonarQube's rule-based approach wasn't catching the logic errors and context violations in AI-generated code. CodeAnt AI replaced SonarQube while adding AI-native review on top of deterministic scanning, at a predictable per-developer price.

Akasa Air: 1M+ lines of code secured. Akasa Air used CodeAnt AI to audit and remediate security issues across a large existing codebase, surfacing 900+ security issues and 100,000+ quality issues. The platform provided the full-codebase context needed to understand which issues were critical vs. acceptable risk, and Steps of Reproduction to verify and reproduce each finding before remediation.

Getting Started With Enterprise Deployment

AI-assisted coding is accelerating how fast teams can ship software. But it is also increasing the volume, complexity, and risk profile of pull requests moving through enterprise codebases every day.

What enterprises need now is not just another review bot. They need a centralized quality gate that works across the entire organization.

An effective enterprise AI code review platform should deliver:

• Automated first-pass review on every pull request

• Security scanning across vulnerabilities, secrets, and dependencies

• Context-aware analysis that catches logic errors and architectural drift

• Centralized governance with org-wide quality policies

• Consistent enforcement across GitHub, GitLab, Azure DevOps, and Bitbucket

Start with a small pilot on one team and one repository. Measure the impact on review time, defect detection, and developer adoption.

👉 Try CodeAnt AI with a 14-day free trial! No credit card required, and it installs in minutes across GitHub, GitLab, Bitbucket, or Azure DevOps.

FAQs

What is enterprise AI code review?

Why do enterprises need AI code review?

How is enterprise AI code review different from startup tools?

Can AI code review replace manual code review?

What should enterprises evaluate when selecting an AI code review platform?