TL;DR

AI coding tools have created a paradox: your team ships 98% more PRs, but review time has increased 91%. The bottleneck has shifted from writing code to reviewing it.

The best overall platform for most teams is CodeAnt AI. It's the only tool that bundles AI code review, SAST, secrets detection, IaC security, and DORA metrics in one product, at $24/user/month, across all four git platforms (GitHub, GitLab, Bitbucket, Azure DevOps).

If you're GitHub-only and already paying for Copilot, GitHub Copilot Code Review is zero-friction, but it's shallow and won't replace a dedicated tool.

Tools like Greptile, Cursor BugBot, and Macroscope are worth watching, but they support only GitHub (plus GitLab, in the case of Greptile and BugBot) and lack bundled security scanning.

Nobody is winning on signal-to-noise yet: false positives are still the #1 complaint across every tool in this list.

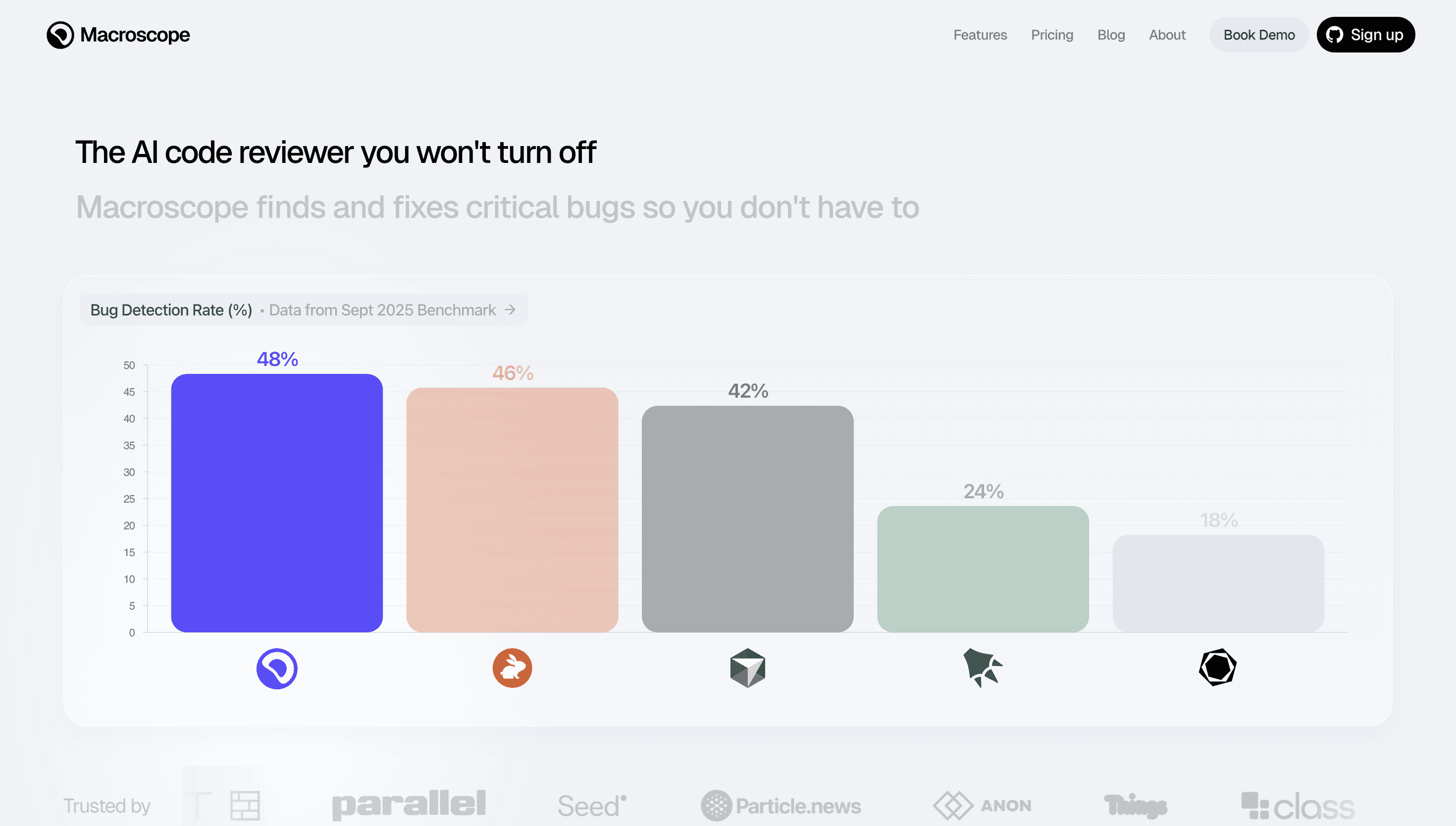

For the first three years of AI code review tools, every benchmark declared the publishing vendor the winner. Greptile published benchmarks where Greptile won. CodeRabbit published benchmarks where CodeRabbit won. This made evaluating tools genuinely difficult for engineering teams trying to make an honest purchasing decision.

That changed in February 2026. Martian, a research lab built by researchers from DeepMind, Anthropic, and Meta, and notably not in the business of selling code review tools, published the first independent benchmark for AI code review agents. They tested 17 tools across 300,000 real pull requests from open-source repositories, measuring which review comments developers actually acted on. They open-sourced the dataset, the judge prompts, the evaluation pipeline, and the methodology. Anyone can reproduce the results.

This guide uses the Martian benchmark results alongside current pricing, platform coverage, and security capabilities to rank the 10 best AI code review tools in 2026. No vendor funded this comparison.

Who This Guide Is For

This guide is for engineering managers, CTOs, and senior developers evaluating AI code review tools in 2026. If you are asking "which tool actually catches real bugs vs which tool generates the most noise," this guide answers that using independent benchmark data. If you want a quick answer: CodeAnt AI is the best overall platform for teams that need AI review plus security in one tool. CodeRabbit is the fastest for pure PR summaries. Greptile is the deepest for complex multi-service architectures. SonarQube is the most reliable for compliance-driven regulated industries.

The Benchmark Problem, and How It Was Solved

There is a benchmark problem in this category: almost every published comparison shows the publishing vendor winning. This is not coincidence; it is selection bias. Vendors choose evaluation criteria that favour their own architecture.

The Martian Code Review Bench solves this with two complementary systems. The online benchmark monitors open-source pull requests directly: for each review comment a tool generates, it asks whether the developer modified the code after the comment. It measures real developer decisions, not curated datasets. The offline benchmark uses a panel of expert human reviewers to evaluate comment quality on a controlled set of PRs. Together they measure precision (are comments accurate?), recall (are real issues being caught?), and F1 score (the balance between the two).

Across 300,000 pull requests, the benchmark reveals something important: most AI code review tools are optimised for precision or recall, but not both. High-precision tools post fewer comments but catch less. High-recall tools catch more but flood developers with noise. F1 score, the harmonic mean, is the honest measure of which tools are actually useful in production.
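To make the precision/recall trade-off concrete, here is a minimal sketch of how the three scores relate. The `ReviewCounts` schema and the numbers are invented for illustration; they are not Martian's data model or benchmark results.

```python
from dataclasses import dataclass


@dataclass
class ReviewCounts:
    """Counts for one tool on a labelled set of PRs (hypothetical schema)."""
    true_positives: int   # comments that flagged a real issue
    false_positives: int  # comments that were noise or wrong
    false_negatives: int  # real issues the tool never commented on


def precision(c: ReviewCounts) -> float:
    """Share of posted comments that were correct."""
    posted = c.true_positives + c.false_positives
    return c.true_positives / posted if posted else 0.0


def recall(c: ReviewCounts) -> float:
    """Share of real issues that received a comment."""
    real = c.true_positives + c.false_negatives
    return c.true_positives / real if real else 0.0


def f1(c: ReviewCounts) -> float:
    """Harmonic mean of precision and recall."""
    p, r = precision(c), recall(c)
    return 2 * p * r / (p + r) if (p + r) else 0.0


# Illustrative numbers only, not benchmark results.
quiet_tool = ReviewCounts(true_positives=18, false_positives=2, false_negatives=82)
noisy_tool = ReviewCounts(true_positives=80, false_positives=320, false_negatives=20)

for name, counts in [("quiet", quiet_tool), ("noisy", noisy_tool)]:
    print(f"{name}: P={precision(counts):.2f} R={recall(counts):.2f} F1={f1(counts):.2f}")
```

In this made-up example the quiet tool is right 90% of the time but finds only 18% of the issues, while the noisy tool finds 80% of the issues but is right only 20% of the time. Both land near an F1 of 0.3, which is why neither extreme scores well on the combined measure.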

The Problem Nobody Warned You About

You invested in AI coding tools. Your engineers are writing code faster. Commits are up. PRs are up, way up.

And somehow, your team is slower than it was 18 months ago.

This is not a hypothetical.

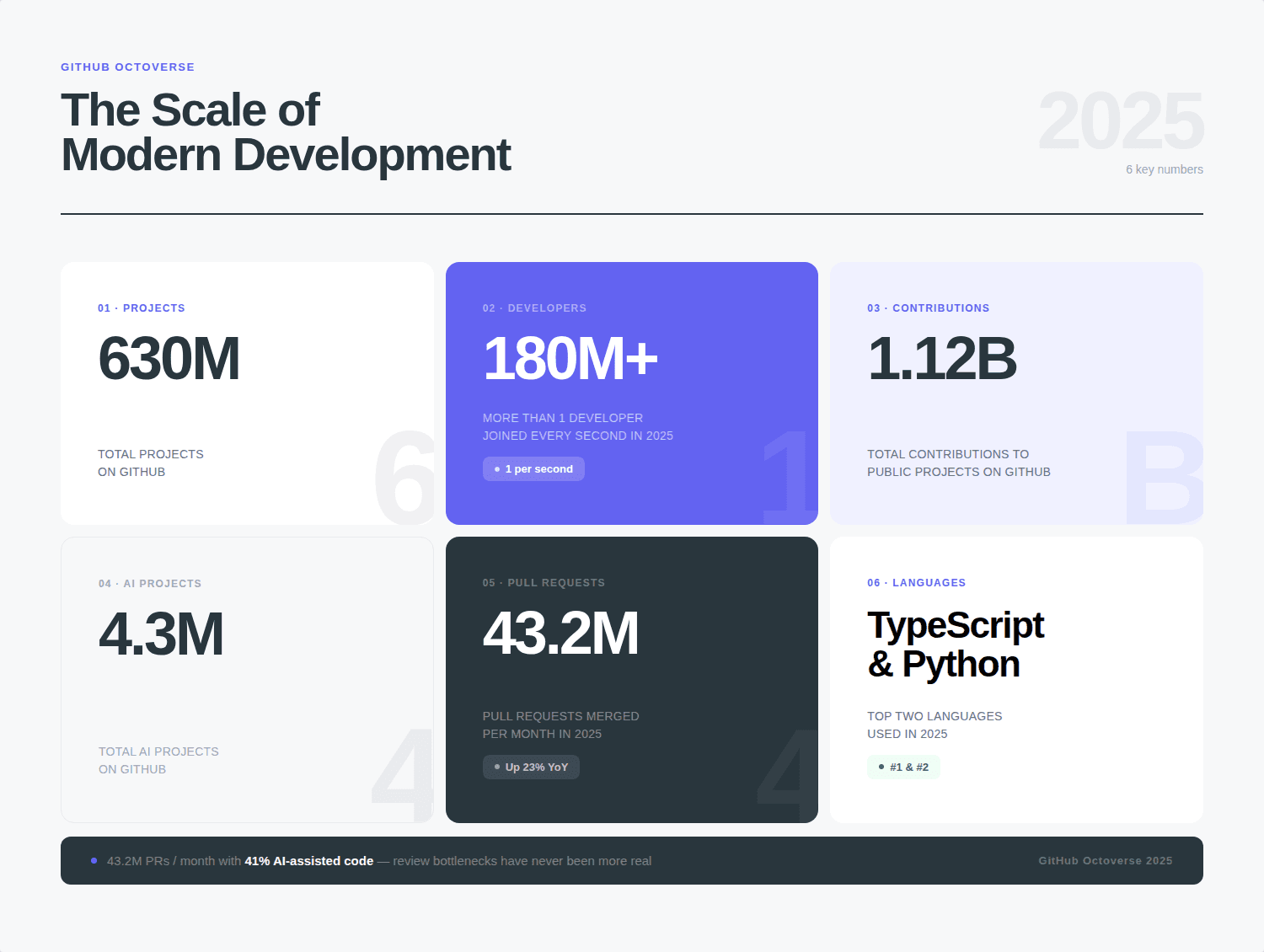

Faros AI tracked 10,000+ developers across 1,255 teams and found something uncomfortable: teams using AI coding tools completed 21% more tasks and merged 98% more pull requests, but PR review time increased 91%. The bottleneck didn't disappear. It moved.

GitClear's 2025 analysis of 211 million changed lines made it worse. AI-assisted code has nearly doubled code churn (code revised within two weeks jumped from 3.1% to 5.7%), and copy/paste code increased 48% between 2020 and 2024. The PRs your team is now reviewing are larger, messier, and more likely to contain subtle logic errors than anything they reviewed before.

GitHub's Octoverse 2025 confirms the scale: 43.2 million PRs merged monthly, up 23% year-over-year, with roughly 41% of new code now AI-assisted.

And CodeRabbit's own analysis found that AI-generated code produces 1.7x more issues per PR than human-written code, with logic errors up 75% and security vulnerabilities rising 1.5–2x.

The answer the industry landed on: AI should also review the code it's generating.

That's the category this article covers: not IDE autocomplete, not AI test generation, but the tools that sit in your PR workflow and decide what's worth your engineers' attention before they ever open a diff.

Here's the honest take on ten of them.

What "AI Code Review" Actually Means (and the Three Tiers You Need to Know)

AI code review is the automated analysis of code changes, typically at the pull request stage, using large language models, static analysis engines, or both, to detect bugs, security vulnerabilities, performance issues, and deviations from team standards before human reviewers spend time on them.

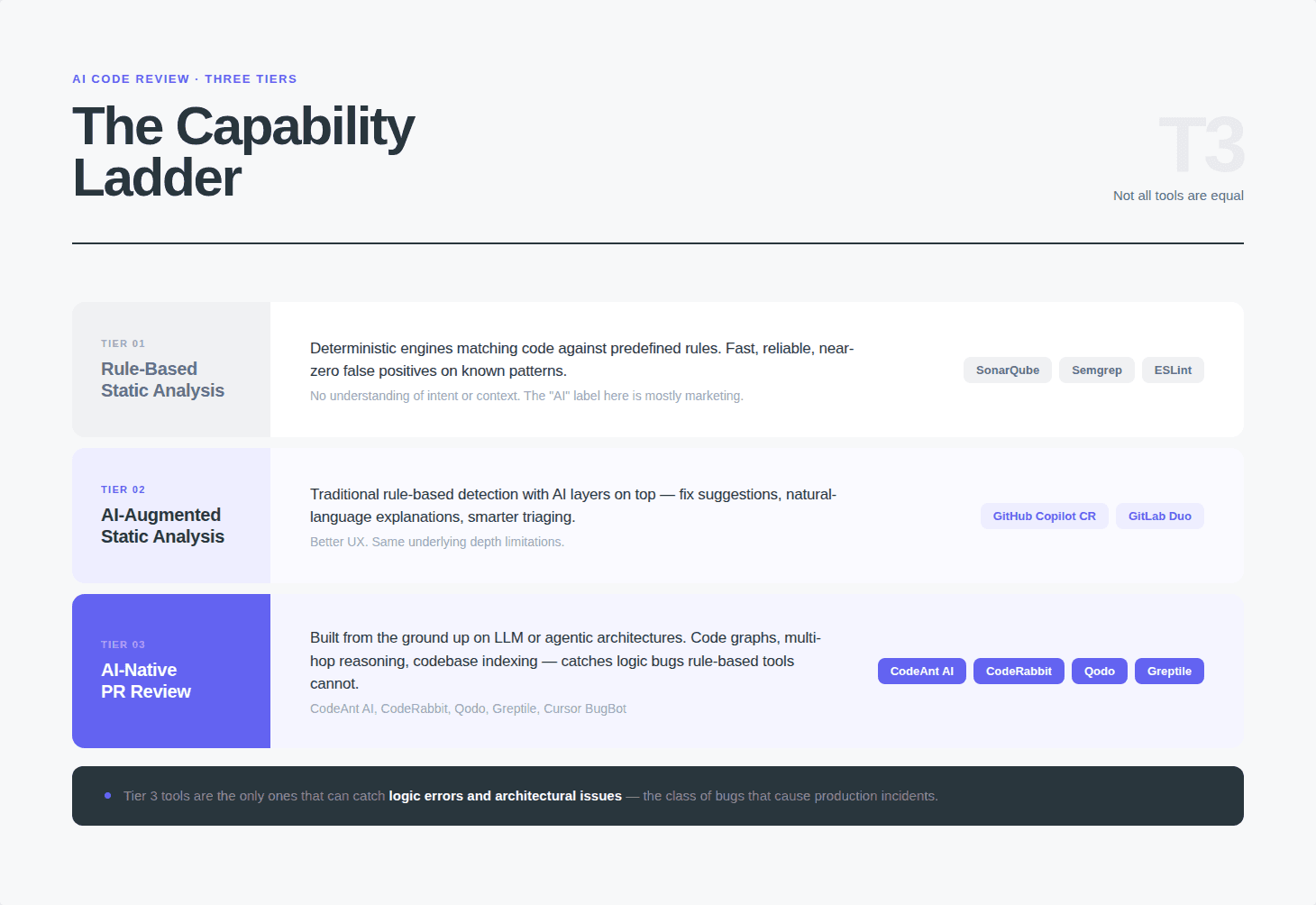

Not all tools in this category do the same thing. Before comparing them, you need to understand the three tiers:

Tier 1: Rule-Based Static Analysis (Traditional SAST)

Deterministic engines that match code against predefined rules and vulnerability patterns. Fast, reliable, near-zero false positives on known vulnerability classes. No understanding of intent or context. SonarQube is the canonical example. These tools have existed since the 1970s; the AI label applied to them is mostly marketing.

Tier 2: AI-Augmented Static Analysis

Traditional tools with AI layers bolted on. The detection engine is still rule-based; AI adds fix suggestions, natural-language explanations, or smarter issue triaging. GitHub Copilot Code Review and GitLab Duo are the most prominent examples. Better UX, same underlying depth limitations.

Tier 3: AI-Native PR Review Platforms

Built from the ground up with LLM or agentic architectures. Use code graphs, multi-hop reasoning, codebase indexing, or multi-pass review pipelines to detect logic bugs and architectural issues that rule-based tools cannot catch. CodeAnt AI, CodeRabbit, Qodo, Greptile, Cursor BugBot, and Macroscope live here.

The tier matters because teams often compare tools across tiers without realizing they're not solving the same problem. SonarQube won't catch the logic bug in your authentication flow. CodeRabbit won't give you the OWASP-mapped compliance report your enterprise auditor is asking for. The decision framework at the end of this article maps which tool belongs in which situation.

How We Evaluated These Tools

Every tool in this article was evaluated across five dimensions:

Review quality: Does it catch real bugs, or just style issues a linter would find?

Signal-to-noise ratio: What percentage of comments are actually worth acting on?

Platform coverage: GitHub only, or GitHub + GitLab + Bitbucket + Azure DevOps?

Security bundling: Are SAST, secrets detection, and IaC scanning included?

Total cost of ownership: What does a 20-person engineering team actually pay?

Where independent benchmarks exist, we've cited them. Where only vendor-published benchmarks exist, we've flagged that clearly. And where community feedback (Reddit, HN, G2, Dev.to) contradicts vendor marketing, we've included both.

One important caveat: the Martian Code Review Bench, the closest thing to an independent benchmark in this space, run by researchers from DeepMind, Anthropic, and Meta, was only launched in February 2026 and currently covers around 300,000 real-world PRs. It doesn't include CodeAnt AI. No benchmark is definitive yet.

The 10 Best AI Code Review Tools in 2026

| Tool | Martian F1 | Free tier | Starting price | Platform coverage | Best for |
|---|---|---|---|---|---|
| CodeAnt AI | #3 (51.7%) | 14-day trial | $24/user/month | GitHub, GitLab, Bitbucket, Azure DevOps | AI review + SAST + security in one |
| CodeRabbit | Top 5 | Yes (limited) | $24/user/month | GitHub, GitLab, Bitbucket, Azure DevOps | Fast PR summaries, low noise |
| Qodo Merge | Top 5 (60.1% F1 own bench) | Yes (limited) | $30/user/month | GitHub, GitLab, Bitbucket, Azure DevOps | PR analysis + test generation |
| Greptile | Not submitted | No | $30/user/month | GitHub, GitLab | Deep multi-repo architecture review |
| GitHub Copilot | Included | Yes (code review not included) | $10/user/month | GitHub, VS Code, JetBrains | GitHub-native, widest IDE coverage |
| Cursor BugBot | Not submitted | No | $40/user/month add-on | GitHub, GitLab (requires a Cursor subscription) | Lowest noise, Cursor ecosystem |
| Macroscope | Top results | No | $30/developer/month + usage | GitHub only | 98% self-reported precision, ultra-low noise |
| SonarQube | N/A (rule-based) | Community Build | $32/month (Cloud Team) | All major platforms | Deterministic SAST, compliance |
| Graphite | Not submitted | Yes | $20/user/month | GitHub only | Stacked PRs, high-velocity teams |
| Snyk Code | N/A (security-focused) | Yes (100 tests) | ~$25/user/month | All major platforms | Security-first, AppSec coverage |

Martian benchmark rankings are based on February 2026 results across 300,000 real PRs. Not all tools were submitted. Pricing was verified in March 2026; check each vendor's page before purchasing.

1. CodeAnt AI: Best Overall for Enterprises That Want One Tool

Best for: Engineering teams that want to replace their fragmented stack (review tool + SAST tool + developer metrics tool) with a single platform.

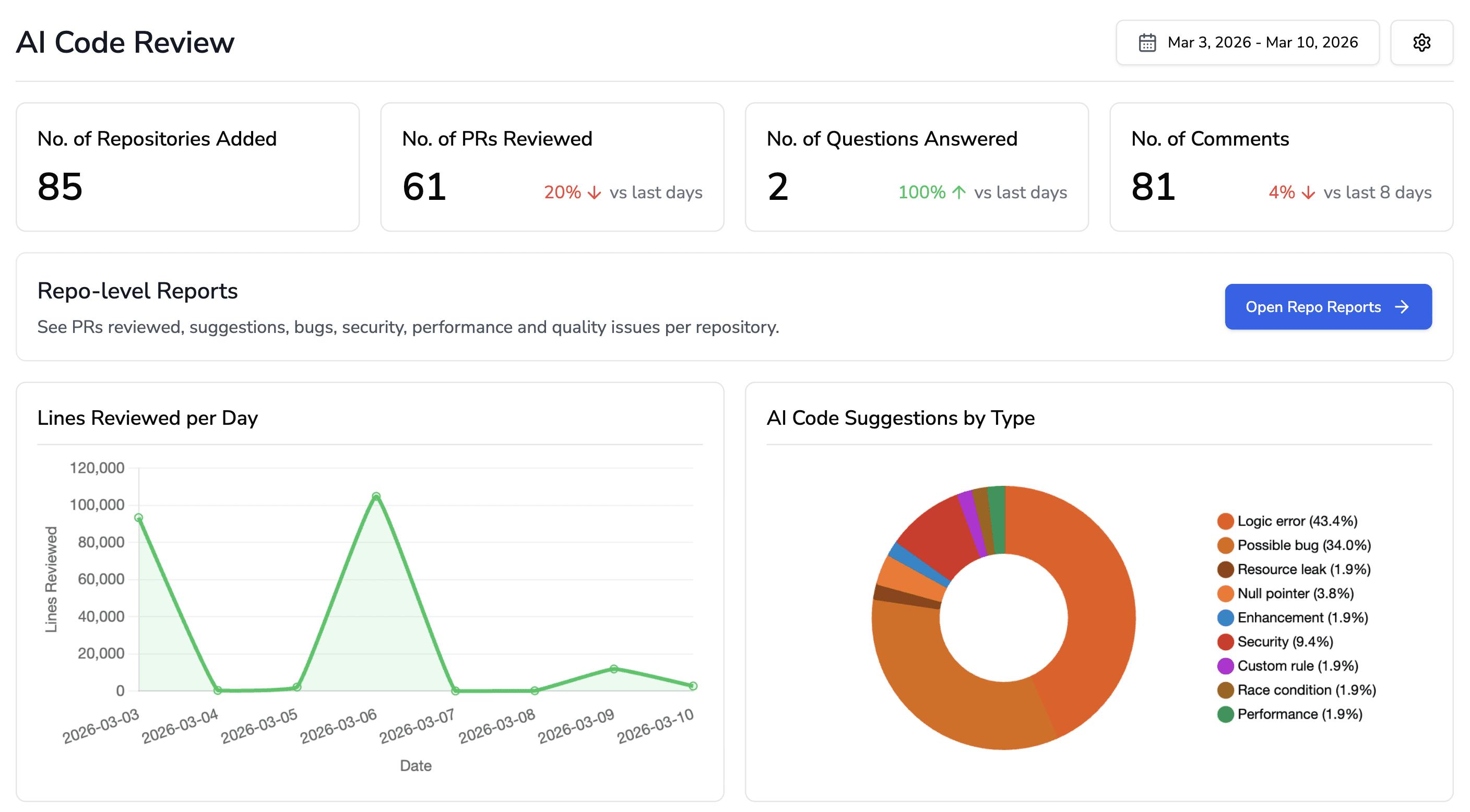

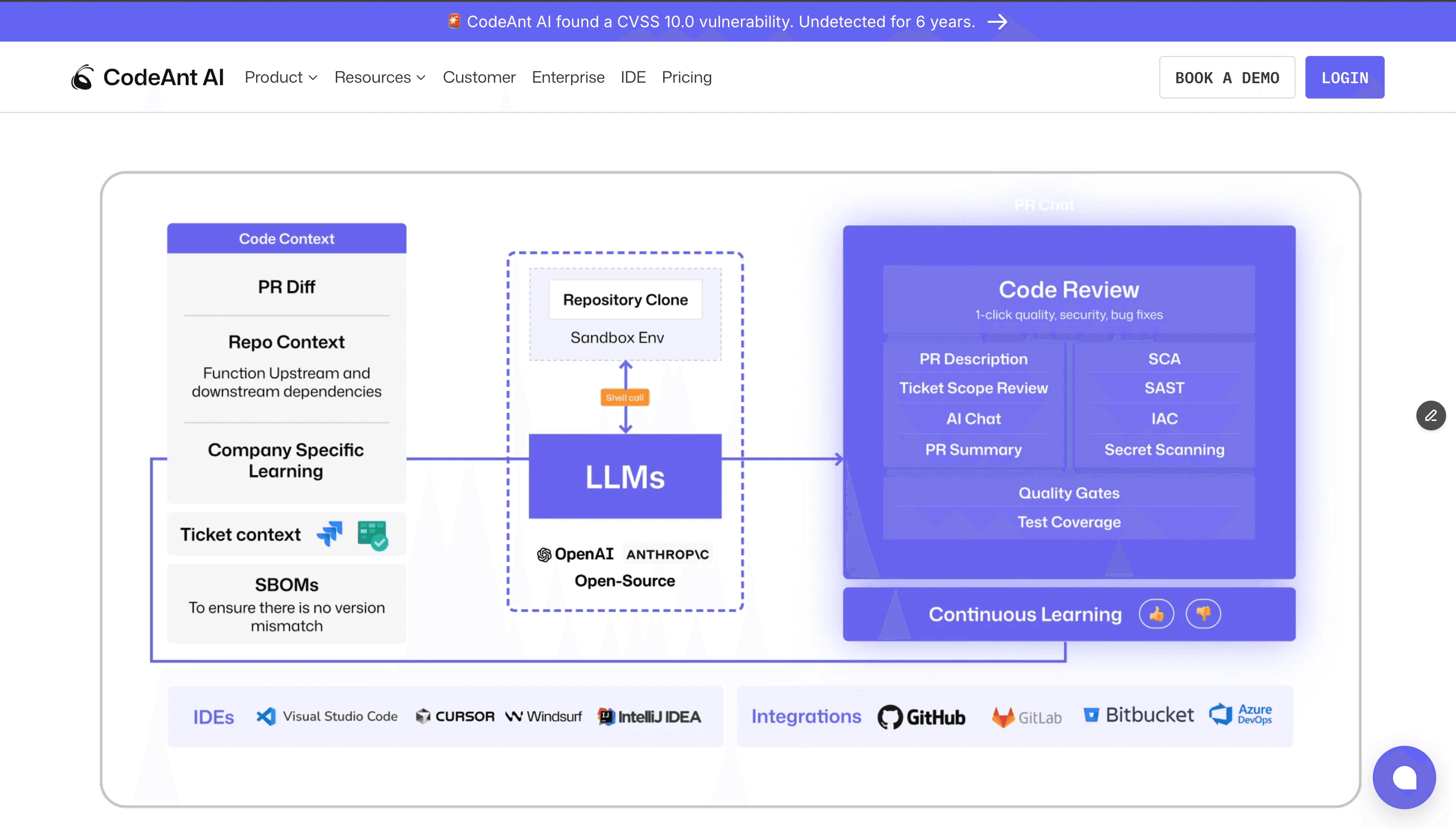

If you've ever tried to get four different security and review tools to play nicely in the same CI/CD pipeline, you understand the problem CodeAnt AI is solving. It's the only tool in this category that bundles AI-powered pull request reviews, SAST, secrets detection, IaC scanning, SCA (software composition analysis), and DORA metrics in a single product, across all four major git platforms.

How it works: CodeAnt AI sits in your PR workflow and runs review across the full codebase context, not just the diff. When it finds an issue, it doesn't just flag it; it provides one-click auto-fixes for roughly 80% of findings.

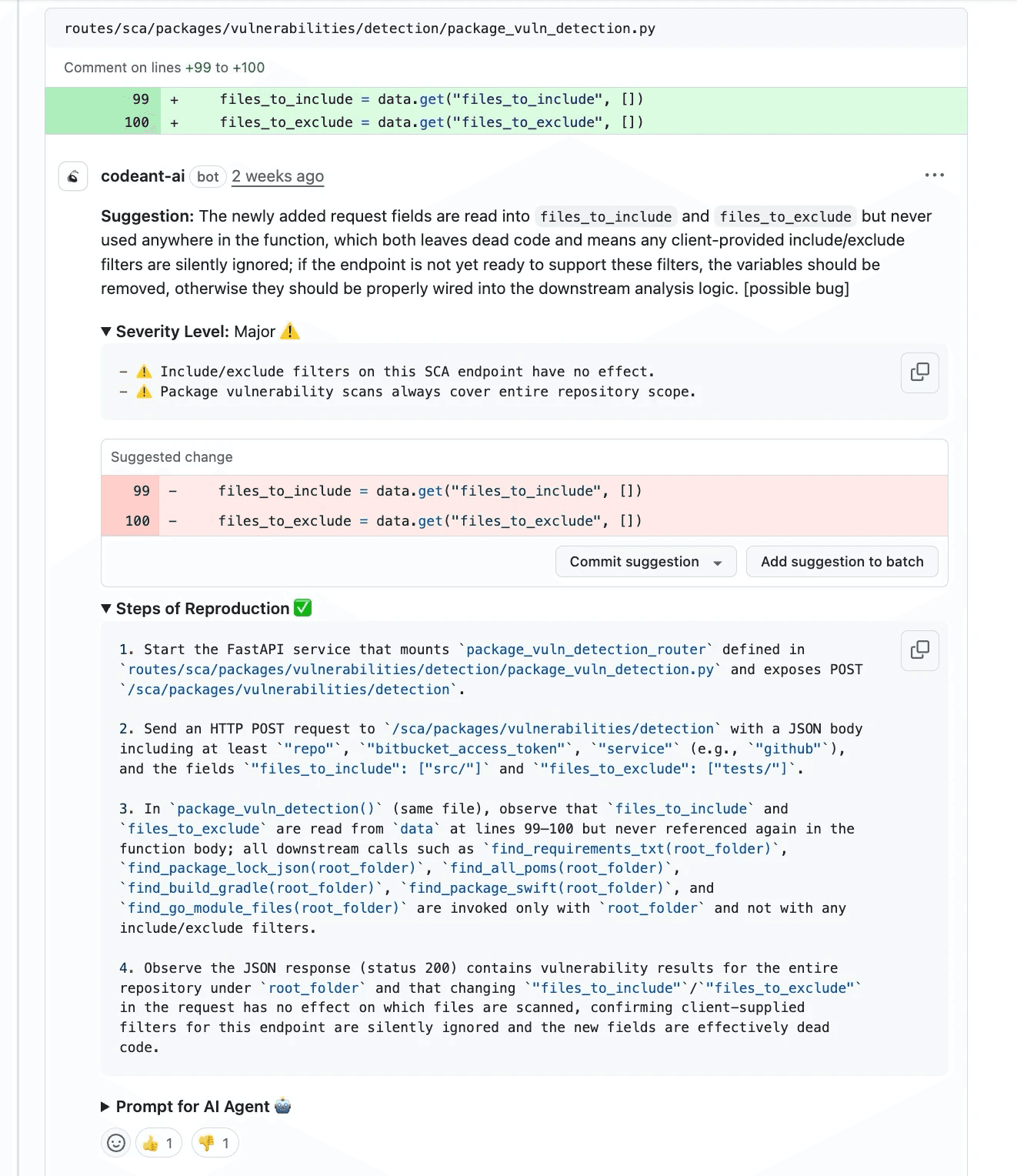

For bugs, it includes Steps of Reproduction: exact input conditions, execution path, and expected vs. actual behavior so engineers can verify the issue without back-and-forth.

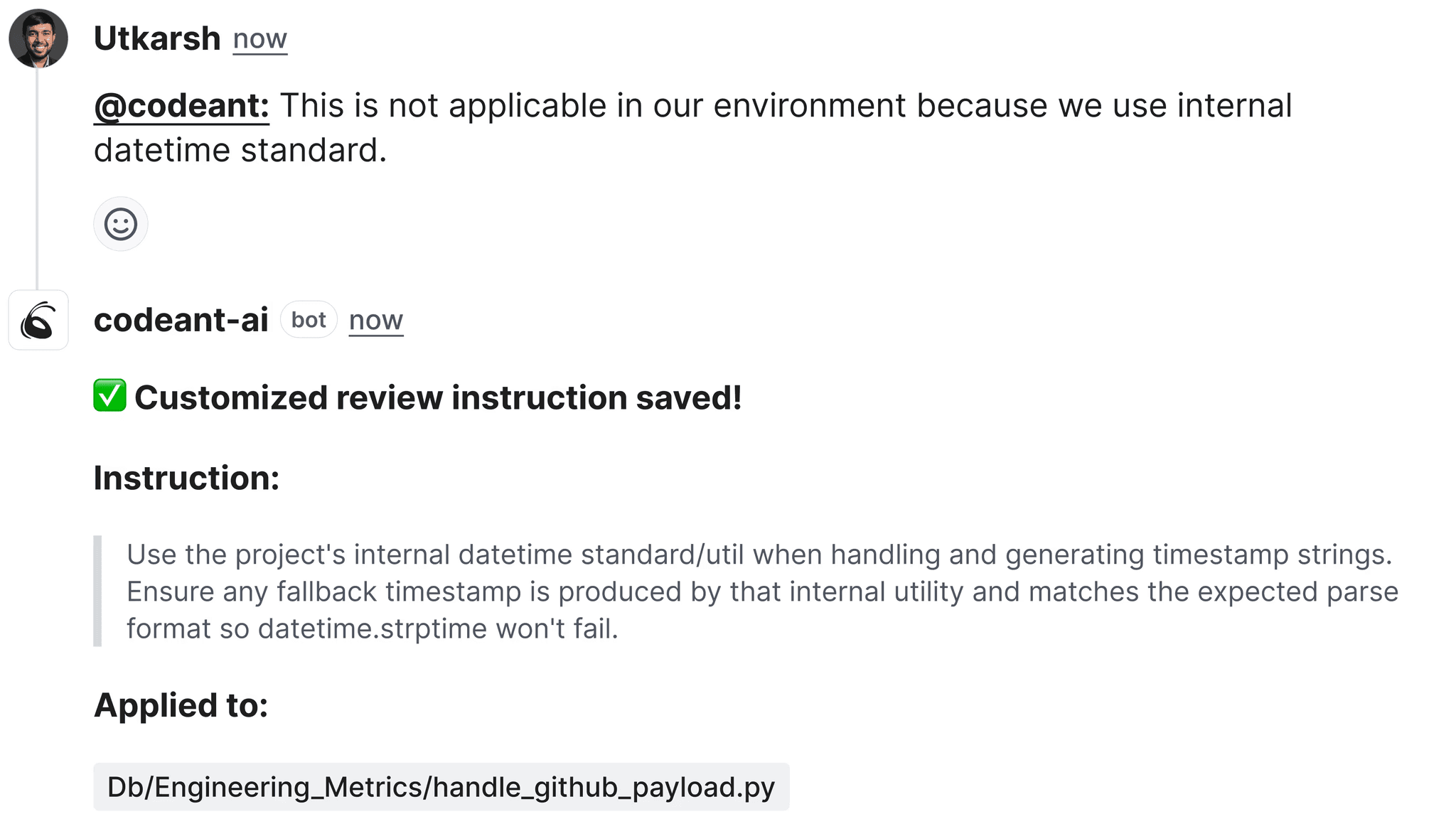

Steps of Reproduction: A CodeAnt AI Exclusive

Most tools tell you what is wrong. CodeAnt AI tells you how to trigger it. Each flagged issue includes step-by-step reproduction instructions: the inputs that cause the problem, the execution path, and the expected output vs. what actually happens. This eliminates the review cycle where someone marks a comment "can't reproduce" and the discussion goes cold for three days. No other tool on this list provides this consistently.

The security layer is genuinely comprehensive: SQL injection, XSS, insecure APIs, exposed credentials, Kubernetes/Docker YAML misconfigurations, end-of-life dependency detection, SBOM generation, and security quality gates that can block PRs with critical findings. The DORA metrics dashboard tracks all four core metrics plus extended ones:

First Review Time

Approval-to-Merge Time

Commit-to-MR Creation Time

… with org-wide visibility and PDF/CSV export for leadership.
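As a rough illustration of what the extended review metrics measure, the sketch below computes them from PR event timestamps. The record layout, field names, and the interval definitions (for example, First Review Time as the gap between opening a PR and its first review) are assumptions made for this example, not CodeAnt AI's schema or formulas.

```python
from datetime import datetime, timedelta
from statistics import median

# Hypothetical PR event timestamps; the field names are illustrative only.
PRS = [
    {"first_commit": "2026-03-02T09:00:00", "opened": "2026-03-02T11:00:00",
     "first_review": "2026-03-02T15:00:00", "approved": "2026-03-03T10:00:00",
     "merged": "2026-03-03T10:30:00"},
    {"first_commit": "2026-03-03T08:00:00", "opened": "2026-03-03T09:00:00",
     "first_review": "2026-03-03T10:00:00", "approved": "2026-03-03T12:00:00",
     "merged": "2026-03-03T14:00:00"},
    {"first_commit": "2026-03-04T10:00:00", "opened": "2026-03-04T16:00:00",
     "first_review": "2026-03-05T16:00:00", "approved": "2026-03-05T17:00:00",
     "merged": "2026-03-05T18:00:00"},
]


def gap(pr: dict, start: str, end: str) -> timedelta:
    """Elapsed time between two named events on one PR."""
    return datetime.fromisoformat(pr[end]) - datetime.fromisoformat(pr[start])


def review_metrics(prs: list[dict]) -> dict[str, timedelta]:
    """Median of each interval across the given PRs."""
    spans = {
        "commit_to_pr_creation": ("first_commit", "opened"),
        "first_review_time": ("opened", "first_review"),
        "approval_to_merge_time": ("approved", "merged"),
    }
    return {
        name: median(gap(pr, start, end) for pr in prs)
        for name, (start, end) in spans.items()
    }


for name, value in review_metrics(PRS).items():
    print(f"{name}: {value}")
```

The median is used here rather than the mean so that one PR that sat unreviewed over a weekend does not dominate the number; a real dashboard may aggregate differently.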

Who's using it:

Commvault (NASDAQ: CVLT, 800+ engineers) achieved a 98% reduction in code review time after deploying CodeAnt AI in an air-gapped on-prem environment.

Bajaj Finserv Health replaced SonarQube entirely: "We replaced SonarQube, cut review time from hours to seconds, and now pay a flat per-developer price, all without leaving Azure DevOps."

Akasa Air secured 1M+ lines of aviation code across all GitHub repositories.

Platform support:

GitHub ✅ (including GHES + GitHub Marketplace)

GitLab ✅

Bitbucket ✅ (Atlassian Marketplace)

Azure DevOps ✅ (VS Marketplace + Azure Boards + Azure Pipelines)

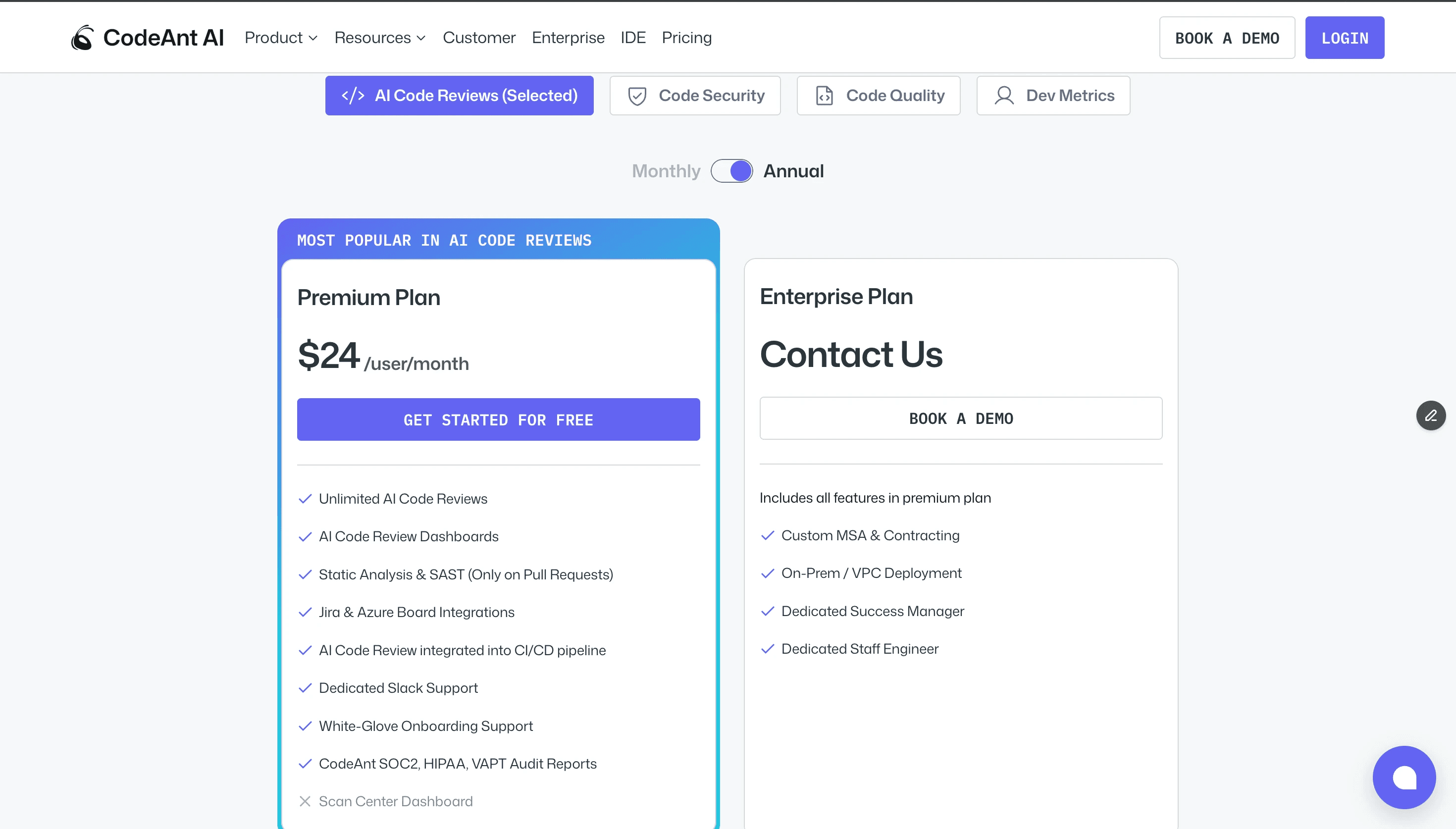

Pricing (codeant.ai/pricing):

Premium: $24/user/month, unlimited AI reviews, SAST, dashboards, Jira integration, CI/CD, SOC 2/HIPAA audit reports, white-glove onboarding

Enterprise: Contact sales, on-prem/VPC/air-gapped deployment, dedicated success manager

14-day free trial, no credit card required. 100% off for open-source projects.

Looking specifically at GitHub setups? See our guide on the best AI code review tools for GitHub CI/CD pipelines, and the best tools on the GitHub Marketplace.

Want to see CodeAnt AI on your codebase? Start your free 14-day trial →

2. CodeRabbit: Best for Breadth and Benchmark-Validated Quality

Best for: Teams wanting fast setup, a generous free tier, and the widest platform coverage.

CodeRabbit is the most widely adopted AI code review tool by install count: over 2 million repositories and 13 million PRs processed. It's the only tool in this category that consistently shows up in independent benchmarks, and those results are strong.

How it works: CodeRabbit clones your repository into a secure sandbox and builds a code graph of file relationships. It analyzes the PR diff within that full context, pulling in Jira ticket descriptions, relevant code graph nodes, past PR history, and user-defined "Learnings." It runs 40+ linters and SAST tools with zero configuration (ESLint, Semgrep, and more), and runs verification scripts to validate its comments before posting. A "Chill vs. Assertive" review mode lets teams tune verbosity.

What makes it genuinely useful: The PR walkthroughs. CodeRabbit generates a structured summary of every PR, what changed, why it matters, what the review found, in a format that saves 10–15 minutes of context-loading per reviewer. For large teams processing dozens of PRs daily, this compounds fast.

The noise problem: CodeRabbit's biggest complaint across G2 reviews, Reddit, and the DevTools Academy 2025 state-of-the-art report is verbosity. A Lychee project audit found 28% of CodeRabbit's comments were noise or incorrect assumptions. This is improving (the Learnings system suppresses repeated false positives), but it's not solved.

Platform support:

GitHub ✅

GitLab ✅

Bitbucket ✅

Azure DevOps ✅

Plus VS Code, Cursor, and Windsurf IDE extensions

Pricing:

Free: PR summaries, unlimited repos, rate-limited (200 files/hr, 4 reviews/hr)

Pro: $24/dev/month (annual), unlimited reviews, Jira/Linear integration, 40+ SAST tools

Enterprise: Custom (~$15K+/month for 500+ seats via AWS Marketplace)

Honest verdict: If you want benchmark-validated quality, broad platform coverage, and a functional free tier to evaluate before committing, CodeRabbit is the safest starting point. The noise issue is real but manageable. Customer support complaints on G2 are consistent enough to mention: if your team needs hands-on onboarding, look elsewhere.

Check out this CodeRabbit alternative.

3. Qodo: Best for Enterprise Multi-Repo Context

Best for: Enterprise teams managing complex multi-repo codebases where cross-repository dependency analysis matters.

Qodo (formerly CodiumAI) distinguishes itself by focusing on test generation and behavior analysis. It doesn't just review code; it helps developers write tests to verify that their code works as intended. This is critical for backend APIs where regression testing is mandatory.

How it works: Qodo is a multi-product platform:

Qodo Gen (IDE)

Qodo Merge (PR agent, built on the open-source PR-Agent)

Qodo Command (CLI)

The differentiator at Enterprise tier is the Context Engine (Qodo Aware), a RAG-powered system that indexes your entire codebase across multiple repositories, tracking cross-repo dependencies and surfacing relevant context from anywhere in your stack when reviewing a PR. If a change in Service A will break an interface in Service B, Qodo Enterprise catches it.

Platform support:

GitHub ✅

GitLab ✅

Bitbucket ✅ (Cloud + Data Center)

Azure DevOps ✅

plus Gitea and CodeCommit, the broadest platform support of any tool in this list

Pricing:

Developer: Free, 30 PRs/month (promotional, currently unlimited), 75 IDE credits

Teams: $30/user/month (annual), unlimited PRs (promo), 2,500 IDE credits

Enterprise: Contact sales, multi-repo context engine, SSO, on-prem, proprietary model support

The catch: The cross-repo context engine that makes Qodo genuinely different is Enterprise-only. At the Teams tier ($30/user/month), you're getting a capable AI reviewer without that differentiator, and CodeRabbit's free tier is meaningfully competitive for that use case.

Honest verdict: If you're an enterprise with 10+ microservices and a VP of Engineering asking "does our AI review tool understand how these services talk to each other," Qodo Enterprise is the answer. For smaller teams, the Teams plan is solid but not head-and-shoulders above cheaper alternatives.

Check out this Qodo alternative.

4. Greptile: Best for Deep Codebase Analysis

Best for: Teams with large, interconnected codebases where bugs regularly span multiple files and architectural layers.

Greptile takes a different architectural approach than most tools in this list. Rather than reviewing just the diff or even the surrounding file context, it indexes your entire codebase into a language-agnostic graph of functions, classes, variables, and call relationships before reviewing anything. When a PR comes in, Greptile's multi-hop investigation traverses that graph autonomously to understand second and third-order effects of the change.
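The idea of following a change outward through a code graph can be sketched as a bounded breadth-first search over reversed call edges. This is a generic illustration under stated assumptions, not Greptile's implementation; the `CALLS` graph and every symbol name in it are invented.

```python
from collections import deque

# Hypothetical call graph: caller -> callees.
CALLS = {
    "api.checkout": ["billing.charge", "cart.total"],
    "billing.charge": ["billing.apply_tax"],
    "cart.total": ["pricing.round_money"],
    "billing.apply_tax": ["pricing.round_money"],
    "reports.monthly": ["billing.apply_tax"],
}


def reverse_edges(calls: dict[str, list[str]]) -> dict[str, set[str]]:
    """Map each callee to the set of functions that call it."""
    callers: dict[str, set[str]] = {}
    for caller, callees in calls.items():
        for callee in callees:
            callers.setdefault(callee, set()).add(caller)
    return callers


def impacted(changed: str, calls: dict[str, list[str]], max_hops: int = 3) -> dict[str, int]:
    """Return {symbol: hops} for every caller reachable within max_hops."""
    callers = reverse_edges(calls)
    seen = {changed: 0}
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        if seen[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for caller in sorted(callers.get(node, ())):
            if caller not in seen:
                seen[caller] = seen[node] + 1
                queue.append(caller)
    del seen[changed]  # report only the affected callers
    return seen


print(impacted("pricing.round_money", CALLS))
```

A diff-only reviewer sees just `pricing.round_money`; the traversal also surfaces `billing.charge`, `reports.monthly`, and `api.checkout` two hops away, which is the kind of second-order effect the paragraph above describes.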

Platform support:

GitHub ✅ (including GHES)

GitLab ✅

Bitbucket ❌

Azure DevOps ❌

No IDE integration

If your team is on Bitbucket or Azure DevOps, Greptile isn't an option today.

Pricing:

Standard: $30/dev/month, 50 reviews/month, then $1/review overage

Enterprise: Custom, self-hosted, air-gapped, custom AI models

100% off for open source, 50% off for startups. No free tier for private repos.

What it doesn't have: No dedicated SAST, no secrets detection, no IaC scanning, no compliance reporting. If security is a hard requirement alongside review, you'll need a separate tool.

Honest verdict: For GitHub + GitLab teams with complex architectures and tolerance for some false positives, Greptile's depth is genuinely differentiating. For teams on other platforms, or teams that need security alongside review, look at CodeAnt AI or CodeRabbit first.

Check out this Greptile alternative.

5. GitHub Copilot Code Review: Best for GitHub Teams Already Paying for Copilot

Best for: GitHub-native teams that want zero-friction AI review without adding a new vendor.

If your team is already on GitHub Copilot, the code review feature is the easiest possible on-ramp: assign Copilot as a reviewer on any PR, and it analyzes the diff and posts comments. No integration work, no new subscription, no configuration. For teams processing lower PR volumes where the cost-per-review doesn't justify a dedicated tool, this is genuinely sufficient.

The depth limitation: Independent testing is consistently lukewarm. Cotera.co's evaluation found 31 of 47 Copilot suggestions would have been caught by ESLint, and 7 were factually wrong. A GitHub Community thread about Copilot reviewing 120 files and generating 1 comment went viral in late 2025. The root issue: Copilot reviews the diff, not the codebase. It has no cross-file context awareness. It also cannot block merges; it's advisory only.

Platform support:

GitHub ✅ (only)

GitLab ❌

Bitbucket ❌

Azure DevOps ❌

Pricing:

Free: code review NOT included

Pro: $10/user/month, code review included, 300 premium requests/month

Business: $19/user/month, code review included

Enterprise: $39/user/month

Each review consumes a premium request; additional at $0.04 each

Honest verdict: Zero friction is real value. But if your team is feeling the PR review bottleneck that opened this article, Copilot code review won't fix it: it's too shallow and too GitHub-locked. Use it as a complement, not a solution.

Check out this GitHub Copilot alternative.

6. GitLab Duo: Best for GitLab-Native DevSecOps

Best for: Organizations running their entire engineering workflow on GitLab who want review, SAST, and vulnerability management in one integrated experience.

GitLab Duo is the AI layer across GitLab's platform: code completion, chat, and, most relevantly here, merge request review. Unlike Copilot (which reads only the diff), Duo Code Review Flow (the agentic version) performs multi-step reasoning with full-file context and custom review instructions stored in .gitlab/duo/mr-review-instructions.yaml. Because the instructions live in the repository, they are versioned, auditable, and reviewable, which is a genuine advantage for compliance teams.

The DevSecOps integration is what differentiates it from everything else on this list: if you're on GitLab Ultimate, you get SAST, DAST, IaC scanning, SCA, fuzz testing, container scanning, and AI-powered vulnerability explanation in a single platform. No third-party integrations needed.

Platform support:

GitLab ✅ (only; GitLab.com, Self-Managed, and Dedicated)

GitHub ❌

Bitbucket ❌

Azure DevOps ❌

Pricing:

Duo Pro add-on: $19/user/month (Premium + Ultimate tiers), review, chat, suggestions

Duo Enterprise add-on: $39/user/month (Ultimate only), advanced AI review, vulnerability resolution

Total real cost: Premium ($29) + Duo Pro ($19) = $48/user/month; Ultimate + Duo Enterprise ≈ $138/user/month

Full SAST/DAST is Ultimate-only; basic secret detection available in all tiers

Honest verdict: For GitLab-committed enterprises that need integrated DevSecOps and can absorb the pricing, Duo is a compelling single-vendor story. For everyone else, the cost and platform lock-in are hard to justify.

7. Graphite: Best for Teams That Want to Fix the Root Cause

Best for: Teams that want to reduce review bottlenecks by making PRs smaller and more reviewable in the first place.

Graphite made a deliberate architectural choice that most AI code review tools didn't: instead of trying to make AI smarter at reviewing large, complex PRs, it makes the PRs smaller. Stacked pull requests, which break a large feature into a series of small, sequential PRs that can each be reviewed on their own, are the core product. The AI reviewer is a complement to that workflow, not the primary value proposition.

Important: Graphite was acquired by Cursor (Anysphere) for $290M+ in December 2025. The product roadmap is now tied to the Cursor ecosystem. If Cursor-dependency is a concern for your procurement team, note it.

Platform support:

GitHub ✅ (only)

GitLab ❌

Bitbucket ❌

Azure DevOps ❌

Pricing:

Hobby: Free, personal repos, limited AI reviews

Starter: $20/user/month (annual), all GitHub org repos, limited AI

Team: $40/user/month (annual), unlimited AI reviews, automations, merge queue

Enterprise: Custom, GHES, SAML/SSO, audit log

Honest verdict: If your review bottleneck stems from PRs being too large and unfocused, Graphite fixes the root cause in a way no AI reviewer can. For teams already running small, focused PRs, the value proposition narrows. GitHub-only is a hard constraint.

Check out this Graphite alternative.

8. Cursor BugBot: Best for Low Noise, High Signal Review

Best for: Teams already embedded in the Cursor IDE ecosystem wanting high-precision review with autofix capabilities.

Cursor BugBot is the most architecturally interesting tool in this list on the signal-to-noise problem. Rather than running one review pass and posting everything it finds, it runs 8 parallel passes with randomized diff order, then uses majority voting to filter comments, only flagging issues that multiple passes independently surface. The theory: one-off LLM hallucinations get voted out; real bugs survive.
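The voting idea can be sketched in a few lines. This is a simplified illustration, not BugBot's code: the `review_pass` callable stands in for an LLM review call, the strict-majority threshold (5 of 8) is an assumption, and the fake reviewer below is scripted so the example is deterministic.

```python
import random
from collections import Counter
from collections.abc import Callable, Iterable, Sequence

Finding = tuple[str, int, str]  # (file, line, issue id): a hypothetical shape


def majority_vote_review(
    diff_hunks: Sequence[str],
    review_pass: Callable[[Sequence[str]], Iterable[Finding]],
    passes: int = 8,
    seed: int = 0,
) -> list[Finding]:
    """Run several passes over shuffled hunks; keep findings most passes agree on."""
    rng = random.Random(seed)
    votes: Counter = Counter()
    for _ in range(passes):
        order = list(diff_hunks)
        rng.shuffle(order)                     # randomize diff order for each pass
        votes.update(set(review_pass(order)))  # at most one vote per finding per pass
    threshold = passes // 2 + 1                # assumption: strict majority
    return sorted(f for f, n in votes.items() if n >= threshold)


def make_fake_reviewer() -> Callable[[Sequence[str]], list[Finding]]:
    """Scripted stand-in for an LLM: one real bug every pass, one spurious hit in 2 of 8."""
    calls = 0

    def review(hunks: Sequence[str]) -> list[Finding]:
        nonlocal calls
        calls += 1
        findings = [("auth.py", 42, "missing-null-check")]
        if calls % 4 == 0:
            findings.append(("utils.py", 7, "imagined-race-condition"))
        return findings

    return review


hunks = ["auth.py@@40", "utils.py@@1", "api.py@@12"]
print(majority_vote_review(hunks, make_fake_reviewer()))
```

The spurious finding collects two votes and is dropped; the real bug collects eight and survives. The trade-off is cost: eight passes means roughly eight times the model calls per PR.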

Platform support:

GitHub ✅

GitLab ✅ (recently added, some webhook installation bugs reported)

Bitbucket ❌

Azure DevOps ❌

Pricing: $40/user/month as an add-on to a Cursor subscription (Cursor Pro or Teams required separately). 14-day free trial. At $40 on top of the Cursor subscription cost, it's the most expensive single-purpose review tool on this list.

What it doesn't have: No dedicated SAST, no secrets detection, no IaC scanning, no compliance reporting. Separation-of-concerns is a legitimate question: Cursor generates the code and then reviews it. The Cursor team acknowledges this; the architecture is designed to be adversarial (different models for generation and review) but it's worth your team discussing.

Honest verdict: Best low-noise AI review available if you're in the Cursor ecosystem and can stomach $40/month. For teams on Bitbucket, Azure DevOps, or not using Cursor as the primary IDE, the platform lock-in is too severe.

9. Macroscope: Best for Precision-First Teams

Best for: Teams that have been burned by noisy AI reviewers and want the highest precision available.

Macroscope (CEO: Kayvon Beykpour, ex-Twitter/Periscope) shipped v3 in February 2026 with a notable claim: 98% precision, meaning 98% of comments it posts are correct and actionable. That's self-reported and not independently verified, but even taking it with appropriate skepticism, the architectural approach is legitimate.

The hybrid: Abstract Syntax Trees (ASTs) build a graph-based codebase representation before anything touches an LLM. That graph feeds into an initial pass with OpenAI o4-mini-high, then a consensus step with Anthropic Opus 4 to verify and filter. The result is fewer comments, higher confidence. Nitpicks dropped 64% in Python and 80% in TypeScript from v2 to v3; comment volume down 22% overall.
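For readers unfamiliar with the AST step, the sketch below uses Python's standard `ast` module to turn source code into a small call graph before any model is involved. It is a toy version of the general technique, not Macroscope's pipeline; the sample source and function names are invented, and the LLM passes are deliberately left out.

```python
import ast

# Invented sample module to analyze.
SOURCE = '''
def load(path):
    return open(path).read()

def parse(text):
    return text.split()

def main(path):
    return parse(load(path))
'''


def call_graph(source: str) -> dict[str, set[str]]:
    """Map each function defined in `source` to the locally defined functions it calls."""
    tree = ast.parse(source)
    functions = [n for n in ast.walk(tree) if isinstance(n, ast.FunctionDef)]
    defined = {fn.name for fn in functions}
    graph: dict[str, set[str]] = {}
    for fn in functions:
        called = {
            node.func.id
            for node in ast.walk(fn)
            if isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
        }
        graph[fn.name] = called & defined  # ignore builtins and method calls
    return graph


print(call_graph(SOURCE))
```

A structure like this gives a reviewer deterministic facts (who calls whom) to hand to a model as context, instead of asking the model to infer relationships from raw text.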

The unique feature is Approvability: Macroscope can autonomously approve low-risk PRs that meet configured criteria, freeing engineers from reviewing boilerplate changes entirely. The companion Fix It For Me feature creates a branch, commits a fix, opens a PR, runs CI, and attempts self-healing if tests fail.

Platform support:

GitHub ✅ (only)

GitLab ❌

Bitbucket ❌

Azure DevOps ❌

Pricing: $30/active developer/month base + usage credits ($0.35/code review, $0.05/commit summary). Base subscription includes credits equal to the monthly cost, roughly 85 reviews/month for a single seat. 14-day free trial. Free for open source.
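The "roughly 85 reviews" figure follows directly from the listed prices. The sketch below reproduces that arithmetic in integer cents; the billing rule in `monthly_bill_cents` (usage beyond the included credits is charged at list rates) is an assumption for illustration, so check Macroscope's pricing page for the actual terms.

```python
BASE_CENTS_PER_SEAT = 3000   # $30 per active developer per month (listed price)
REVIEW_CENTS = 35            # $0.35 per code review (listed price)
SUMMARY_CENTS = 5            # $0.05 per commit summary (listed price)


def included_reviews(seats: int = 1) -> int:
    """Whole reviews covered by the credits bundled with the base subscription."""
    return seats * BASE_CENTS_PER_SEAT // REVIEW_CENTS


def monthly_bill_cents(seats: int, reviews: int, commit_summaries: int = 0) -> int:
    """Assumed rule: pay the base fee, or metered usage if that is higher."""
    base = seats * BASE_CENTS_PER_SEAT
    usage = reviews * REVIEW_CENTS + commit_summaries * SUMMARY_CENTS
    return max(base, usage)


print(included_reviews(1))                 # reviews covered for one seat
print(monthly_bill_cents(1, 100) / 100)    # dollars for one seat running 100 reviews
```

Under these assumptions one seat covers 85 reviews, and a developer who triggers 100 reviews in a month would cost $35 rather than $30.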

Honest verdict: Macroscope is the tool to evaluate if your team's primary frustration is false positives and you're GitHub-only. The precision-first architecture is different and defensible. At roughly six months since its public launch in September 2025, it's still establishing the track record that CodeRabbit or SonarQube have built over years.

10. SonarQube: Best for Compliance-Heavy Enterprises

Best for: Regulated enterprises where deterministic, auditable security scanning is a non-negotiable foundation.

SonarQube is not an AI-native tool. It's a 15-year-old static analysis engine that's added AI capabilities on top, and it's on this list because no honest comparison of code review tools in 2026 can exclude the category incumbent that 7 million developers trust.

Its strength is what AI-native tools cannot reliably replicate:

Deterministic rules: 6,500+ built-in rules across 35+ languages

Compliance mappings to OWASP Top 10, CWE Top 25, PCI DSS, NIST SSDF, CASA, STIG, and MISRA C++:2023

Quality Gates that automatically block merges when critical issues are present
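A merge-blocking quality gate is conceptually simple, which is part of why auditors like it. The sketch below shows the general shape; the severity scale, the `Finding` fields, and the rule names are hypothetical and do not reflect SonarQube's data model or API.

```python
from dataclasses import dataclass

# Hypothetical severity scale, lowest to highest.
SEVERITY_RANK = {"info": 0, "minor": 1, "major": 2, "critical": 3, "blocker": 4}


@dataclass(frozen=True)
class Finding:
    rule: str       # illustrative rule identifier
    severity: str   # one of SEVERITY_RANK's keys
    file: str


def evaluate_gate(findings: list[Finding], block_at: str = "critical") -> tuple[bool, list[Finding]]:
    """Return (passed, blocking_findings) for a deterministic severity threshold."""
    threshold = SEVERITY_RANK[block_at]
    blocking = [f for f in findings if SEVERITY_RANK[f.severity] >= threshold]
    return (not blocking, blocking)


findings = [
    Finding("unused-import", "minor", "app/utils.py"),
    Finding("sql-injection", "critical", "app/db.py"),
]

passed, blocking = evaluate_gate(findings)
print("gate passed" if passed else f"merge blocked by: {[f.rule for f in blocking]}")
```

In a CI job, the same result would typically be turned into a non-zero exit code so the merge check fails. The same inputs always produce the same verdict, which is the property a probabilistic reviewer cannot guarantee.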

The AI CodeFix layer (2025) adds LLM-suggested one-click remediation on top of rule-triggered findings, a meaningful upgrade for developer experience without changing the detection foundation.

Platform support:

GitHub ✅

GitLab ✅

Bitbucket ✅

Azure DevOps ✅

IDE support across VS Code, JetBrains, Eclipse, and Visual Studio.

Pricing:

Community Build (self-managed): Free, 17 languages, open-source

SonarQube Cloud Team: Starting at $32/month, 30+ languages, AI CodeFix

SonarQube Server Developer: Starting at ~$720/year (scales by lines of code)

Enterprise: Contact sales, can reach $35K+/year at 5M LOC

The self-managed Community Build is the most economical entry point.

The honest limitation: SonarQube does not understand code intent. It cannot reason about whether a business logic change introduces an edge case your test suite doesn't cover. The engineers at Deutsche Bank, NASA, and Adobe use it because of what it does reliably, not to replace the AI-native review layer that catches what rules can't.

Honest verdict: If you're in a regulated industry (BFSI, healthcare, aerospace, government), SonarQube is table stakes, not optional. A growing number of SonarQube customers are replacing it with CodeAnt AI, as Bajaj Finserv Health did; if your compliance framework requires deterministic rule-mapping alongside AI-native analysis, run both.

Check out this SonarQube alternative.

How to Choose: The Decision Framework

The tool comparisons above are useful. But the real decision is usually made at the intersection of three constraints: which git platforms you're on, whether security scanning is a hard requirement, and what your team's noise tolerance is.

| If your situation is... | Start with | Why |
|---|---|---|
| On 2+ git platforms (esp. Bitbucket or Azure DevOps) | CodeAnt AI or CodeRabbit | Only 4 tools support all platforms; these two are the strongest |
| Need review + security + metrics in one tool | CodeAnt AI | Only platform bundling all three; avoids tool sprawl |
| Enterprise multi-repo with complex dependencies | Qodo Enterprise | Cross-repo context engine; Gartner Visionary designation |
| Deep codebase analysis matters more than noise | Greptile | Full codebase graph indexing; highest claimed detection depth |
| Already on GitHub Copilot, low PR volume | GitHub Copilot Code Review | Zero new cost, zero integration work |
| All-in on GitLab with DevSecOps requirements | GitLab Duo | Native SAST + review + vuln management integrated |
| PRs too large to review effectively | Graphite | Stacked PRs fix the root cause; AI review is the complement |
| False positives are killing team trust in AI review | Cursor BugBot or Macroscope | 8-pass majority voting / 98% claimed precision |
| Regulated industry with compliance mapping requirements | SonarQube + CodeAnt AI | SonarQube for deterministic rules; CodeAnt AI for AI-native analysis |
| Budget-constrained, evaluating first | CodeRabbit Free or Qodo Free | Both have functional free tiers; zero commitment to evaluate |

Not sure what fits your stack? Talk to the CodeAnt AI team →

Platform Support at a Glance

| Tool | GitHub | GitLab | Bitbucket | Azure DevOps | Free Tier |
|---|---|---|---|---|---|
| CodeAnt AI | ✅ | ✅ | ✅ | ✅ | 14-day trial |
| CodeRabbit | ✅ | ✅ | ✅ | ✅ | ✅ Unlimited repos |
| Qodo | ✅ | ✅ | ✅ | ✅ | ✅ 30 PRs/mo |
| SonarQube | ✅ | ✅ | ✅ | ✅ | ✅ Community Build |
| Greptile | ✅ | ✅ | ❌ | ❌ | OSS only |
| GitHub Copilot | ✅ | ❌ | ❌ | ❌ | ✅ (no review) |
| GitLab Duo | ❌ | ✅ | ❌ | ❌ | ❌ |
| Graphite | ✅ | ❌ | ❌ | ❌ | ✅ Limited |
| Cursor BugBot | ✅ | ✅* | ❌ | ❌ | Limited |
| Macroscope | ✅ | ❌ | ❌ | ❌ | OSS only |

\* GitLab support was recently added to BugBot; some webhook installation issues have been reported.

Pricing Compared: What a 20-Person Team Actually Pays Per Month

| Tool | Price per User | 20-Person Team/Month | SAST Included? | All 4 Platforms? |
|---|---|---|---|---|
| CodeAnt AI | $24/user/mo | $480 | ✅ | ✅ |
| CodeRabbit Pro | $24/dev/mo | $480 | ✅ (40+ tools) | ✅ |
| Qodo Teams | $30/user/mo | $600 | ⚠️ Partial | ✅ |
| Greptile | $30/dev/mo | $600 | ❌ | ❌ (GitHub+GitLab) |
| GitHub Copilot Business | $19/user/mo | $380 | ❌ (separate) | ❌ (GitHub only) |
| GitLab Premium + Duo Pro | $48/user/mo | $960 | ⚠️ Basic only | ❌ (GitLab only) |
| Graphite Team | $40/user/mo | $800 | ❌ | ❌ (GitHub only) |
| Cursor BugBot | $40/user/mo + Cursor | $800+ | ❌ | ❌ (GitHub+GitLab) |
| Macroscope | $30/dev/mo + usage | ~$660 | ❌ | ❌ (GitHub only) |
| SonarQube Cloud Team | ~$32+/mo (by LoC) | Varies | ✅ | ✅ |

Prices as of March 2026. Verify current pricing on each vendor's pricing page before purchasing.
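The flat per-seat totals above are simple multiplication, and it can be useful to rerun them for your own headcount. The sketch below copies the per-user list prices from this article; it leaves out usage-based and lines-of-code pricing (Macroscope, SonarQube) and the separate Cursor subscription BugBot requires, so treat the output as a lower bound.

```python
TEAM_SIZE = 20

# Per-user monthly list prices in USD, as quoted in this article.
PER_USER_USD = {
    "CodeAnt AI": 24,
    "CodeRabbit Pro": 24,
    "Qodo Teams": 30,
    "Greptile": 30,
    "GitHub Copilot Business": 19,
    "GitLab Premium + Duo Pro": 29 + 19,   # Premium seat plus Duo Pro add-on
    "Graphite Team": 40,
    "Cursor BugBot (add-on only)": 40,     # Cursor subscription not included
}


def team_cost(per_user_usd: int, team_size: int = TEAM_SIZE) -> int:
    """Monthly cost for a team on flat per-seat pricing."""
    return per_user_usd * team_size


for tool, price in sorted(PER_USER_USD.items(), key=lambda item: item[1]):
    monthly = team_cost(price)
    print(f"{tool:30s} ${monthly:>5,}/month  ${monthly * 12:>7,}/year")
```

Changing `TEAM_SIZE` is the quickest way to see how the gap between tools widens as headcount grows.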

CodeAnt AI is $480/month for 20 engineers, and replaces your review tool, your SAST scanner, your secrets tool, and your metrics dashboard. Start your free trial →

What the Benchmarks Actually Tell You (and What They Don't)

There's a benchmark problem in this category: almost every published comparison shows the publishing vendor winning. Greptile publishes benchmarks where Greptile wins. Macroscope publishes benchmarks where Macroscope wins. Qodo publishes benchmarks where Qodo wins.

The Martian Code Review Bench is the closest thing to an independent benchmark in this space. It was created by researchers from DeepMind, Anthropic, and Meta, launched in February 2026, and is open-sourced at github.com/withmartian/code-review-benchmark. Results so far cover roughly 300,000 PRs.

What the benchmarks don't tell you: how tools perform on your codebase, in your language stack, at your PR volume. The right evaluation is a two-week pilot with your own PRs, not a comparison spreadsheet. Most tools offer free trials for exactly this reason.

The Bottom Line

The PR review bottleneck is real, it's quantified, and it's getting worse as AI coding tools ship more code than human reviewers can process. AI code review tools are the logical response, but not all of them are solving the same problem.

If you're building a serious engineering organization and need to make one decision: CodeAnt AI is the most complete platform available today. It reviews code, scans for security issues, tracks developer metrics, supports every major git platform, and does all of it at $24/user/month. The consolidation alone, replacing your SAST tool, your secrets scanner, and your review tool with one product, is the ROI story.

If you want to evaluate before committing: CodeRabbit's free tier is the lowest-friction starting point. It's rate-limited but functional, it supports all four platforms, and it's the tool with the strongest independent benchmark results.


Ready to fix your review bottleneck? Start a free CodeAnt AI trial →

Last updated: March 2026. Pricing and features change frequently; verify on each vendor's site before making purchasing decisions.

FAQs

Can AI code review tools replace human reviewers?

Are AI code review tools worth it?

How do I choose between CodeAnt AI and CodeRabbit?

What DORA metrics tools are built into AI code review platforms?

Which tool has the best security scanning?