Every SAST vendor mentions compliance. “Supports SOC 2.” “Helps with ISO 27001.” “Maps to OWASP Top 10.” But ask the obvious follow-up questions (which specific controls does it map to, what evidence does it produce, and how do I present that evidence to an auditor?) and most vendor pages go silent. The gap between “supports compliance” and “here is the mapping table your auditor needs” is where compliance teams actually live.

This guide bridges that gap. It maps SAST capabilities to specific controls across three frameworks: SOC 2 Trust Service Criteria, ISO 27001:2022 Annex A, and OWASP Top 10. For each control, it explains what evidence SAST generates, what auditors expect to see, and how to automate evidence collection. For a broader view of which SAST tools offer the best compliance support, see the full SAST tools comparison.

Why Compliance Teams Need SAST

Compliance frameworks do not mention SAST by name. SOC 2 does not say “run a static analysis scanner.” ISO 27001 does not specify a vendor. What these frameworks do require is evidence that your organization follows secure development practices, tests code for vulnerabilities before deployment, and remediates findings in a documented, auditable process. SAST is the most direct way to generate that evidence automatically.

Without SAST, compliance teams rely on manual code review logs, spreadsheet-based vulnerability tracking, and periodic penetration tests to demonstrate secure development. This approach has three problems: it does not scale, it creates gaps between tests, and it produces evidence that auditors find difficult to verify independently. A penetration test conducted in January tells you nothing about code deployed in March.

SAST solves this by scanning every pull request, every commit, every build, and producing a timestamped record of what was found, when it was found, what severity was assigned, and when it was remediated. This continuous record is the foundation of audit-ready compliance evidence.

Understanding what SAST detects and how it works is a useful starting point for teams new to static analysis. The sections below map SAST capabilities to specific controls.

SAST Mapping to SOC 2 Trust Service Criteria

SOC 2 audits evaluate an organization’s controls across five Trust Service Categories: security, availability, processing integrity, confidentiality, and privacy. Security is the only mandatory category, and it is where SAST provides the most direct evidence. The AICPA Trust Services Criteria define the specific Common Criteria (CC) controls that auditors evaluate.

Three Common Criteria series are directly relevant to SAST:

| SOC 2 Control | Control Description | SAST Evidence Generated | Automation Level |
| --- | --- | --- | --- |
| CC7.1 | Detect configuration changes that introduce new vulnerabilities, and susceptibilities to newly discovered vulnerabilities | Scan-over-scan comparison showing new findings introduced by code changes, dependency vulnerability alerts from SCA | Fully automated, delta reports per commit |
| CC7.2 | Monitor system components for anomalies indicative of malicious acts, natural disasters, or errors | SAST scan results showing vulnerabilities detected per PR/commit, with severity classification and trend data over time | Fully automated, every PR scanned, findings logged |
| CC7.4 | Respond to identified security incidents | Remediation tracking records showing finding → fix timeline, AI-generated fix suggestions with developer acceptance/rejection | Semi-automated, fixes suggested, human approval required |
| CC8.1 | Authorize, design, develop, configure, document, test, approve, and implement changes | Pre-merge SAST scan as a quality gate: PR blocked if critical/high findings unresolved, scan pass/fail records per deployment | Fully automated, CI/CD gate enforcement |
| CC6.1 | Implement logical access controls to protect information assets | Secrets detection scanning identifying hardcoded credentials, API keys, and tokens in source code before deployment | Fully automated, secrets flagged inline in PR |
| CC6.8 | Prevent or detect unauthorized or malicious software | SAST detection of injection vulnerabilities, backdoor patterns, and unsafe deserialization that could enable malicious code execution | Fully automated, findings per scan with CWE classification |
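The scan-over-scan comparison behind the delta-report evidence in the table can be sketched as a set difference over finding fingerprints. This is a minimal sketch, assuming a hypothetical fingerprint of rule ID, file path, and snippet hash; real tools define their own fingerprinting schemes.

```python
from typing import NamedTuple


class Finding(NamedTuple):
    """Hypothetical finding identity; real tools define their own fingerprints."""
    rule_id: str       # e.g. a CWE-based rule identifier
    path: str          # file the finding was reported in
    snippet_hash: str  # hash of the flagged code, stable across line shifts


def scan_delta(previous: set[Finding], current: set[Finding]) -> dict[str, set[Finding]]:
    """Compare two scans and classify findings as new, fixed, or unchanged."""
    return {
        "new": current - previous,        # introduced by the code change
        "fixed": previous - current,      # no longer reported
        "unchanged": previous & current,  # carried over from the earlier scan
    }


# Illustrative data only.
base = {Finding("CWE-89", "app/db.py", "a1"), Finding("CWE-79", "app/views.py", "b2")}
head = {Finding("CWE-79", "app/views.py", "b2"), Finding("CWE-918", "app/fetch.py", "c3")}
delta = scan_delta(base, head)
```

Storing the `new` and `fixed` sets per commit gives a reviewer a change-level view without re-reading full scan reports.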

What auditors actually ask for: During a SOC 2 Type II audit, auditors want to see that these controls operated consistently over the audit period (typically 6–12 months). The key evidence is not a single scan report; it is the historical record demonstrating that every code change was scanned, findings were triaged, and critical issues were resolved before deployment. SAST tools that integrate into CI/CD pipelines and maintain historical scan records make this evidence collection automatic. For guidance on setting this up, see how to integrate SAST into your CI/CD pipeline.
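A pre-merge quality gate of the kind described for CC8.1 can be sketched in a few lines. The finding fields (`severity`, `status`) and the blocking policy are assumptions made for illustration, not any specific tool's schema.

```python
# Assumed policy: critical and high findings block the merge.
BLOCKING_SEVERITIES = {"critical", "high"}


def gate_passes(findings: list[dict]) -> bool:
    """Return True when no unresolved finding has a blocking severity."""
    return not any(
        f["severity"] in BLOCKING_SEVERITIES and f["status"] != "resolved"
        for f in findings
    )


# Illustrative findings for one pull request.
pr_findings = [
    {"id": "F-1", "severity": "high", "status": "resolved"},
    {"id": "F-2", "severity": "medium", "status": "open"},
    {"id": "F-3", "severity": "critical", "status": "open"},
]
merge_allowed = gate_passes(pr_findings)  # False: F-3 is critical and still open
```

In a CI job, the pass/fail result would typically be turned into a non-zero exit code and logged alongside the commit SHA, which yields the per-deployment gate record.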

SAST Mapping to ISO 27001 Annex A Controls

ISO 27001:2022 restructured its controls into four categories: organizational, people, physical, and technological. The technological controls in Annex A Section 8 contain the most direct mappings to SAST. The ISO 27001:2022 standard defines these controls, and auditors evaluating secure development practices will focus on controls A.8.25 through A.8.32.

| ISO 27001 Control | Control Name | SAST Evidence Generated |
| --- | --- | --- |
| A.8.25 | Secure Development Life Cycle | SAST scan records demonstrating that security testing is embedded in the SDLC, scan results per PR, quality gate configurations, and historical compliance data showing continuous scanning across the development lifecycle |
| A.8.26 | Application Security Requirements | SAST rule configurations mapping to security requirements (OWASP Top 10, CWE categories), demonstrating that security requirements were defined and enforced during development |
| A.8.28 | Secure Coding | SAST findings showing detection of insecure coding patterns (injection, XSS, hardcoded secrets, unsafe deserialization), remediation records, and AI-generated fix suggestions demonstrating that secure coding principles are enforced |
| A.8.29 | Security Testing in Development and Acceptance | SAST scan reports from pre-merge and pre-deployment stages, showing that security testing occurs before code reaches production, pass/fail gate records, finding severity distributions, remediation timelines |
| A.8.8 | Management of Technical Vulnerabilities | SCA scan results showing dependency vulnerabilities with CVE identifiers, EPSS exploit probability scores, and remediation tracking, demonstrating a systematic process for identifying and addressing technical vulnerabilities |
| A.8.32 | Change Management | Pre-merge scan records documenting that every code change was security-tested before deployment, with approval workflows and finding resolution records providing change-level audit trails |
| A.5.7 | Threat Intelligence | SAST tools that map findings to CWE, CVE, and OWASP categories demonstrate integration with threat intelligence, showing that known vulnerability patterns inform the scanning process |

The A.8.28 audit checkpoint. Control A.8.28 (Secure Coding) is the single most SAST-relevant control in ISO 27001:2022. Auditors evaluating this control typically look for evidence that automated SAST tools are integrated into the development lifecycle, that scan reports show identified flaws and remediation status, and that source code cannot be promoted to production without an automated SAST scan being executed. Treat this as more than optional guidance: audit checklists for A.8.28 commonly list SAST scan reports as expected evidence.
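The "no promotion without a scan" expectation can be checked mechanically by comparing deployment records against scan records. The sketch below assumes both are available as lists of commit SHAs; how you export them from your deployment and scanning systems is left open.

```python
def unscanned_deployments(deployed_commits: list[str], scanned_commits: list[str]) -> list[str]:
    """Return deployed commit SHAs that have no matching scan record."""
    scanned = set(scanned_commits)
    return [sha for sha in deployed_commits if sha not in scanned]


# Illustrative SHAs only.
deployed = ["a1b2c3", "d4e5f6", "0718ab"]
scanned = ["a1b2c3", "0718ab", "ffee00"]
gaps = unscanned_deployments(deployed, scanned)
```

An empty result over the audit period is the evidence; any SHA in the list is a gap to explain before the auditor finds it.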

SAST and OWASP Top 10 Coverage

The OWASP Top 10 (2021) is the most widely referenced benchmark for web application security. While OWASP is not a compliance framework in the same sense as SOC 2 or ISO 27001, it is widely treated as the baseline for what application security testing should detect. SOC 2 auditors expect OWASP coverage; ISO 27001 A.8.28 references “common and historical coding practices that lead to information security vulnerabilities,” which in practice overlaps heavily with the OWASP Top 10.

| OWASP Category | CWE Examples | SAST Detection Capability | Detection Confidence |
| --- | --- | --- | --- |
| A01: Broken Access Control | CWE-200, CWE-284, CWE-352 | Detects missing authorization checks, CSRF vulnerabilities, insecure direct object references, path traversal | High, pattern and data-flow analysis |
| A02: Cryptographic Failures | CWE-259, CWE-327, CWE-328 | Identifies weak algorithms (MD5, SHA-1 for passwords), hardcoded cryptographic keys, missing encryption for sensitive data | High, static pattern matching |
| A03: Injection | CWE-79, CWE-89, CWE-77 | Detects SQL injection, XSS, command injection, LDAP injection through taint analysis tracing user input to dangerous sinks | Very high, core SAST strength |
| A04: Insecure Design | CWE-209, CWE-256, CWE-501 | Partial detection of design-level issues: missing rate limiting, trust boundary violations, excessive data exposure in error messages | Medium, requires architectural context |
| A05: Security Misconfiguration | CWE-16, CWE-611 | Detects XML external entity processing, debug modes left enabled, default credentials in configuration files, IaC misconfigurations | High, IaC scanning covers infrastructure-level misconfigurations |
| A06: Vulnerable and Outdated Components | CVE database | SCA scanning identifies known vulnerabilities in third-party dependencies with CVE identifiers, EPSS scores, and upgrade paths | Very high, SCA database matching |
| A07: Identification and Authentication Failures | CWE-287, CWE-384, CWE-613 | Identifies session fixation, missing authentication checks, improper session timeout configuration | Medium-High, depends on framework-awareness |
| A08: Software and Data Integrity Failures | CWE-502, CWE-829 | Detects unsafe deserialization, untrusted data in integrity checks, missing code signing validation | High, deserialization is well-detected by SAST |
| A09: Security Logging and Monitoring Failures | CWE-778 | Limited detection: can identify missing logging statements and insufficient error handling, but runtime monitoring gaps require DAST | Low-Medium, SAST detects code-level gaps only |
| A10: Server-Side Request Forgery | CWE-918 | Detects SSRF patterns where user-controlled input reaches server-side HTTP request functions without validation | High, taint analysis traces input to request sinks |

Where SAST is strong vs. where you need additional testing

SAST provides high to very high detection confidence for injection vulnerabilities (A03), cryptographic failures (A02), and vulnerable components (A06, via SCA).

These are the categories where static analysis excels because the vulnerability patterns are well-defined and detectable without runtime context. Categories like insecure design (A04) and logging failures (A09) require additional testing methods (DAST, penetration testing, or architectural review) because the vulnerabilities depend on architectural context or runtime behavior that static analysis cannot fully observe.
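To make the injection case concrete, here is a minimal sketch of the pattern taint analysis looks for: user input flowing into a SQL string (the sink) without parameterization. The table and function names are invented for this example; it uses only Python's standard `sqlite3` module.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", "admin"), ("bob", "user")])


def find_user_unsafe(name: str) -> list[tuple]:
    # Tainted input is concatenated into the query: a SQL injection sink (CWE-89).
    query = "SELECT name, role FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(name: str) -> list[tuple]:
    # Parameterized query: the driver treats the input strictly as data.
    return conn.execute("SELECT name, role FROM users WHERE name = ?", (name,)).fetchall()


payload = "x' OR '1'='1"
leaked = find_user_unsafe(payload)  # the WHERE clause becomes always true
safe = find_user_safe(payload)      # no user is literally named like the payload
```

The unsafe version returns every row for the crafted input, while the parameterized version returns none. A static analyzer can flag the first function without running it because the path from parameter to query string is visible in the source.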

Generating Audit-Ready Evidence from SAST

Running SAST scans generates raw data. Turning that data into audit-ready evidence requires three capabilities: automated reporting, historical records, and remediation tracking.

Automated Reports and Dashboards

Auditors do not read raw scan output. They need summary reports that answer specific questions: how many vulnerabilities were found, what severity distribution exists, how does this compare to the previous period, and what percentage of findings were remediated within the defined SLA.
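The summary questions above reduce to simple aggregations over finding records. The sketch below uses made-up sample data and assumed SLA thresholds; substitute the values from your own remediation policy.

```python
from collections import Counter
from datetime import date

# Assumed remediation SLAs in days per severity; set these from your own policy.
SLA_DAYS = {"critical": 7, "high": 30, "medium": 90, "low": 180}

# Made-up sample findings for illustration.
findings = [
    {"severity": "critical", "found": date(2024, 1, 2), "fixed": date(2024, 1, 5)},
    {"severity": "high", "found": date(2024, 1, 10), "fixed": date(2024, 3, 1)},
    {"severity": "high", "found": date(2024, 2, 1), "fixed": None},
    {"severity": "low", "found": date(2024, 2, 3), "fixed": date(2024, 2, 20)},
]


def severity_summary(items: list[dict]) -> Counter:
    """Count findings per severity level."""
    return Counter(f["severity"] for f in items)


def pct_within_sla(items: list[dict]) -> float:
    """Percentage of findings fixed within SLA; unfixed findings count as misses."""
    if not items:
        return 100.0
    met = sum(
        1
        for f in items
        if f["fixed"] is not None
        and (f["fixed"] - f["found"]).days <= SLA_DAYS[f["severity"]]
    )
    return 100.0 * met / len(items)


summary = severity_summary(findings)
sla_pct = pct_within_sla(findings)
```

Counting open findings as SLA misses is a deliberately conservative choice; some teams instead exclude findings whose SLA window has not yet elapsed.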

The most useful SAST reports for compliance include a vulnerability summary by severity (critical, high, medium, low) with trend lines over the audit period, a compliance mapping view that tags findings by the framework control they satisfy (CWE → OWASP category → SOC 2 CC → ISO 27001 control), and a mean-time-to-remediation (MTTR) metric showing how quickly the team resolves findings of each severity level.
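The compliance mapping view is, at its core, a chained lookup. The sketch below is illustrative only: the two mappings are drawn loosely from the tables in this guide and are assumptions, not an authoritative crosswalk, so confirm the control assignments with your auditor.

```python
# Illustrative, partial mappings; extend and verify for your own audit scope.
CWE_TO_OWASP = {
    "CWE-89": "A03: Injection",
    "CWE-327": "A02: Cryptographic Failures",
}

OWASP_TO_CONTROLS = {
    "A03: Injection": {"soc2": ["CC6.8"], "iso27001": ["A.8.28"]},
    "A02: Cryptographic Failures": {"soc2": ["CC6.1"], "iso27001": ["A.8.28"]},
}


def tag_finding(cwe: str) -> dict:
    """Attach OWASP category and framework controls to a finding's CWE."""
    owasp = CWE_TO_OWASP.get(cwe)
    controls = OWASP_TO_CONTROLS.get(owasp, {"soc2": [], "iso27001": []})
    return {"cwe": cwe, "owasp": owasp, **controls}


tagged = tag_finding("CWE-89")
unmapped = tag_finding("CWE-000")  # hypothetical CWE with no mapping
```

Unmapped CWEs come back with empty control lists rather than raising, which makes gaps in the crosswalk visible in the report instead of hiding them.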

Teams that generate these reports automatically on a weekly or monthly cadence eliminate the last-minute scramble that typically precedes an audit. Instead of spending two weeks compiling evidence manually, the compliance team exports the dashboards that have been running continuously.

Historical Scan Records

A single point-in-time scan report is insufficient for SOC 2 Type II or ISO 27001 surveillance audits. Both frameworks require evidence that controls operated consistently over the audit period. This means every scan must be logged with a timestamp, the code version scanned, the findings produced, and the disposition of each finding.
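A scan log entry with those four elements can be sketched as a small record type serialized to one JSON line per scan. The field names are assumptions chosen for this example, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ScanRecord:
    """One audit-trail entry per scan (hypothetical schema)."""
    commit_sha: str                                     # code version scanned
    findings: list[dict] = field(default_factory=list)  # each with a disposition
    scanned_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: ScanRecord) -> str:
    """Serialize a record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = ScanRecord(
    commit_sha="abc123",
    findings=[{"id": "F-1", "severity": "high", "disposition": "fixed"}],
)
line = to_log_line(record)
```

Appending one line per scan to write-once storage keeps the history easy to export and hard to alter after the fact.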

SAST tools that run in CI/CD pipelines inherently produce this record: every PR scan is a timestamped event in the audit trail. The key is ensuring these records are retained for at least the full audit period (up to 12 months for a typical SOC 2 Type II) and exportable in a format auditors can review independently. SARIF (Static Analysis Results Interchange Format), an OASIS standard, is the common interchange format for SAST output, and tools that export in SARIF make it easier to integrate with compliance platforms like Vanta, Drata, or Secureframe.
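As a rough illustration of why SARIF helps, the sketch below flattens a SARIF 2.1.0 document into rows a compliance platform or spreadsheet could ingest. The sample document and tool name are invented; the property names (`runs`, `results`, `ruleId`, `level`, `physicalLocation`) follow the SARIF schema.

```python
import json

# Invented sample; real files come from your scanner's SARIF export.
sarif_text = """
{"version": "2.1.0", "runs": [{"tool": {"driver": {"name": "example-scanner"}},
 "results": [{"ruleId": "CWE-89", "level": "error",
   "message": {"text": "SQL injection"},
   "locations": [{"physicalLocation": {
     "artifactLocation": {"uri": "app/db.py"},
     "region": {"startLine": 42}}}]}]}]}
"""


def summarize_sarif(doc: dict) -> list[dict]:
    """Flatten SARIF results into one row per finding."""
    rows = []
    for run in doc.get("runs", []):
        tool = run["tool"]["driver"]["name"]
        for result in run.get("results", []):
            first = (result.get("locations") or [{}])[0]
            loc = first.get("physicalLocation", {})
            rows.append({
                "tool": tool,
                "rule_id": result.get("ruleId"),
                # Simplification: fall back to "warning" when no level is given.
                "level": result.get("level", "warning"),
                "file": loc.get("artifactLocation", {}).get("uri"),
                "line": loc.get("region", {}).get("startLine"),
            })
    return rows


rows = summarize_sarif(json.loads(sarif_text))
```

Only the first location of each result is used here; findings that span several locations would need the full list.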

Remediation Tracking

Finding vulnerabilities is only half the compliance story. Auditors also want evidence that findings were remediated, and that the remediation process followed a documented workflow. This means tracking each finding through its lifecycle: detected → triaged → assigned → fixed → verified → closed.

SAST tools that integrate with issue trackers (Jira, Linear, GitHub Issues) create this audit trail automatically. When a SAST finding generates a ticket, and the ticket is resolved by a PR that passes the subsequent scan, the full lifecycle is documented without manual intervention. AI-generated fix suggestions accelerate this process — a developer can apply a one-click fix directly in the PR, and the subsequent scan verifies the fix, creating a closed-loop remediation record.
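The finding lifecycle can be modeled as a strict sequence of states with a timestamp on each transition. This is a minimal sketch with hypothetical class and method names; an issue tracker integration would record the same transitions from ticket events.

```python
from datetime import datetime, timezone

LIFECYCLE = ["detected", "triaged", "assigned", "fixed", "verified", "closed"]


class FindingLifecycle:
    """Tracks one finding through the lifecycle, refusing skipped steps."""

    def __init__(self, finding_id: str) -> None:
        self.finding_id = finding_id
        self.history = [("detected", datetime.now(timezone.utc))]

    @property
    def state(self) -> str:
        return self.history[-1][0]

    def advance(self, new_state: str) -> None:
        """Move to the next state; anything else is rejected."""
        position = LIFECYCLE.index(self.state)
        if position + 1 >= len(LIFECYCLE) or LIFECYCLE[position + 1] != new_state:
            raise ValueError(f"cannot go from {self.state!r} to {new_state!r}")
        self.history.append((new_state, datetime.now(timezone.utc)))


finding = FindingLifecycle("F-1")
for step in LIFECYCLE[1:]:
    finding.advance(step)
```

The `history` list is the audit trail: each entry shows when the finding entered a state, so time-to-triage and time-to-fix fall out of simple subtraction.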

How CodeAnt AI Supports Compliance Workflows

CodeAnt AI generates the continuous evidence stream that compliance teams need for SOC 2, ISO 27001, and OWASP-based audits, without requiring a separate compliance tool or manual evidence collection.

Continuous scanning as the default

Every pull request is scanned automatically across GitHub, GitLab, Azure DevOps, or Bitbucket. This means every code change produces a timestamped scan record — the foundation of SOC 2 CC7.1 and CC8.1 evidence, and ISO 27001 A.8.25 and A.8.29 evidence. There is no configuration required to achieve continuous scanning; it is the default behavior.

OWASP Top 10 and CWE coverage

CodeAnt AI’s AI-native detection engine covers all ten OWASP categories with particularly strong coverage for injection, cryptographic failures, and vulnerable components. Findings are tagged with CWE identifiers, making it straightforward to map them to the OWASP category → SOC 2 control → ISO 27001 control chain described in the tables above.

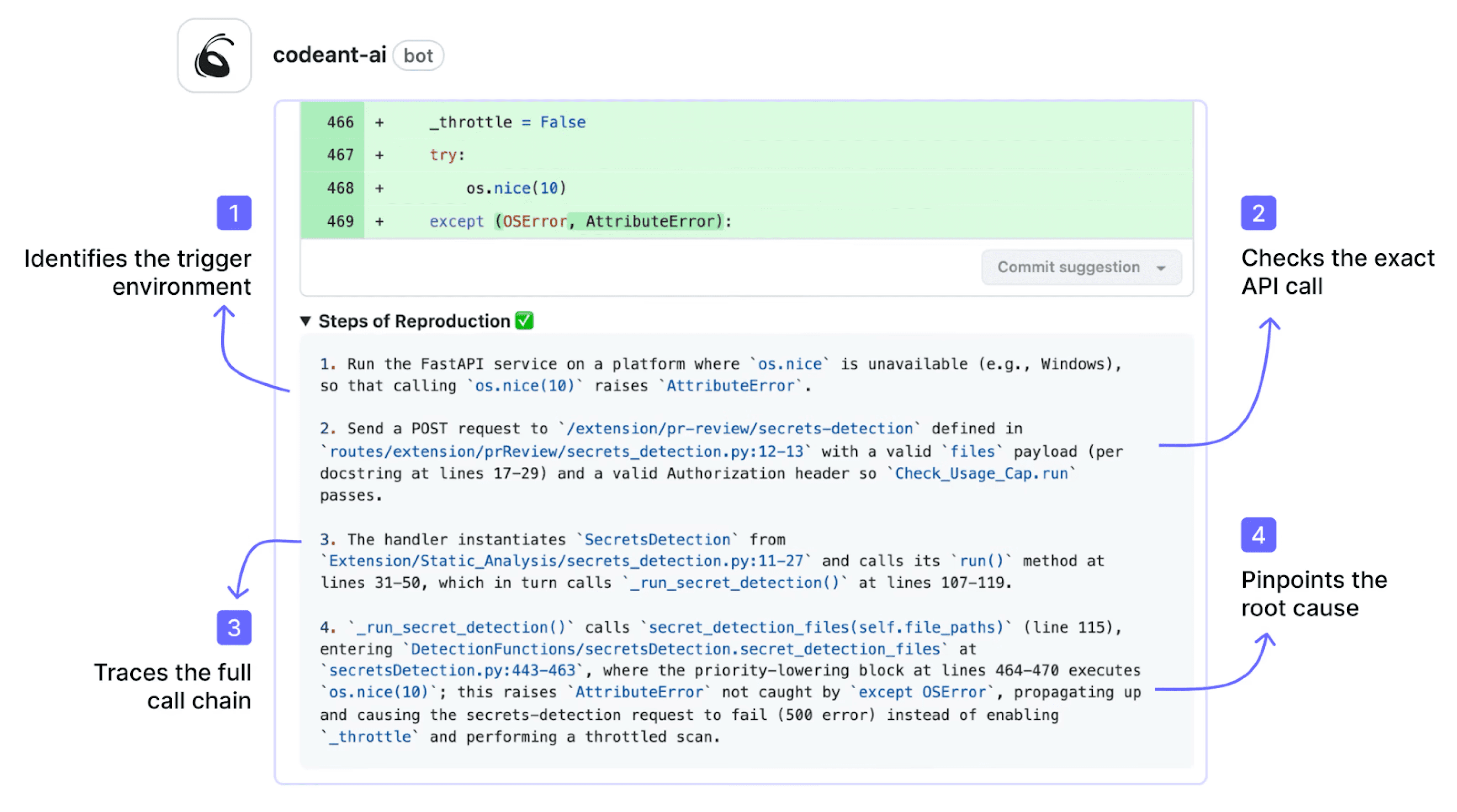

Steps of Reproduction as audit evidence

Most SAST tools produce a finding with a CWE number and a line reference. CodeAnt AI generates Steps of Reproduction, the exact conditions needed to trigger the vulnerability, including the attack path and exploitation scenario.

For auditors, this is the difference between “our tool found something” and “here is the evidence that this vulnerability is real and here is how we verified it.” This directly supports ISO 27001 A.8.28’s requirement for documented security testing evidence.

Secrets detection across codebases

Hardcoded credentials, API keys, and tokens are flagged inline in every PR, directly generating the evidence required for SOC 2 CC6.1 (logical access controls) and OWASP A02 (cryptographic failures). Secrets are caught before they reach the default branch, which removes a frequent source of audit findings.

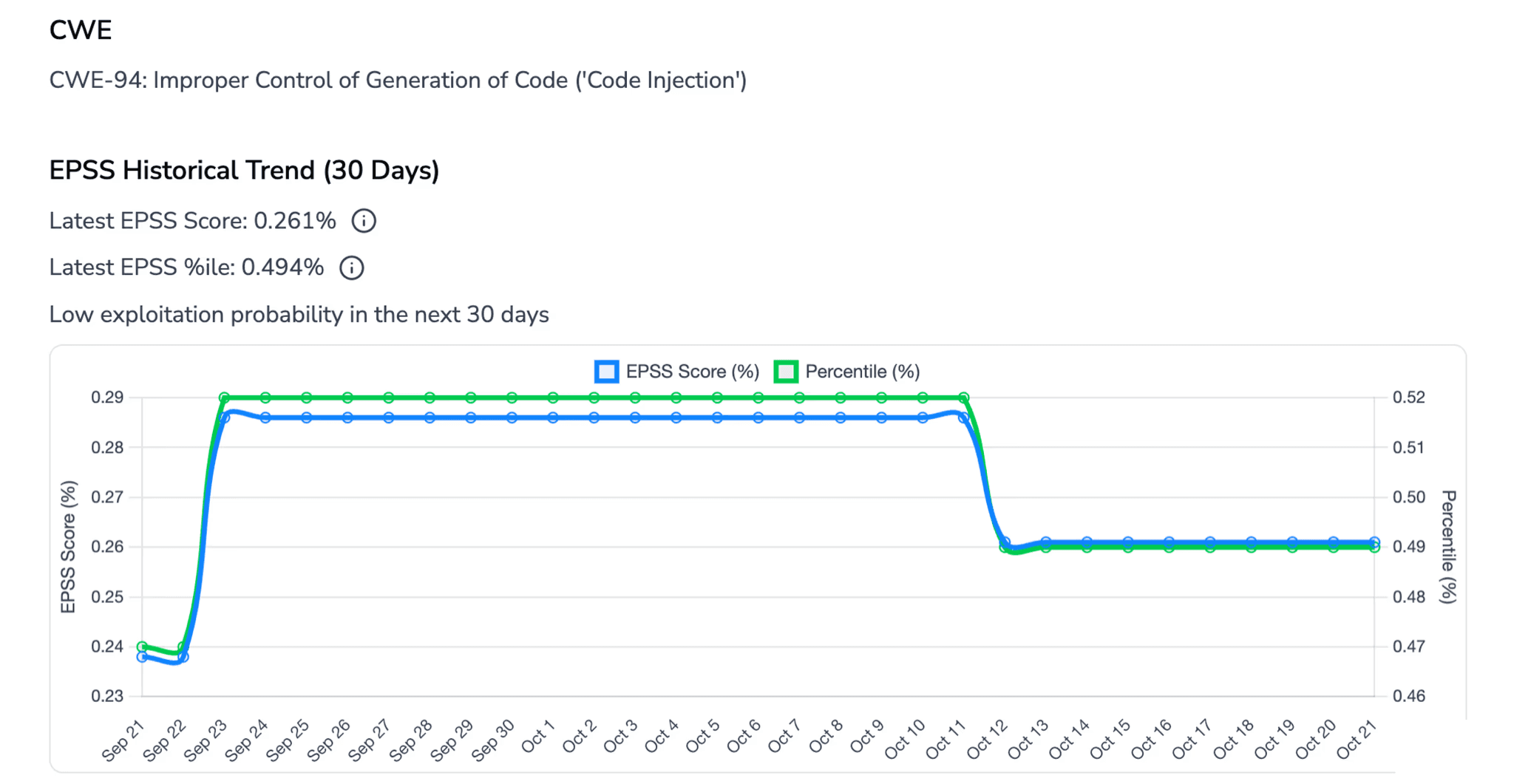

SCA with EPSS scoring

Dependency scanning identifies known vulnerabilities in third-party components with CVE identifiers and EPSS exploit probability scores.

This generates the evidence required for ISO 27001 A.8.8 (management of technical vulnerabilities) and OWASP A06 (vulnerable components), with risk-based prioritization that aligns with how auditors evaluate remediation prioritization.
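Risk-based prioritization with EPSS can be sketched as a sort: highest exploit probability first, with CVSS as a tiebreaker. The identifiers and scores below are placeholders, and the ordering rule is an assumption for illustration, not a description of any vendor's ranking logic.

```python
def prioritize(vulns: list[dict]) -> list[dict]:
    """Order vulnerabilities by EPSS probability, then CVSS score, both descending."""
    return sorted(vulns, key=lambda v: (-v["epss"], -v["cvss"]))


# Placeholder identifiers and scores, not real CVE data.
vulns = [
    {"id": "EXAMPLE-1", "epss": 0.02, "cvss": 9.8},
    {"id": "EXAMPLE-2", "epss": 0.91, "cvss": 7.5},
    {"id": "EXAMPLE-3", "epss": 0.91, "cvss": 8.1},
]
order = [v["id"] for v in prioritize(vulns)]
```

Note that the CVSS 9.8 item lands last here: a severe but rarely exploited issue ranks below less severe ones that are far more likely to be exploited.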

Remediation tracking with one-click fixes

Every finding includes an AI-generated fix suggestion that developers can apply directly in the PR. When the fix is applied and the subsequent scan passes, the full finding-to-fix lifecycle is documented automatically. This closed-loop remediation record is the strongest evidence for SOC 2 CC7.4 and ISO 27001 A.8.28.

SecOps dashboard with compliance-ready analytics

Beyond individual findings, CodeAnt AI's SecOps dashboard provides the aggregated views that compliance teams and security managers need:

vulnerabilities found over time (trend analysis for audit periods)

true positives vs. false positives filtered out (demonstrates tool accuracy for auditors)

fixed vs. unfixed issues by severity and age (shows remediation velocity)

OWASP Top 10 and CWE/CVE mapping across the entire codebase (the classification auditors expect)

team and repository risk distribution (identifies hotspots)

Compliance workflows integrate natively with Jira and Azure Boards, so every finding can be tracked as a ticket through remediation and closure, creating the closed-loop audit trail that SOC 2 CC7.4 and ISO 27001 A.8.28 require. Reports export as audit-ready PDF and CSV.

Shift-left secret prevention for CC6.1 compliance

CodeAnt AI's CLI and pre-commit hooks block secrets, credentials, and tokens before they ever enter Git. Rather than only flagging them in a PR, the hooks prevent the commit entirely. For SOC 2 CC6.1 (logical access controls) compliance, this is the strongest evidence: the secret was never in the repository, so there is nothing to remediate and no exposure window to explain to auditors.
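The general idea of a pre-commit secret check can be sketched with two regular expressions. This is a generic illustration, not CodeAnt AI's implementation; production scanners use far larger rule sets plus entropy checks. The AWS key shown is the placeholder from AWS documentation.

```python
import re

# Two well-known patterns; real scanners ship many more.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"AKIA[0-9A-Z]{16}"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern name) for every suspected secret."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((line_no, name))
    return hits


def should_block_commit(staged_text: str) -> bool:
    """A hook would exit non-zero when this returns True."""
    return bool(scan_text(staged_text))


staged = 'aws_key = "AKIAIOSFODNN7EXAMPLE"\nprint("hello")\n'
hits = scan_text(staged)
```

Wired into a Git pre-commit hook, a `True` result aborts the commit, so the secret never becomes part of the repository history.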

Commvault’s 800-developer team runs CodeAnt AI across 17,000+ merge requests, generating continuous compliance evidence as a byproduct of their development workflow rather than a separate process (full case study).

Conclusion: Compliance is Evidence, Not Claims

Compliance frameworks do not require you to “buy a SAST tool.” They require evidence that secure development controls operate consistently.

Manual reviews and quarterly penetration tests cannot provide that continuous proof. Automated SAST integrated into your CI/CD pipeline can.

The difference between “supports SOC 2” and “passes audit scrutiny” is traceability:

Every PR scanned

Every vulnerability logged

Every fix documented

Every report exportable

If your current process still depends on spreadsheets and screenshots, it is not audit-ready.

Start running continuous SAST scans across your repositories and generate compliance evidence automatically.

Book a short walkthrough or start a 14-day trial to see how compliance reporting works on your real codebase.
