Security patches create a comforting illusion.

A vulnerability is discovered.

A patch is released.

A new version number appears.

Dependency scanners mark the issue as resolved.

For most engineers, the story ends there.

But security researchers learn something quickly: patches often fail the first time.

Not because developers are careless.

But because the process of fixing vulnerabilities is fundamentally reactive.

A patch usually answers one question:

How do we stop this exploit?

But attackers ask a different question:

What assumptions does this patch make?

That difference explains why security history is filled with vulnerabilities that required multiple patches before they were truly fixed.

The discovery of CVE-2026-28292, a remote code execution vulnerability in the Node.js library simple-git, is one example.

The vulnerability survived two previous security fixes.

The reason was subtle: a case-sensitive regex protecting a case-insensitive configuration system.

But the deeper lesson is not about regex.

It is about how we evaluate patches.

If your organization ships software, maintains libraries, or reviews dependencies, there are several critical questions every security patch should force you to ask.

1. Does the Patch Fix the Exploit, or the Vulnerability Class?

This is the most important question in patch analysis.

Most patches fix the exploit that was reported.

Few patches eliminate the entire vulnerability class.

The vulnerable filter was designed to block configuration overrides that enable dangerous Git protocols.

But it assumed configuration keys would appear in lowercase.

Attackers used keys written in uppercase or mixed case.

The patch blocked the original exploit but not the variant attack.
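To make the gap concrete, here is a minimal sketch of a filter of this kind. The function name and the exact pattern are illustrative assumptions, not code taken from simple-git. What matters is that the check is case-sensitive, while Git treats the section and variable names of a configuration key as case-insensitive.

```javascript
// Hypothetical filter (illustrative, not simple-git's actual source):
// reject "protocol.<name>.allow=..." style configuration overrides.
const LOWERCASE_ONLY = /^\s*protocol(\.[a-z]+)?\.allow/;

function isBlockedByNaiveFilter(configArg) {
  // Case-sensitive match: assumes the key is always written in lowercase.
  return LOWERCASE_ONLY.test(configArg);
}

// The reported exploit shape is caught...
console.log(isBlockedByNaiveFilter("protocol.ext.allow=always")); // true
// ...but a case variant that Git resolves to the same key is not.
console.log(isBlockedByNaiveFilter("PROTOCOL.ext.ALLOW=always")); // false
```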

This is why patch evaluation should focus on the vulnerability class, not only on the exploit that was reported.

2. What Assumptions Does the Patch Make?

Every patch embeds assumptions.

Attackers search for them.

Common assumptions include:

| Patch assumption | Attacker bypass |
|---|---|
| Input is lowercase | Uppercase input |
| Input is encoded once | Double encoding |
| Path is normalized | Alternate separators |
| Single parameter | Multiple parameters |

In CVE-2026-28292, the assumption was simple: configuration keys are always written in lowercase.

Git did not enforce that assumption.

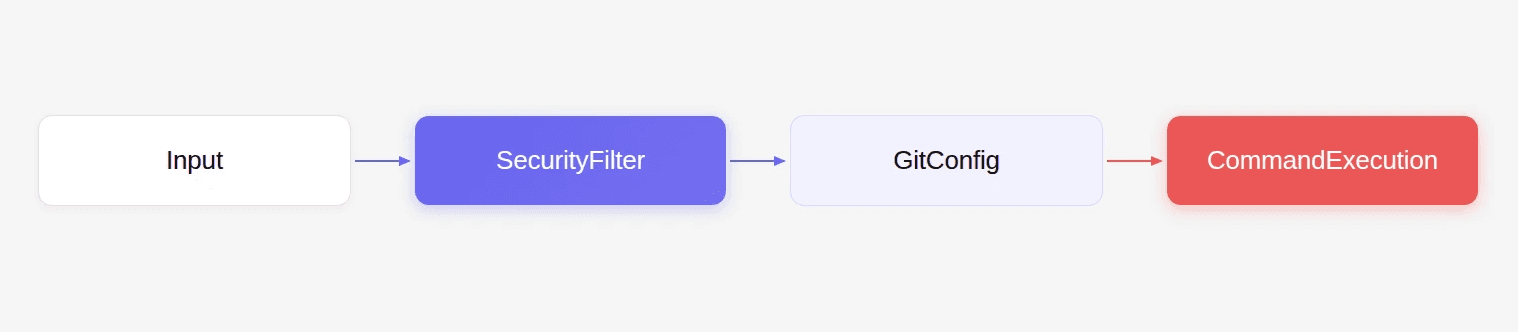

3. Does the Patch Align with the System’s Behavior?

Security filters frequently fail when they misunderstand the semantics of the system they protect.

In a typical architecture, untrusted input passes through a filter before it reaches the system.

If the filter interprets input differently than the system does, bypasses become possible.

Examples across ecosystems:

| Filter | System behavior |
|---|---|
| Case-sensitive regex | Case-insensitive config |
| URL validation | Browser normalization |
| Path sanitizer | Filesystem canonicalization |

Security controls must always align with system semantics.
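One way to align a filter with the system it guards is to normalize input the way the system does before checking it. The sketch below relies on Git's documented rule that the section and variable names of a configuration key are case-insensitive, while a subsection between them is case-sensitive. The helper names are hypothetical, and unusual keys (for example, a subsection containing `=`) are not handled.

```javascript
// Normalize a "section[.subsection].variable=value" argument the way Git
// compares keys: lowercase the section and variable, keep the subsection.
function normalizeConfigKey(configArg) {
  const key = configArg.split("=")[0].trim();
  const first = key.indexOf(".");
  const last = key.lastIndexOf(".");
  if (first === -1) return key.toLowerCase();
  const section = key.slice(0, first).toLowerCase();
  const variable = key.slice(last + 1).toLowerCase();
  const subsection = first === last ? "" : key.slice(first + 1, last);
  return subsection
    ? `${section}.${subsection}.${variable}`
    : `${section}.${variable}`;
}

// Check the normalized key, so every spelling Git accepts is covered.
function isProtocolAllowOverride(configArg) {
  const key = normalizeConfigKey(configArg);
  return key.startsWith("protocol.") && key.endsWith(".allow");
}

console.log(isProtocolAllowOverride("PROTOCOL.ext.ALLOW=always")); // true
console.log(isProtocolAllowOverride("user.name=alice"));           // false
```

Checking a normalized key, rather than adding more patterns to the filter, means the filter and the system agree on what counts as the same input.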

4. What Variant Inputs Could Bypass the Patch?

After analyzing the patch logic, security teams should attempt variant generation.

Typical variant techniques include changing case, double encoding, using alternate path separators, and repeating parameters.

Testing security controls against variant inputs often reveals bypasses quickly.
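A small helper can generate such variants automatically. The sketch below covers only one technique, case variation, and the function name and letter cap are assumptions made for illustration. It enumerates every upper and lower case combination of a short seed string.

```javascript
// Generate every upper/lower-case combination of the letters in a string.
// The output grows as 2^(number of letters), so the input is capped.
function caseVariants(input, maxLetters = 16) {
  const letters = [...input].filter(
    (ch) => ch.toLowerCase() !== ch.toUpperCase()
  );
  if (letters.length > maxLetters) {
    throw new RangeError(`too many letters (${letters.length}) to enumerate`);
  }
  let variants = [""];
  for (const ch of input) {
    const lower = ch.toLowerCase();
    const upper = ch.toUpperCase();
    // Non-letters (dots, digits, "=") have a single form.
    const options = lower === upper ? [ch] : [lower, upper];
    variants = variants.flatMap((prefix) => options.map((o) => prefix + o));
  }
  return variants;
}

console.log(caseVariants("ab")); // [ 'ab', 'aB', 'Ab', 'AB' ]
```

Each generated variant can then be passed to the control under test; any variant the control accepts but the protected system treats as equivalent to the blocked input is a candidate bypass.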

5. Does the Patch Introduce New Attack Surface?

Security patches can sometimes introduce unintended behavior.

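Consider a patch that blocks a specific configuration flag.

Attackers may instead manipulate another input that reaches the same behavior.

When evaluating patches, researchers should ask whether the change adds new parsing logic, options, or code paths that attackers can reach.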

6. Is the Security Boundary Clearly Defined?

Many vulnerabilities occur because the security boundary is ambiguous.

Consider a scenario in which an application forwards a userArgs array to a Git command.

If userArgs contains untrusted input, the boundary shifts.

Attackers may inject arguments enabling dangerous behavior.

Patch evaluation must confirm that the boundary is enforced consistently.
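One way to make the boundary explicit is to treat every caller-supplied value as data and never as an option. The following sketch assumes a hypothetical wrapper that builds the argument list for `git clone`; the function names are illustrative and do not correspond to any real library API.

```javascript
// Hypothetical boundary check: caller-supplied values must be plain
// positional arguments, never something Git could parse as an option.
function assertPositional(value, label) {
  if (typeof value !== "string" || value.length === 0) {
    throw new TypeError(`${label} must be a non-empty string`);
  }
  if (value.startsWith("-")) {
    throw new Error(`${label} must not look like an option: ${value}`);
  }
  return value;
}

function buildCloneArgs(repoUrl, targetDir) {
  // "--" tells Git that everything after it is positional, not an option.
  return [
    "clone",
    "--",
    assertPositional(repoUrl, "repoUrl"),
    assertPositional(targetDir, "targetDir"),
  ];
}

console.log(buildCloneArgs("https://example.com/repo.git", "out"));
```

This addresses option injection only. It does not decide which URL schemes or transports are acceptable, which needs its own check.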

7. Are There Other Code Paths That Bypass the Patch?

Patches often modify only the code path used by the original exploit.

But libraries frequently expose multiple APIs.

If the patch protects only clone() but not raw(), the vulnerability persists.

Patch audits must examine all entry points.
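A structural way to cover all entry points is to route every public method through one guarded executor. The mini-client below is hypothetical: `createClient`, `execute`, and `isUnsafe` are illustrative names, and it does not reproduce how simple-git is built.

```javascript
// Hypothetical mini-client: every public method funnels through run(),
// so the safety check cannot be skipped by choosing a different API.
function createClient(execute, isUnsafe) {
  function run(args) {
    const offending = args.find((arg) => isUnsafe(arg));
    if (offending !== undefined) {
      throw new Error(`blocked unsafe argument: ${offending}`);
    }
    return execute(args);
  }
  return {
    clone: (repo, dir, extra = []) => run(["clone", ...extra, repo, dir]),
    raw: (args) => run(args),
  };
}

// Usage with a stand-in executor that only echoes the command line.
const demo = createClient(
  (args) => args.join(" "),
  (arg) => /^\s*protocol(\..+)?\.allow/i.test(arg)
);
console.log(demo.clone("https://example.com/repo.git", "out"));
```

With this layout, a reviewer auditing a patch has one function to inspect instead of one check per API.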

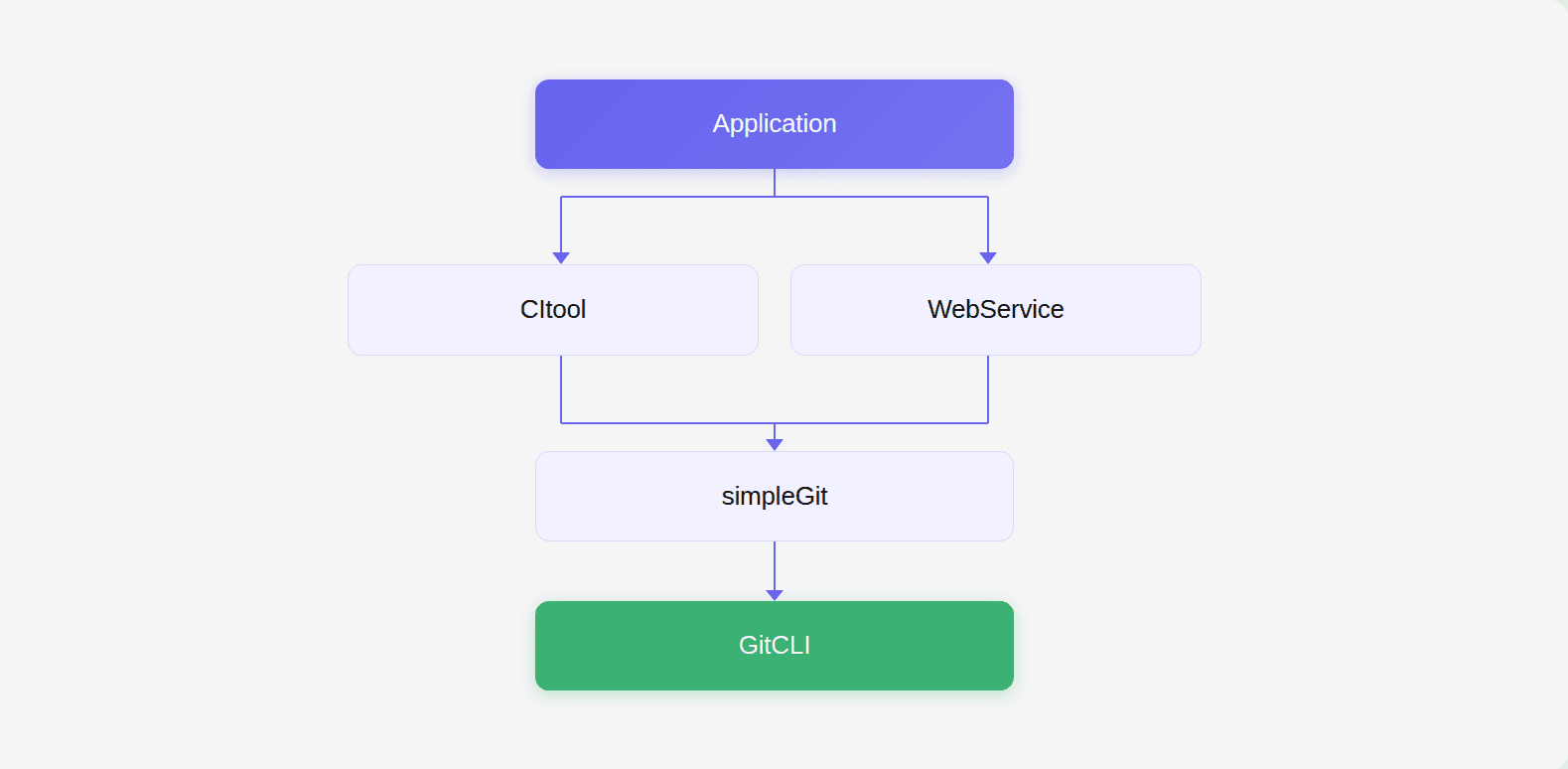

8. How Does the Patch Interact with Dependencies?

In modern software ecosystems, vulnerabilities often exist across multiple layers.

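For example, an application may depend on simple-git, which in turn invokes the Git binary.

A patch may fix one layer while leaving others exposed.

Security reviews should evaluate every layer the vulnerable behavior passes through, not only the one that was patched.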

9. How Easily Can Attackers Reproduce the Exploit?

Patch evaluation should include exploit reconstruction: rebuild the original proof of concept and rerun it, along with its variants, against the patched version.

If the exploit still succeeds under variant inputs, the patch failed.

10. How Can We Automate Patch Analysis?

Manual patch analysis does not scale.

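Large ecosystems contain more dependencies and patches than any team can review by hand.

Automated analysis becomes essential.

AI-assisted code analysis can help by identifying hidden assumptions and variant attack paths.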

During internal research, CodeAnt AI’s code reviewer analyzed patches across multiple ecosystems and flagged suspicious patterns, which eventually led to the discovery of CVE-2026-28292.

This approach allows researchers to examine patches not just for syntax changes, but for whether the fix actually achieves its intended security goal.
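Even a crude heuristic can triage patches for closer review. The sketch below is not the analysis described above; it is a simple illustration that scans the added lines of a unified diff for regex literals used in `.test(` calls without the `i` flag. The function name is an assumption, and the pattern matching is deliberately naive, so false positives and misses are expected.

```javascript
// Heuristic only: flag added diff lines where a regex literal is tested
// without the "i" flag. Expect false positives and misses.
const REGEX_TEST = /\/((?:\\.|[^\/\\\n])+)\/([a-z]*)\.test\(/;

function flagCaseSensitiveChecks(unifiedDiff) {
  const findings = [];
  unifiedDiff.split("\n").forEach((line, index) => {
    // Added lines start with "+"; "+++" marks the file header.
    if (!line.startsWith("+") || line.startsWith("+++")) return;
    const match = REGEX_TEST.exec(line);
    if (match && !match[2].includes("i")) {
      findings.push({ diffLine: index + 1, pattern: match[1] });
    }
  });
  return findings;
}
```

A finding is only a prompt for a human or a deeper analysis to ask whether the protected system compares the same input case-insensitively.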

Why This Matters for Code Review

The deeper lesson behind CVE-2026-28292 is not about regex filters.

It is about how code review prioritizes different types of issues.

Many automated review tools focus on developer convenience, such as style and formatting suggestions.

These improvements are useful.

But they rarely prevent catastrophic failures.

The true value of a code review gate lies elsewhere.

It lies in the moment when the tool identifies a vulnerability that nobody realized existed yet.

A subtle logic flaw.

A broken security assumption.

A patch that appears correct but still leaves a hidden bypass.

Those are the findings that justify stopping a pull request.

Those are the moments when developers actually appreciate automated code review.

And they are the kinds of issues that AI-assisted reasoning systems are beginning to surface.

Conclusion

Security patches are not the end of the vulnerability lifecycle.

They are often just the beginning.

Every patch should trigger a deeper investigation:

What assumptions does the fix rely on?

What variant inputs could bypass it?

Does the fix align with system behavior?

Are other attack paths still possible?

The vulnerability behind CVE-2026-28292 survived multiple patches because the fix focused on blocking the exploit rather than understanding the system semantics that made the exploit possible.

That distinction matters.

Because attackers do not test the exploit.

They test the patch.

As software ecosystems grow more complex, patch evaluation will become an increasingly important part of security engineering.

And the tools that help engineers reason about those patches, identifying hidden assumptions and variant attack paths, will play a central role in the future of code review.

FAQs

Why do many security patches require follow-up fixes?

What is a variant attack?

How can security teams evaluate whether a patch is complete?

Why are semantic mismatches dangerous in security filters?

How can AI improve vulnerability discovery during patch analysis?