If you've been told your company needs a penetration test, the next question is almost always the same: what kind? The term "pentest" gets used as if it describes a single thing. It doesn't. The methodology your team chooses determines which vulnerabilities will be found, which will be missed entirely, and whether the results will actually reflect your real-world risk.

The three test types (black box, white box, and gray box) differ not in intensity but in the starting conditions the tester operates under. Each simulates a different attacker profile, surfaces a different class of vulnerability, and answers a fundamentally different security question. Choosing the wrong one doesn't just waste budget; it creates a false sense of security while your actual attack surface remains untested.

This guide explains exactly what happens during each type of engagement, what it can and cannot find, and how to match the right test to your situation.

The core insight: Black box tests what a stranger can do to you. White box tests what someone with your code can do. Gray box tests what a legitimate user with bad intentions can do. All three are different threat models, not different skill levels.

Black Box Penetration Testing: The External Attacker Simulation

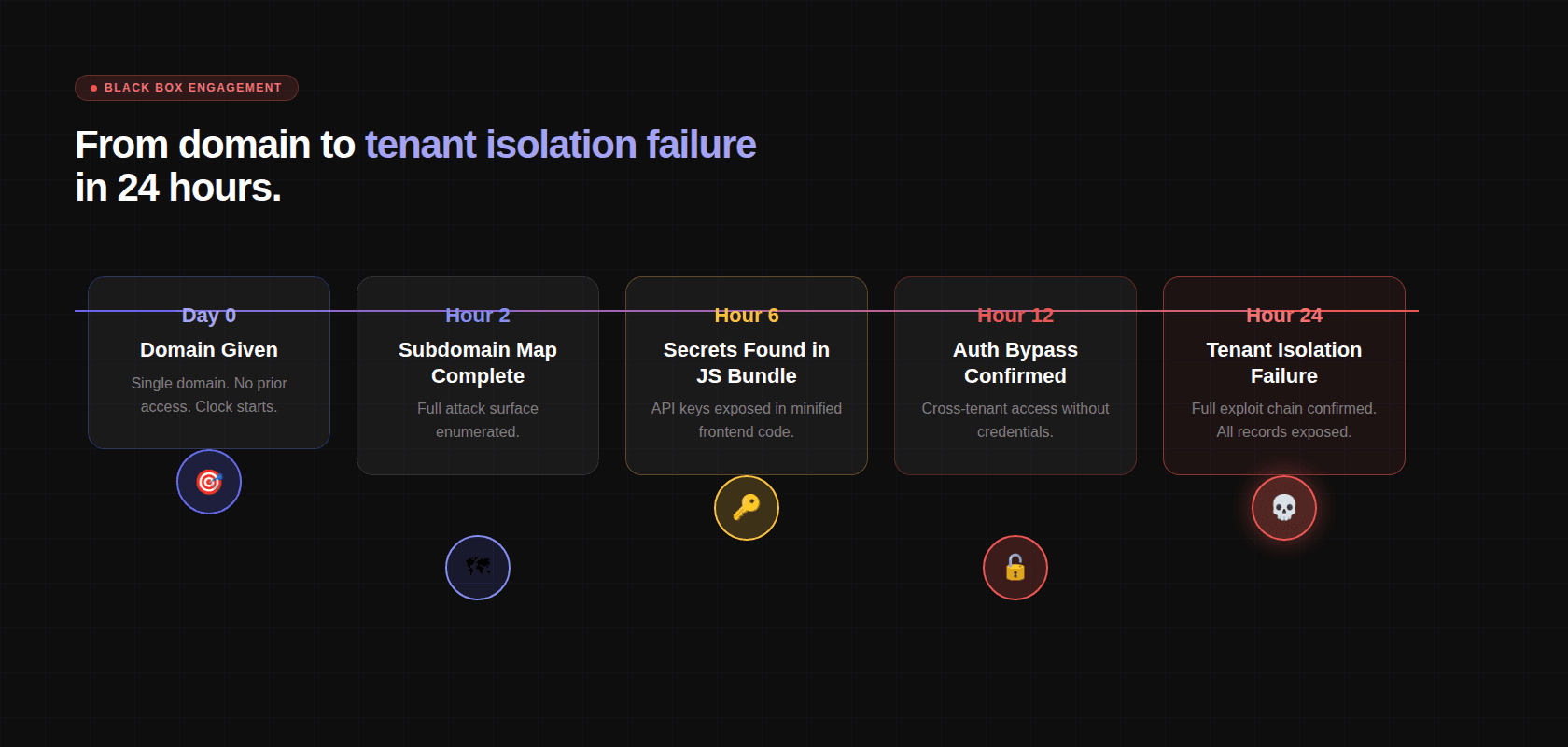

In a black box test, the tester starts with a single piece of information: your domain. No credentials. No code access. No documentation. No architecture diagrams. The inside of the system is opaque, hence "black box."

This is the most faithful simulation of what an external attacker with no prior knowledge or inside access would be able to do. The question a black box test answers is precise: what could someone on the internet, starting from nothing, actually do to your users' data?

What Happens During a Black Box Engagement

Reconnaissance and External Surface Mapping

Before a single vulnerability is tested, the AI builds a complete map of everything visible from the outside. This is called reconnaissance, and it is far more comprehensive than most teams expect.

Subdomain enumeration uses brute-force DNS resolution across 150+ common prefix patterns: not just www, api, and mail, but also dev, staging, uat, internal, jenkins, grafana, admin, portal, and many more. Each prefix is checked against the target domain. Discovered subdomains are added to scope.
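As a rough illustration of the prefix brute-force step, the sketch below builds candidate hostnames and keeps the ones that resolve. The prefix list is a shortened stand-in for the 150+ patterns mentioned above, and the function names (`enumerate_subdomains`, `system_resolver`) are hypothetical, not taken from any particular tool.

```python
import socket
from typing import Callable, Iterable, Optional

# Illustrative subset only; a real wordlist would hold 150+ prefixes.
COMMON_PREFIXES = ["www", "api", "mail", "dev", "staging", "uat",
                   "internal", "jenkins", "grafana", "admin", "portal"]


def system_resolver(hostname: str) -> Optional[str]:
    """Resolve a hostname with the OS resolver; return None if it does not resolve."""
    try:
        return socket.gethostbyname(hostname)
    except OSError:  # socket.gaierror is a subclass of OSError
        return None


def enumerate_subdomains(
    domain: str,
    prefixes: Iterable[str] = COMMON_PREFIXES,
    resolve: Callable[[str], Optional[str]] = system_resolver,
) -> dict:
    """Return {hostname: ip} for every candidate prefix that resolves."""
    found = {}
    for prefix in prefixes:
        hostname = f"{prefix}.{domain}"
        ip = resolve(hostname)
        if ip is not None:
            found[hostname] = ip  # resolved hosts are added to scope
    return found
```

The resolver is injectable so the logic can be exercised without network access; in practice it should only be pointed at domains you are authorized to test.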

Certificate Transparency (CT) logs are queried. Every TLS certificate issued for any subdomain of your domain is publicly logged. CT log queries surface subdomains that DNS brute-forcing might miss, including historical subdomains that are no longer in active use but may still be running a server.

CNAME records are resolved to identify underlying cloud providers and CDNs, information that tells the tester what infrastructure they're dealing with before they've sent a single HTTP request.

Port scanning runs across all discovered hosts, and across all TCP ports rather than just 80 and 443. This finds databases accidentally exposed to the internet, internal admin interfaces bound to 0.0.0.0, container orchestration APIs, monitoring dashboards, and message queue management interfaces. The number of companies with a Redis instance or Elasticsearch cluster accessible from the public internet without authentication remains astonishing.

Cloud Asset Discovery

Modern applications don't live only on their own servers. They use cloud storage, managed databases, serverless functions, CDNs, and CI/CD infrastructure. All of it is in scope.

| Cloud Asset Type | What's Being Tested |
|---|---|
| S3 Buckets | Public read access, public write access, bucket name enumeration |
| Azure Blob Containers | Anonymous access, container listing, SAS token exposure |
| GCP Storage Buckets | allUsers permissions, bucket enumeration via known naming patterns |
| CI/CD Dashboards | Jenkins, CircleCI, GitHub Actions: exposed without authentication |
| Container Registries | Private images accessible without credentials |
| Monitoring Endpoints | Grafana, Kibana, Datadog: exposed management interfaces |

JavaScript Bundle Analysis

This is a technique most traditional pentesters don't apply systematically, and it is one of the highest-value steps in a modern black box engagement.

Every JavaScript bundle served by the application is downloaded and statically analyzed. Modern single-page applications ship 5–15 MB of minified JavaScript to the browser, and inside that code is often more sensitive information than most teams realize.

What the analysis extracts:

Hardcoded secret detection runs across 30+ pattern types: AWS access keys, Stripe live keys, GitHub tokens, JWT secrets, database connection strings, Sentry DSNs, Google API keys, Twilio credentials, SendGrid keys. Every hit is verified for validity before being reported.

Staging vs. production bundle comparison surfaces endpoints that were removed from production but remain reachable on non-production URLs, a common source of forgotten API endpoints with weaker security controls.
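A minimal sketch of the pattern-matching step might look like the following. The three regular expressions are simplified approximations of commonly documented key formats, not the 30+ patterns referred to above, and `find_secrets` is a hypothetical name. Validity verification of each hit is out of scope here.

```python
import re

# Simplified, assumed patterns; real scanners use many more and stricter rules.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "stripe_live_secret_key": re.compile(r"\bsk_live_[0-9a-zA-Z]{24,}\b"),
    "github_personal_token": re.compile(r"\bghp_[0-9A-Za-z]{36}\b"),
}


def find_secrets(bundle_text: str) -> list:
    """Return (pattern_name, matched_text) pairs found in a JavaScript bundle."""
    hits = []
    for name, pattern in SECRET_PATTERNS.items():
        for match in pattern.finditer(bundle_text):
            hits.append((name, match.group(0)))
    return hits
```

A match is only a candidate: as the text notes, each hit still has to be checked for validity before it is reported.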

API Authentication Testing

Every endpoint discovered (from documentation, JS bundle analysis, Swagger/OpenAPI exposure, or GraphQL introspection) is tested unauthenticated first.

The response classification is simple:

| Response Code | What It Means |
|---|---|
| 200 OK with data | No authentication enforced: confirmed finding |
| 401 Unauthorized | Authentication required and enforced |
| 403 Forbidden | Request recognized but access refused (check if bypassable) |
| 500 Internal Server Error | Request processed before auth check ran: potential finding |
| 302 Redirect to login | Auth enforced via redirect (check direct access bypass) |
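The classification above can be expressed as a small triage function. This is a sketch under the assumption that the caller has already sent the unauthenticated request; the function name, parameters, and label strings are illustrative.

```python
def classify_unauthenticated_response(status: int, has_data: bool = False,
                                      location: str = "") -> str:
    """Map an unauthenticated response to a triage label based on the table above."""
    if status == 200 and has_data:
        return "finding: no authentication enforced"
    if status == 401:
        return "ok: authentication required and enforced"
    if status == 403:
        return "review: forbidden, check if bypassable"
    if status == 500:
        return "potential finding: request processed before auth check"
    if status == 302 and "login" in location.lower():
        return "review: redirect to login, check direct access bypass"
    return "unclassified"
```

Anything labeled "review" or "potential finding" would then go on to bypass testing rather than being closed out.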

Authentication bypass patterns are tested systematically on every endpoint that returns anything other than a clean 401:

CORS Policy Testing

Cross-Origin Resource Sharing misconfigurations are a consistent finding in production applications. The AI tests every domain with 7+ attacker-controlled origins:
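The specific origins used are not listed here, so the seven below are hypothetical examples of the kinds of variants such a test might send. The sketch generates them for a target domain and flags a response that reflects the supplied origin while also allowing credentials.

```python
def attacker_origins(domain: str) -> list:
    """Build illustrative Origin header values for CORS testing (assumed set)."""
    return [
        "https://evil.example",                    # unrelated origin
        "null",                                    # sandboxed iframe or file origin
        f"https://{domain}.evil.example",          # target used as a prefix
        f"https://evil-{domain}",                  # naive suffix match
        f"https://evil.{domain}",                  # arbitrary subdomain
        f"http://{domain}",                        # downgraded scheme
        f"https://{domain.replace('.', 'x', 1)}",  # unescaped-dot regex bypass
    ]


def is_cors_misconfigured(origin: str, response_headers: dict) -> bool:
    """True if the response trusts the supplied origin for credentialed requests."""
    headers = {k.lower(): v for k, v in response_headers.items()}
    allowed = headers.get("access-control-allow-origin", "")
    credentials = headers.get("access-control-allow-credentials", "").lower() == "true"
    return allowed == origin and credentials
```

Each origin would be sent in the `Origin` request header, with the response headers passed to `is_cors_misconfigured`.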

Exploit Chaining

No finding is evaluated in isolation. Every confirmed finding is cross-referenced against every other finding, and the AI constructs the highest-impact chain possible from the confirmed set.

Tenant ID leaking from the user profile endpoint + IDOR in the records endpoint = complete cross-tenant data access. Hardcoded internal API hostname in the JS bundle + unauthenticated endpoint on the internal API = access to internal services with no credentials. The combination of findings is almost always more dangerous than any single finding.

What Black Box Reliably Misses

Black box testing cannot find what's invisible from the outside:

Authentication bypass vulnerabilities buried in middleware configuration that produce normal HTTP responses

Business logic flaws in flows that require authentication to reach

Secrets in Git history or config files

Vulnerabilities in internal microservices not exposed to the internet

Dependency vulnerabilities that require code access to assess reachability

This is precisely why the most effective security programs don't treat black box testing as a standalone answer. The vulnerabilities black box misses (middleware authentication bypasses, dataflow injection, secrets in Git history) are the same class of issues that continuous defensive code review is designed to catch at the source. When both run on the same platform, the white box analysis already has 18 months of code intelligence before the offensive engagement begins. The external test and the internal review are no longer separate programs with separate blind spots; they are two phases of the same assessment.

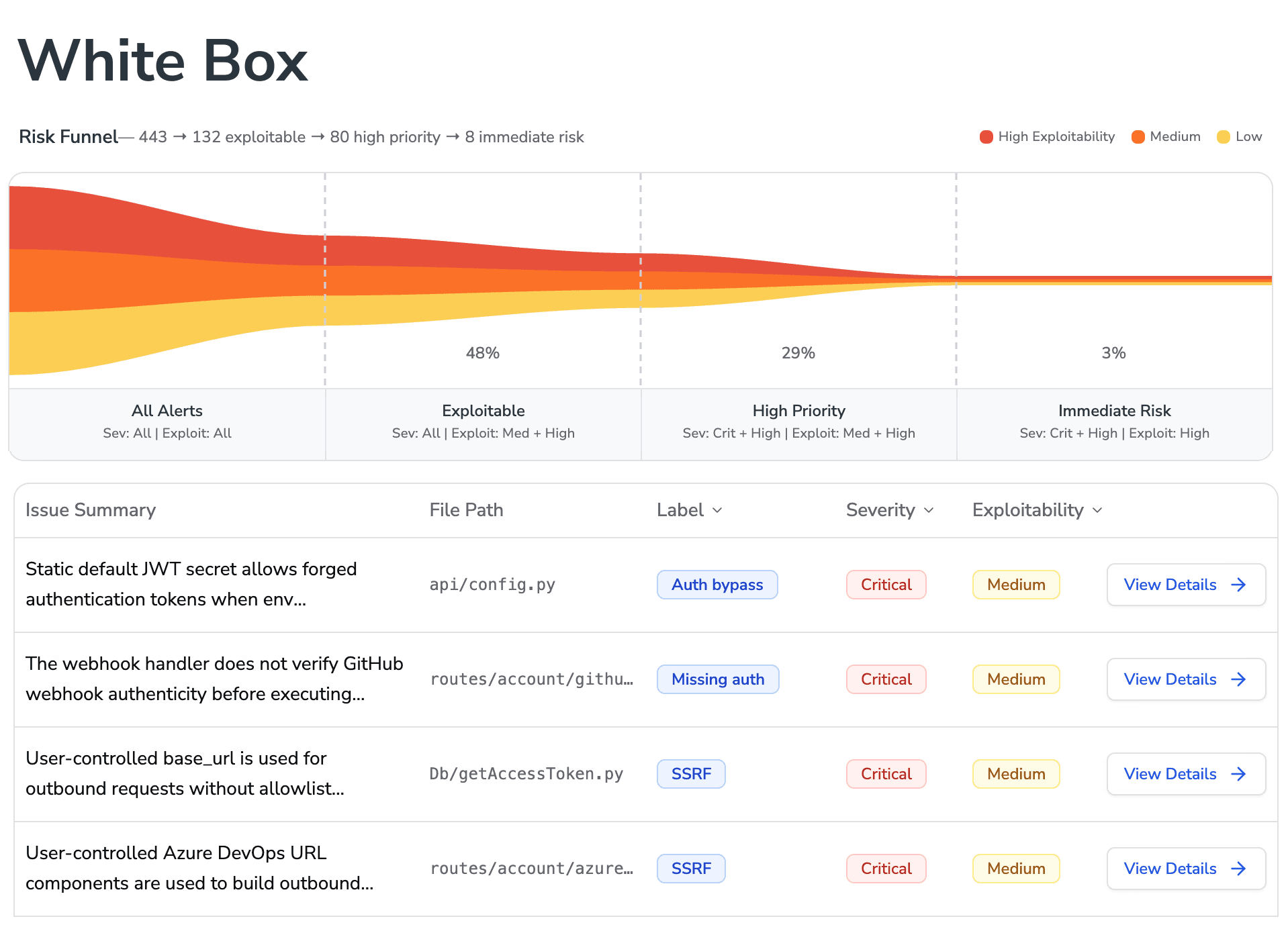

White Box Penetration Testing: The Source Code Audit

In a white box test, the tester has read-only access to the complete repository: source code, configuration files, infrastructure definitions, and version history. The system is fully transparent, hence "white box."

The threat model this simulates is often underestimated: an insider threat, a contractor with repo access, a leaked GitHub token in a CI/CD log, a public repository accidentally containing production credentials. If someone motivated obtained your source code, what would they find?

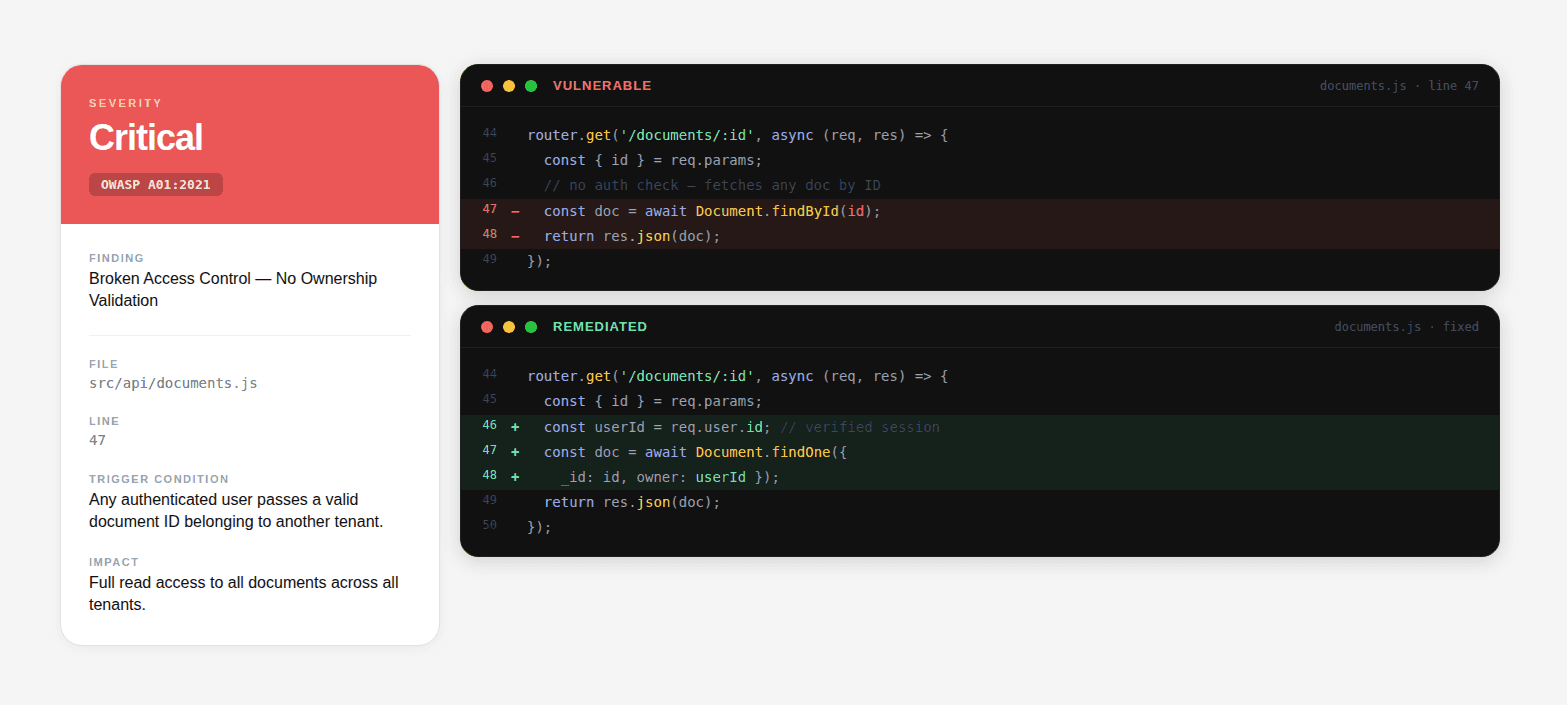

White box testing is also the only way to find vulnerabilities that are completely invisible from the outside: middleware misconfigurations, auth chain breaks, secrets in configuration files, and dataflow-level injection vulnerabilities that produce no anomalous external response.

Security Configuration Analysis

The first thing a white box engagement does is read every authentication and authorization configuration in the codebase.

Spring Security (Java):

An external scanner sees the /api/v2/admin/users endpoint responding correctly. It has no idea the response is bypassing authentication because the security filter chain was excluded for the entire /api/v2/ namespace. A white box read catches this immediately.

Express.js middleware ordering (Node.js):

The admin endpoint returns 200 OK with real data to unauthenticated requests. The external response looks normal. The vulnerability is entirely in the code.
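The ordering problem can be modeled without any web framework. The sketch below is a toy Python model of an ordered middleware chain, not Express itself; the `App` class, `require_auth`, and the `/admin/users` route are all hypothetical. It shows why a route registered before the authentication middleware is served without any check.

```python
class App:
    """Toy model of an ordered middleware/route chain (not a real framework)."""

    def __init__(self):
        self.chain = []

    def use(self, middleware):
        self.chain.append(("middleware", None, middleware))

    def get(self, path, handler):
        self.chain.append(("route", path, handler))

    def handle(self, request):
        # Entries run strictly in registration order.
        for kind, path, fn in self.chain:
            if kind == "middleware":
                rejection = fn(request)
                if rejection is not None:
                    return rejection
            elif path == request["path"]:
                return fn(request)
        return (404, "not found")


def require_auth(request):
    """Return None to continue, or a 401 response to stop the chain."""
    return None if request.get("token") == "valid" else (401, "unauthorized")


def admin_users(request):
    return (200, "user list")


vulnerable = App()
vulnerable.get("/admin/users", admin_users)  # registered BEFORE auth middleware
vulnerable.use(require_auth)

fixed = App()
fixed.use(require_auth)                      # auth runs first
fixed.get("/admin/users", admin_users)
```

In the vulnerable app the unauthenticated request gets a normal-looking 200, which is why this class of issue is hard to recognize from the outside.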

Secrets and Credential Scanning

Every configuration file in the repository is scanned:

A common finding in CI/CD pipelines:

Git history is scanned separately from the current HEAD. A credential committed and deleted is still in version control:
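One way to sketch this is to scan patch output (for example, the text produced by `git log -p --all`) for secrets on added lines, since a line added in any past commit remains retrievable even if a later commit deletes it. The function name and the single AWS-style pattern are assumptions made for illustration.

```python
import re

# Simplified, assumed pattern for an AWS access key ID.
AWS_KEY = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def secrets_in_patch_history(patch_text: str) -> list:
    """Return (commit, secret) pairs for secrets on added lines of patch output."""
    hits = []
    commit = "unknown"
    for line in patch_text.splitlines():
        if line.startswith("commit "):
            commit = line.split()[1]
        elif line.startswith("+") and not line.startswith("+++"):
            # An added line: the secret entered history in this commit.
            for match in AWS_KEY.finditer(line):
                hits.append((commit, match.group(0)))
    return hits
```

The input could be collected with `subprocess.run(["git", "log", "-p", "--all"], capture_output=True, text=True).stdout` inside the repository under review.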

Dataflow Tracing and Root Cause Analysis

For every trust boundary identified, the AI traces the data forward, all the way from the HTTP request to every place the input is used. This is how injection vulnerabilities are found with precision.

The finding in the report doesn't say "SQL injection detected." It says: app/views/products.py, line 14, search_products(), the category parameter from request.GET reaches a raw SQL query via string formatting. Payload: ' OR '1'='1' --. Effect: returns all products regardless of category and featured status. Root cause: use of Product.objects.raw() with f-string interpolation instead of parameterized query.

Remediation diff:

That's the level of specificity a white box engagement should produce. Engineers fix the right thing on the first attempt.
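To make the root cause concrete, here is a self-contained sketch that reproduces the same class of bug with Python's built-in sqlite3 module rather than Django. The table layout and function names are assumptions; only the payload and the string-formatting versus parameterized-query contrast come from the finding described above.

```python
import sqlite3


def make_db():
    """Create an in-memory products table with a few sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE products (name TEXT, category TEXT, featured INTEGER)")
    conn.executemany(
        "INSERT INTO products VALUES (?, ?, ?)",
        [("lamp", "home", 1), ("desk", "home", 0), ("phone", "tech", 1)],
    )
    return conn


def search_products_vulnerable(conn, category):
    # String formatting places attacker input directly into the SQL text.
    query = f"SELECT name FROM products WHERE category = '{category}' AND featured = 1"
    return [row[0] for row in conn.execute(query)]


def search_products_safe(conn, category):
    # Parameter binding keeps the input as data, never as SQL syntax.
    query = "SELECT name FROM products WHERE category = ? AND featured = 1"
    return [row[0] for row in conn.execute(query, (category,))]
```

With the payload `' OR '1'='1' --`, the vulnerable version returns every product because the injected condition is always true and the comment marker removes the featured filter, while the parameterized version returns nothing.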

Infrastructure and Dependency Analysis

Dependency reachability analysis goes beyond CVE matching. A vulnerable dependency never called in the application's code paths is not the same as one that processes every user file upload. The analysis determines whether the vulnerable function is actually reachable given the application's dependency usage patterns, reducing false positives and prioritizing real risk.

Gray Box Penetration Testing: The Insider Threat Simulation

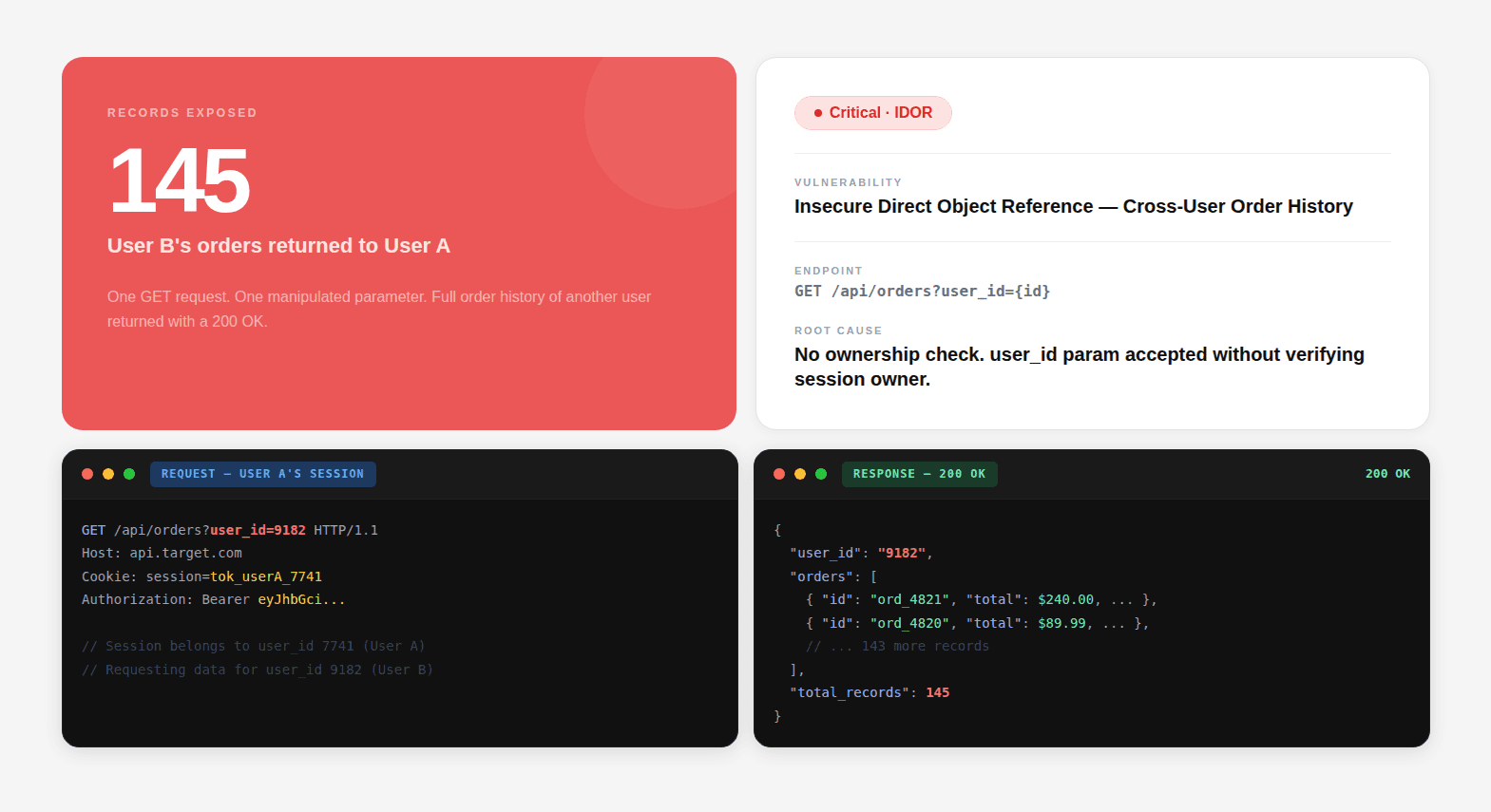

In a gray box test, the tester starts with authenticated access (test credentials for one or more user roles) and, optionally, some code context or architecture documentation. The test simulates the most operationally dangerous threat model: a legitimate user who decides to abuse their access.

This is your highest-risk threat in most SaaS applications: not an external attacker with zero knowledge, but a customer, an employee, or a contractor who already has valid credentials and is systematically exploring what they can do with them.

Access Control and Privilege Escalation

Every admin endpoint is tested with non-admin credentials:

JWT claim manipulation:
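A minimal sketch of one such check, using only the standard library: the tester rewrites the role claim in a valid HS256 token while reusing the original signature, then observes whether the server still accepts it. The helper names and the `role` claim are hypothetical; a correct verifier, like the one modeled here, rejects the forged token.

```python
import base64
import hashlib
import hmac
import json


def b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_hs256(payload: dict, secret: bytes) -> str:
    """Issue an HS256-signed token for the given claims."""
    header = b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    body = b64url(json.dumps(payload).encode())
    sig = hmac.new(secret, f"{header}.{body}".encode(), hashlib.sha256).digest()
    return f"{header}.{body}.{b64url(sig)}"


def tamper_role(token: str, role: str) -> str:
    """Rewrite the role claim while keeping the original signature."""
    header, body, sig = token.split(".")
    claims = json.loads(b64url_decode(body))
    claims["role"] = role
    return f"{header}.{b64url(json.dumps(claims).encode())}.{sig}"


def verify_hs256(token: str, secret: bytes):
    """Return the claims if the signature is valid, else None."""
    header, body, sig = token.split(".")
    # The algorithm is fixed server-side; the header's alg value is never trusted.
    expected = hmac.new(secret, f"{header}.{body}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(b64url(expected), sig):
        return None
    return json.loads(b64url_decode(body))
```

If an application accepts the output of `tamper_role`, it is reading claims without verifying the signature, which is a privilege escalation finding.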

IDOR Testing: Systematic Identifier Enumeration

Every endpoint accepting a record identifier is tested for IDOR:

Tenant isolation is verified at the data layer, not just the API layer. The test confirms that the database query itself filters by the authenticated user's tenant, not just that the API returns a 403 for obvious cross-tenant requests.
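The difference between an ID-only lookup and a tenant-scoped lookup can be sketched with an in-memory stand-in for the database. The record data, handler names, and `find_idor` helper are all hypothetical and only illustrate the enumeration logic.

```python
# In-memory stand-in for a multi-tenant records table (illustrative data).
RECORDS = {
    101: {"tenant": "acme", "body": "acme invoice"},
    102: {"tenant": "globex", "body": "globex invoice"},
}


def get_record_vulnerable(user, record_id):
    # Looks up by ID only: any authenticated user can read any record.
    record = RECORDS.get(record_id)
    return (200, record["body"]) if record else (404, None)


def get_record_scoped(user, record_id):
    # Tenant filter is part of the lookup, like a WHERE tenant = ? clause.
    record = RECORDS.get(record_id)
    if record is None or record["tenant"] != user["tenant"]:
        return (404, None)
    return (200, record["body"])


def find_idor(handler, user, candidate_ids):
    """Return IDs the user could read although they belong to another tenant."""
    leaked = []
    for record_id in candidate_ids:
        status, _ = handler(user, record_id)
        if status == 200 and RECORDS[record_id]["tenant"] != user["tenant"]:
            leaked.append(record_id)
    return leaked
```

Running `find_idor` with a user from one tenant across a range of identifiers shows which handler leaks another tenant's records.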

Business Logic Testing

This is the category where gray box testing produces findings that no other methodology reaches:

| Business Logic Test | What's Being Checked |
|---|---|
| Price manipulation | Can the total be modified in the request before payment confirmation? |
| Discount code reuse | Can a single-use code be replayed by intercepting and resending the validation request? |
| Workflow bypass | Can step 5 ( |
| Subscription tier abuse | Can a free-tier user call a premium endpoint directly via API? |
| Rate limit evasion | Can rate limits be bypassed by rotating user IDs, IP headers, or request parameters? |
| Quantity manipulation | In an e-commerce flow, can negative quantities be used to reduce total price? |
| Concurrent request exploitation | Can two simultaneous requests exploit a race condition in inventory or balance checks? |

None of these produce anomalous HTTP response patterns. None of them match known CVE signatures. They require understanding what the application is supposed to do, and then methodically testing whether it actually enforces that intent at every entry point.
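The quantity manipulation case is a good example of why these flaws leave no technical signature. In the sketch below (prices, SKUs, and function names are made up for illustration), the vulnerable total is computed without error and simply trusts a negative quantity, while the validated version enforces the intended rule.

```python
from decimal import Decimal

# Server-side price list (illustrative values).
PRICES = {"sku-1": Decimal("20.00"), "sku-2": Decimal("5.00")}


def cart_total_vulnerable(items):
    # Trusts client-supplied quantities, so a negative line reduces the total.
    return sum((PRICES[sku] * qty for sku, qty in items), Decimal("0"))


def cart_total_validated(items):
    # Enforces the business rule: every quantity is a positive integer.
    total = Decimal("0")
    for sku, qty in items:
        if not isinstance(qty, int) or qty < 1:
            raise ValueError(f"invalid quantity for {sku}: {qty}")
        total += PRICES[sku] * qty
    return total
```

A cart of one sku-1 and minus three sku-2 costs 5.00 in the vulnerable version instead of being rejected.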

Black Box vs White Box vs Gray Box Penetration Testing: Full Comparison

The three test types are not interchangeable, and they are not additive in a simple sense either. Each one has a structural blind spot that only the other two can close. Before picking one, here is how they differ across the dimensions that matter:

| | Black Box | White Box | Gray Box |
|---|---|---|---|
| Attacker simulated | External stranger with no prior access and no inside knowledge | Insider, contractor, or someone who obtained your source code | Legitimate user with valid credentials who decides to abuse access |
| Starting knowledge | Domain name only | Full repo, source code, config files, Git history | Test credentials for one or more user roles |
| What it finds | Exposed infrastructure, unauthenticated endpoints, cloud misconfigs, JS bundle secrets, open ports, CORS failures | Middleware auth bypasses, dataflow injection, secrets in config + Git history, insecure dependency usage, Dockerfile misconfigs | IDOR, broken access control, JWT manipulation, privilege escalation, business logic flaws, race conditions |
| What it misses | Anything invisible from outside: middleware bypasses, business logic, code-level auth failures, internal services | Runtime-only behaviors, misconfigs that only manifest in production, authenticated flow abuse | External surface exposure, unauthenticated endpoints, cloud asset misconfiguration, code-level vulnerabilities |
| Typical duration | 3–5 days | 5–10 days (scales with codebase size) | 3–5 days (scales with role complexity) |
| SOC 2 controls | CC6.6 (external threat protection) | CC6.1, CC6.3 (access control at code level) | CC6.1, CC6.3, CC6.6 (authenticated access control) |
| Blind spot closed by | White box + gray box | Black box + gray box | Black box + white box |

The last row is the most important one. Every test type has a blind spot that only the other two close. The only way to eliminate all three simultaneously is to run all three as a single integrated engagement, where reconnaissance from black box informs what gray box targets, code intelligence from white box informs what exploit chains are possible, and findings from each track cross-reference against the others.

Choosing the Right Penetration Test for Your Situation

The right answer depends on your threat model, your current security maturity, and what question you need answered most urgently.

| Your Situation | Recommended Approach | Rationale |
|---|---|---|
| First pentest, no security baseline | Full Assessment (all three) | Don't pick one angle; understand the full picture first |
| Pre-launch, shipping customer data | Gray Box + White Box | Business logic and code-level auth issues are highest priority at launch |
| SOC 2 / PCI-DSS audit incoming | Full Assessment | Auditors want external surface, code review, and authenticated testing covered |
| Recent codebase change, regression check | White Box | Fastest way to confirm new code didn't introduce auth or injection issues |
| Ongoing continuous security validation | Continuous (monthly) | Attack surface changes continuously; testing should too |
| "We've been pentested before, want deeper" | White Box | Most prior engagements are black box; code level is likely untested |
| Acquired a company, assessing their security | Full Assessment | Unknown codebase, unknown history, unknown risk: cover all angles |
| You ship code weekly and need continuous coverage | Continuous Unified Assessment | Attack surface changes every sprint; offensive testing needs the same code intelligence your defensive review already has |

The full assessment (black box, white box, and gray box run as a single engagement with a unified report) is the right starting point for most teams. Each methodology surfaces a different class of vulnerability; running only one gives you a partial picture and the false confidence of a clean report that didn't actually look where the vulnerabilities are.

For pre-launch products handling customer data, gray box combined with white box is the highest-priority pairing. Business logic flaws and code-level authentication issues are what ship to production in a first release. The external attack surface can be addressed continuously once the application is live.

If your organization has been tested before, especially if those were traditional black box engagements, white box is likely the highest-value next investment. Most prior engagements never looked at the code. That's where the deepest vulnerabilities live.


Conclusion

Black box, white box, and gray box penetration testing are not tiers of the same thing; they are three distinct methodologies that simulate three distinct attacker profiles and find three distinct categories of vulnerability. Treating them as interchangeable, or assuming any single one covers your full risk, is how organizations end up with a clean pentest report and a compromised production database.

The practical framework is straightforward. If you've never been tested, run all three. If you're pre-launch, prioritize white box and gray box, because business logic and auth issues are what ship in a first release. If you've only had black box tests before, white box is likely your highest-value next investment because your code has almost certainly never been examined. And if your attack surface changes every sprint, your testing cadence should match it.

A penetration test is only as valuable as its threat model is accurate. Match the test type to the attacker you're actually worried about, and the results will reflect your real risk rather than what happens to be visible from the outside. The teams that close the most risk are the ones that stopped choosing between defensive and offensive, and started running both on the same platform, where the code intelligence from one continuously sharpens the other.
