Search "automated penetration testing services" and you'll find two kinds of results.

The first is marketing pages for scanner platforms: tools that run known vulnerability signatures against your infrastructure, produce a report full of CVE numbers, and call it a pentest.

The second is a handful of genuinely different products: agentic AI platforms that approach your attack surface the way a sophisticated adversary would, combining external reconnaissance with source code intelligence to find what no external scan can see.

The gap between these two categories is not a matter of price or brand reputation. It is a fundamental difference in what gets found, and more importantly, what gets missed. A scanner that runs 50,000 signature checks and misses the authentication bypass buried in your Express.js middleware ordering, the hardcoded Stripe key in your JavaScript bundle, and the IDOR across your entire customer record dataset has not assessed your security. It has documented what it already knew to look for.

This guide covers what automated penetration testing actually does at a technical level, what separates a real automated assessment from a scanner run, and why the most effective platforms in 2026 are the ones that stopped treating offensive testing and defensive code analysis as separate problems.

What Automated Penetration Testing Actually Means

The term "automated penetration testing" covers three fundamentally different categories of product, and understanding which category you're evaluating is the prerequisite for any useful comparison.

Category 1: Automated vulnerability scanners

Tools like Nessus, Qualys, and basic web scanners. They run known CVE signatures against discovered hosts and return a list of potential findings. Fast, cheap, continuous. They do not confirm exploitation: every finding is a hypothesis based on version detection or signature matching. For compliance purposes, they do not satisfy the "exploitable vulnerabilities" language auditors use, because nothing is actually exploited.

Category 2: Continuous attack surface management platforms

Tools like Intruder and Pentera. They go beyond simple signature scanning to provide ongoing external surface monitoring, CVE-to-infrastructure correlation, and some level of active testing. Better than pure scanners, but still fundamentally external-only. No source code access, no white box capability, no business logic testing. They find what is visible from the outside, and nothing else.

Category 3: Agentic AI penetration testing platforms

The newest and most technically differentiated category. Platforms in this category, such as CodeAnt AI, use AI reasoning to approach your attack surface the way a skilled adversary with persistent access would: mapping what is unknown, following what reconnaissance reveals, constructing exploit chains from combinations of findings, and tracing vulnerabilities through source code to root cause. The output is confirmed, exploited, documented findings, not hypotheses.

Most vendor marketing conflates all three. The way to cut through it is to ask one question: does the platform confirm exploitation with working proof-of-concept before reporting a finding, or does it report potential vulnerabilities based on signatures? The answer separates categories 1 and 2 from category 3.

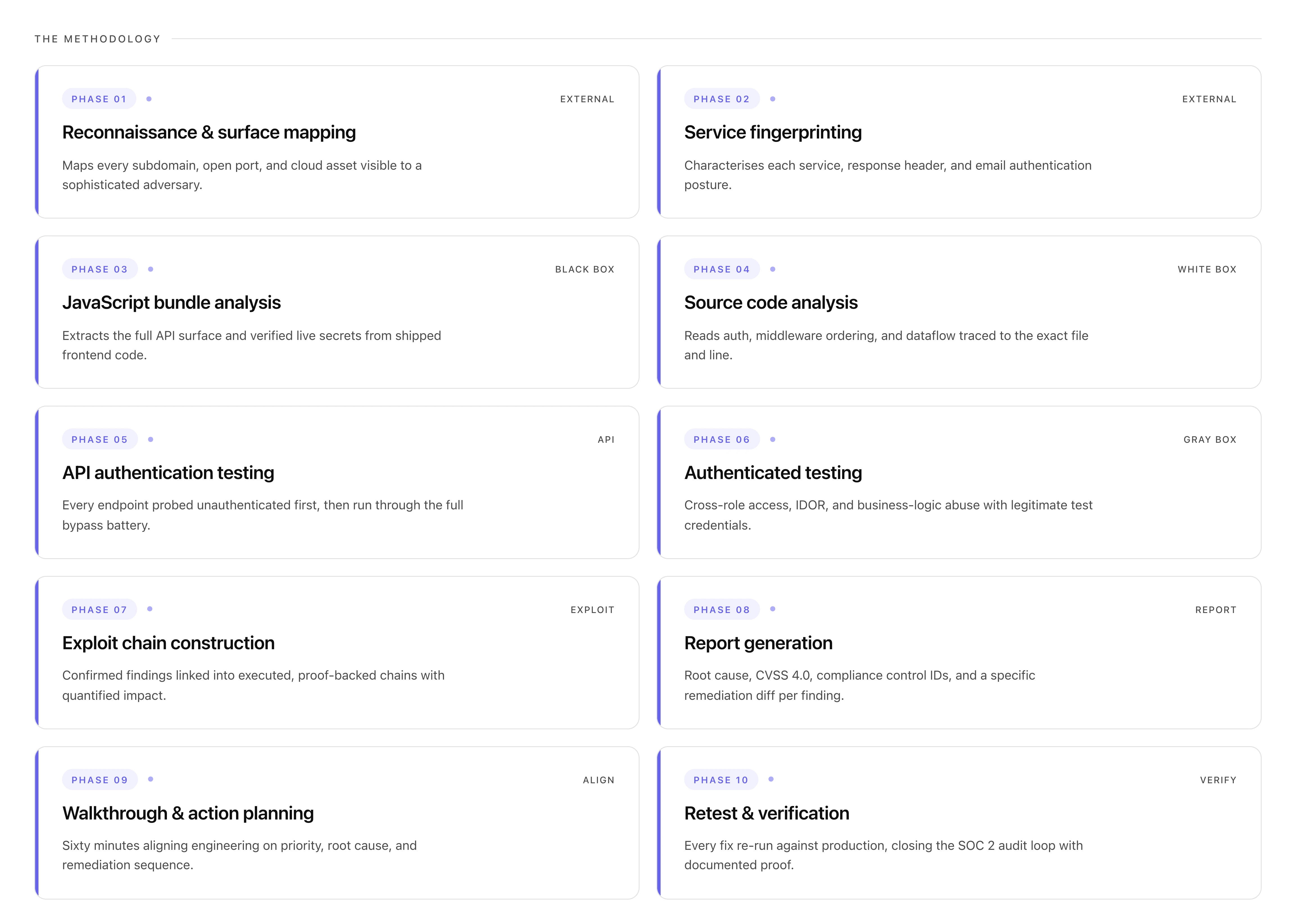

The 10-Phase Methodology of a Real Automated Penetration Test

This is what actually happens inside a genuine automated penetration testing engagement, not a scanner run. Every phase feeds the next. The output of reconnaissance shapes what authentication testing targets. The output of source code analysis shapes what exploit chains are possible. Nothing is a standalone checkbox.

Phase 1: Reconnaissance and external surface mapping

Before a single vulnerability is probed, the platform builds the most complete possible map of everything visible from the outside. This is what a sophisticated adversary does before attempting any exploitation, and thoroughness here determines what gets tested in every subsequent phase.

DNS brute-forcing runs across 150+ subdomain prefix patterns: not just www, api, and mail, but dev, staging, jenkins, grafana, internal, uat, admin, portal, kibana, elastic, and many more. Every discovered subdomain becomes a target.
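A minimal sketch of the brute-forcing step, assuming only Python's standard-library resolver. The prefix list is an illustrative subset, and the wildcard check (resolving a random label and discarding candidates that return the same addresses) is an assumption about how a tool might avoid false positives from wildcard DNS, not a description of any specific product.

```python
import secrets
import socket

# Illustrative subset of prefixes; a real wordlist would be much longer.
PREFIXES = ["www", "api", "mail", "dev", "staging", "jenkins", "grafana",
            "internal", "uat", "admin", "portal", "kibana", "elastic"]


def candidate_hosts(domain: str, prefixes: list[str]) -> list[str]:
    """Build fully qualified candidates such as dev.example.com."""
    domain = domain.strip(".").lower()
    return [f"{p.strip('.').lower()}.{domain}" for p in prefixes]


def resolve(host: str) -> set[str]:
    """Return the addresses a name resolves to, or an empty set if it does not resolve."""
    try:
        infos = socket.getaddrinfo(host, None)
    except (socket.gaierror, UnicodeError):
        return set()
    return {info[4][0] for info in infos}


def brute_force(domain: str, prefixes: list[str]) -> dict[str, set[str]]:
    """Resolve every candidate, skipping answers that match a wildcard DNS record."""
    wildcard = resolve(f"{secrets.token_hex(8)}.{domain}")  # only a wildcard answers a random label
    found = {}
    for host in candidate_hosts(domain, prefixes):
        addrs = resolve(host)
        if addrs and addrs != wildcard:
            found[host] = addrs
    return found
```

Calling `brute_force("example.com", PREFIXES)` performs live DNS lookups; every name it returns becomes an input to the later phases.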

Certificate Transparency log queries surface subdomains that DNS brute-forcing misses, because certificates issued by publicly trusted certificate authorities for any subdomain of your domain are publicly logged. Subdomains that were removed from DNS but still have a running server appear here. These are consistently high-value targets: the code is old, the security patches are behind, and no one is watching them.
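One way to query Certificate Transparency data is through a public aggregator. The sketch below assumes crt.sh's JSON output format (a list of objects whose `name_value` field holds newline-separated certificate names); that is a third-party interface and may change. Parsing is kept separate from the network call so it can be reused with any CT source.

```python
import json
import urllib.parse
import urllib.request


def fetch_ct_entries(domain: str, timeout: float = 30.0) -> list[dict]:
    """Query crt.sh for certificates matching %.domain (network call)."""
    query = urllib.parse.urlencode({"q": f"%.{domain}", "output": "json"})
    with urllib.request.urlopen(f"https://crt.sh/?{query}", timeout=timeout) as resp:
        return json.load(resp)


def names_from_entries(entries: list[dict], domain: str) -> set[str]:
    """Collect unique hostnames at or under `domain` from CT entries."""
    domain = domain.lower()
    names = set()
    for entry in entries:
        # One certificate can carry several names, separated by newlines.
        for name in entry.get("name_value", "").splitlines():
            name = name.strip().lower().removeprefix("*.")
            if name == domain or name.endswith("." + domain):
                names.add(name)
    return names
```

Comparing this set with the brute-force results highlights the names that DNS enumeration alone missed.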

Full TCP port scanning runs across every discovered host, not just ports 80 and 443. An exposed Redis instance on port 6379, an Elasticsearch cluster on 9200, a Node.js debug inspector on 9229, or a Grafana dashboard on 3000 will appear in the port scan, and each can represent a critical unauthenticated access finding that many teams have no visibility into.
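A TCP connect scan can be sketched with the standard library alone. The port-to-service map covers only the examples named above and is an assumption used for labeling; an open port shows that something is listening, not that it is unauthenticated, which a follow-up request has to confirm.

```python
import socket
from concurrent.futures import ThreadPoolExecutor

# Services named in the text; scanning range(1, 65536) covers every TCP port.
RISKY_PORTS = {6379: "Redis", 9200: "Elasticsearch", 9229: "Node.js inspector", 3000: "Grafana"}


def is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """True if a full TCP handshake to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


def scan(host: str, ports, timeout: float = 1.0, workers: int = 200) -> list[int]:
    """Return the open ports on `host`, checking many ports in parallel."""
    ports = list(ports)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(lambda p: is_open(host, p, timeout), ports)
    return [p for p, ok in zip(ports, results) if ok]


def label(open_ports: list[int]) -> dict[int, str]:
    """Attach a likely service name to each open port."""
    return {p: RISKY_PORTS.get(p, "unknown service") for p in open_ports}
```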

Cloud asset enumeration tests naming patterns for S3 buckets, Azure Blob containers, and GCP Cloud Storage buckets across every combination of your company name, product names, and environment identifiers. A publicly readable S3 bucket containing customer exports is one of the most consistently found critical findings in black box engagements.
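The name-permutation step might look like the sketch below. It covers only Amazon S3, uses a simplified version of S3's bucket naming rules, and assumes the common reading of anonymous responses: 200 means the bucket can be listed, 403 means it exists but denies anonymous access, and 404 means there is no such bucket. Azure and GCP checks would follow the same pattern against their own endpoints.

```python
import itertools
import re
import urllib.error
import urllib.request

# Simplified S3 rule: 3-63 chars of lowercase letters, digits, dots, and hyphens,
# starting and ending with a letter or digit.
BUCKET_RE = re.compile(r"^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$")


def bucket_candidates(names: list[str], envs: list[str], separators=("-", "")) -> list[str]:
    """Combine company/product names with environment identifiers in both orders."""
    out = set()
    for name, env, sep in itertools.product(names, envs, separators):
        n, e = name.lower(), env.lower()
        for candidate in (n, f"{n}{sep}{e}", f"{e}{sep}{n}"):
            if BUCKET_RE.match(candidate):
                out.add(candidate)
    return sorted(out)


def s3_anonymous_status(bucket: str, timeout: float = 10.0) -> int:
    """Status of an unauthenticated GET on the bucket's virtual-hosted URL (network call)."""
    try:
        with urllib.request.urlopen(f"https://{bucket}.s3.amazonaws.com/", timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code
```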

Phase 2: Service fingerprinting and configuration analysis

Every discovered service is characterized before vulnerability testing begins. HTTP response headers reveal the technology stack: X-Powered-By: Express, JSESSIONID cookies from Java servlet containers, and X-Application-Context headers that expose internal ports. This matters because different frameworks have different vulnerability patterns and different configuration locations.

Security header analysis runs across every domain: missing Strict-Transport-Security, absent Content-Security-Policy, misconfigured X-Frame-Options. Email authentication checks verify SPF, DKIM, and DMARC records. A domain with p=none in DMARC does not ask receiving servers to quarantine or reject mail that fails authentication, which lets an adversary send convincing phishing email from any @yourdomain.com address to your own users.
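Both checks reduce to simple parsing once the data has been fetched. This sketch assumes the response headers and the TXT record published at `_dmarc.<domain>` have already been retrieved (the DNS lookup is not shown). It treats any policy other than `quarantine` or `reject` as weak and checks header presence only; a fuller audit would also validate header values.

```python
from typing import Optional

REQUIRED_HEADERS = ("strict-transport-security", "content-security-policy", "x-frame-options")


def missing_security_headers(headers: dict[str, str]) -> list[str]:
    """Required security headers absent from a response (names compared case-insensitively)."""
    present = {name.lower() for name in headers}
    return [h for h in REQUIRED_HEADERS if h not in present]


def parse_dmarc(record: str) -> dict[str, str]:
    """Split a record such as 'v=DMARC1; p=none; rua=mailto:...' into its tags."""
    tags = {}
    for part in record.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    return tags


def dmarc_is_weak(record: Optional[str]) -> bool:
    """True if DMARC is missing, malformed, or does not quarantine/reject failing mail."""
    if not record:
        return True
    tags = parse_dmarc(record)
    if tags.get("v", "").upper() != "DMARC1":
        return True
    return tags.get("p", "").lower() not in ("quarantine", "reject")
```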

Phase 3: JavaScript bundle analysis

This is the step that most traditional penetration testing approaches skip entirely and where modern SaaS applications consistently leak their most sensitive internal information.

Every JavaScript file served by the application is downloaded and analyzed. A typical React or Vue SPA ships 5–15 MB of minified compiled JavaScript, the assembled output of hundreds of source files. Inside that code is a complete map of the application's API surface, every endpoint the frontend calls, every internal service it references, and, consistently, secrets that were never meant to be there.

The analysis runs 30+ secret detection patterns: AWS access keys, Stripe live keys, GitHub tokens, JWT secrets, database connection strings, Sentry DSNs, Google API keys, Twilio credentials, SendGrid keys. Every hit is verified against the live API before being reported: a Stripe key is tested against the Stripe API to confirm whether it is active and what permissions it grants. A finding is reported only if the secret is confirmed valid.
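A pared-down version of the detection step is sketched below. The four patterns reflect publicly documented key prefixes (AKIA/ASIA for AWS access key IDs, sk_live_ for Stripe live secret keys, ghp_ for classic GitHub personal access tokens, AIza for Google API keys); the exact lengths are assumptions, and a production rule set would be far larger. The Stripe check uses a read-only request to the balance endpoint, which is one reasonable verification call rather than the only one.

```python
import re
import urllib.error
import urllib.request

SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    "stripe_live_secret_key": re.compile(r"\bsk_live_[0-9A-Za-z]{24,}\b"),
    "github_classic_pat": re.compile(r"\bghp_[0-9A-Za-z]{36}\b"),
    "google_api_key": re.compile(r"\bAIza[0-9A-Za-z_-]{35}"),
}
# Quoted string literals that look like API routes, e.g. fetch("/api/v2/users").
ENDPOINT_RE = re.compile(r"""["'`](/api/[A-Za-z0-9_\-/{}.:]*)["'`]""")


def find_secrets(bundle: str) -> list[tuple[str, str]]:
    """(pattern name, matched text) for every candidate secret in a JS bundle."""
    return [(kind, m.group(0))
            for kind, pattern in SECRET_PATTERNS.items()
            for m in pattern.finditer(bundle)]


def find_endpoints(bundle: str) -> set[str]:
    """API routes referenced as string literals in the bundle."""
    return set(ENDPOINT_RE.findall(bundle))


def stripe_key_status(key: str, timeout: float = 10.0) -> int:
    """Status of a read-only Stripe call: 200 means the key is active,
    401 means it is invalid or revoked (network call)."""
    req = urllib.request.Request("https://api.stripe.com/v1/balance",
                                 headers={"Authorization": f"Bearer {key}"})
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code
```

Only hits whose verification call succeeds would be reported; everything else stays a candidate.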

Staging vs. production bundle comparison surfaces endpoints removed from the production frontend that are still deployed on the production API server. These forgotten endpoints were removed from the UI intentionally, which means there was a reason, but the API route was never decommissioned.
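The comparison itself is a set difference over extracted routes, followed by a probe against production. The sketch below reuses a simple quoted-`/api/` pattern and takes a hypothetical `status_of` callable in place of a real HTTP client, so it runs without network access; treating any response other than 404 as "still deployed" is a simplifying assumption.

```python
import re
from typing import Callable

ENDPOINT_RE = re.compile(r"""["'`](/api/[A-Za-z0-9_\-/{}.:]*)["'`]""")


def endpoints(bundle: str) -> set[str]:
    """API routes referenced as string literals in a JS bundle."""
    return set(ENDPOINT_RE.findall(bundle))


def forgotten_endpoints(staging_bundle: str, production_bundle: str,
                        status_of: Callable[[str], int]) -> list[str]:
    """Routes the staging frontend calls and the production frontend no longer does,
    but which the production API still serves (any status other than 404)."""
    removed = endpoints(staging_bundle) - endpoints(production_bundle)
    return sorted(path for path in removed if status_of(path) != 404)
```

A route that answers 401 still counts here, because it exists and becomes a target for the authentication bypass testing in Phase 5.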

Phase 4: Source code analysis (white box track)

This is where the platform's code intelligence, the same capability that powers defensive code review, becomes a direct offensive asset.

With read-only repository access, the platform reads every authentication and authorization configuration end-to-end. Spring Security filter chains, Express.js middleware ordering, Django permission classes, Rails before_action declarations. The single most impactful finding class in white box testing is the middleware ordering vulnerability: admin routes registered before authentication middleware is applied globally, meaning they are publicly accessible despite appearing protected. This produces a normal HTTP response to unauthenticated requests. No external scanner sees it. Reading the code surfaces it immediately.
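The ordering flaw is easy to overlook because both versions of the code contain the same middleware and the same route. The sketch below is a toy Python model of Express-style dispatch, not Express itself: layers run in registration order, and the first layer that produces a response ends the chain. That mirrors how an Express route handler that sends a response without calling `next()` keeps any later `app.use()` middleware from running for that request.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Request:
    path: str
    user: Optional[str] = None


# A layer returns a (status, body) response, or None to pass control to the next layer.
Handler = Callable[[Request], Optional[tuple[int, str]]]


@dataclass
class App:
    """Toy model of Express-style dispatch: layers run strictly in registration order."""
    stack: list[tuple[Optional[str], Handler]] = field(default_factory=list)

    def use(self, middleware: Handler) -> None:          # like app.use(fn): every path
        self.stack.append((None, middleware))

    def get(self, path: str, handler: Handler) -> None:  # like app.get(path, fn)
        self.stack.append((path, handler))

    def handle(self, req: Request) -> tuple[int, str]:
        for path, layer in self.stack:
            if path is not None and path != req.path:
                continue
            response = layer(req)
            if response is not None:  # the first layer to respond ends the chain
                return response
        return (404, "Not Found")


def require_auth(req: Request) -> Optional[tuple[int, str]]:
    return None if req.user else (401, "Unauthorized")


def list_users(req: Request) -> tuple[int, str]:
    return (200, "[all users]")


vulnerable = App()
vulnerable.get("/admin/users", list_users)  # registered BEFORE the auth middleware
vulnerable.use(require_auth)

fixed = App()
fixed.use(require_auth)                     # auth applied first
fixed.get("/admin/users", list_users)
```

An unauthenticated request to `/admin/users` gets a normal 200 from `vulnerable` and a 401 from `fixed`, which is why the difference shows up in the code and not in any signature.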

Dataflow tracing follows every user-controlled input from HTTP entry to every dangerous sink: raw SQL queries, shell commands, file operations, template rendering, HTTP requests with user-controlled URLs. The finding is not "SQL injection detected"; it is the exact file, line, function, parameter, working payload, and a remediation diff. Engineers fix the right thing on the first attempt.
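The difference between a vague alert and an actionable finding is easiest to see on a concrete sink. This self-contained sketch uses Python's built-in `sqlite3` module and a hypothetical `orders` table: the first function is the vulnerable pattern (user input formatted into SQL text), `PAYLOAD` shows why it is exploitable, and the second function is the kind of change a remediation diff would carry (a bound parameter).

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, owner TEXT, total REAL)")
    conn.executemany("INSERT INTO orders (id, owner, total) VALUES (?, ?, ?)",
                     [(1, "alice", 10.0), (2, "bob", 99.0)])
    return conn


def order_ids_vulnerable(conn: sqlite3.Connection, owner: str) -> list[tuple]:
    # Sink: the request parameter `owner` is formatted straight into the SQL text.
    sql = f"SELECT id FROM orders WHERE owner = '{owner}' ORDER BY id"
    return conn.execute(sql).fetchall()


def order_ids_fixed(conn: sqlite3.Connection, owner: str) -> list[tuple]:
    # Remediation: a bound parameter keeps the input as data, never as SQL syntax.
    return conn.execute("SELECT id FROM orders WHERE owner = ? ORDER BY id", (owner,)).fetchall()


# Turns the WHERE clause into: owner = 'x' OR '1'='1'
PAYLOAD = "x' OR '1'='1"
```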

Git history scanning covers every branch, every commit, every deleted file. A credential committed and removed in a subsequent commit is still in version control and retrievable by anyone with repository access. Every discovered historical secret is verified for current validity.
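A history scan can be sketched as "dump every patch, then scan added and removed lines." This sketch assumes the `git` CLI is installed and uses a deliberately tiny pattern set. `git log --all` walks every branch and tag, but not unreachable objects (for example, commits left behind by a force-push), which need separate handling.

```python
import re
import subprocess

# Tiny illustrative pattern set: AWS key IDs, Stripe live keys, classic GitHub PATs.
SECRET_RE = re.compile(r"(?:AKIA[0-9A-Z]{16}|sk_live_[0-9A-Za-z]{24,}|ghp_[0-9A-Za-z]{36})")


def history_patch(repo_path: str) -> str:
    """Patch text of every commit reachable from any ref (requires the git CLI)."""
    return subprocess.run(
        ["git", "-C", repo_path, "log", "--all", "-p", "--no-color", "--format=commit %H"],
        check=True, capture_output=True, encoding="utf-8", errors="replace",
    ).stdout


def secrets_in_history(patch: str) -> list[tuple[str, str]]:
    """(commit, secret) pairs for secrets on any added or removed line."""
    hits, commit = [], None
    for line in patch.splitlines():
        if line.startswith("commit "):
            commit = line.split()[1]
        elif line.startswith(("+", "-")) and not line.startswith(("+++", "---")):
            # A deleted secret still shows up on the "-" line of the commit that removed it.
            hits.extend((commit, m.group(0)) for m in SECRET_RE.finditer(line))
    return hits
```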

Phase 5: API authentication testing

Every endpoint discovered across all prior phases, from documentation, JS bundle analysis, GraphQL introspection, Swagger exposure, CT log reconnaissance, is tested unauthenticated first. The response classification determines what happens next.

Every endpoint returning anything other than a clean 401 gets subjected to the full battery of authentication bypass patterns: JWT alg:none attacks, empty bearer tokens, expired token replay, path traversal variants (/api/admin/users/../users, /API/admin/users, /api/admin/users%20), header manipulation bypasses (X-Original-URL, X-Forwarded-Host), and CORS misconfiguration testing across seven attacker-controlled origin patterns.
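Two of the bypass patterns above can be generated mechanically. The sketch builds an unsigned `alg: none` JWT (header and payload base64url-encoded without padding, followed by an empty signature segment) and the path variants listed in the text. The claim names in the usage are hypothetical, and many HTTP clients normalize `/../` before sending, so the raw path has to be preserved for that variant to be meaningful.

```python
import base64
import json


def b64url(data: bytes) -> str:
    """Base64url without padding, as JWTs require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def alg_none_token(claims: dict) -> str:
    """Unsigned JWT with alg 'none'; a correctly configured server must reject it."""
    header = {"alg": "none", "typ": "JWT"}
    encoded = [b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
               for part in (header, claims)]
    return ".".join(encoded) + "."  # empty signature segment


def path_variants(path: str) -> list[str]:
    """Normalization variants such as /api/admin/users/../users and /API/admin/users."""
    last = path.rstrip("/").rsplit("/", 1)[-1]
    first, _, rest = path.lstrip("/").partition("/")
    return [
        f"{path}/../{last}",         # traversal that resolves back to the same route
        f"/{first.upper()}/{rest}",  # case change on the first segment
        f"{path}%20",                # trailing encoded space
        f"{path}/",                  # trailing slash
    ]
```

For example, `alg_none_token({"sub": "1001", "role": "admin"})` produces a token to replay against every endpoint that did not return a clean 401.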

Exposed API documentation and management endpoints are both a finding and a map. A publicly accessible /actuator/env endpoint in a Spring Boot application can return environment variables such as DATABASE_URL, STRIPE_SECRET_KEY, and JWT_SECRET, depending on the Spring Boot version and how value sanitization is configured. A publicly accessible /actuator/heapdump is worse: a binary JVM heap dump that can be parsed to extract active credentials stored as string objects in memory.

Phase 6: Authenticated testing (gray box track)

With test credentials established during scoping, the gray box track tests what legitimate users can access beyond their intended permissions. For most SaaS applications this is the highest-risk threat model: not an external adversary with zero access, but a customer or employee who already has valid credentials.

Every admin endpoint is tested with standard user credentials. Every pro-tier endpoint is tested with free-tier credentials. This catches the most common broken access control pattern in production SaaS: role enforcement that exists in the frontend UI but was never implemented at the API level.
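The cross-role check is essentially a matrix: every endpoint against every role below the one it should require. In this sketch, the role ordering, the endpoint-to-role mapping, and the `send` callable (a stand-in for an authenticated HTTP client) are all hypothetical; any 2xx returned to an insufficiently privileged role is reported.

```python
from typing import Callable

# (role, endpoint) -> HTTP status code; stands in for an authenticated HTTP client.
Send = Callable[[str, str], int]


def privilege_escalations(send: Send, required: dict[str, str],
                          roles: list[str]) -> list[tuple[str, str, int]]:
    """Return (role, endpoint, status) for every 2xx served to a role below the
    endpoint's required role. `roles` is ordered from least to most privileged."""
    rank = {role: i for i, role in enumerate(roles)}
    findings = []
    for endpoint, needed in required.items():
        for role in roles[:rank[needed]]:  # only roles that should be refused
            status = send(role, endpoint)
            if 200 <= status < 300:
                findings.append((role, endpoint, status))
    return findings


# Hypothetical API: the admin check exists server-side, but the pro-tier check lives only in the UI.
def fake_api(role: str, endpoint: str) -> int:
    if endpoint == "/api/admin/users":
        return 200 if role == "admin" else 403
    return 200
```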

IDOR testing runs systematically across every identifier-accepting endpoint: sequential IDs, UUIDs, slugs, compound identifiers. Authenticated as user ID 1001, the test requests records for user IDs 1000, 1002, and a range beyond. The test confirms whether the database query filters by the authenticated user's identity or merely by the ID passed in the request.
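The IDOR check described above (authenticate as one user, request neighboring identifiers, compare ownership) is sketched below for sequential integer IDs. The `fetch` callables and the one-record-per-user data model are hypothetical stand-ins for a real API; UUIDs and slugs would need candidate IDs harvested from other responses rather than arithmetic.

```python
from typing import Callable, Optional

# (session_user_id, requested_id) -> (HTTP status, owner id of the returned record or None)
Fetch = Callable[[int, int], tuple[int, Optional[int]]]


def idor_probe(fetch: Fetch, session_user: int, spread: int = 2) -> list[int]:
    """IDs near the session user's own that are returned even though someone else owns them."""
    leaked = []
    for target in range(session_user - spread, session_user + spread + 1):
        if target == session_user:
            continue
        status, owner = fetch(session_user, target)
        if status == 200 and owner != session_user:
            leaked.append(target)
    return leaked


RECORDS = {1000: 1000, 1001: 1001, 1002: 1002, 1003: 1003}  # record id -> owner id


def vulnerable_fetch(user: int, record_id: int) -> tuple[int, Optional[int]]:
    # Filters by the requested ID only.
    owner = RECORDS.get(record_id)
    return (200, owner) if owner is not None else (404, None)


def fixed_fetch(user: int, record_id: int) -> tuple[int, Optional[int]]:
    # Filters by the requested ID AND the authenticated user's identity.
    owner = RECORDS.get(record_id)
    return (200, owner) if owner == user else (404, None)
```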

Business logic testing walks every critical workflow adversarially: can the checkout confirmation endpoint be called without completing the payment step? Can a discount code be replayed by intercepting and resending the validation request? Can two concurrent requests exploit a race condition in an inventory or balance check? These do not produce anomalous HTTP responses and match no CVE signature. They require understanding what the application is designed to do, and verifying whether it actually enforces that intent at every API entry point.
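Race conditions are the least intuitive case, so here is an in-process model of the check-then-act flaw behind them. The account, the amounts, and the use of `threading.Barrier` are assumptions for illustration: the barrier deterministically forces the interleaving that a burst of concurrent HTTP requests can hit by chance, and the fixed version makes the check and the debit a single atomic step.

```python
import threading
from typing import Callable, Optional


class Account:
    def __init__(self, balance: int):
        self.balance = balance
        self.lock = threading.Lock()


def withdraw_unsafe(acct: Account, amount: int, gap: threading.Barrier) -> bool:
    if acct.balance >= amount:  # check ...
        gap.wait()              # both requests pass the check before either debits
        acct.balance -= amount  # ... then act on a stale check
        return True
    return False


def withdraw_safe(acct: Account, amount: int) -> bool:
    with acct.lock:             # check and debit are one atomic step
        if acct.balance >= amount:
            acct.balance -= amount
            return True
        return False


def fire_concurrently(fn: Callable[..., bool], *args, n: int = 2) -> list[Optional[bool]]:
    """Run fn(*args) on n threads at once and collect each return value."""
    results: list[Optional[bool]] = [None] * n

    def worker(i: int) -> None:
        results[i] = fn(*args)

    threads = [threading.Thread(target=worker, args=(i,)) for i in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

With a balance of 100, two concurrent unsafe withdrawals of 100 both succeed, which is the outcome a concurrent-request test looks for; the locked version lets exactly one through.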

Phase 7: Exploit chain construction

Every confirmed finding from every track, black box, white box, gray box, is loaded into a unified findings model and evaluated for chain potential.


The chain construction asks: does finding A provide access, information, or capability that makes finding B more impactful? A tenant ID leaking from the user profile endpoint plus an IDOR on the records endpoint equals complete cross-tenant data access. A hardcoded internal API hostname in the JS bundle plus an unauthenticated endpoint on that internal API equals access to internal services with zero credentials. Three medium-severity findings that individually get deprioritized combine into a critical chain that gets escalated.
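The chain question ("does A enable B?") can be modeled with capabilities: each finding requires some and grants others. The sketch below forward-chains from the attacker's starting capabilities until a goal is reached, then prunes findings that did not contribute. The capability names and the three example findings are hypothetical, modeled on the tenant-ID-plus-IDOR example above; a real engine would also weigh severity and confirm each step with a working exploit.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Finding:
    name: str
    severity: str
    requires: frozenset  # capabilities the attacker must already hold
    grants: frozenset    # capabilities gained once the finding is exploited


def build_chain(findings: list[Finding], start: set[str], goal: str) -> Optional[list[Finding]]:
    """Return the findings that lead from `start` to `goal`, in exploitation order, or None."""
    have, applied, progress = set(start), [], True
    while goal not in have and progress:
        progress = False
        for f in findings:
            if f not in applied and f.requires <= have:
                applied.append(f)
                have |= f.grants
                progress = True
    if goal not in have:
        return None
    # Walk backwards and keep only findings that supplied something still needed.
    needed, chain = {goal}, []
    for f in reversed(applied):
        if f.grants & needed:
            chain.append(f)
            needed |= f.requires
    return list(reversed(chain))


FINDINGS = [
    Finding("IDOR on /api/records/{id}", "medium",
            frozenset({"user_session", "tenant_id"}), frozenset({"cross_tenant_records"})),
    Finding("Verbose error pages", "low",
            frozenset({"user_session"}), frozenset({"framework_version"})),
    Finding("Tenant ID leak in /api/profile", "medium",
            frozenset({"user_session"}), frozenset({"tenant_id"})),
]
```

Starting from an ordinary user session, the two medium findings combine into cross-tenant access, while the unrelated low finding is pruned from the chain.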

Every confirmed chain is executed with working proof-of-concept. The impact is quantified: how many records were accessible, what data types were exposed, what is the realistic path from zero access to maximum data breach.

Phase 8: Report generation

The report is built to serve two audiences simultaneously: the engineering team remediating findings, and the auditor verifying that security controls operated effectively.

Every finding includes a working proof-of-exploit (the exact request that produced unauthorized access), the root cause traced to file and line, a CVSS 4.0 score with metric justification, compliance mapping to specific control IDs (not general "SOC 2" references, but specific control numbers such as CC6.1, CC6.6, and CC7.1), and a remediation diff showing exactly what to change. This is not generic guidance; it is the specific before-and-after code change.
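One way to keep every report entry complete is to give it a fixed shape. The record below is a hypothetical schema, not any product's format: the field names are illustrative, the CVSS v4.0 vector string is stored alongside per-metric justifications (the numeric score would come from the official FIRST calculator, which is not reimplemented here), and control IDs follow the SOC 2 numbering cited in the text.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ReportFinding:
    title: str
    proof_of_exploit: str   # exact request that produced unauthorized access
    root_cause: str         # "path/to/file.ext:line"
    cvss_vector: str        # CVSS v4.0 vector string
    cvss_justification: dict[str, str] = field(default_factory=dict)  # metric -> reason
    controls: list[str] = field(default_factory=list)                 # e.g. ["CC6.1"]
    remediation_diff: str = ""

    def problems(self) -> list[str]:
        """Missing pieces that would make the entry unusable for engineers or auditors."""
        issues = []
        if not self.cvss_vector.startswith("CVSS:4.0/"):
            issues.append("cvss_vector is not a CVSS v4.0 vector")
        if not self.controls:
            issues.append("no compliance control mapping")
        if not self.remediation_diff:
            issues.append("no remediation diff")
        return issues


# Hypothetical entry for the middleware-ordering bug described in Phase 4.
example = ReportFinding(
    title="Admin user list reachable without authentication",
    proof_of_exploit="GET /admin/users HTTP/1.1\r\nHost: app.example.com\r\n\r\n",
    root_cause="src/server.js:42",
    cvss_vector="CVSS:4.0/AV:N/AC:L/AT:N/PR:N/UI:N/VC:H/VI:N/VA:N/SC:N/SI:N/SA:N",
    cvss_justification={"PR": "No credentials required", "VC": "Full user list disclosed"},
    controls=["CC6.1"],
    remediation_diff=("-app.get('/admin/users', listUsers)\n-app.use(requireAuth)\n"
                      "+app.use(requireAuth)\n+app.get('/admin/users', listUsers)\n"),
)
```

Serializing with `json.dumps(asdict(example))` gives a single record that serves both the engineering ticket and the audit evidence.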

Phase 9: Walkthrough and action planning

A 60-minute walkthrough with the engineering team turns the report into a shared understanding of what happened, why it matters, and what to do in what order. Priority alignment, root cause discussion, remediation specifics, and edge cases resolved before the remediation sprint begins.

Phase 10: Retest and verification

Every fix is retested against the production environment, not staging. The retest report documents finding-by-finding: the proof-of-concept no longer works, the fix is confirmed in source, the production environment reflects the change. This document is what closes the SOC 2 audit loop.

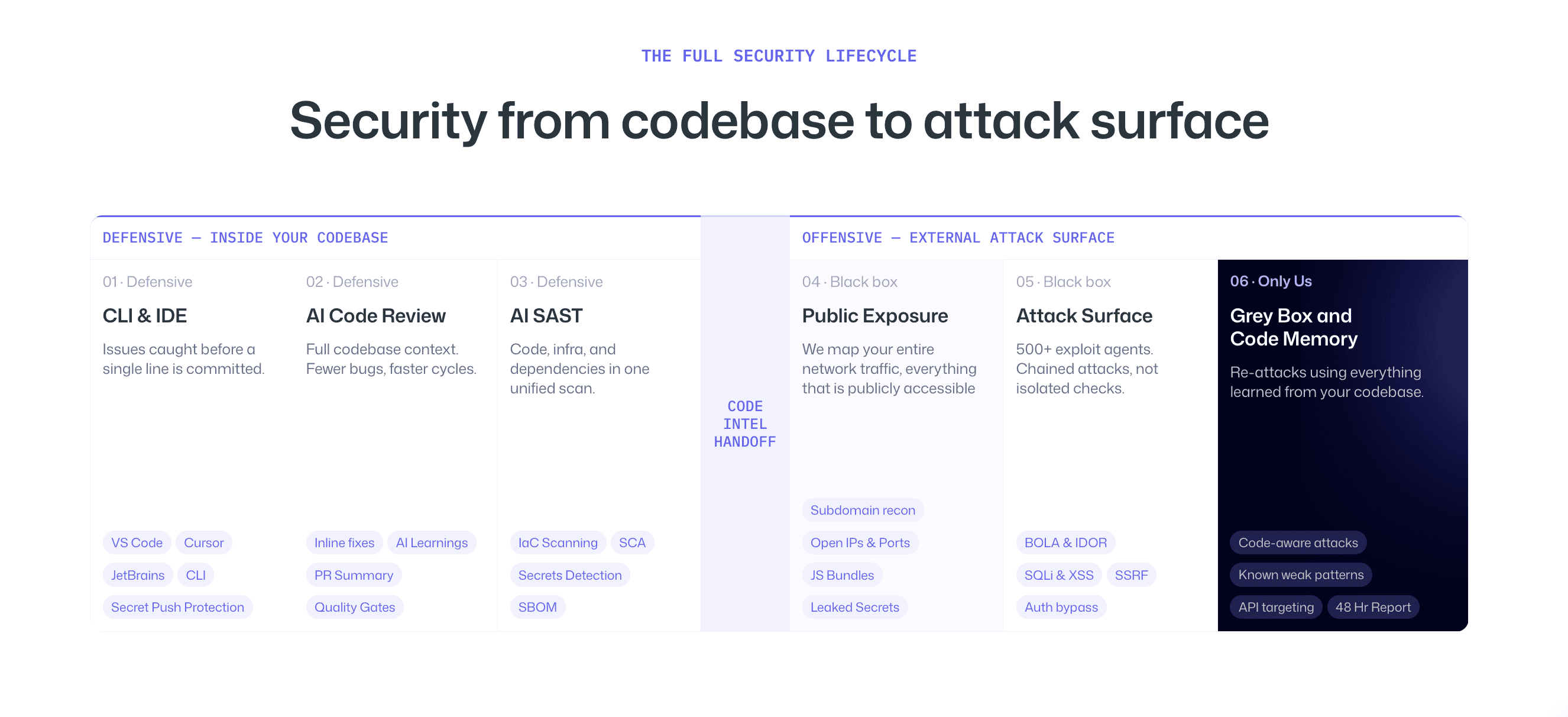

Why the Best Automated Penetration Testing Platforms Combine Offensive & Defensive Security

Here is the structural insight that separates the newest generation of platforms from everything that preceded them.

Traditional penetration testing and code review are treated as separate programs, with different vendors, different teams, and different schedules. The pentest happens once a year. The code review happens on every PR. They do not talk to each other.

This separation creates a blind spot that runs in both directions. The annual pentest tests what is visible from the outside but lacks the code intelligence to find what is invisible externally: middleware bypasses, dataflow injection, and configuration-level auth failures. The continuous code review catches patterns in new code but cannot confirm whether those patterns are exploitable in the production environment as it actually exists.

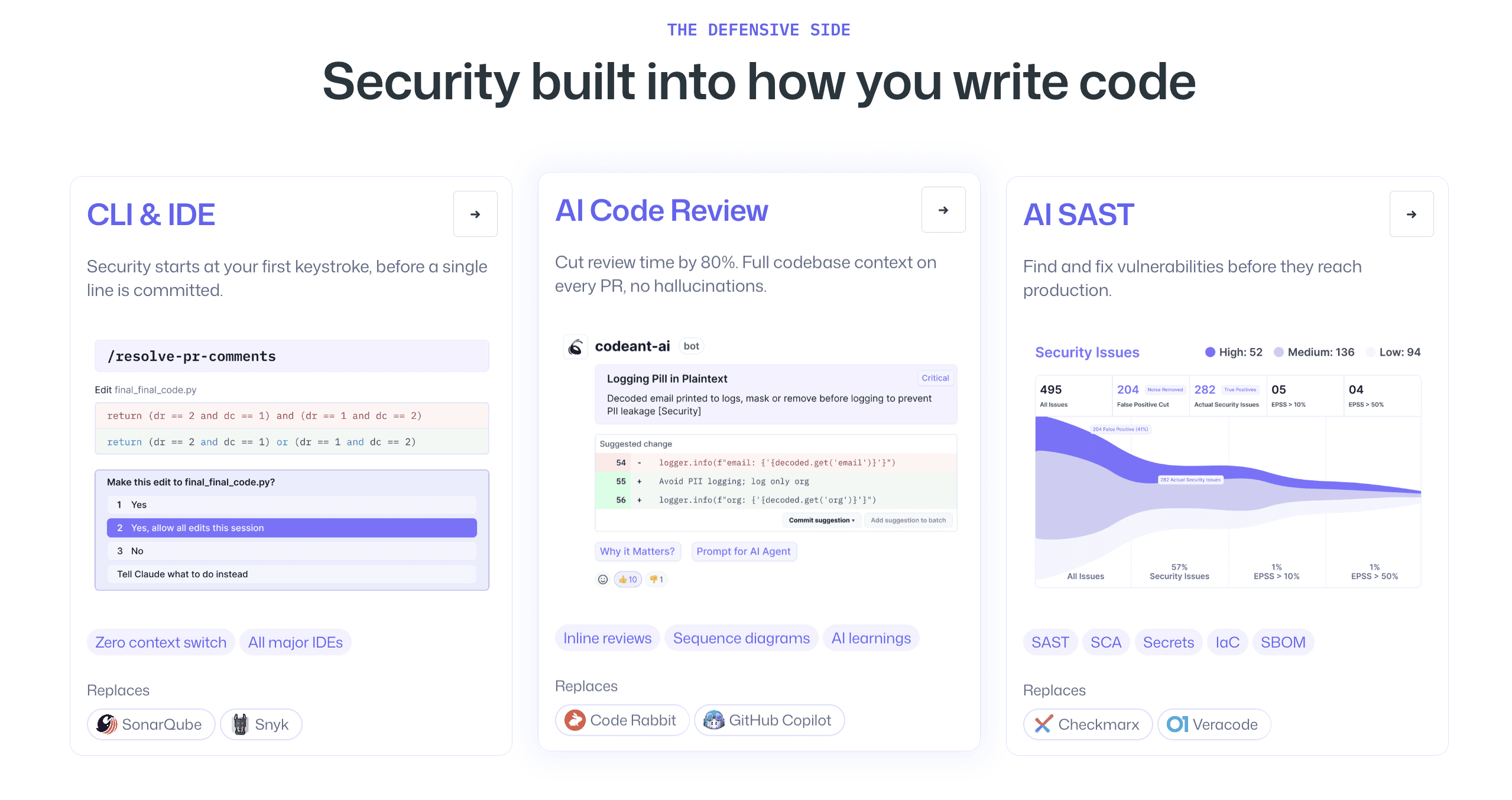

When both run on the same platform, the intelligence transfers. The system that has read your authentication middleware for the last 18 months of code reviews knows exactly where the exclusion rules are before the penetration test begins. The system that traced your data flows in defensive analysis knows which sinks are reachable from which endpoints before the offensive engagement maps the attack surface. An adversary conducting reconnaissance against your external surface with deep knowledge of your code's weaknesses is the most accurate simulation of how sophisticated real-world attacks actually work.

The feedback loop also runs in reverse. When the offensive engagement finds an exploit chain that the defensive review did not flag, that pattern gets incorporated into the defensive analysis, calibrating the code review to catch similar configurations more aggressively in future pull requests.

The two modes make each other better over time. This is the difference between buying two separate tools that happen to be in the same security category and operating a unified platform where the defensive and offensive programs reinforce each other continuously.

The Top Automated Penetration Testing Services Compared

CodeAnt AI: Full-spectrum agentic platform covering all 10 phases above. Unique in combining defensive code review with offensive penetration testing on a single platform. The code intelligence from defensive analysis informs the offensive engagement, producing white box depth that external-only platforms cannot reach. Covers black box, white box, and gray box in a single engagement. Pricing is tied to findings: low and medium severity findings are included at no cost, while high and critical findings drive the engagement fee. Unlimited retests included. Complete SOC 2 evidence package standard.

Pentera: Automated security validation focused on network and external infrastructure. Strong for continuous external surface validation and credential testing. No white box capability, no source code analysis, no business logic testing. Retest is not included as standard. Best fit for infrastructure-heavy organizations that need continuous external validation alongside a separate application security program.

Intruder: Continuous attack surface management with scanner-based testing. Strong for ongoing CVE-to-infrastructure mapping and clean stakeholder reporting. Does not perform authenticated gray box testing, exploit chain construction, or source code analysis. Best fit for teams that need continuous external monitoring and accept that application-layer and code-level vulnerabilities require a separate engagement.

XBOW: AI-native offensive testing with genuine agentic reasoning on web applications. More sophisticated than scanner-based tools for external web application testing. No source code integration, no defensive capability, no formal compliance evidence package. Best fit for teams that already have a strong code review program and want AI-assisted external offensive testing.

PortSwigger Burp Suite Pro: Industry-standard manual web application testing tool. Best-in-class for skilled security researchers conducting manual assessments. Not an automated platform; it requires an expert operator. No source code analysis, no managed reporting, no compliance documentation. Best fit for in-house security teams conducting deep manual assessments.


What Most Automated Penetration Testing Services Miss

The gap between what automated scanning finds and what a full-spectrum engagement finds is not marginal. It is the difference between finding network misconfigurations and finding the authentication bypass that exposes every customer's data.

Middleware ordering vulnerabilities, where routes are registered before authentication middleware applies globally, produce normal HTTP responses to unauthenticated requests. They do not appear in any CVE database, and an external test has no way to know that the response should have been a 401. They are found by reading the code.

Business logic flaws (price manipulation, workflow bypass, subscription tier abuse) produce no anomalous HTTP patterns and match no signature. They are found by understanding what the application is supposed to do and testing whether the API enforces it.

Secrets in Git history (credentials committed and later deleted) remain accessible to anyone who clones the repository. They are not visible externally. They are found by scanning commit history.

Exploit chains that cross severity thresholds only in combination (three medium findings that individually get deprioritized but together form a critical access path) are found by systematically cross-referencing findings. Scanners report findings individually. Agentic platforms evaluate them together.

Every automated penetration testing service you evaluate should be assessed against these categories. If the methodology description does not cover source code analysis, authenticated gray box testing, and exploit chain construction, those categories are not being tested, regardless of what the marketing says.

What automated penetration testing structurally cannot find

This is where most evaluations go wrong. No automated tool can reliably find:

Business logic vulnerabilities that require understanding what the application is designed to do. Price manipulation, discount code replay, checkout workflow bypass, and subscription tier abuse all require knowing the intended behavior and verifying whether the API enforces it. No signature database covers these because they are application-specific by definition.

Social engineering and physical security. Automated testing operates on digital attack surface. Phishing, vishing, and physical access testing require human judgment and cannot be automated in any meaningful sense.

Novel zero-day exploitation requiring research. Automated tools find known patterns and logical chains from discovered vulnerabilities. Finding a genuinely novel exploitation technique in a custom cryptographic implementation or a proprietary protocol requires the kind of creative reasoning that remains a human researcher advantage.

Vulnerabilities requiring contextual understanding of organizational risk. Whether a finding constitutes a critical business risk depends on what data it exposes, who the affected users are, and what the regulatory implications are. Automated tools can compute CVSS scores; they cannot tell you that this particular finding, in this particular application, serving these particular enterprise customers, requires immediate escalation to the board.

Conclusion: Automated Penetration Testing Only Works if it Proves Real Risk

Automated penetration testing is useful, but only if it proves real risk. Most tools stop at identifying potential vulnerabilities instead of confirming whether they can actually be exploited. That gap is where real breaches happen.

If your current approach cannot show how a vulnerability is exploited, what data is exposed, or how multiple issues combine into an attack path, then you are not running a penetration test. You are running a scan. This is where CodeAnt changes the model. Instead of just scanning endpoints, it reads your code, tests real workflows, validates vulnerabilities with proof of exploit, and connects findings into actual attack scenarios. The result is not more findings, but the right ones.

Because security is not about how much you detect. It is about whether you catch what actually matters before someone else does.

Try our free pentesting tool today. Pay only for high and critical issues. Low and medium findings? Always free. No engagement fee.

FAQs

What is automated penetration testing?

Is automated penetration testing enough for SOC 2?

How is automated penetration testing different from manual penetration testing?

What vulnerabilities does automated penetration testing miss?

How much does automated penetration testing cost?