Semgrep and CodeAnt AI both market themselves as developer-first security tools, but they define “developer-first” differently. Semgrep puts the rule in the developer’s hands: an open-source, lightweight pattern-matching engine where security teams write custom rules in a YAML syntax that reads almost like the code it searches.

CodeAnt AI puts AI in the developer’s PR: an AI-native platform that combines code review, code quality, and security scanning into a single workflow, generating evidence-based findings with one-click fixes at every stage from pre-commit to SecOps dashboard.

This post compares both tools across the same six-dimension evaluation framework used in our full SAST tools comparison. It is written for the developers and DevSecOps engineers who are most likely shortlisting these two tools: people who care about how a tool works, not just what it claims to detect.

CodeAnt AI vs Semgrep: Quick Summary

Dimension | Semgrep | CodeAnt AI |

Primary Strength | Open-source rule engine + custom rule authoring | Unified AI code review + quality + security |

AI Tier | AI-Assisted (Pro Engine uses semantic analysis) | AI-Native |

Detection Engine | Pattern matching (OSS) + semantic analysis (Pro Engine) | AI as primary detection engine |

Steps of Reproduction | ✗ | ✓ (every finding) |

Auto-Fix | ✗ (suggests rules, does not generate code patches) | AI-generated one-click fixes in PR |

Security Coverage | SAST + SCA + Secrets | SAST + SCA + Secrets + IaC + SBOMs |

Code Quality | ✗ | ✓ (complexity, duplication, dead code) |

Code Review | ✗ | ✓ (AI code review with inline comments) |

Custom Rules | ✓ genuine differentiator (YAML pattern syntax) | AI-driven detection (custom policies in plain English) |

Workflow Integration | CLI → (VS Code) → CI/CD → Dashboard | CLI → IDE → PR → CI/CD → SecOps |

IDE Support | VS Code (Semgrep extension) | VS Code, JetBrains, Visual Studio, Cursor, Windsurf |

Pre-Commit / CLI | ✓ CLI scanning (fast, lightweight). No pre-commit secret blocking. | ✓ (blocks secrets, credentials, SAST/SCA issues before commit) |

SecOps Dashboard | Semgrep AppSec Platform dashboard (findings, triage) | ✓ (vulnerability trends, OWASP/CWE/CVE, team risk, Jira/Azure Boards) |

SCM Support | GitHub, GitLab, Bitbucket | GitHub, GitLab, Bitbucket, Azure DevOps |

Deployment | CLI runs locally; dashboard is cloud-based | Customer DC (air-gapped), Customer Cloud, CodeAnt Cloud |

Compliance | SOC 2 (Enterprise tier) | SOC 2 Type II, HIPAA, zero data retention |

Pricing Model | Free (up to 10 contributors); per contributor (Team/Enterprise) | Per user ($20/user/month Code Security) |

Open Source | ✓ (core engine is Apache 2.0) | ✗ |

Languages | 30+ languages | 30+ languages, 85+ frameworks |

Comparison verified against Semgrep documentation and CodeAnt AI documentation as of February 2026. Features change, so verify with both vendors before purchasing.

Where Semgrep Excels

Semgrep has become the most popular open-source SAST tool on GitHub, and that popularity is earned. Any honest comparison starts with what Semgrep does well.

Open-Source Core and Custom Rule Authoring

Semgrep’s open-source engine (Apache 2.0) lets anyone run SAST locally without a license, a vendor contract, or a cloud dependency. The engine is deterministic and transparent: you can read every rule, understand exactly why it fired, and modify it to match your codebase’s conventions.

The custom rule authoring experience is Semgrep’s deepest competitive advantage. Rules are written in a YAML syntax that uses pattern-matching operators (pattern, pattern-not, pattern-either, metavariable-regex) to describe vulnerable code shapes. A security engineer familiar with the target language can write a working custom rule in minutes, not hours, not days. This means security teams can encode organization-specific policies (e.g., “our internal auth library must always be called with verify=True”) without waiting for the vendor to add support. No other SAST tool makes custom rule authoring this accessible.
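
To make the verify=True policy concrete, here is a minimal Python sketch of the same idea: match a call shape, then exclude the safe variant, much as a rule would combine pattern with pattern-not. It is not Semgrep's engine or its YAML syntax, only an illustration built on Python's standard ast module, and `internal_auth.login` is a hypothetical function name.

```python
import ast

# Hypothetical internal API used only for illustration.
TARGET = ("internal_auth", "login")


def find_unverified_calls(source: str) -> list[int]:
    """Return line numbers of internal_auth.login(...) calls lacking verify=True."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # "pattern": the call has the shape internal_auth.login(...).
        is_target = (
            isinstance(func, ast.Attribute)
            and isinstance(func.value, ast.Name)
            and (func.value.id, func.attr) == TARGET
        )
        if not is_target:
            continue
        # "pattern-not": skip calls that pass the literal verify=True.
        verified = any(
            kw.arg == "verify"
            and isinstance(kw.value, ast.Constant)
            and kw.value.value is True
            for kw in node.keywords
        )
        if not verified:
            hits.append(node.lineno)
    return sorted(hits)


SAMPLE = """\
import internal_auth
internal_auth.login(user, verify=True)
internal_auth.login(user)
internal_auth.login(user, verify=False)
"""

if __name__ == "__main__":
    print(find_unverified_calls(SAMPLE))
```

On the sample, the call with verify=True is skipped and the two other calls are reported. A real rule expresses the same logic declaratively, which is what makes it quick to write and easy to review.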

Developer Community and Rule Registry

Semgrep’s open-source community has contributed thousands of rules to the Semgrep Registry. This registry covers common vulnerability patterns across major languages and frameworks, and rules are reviewed and maintained by both community contributors and Semgrep’s internal security research team.

The commercial Semgrep AppSec Platform adds Pro rules, proprietary rules written by Semgrep’s security researchers that cover more advanced vulnerability patterns, including some cross-file analysis via the Pro Engine. The combination of community rules (free, transparent) and Pro rules (curated, higher-confidence) gives teams a flexible detection strategy.

For teams that invest in rule customization, this ecosystem is a genuine advantage. The ability to fork a community rule, adapt it to your codebase, and share it back, all in readable YAML, creates a feedback loop that other tools cannot match.

Lightweight CLI-First Approach

Semgrep’s CLI is best-in-class for lightweight local scanning. It starts in seconds, runs incrementally (scanning only changed files), and produces results fast, typically under 30 seconds for incremental scans on large codebases. The CLI runs entirely locally: no cloud dependency, no data leaving the developer’s machine.

This lightweight approach means Semgrep fits into workflows where heavier tools create friction. Developers can run semgrep scan from their terminal as naturally as they run git status. The output is clean, readable, and actionable. For teams that value speed and simplicity above all else, Semgrep’s CLI experience is hard to beat.
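
For teams that want to script the CLI, a small wrapper might look like the sketch below. It assumes the `semgrep scan --config ... --json` invocation and the `results`, `check_id`, `path`, `start` and `extra` fields of the JSON report as we understand them; check both against your installed version. The sample report and its rule ID are hand-written and hypothetical, not real scan output.

```python
import json
import subprocess


def run_semgrep(target: str, config: str) -> dict:
    """Run a local scan and return the parsed JSON report (requires semgrep on PATH)."""
    proc = subprocess.run(
        ["semgrep", "scan", "--config", config, "--json", target],
        capture_output=True,
        text=True,
        check=False,
    )
    return json.loads(proc.stdout)


def summarize(report: dict) -> list[str]:
    """Turn a report into one 'path:line [severity] rule-id' string per finding."""
    lines = []
    for result in report.get("results", []):
        extra = result.get("extra", {})
        severity = extra.get("severity", "?")
        lines.append(
            f'{result["path"]}:{result["start"]["line"]} [{severity}] {result["check_id"]}'
        )
    return lines


# Hand-written sample that mirrors the assumed report shape.
SAMPLE_REPORT = {
    "results": [
        {
            "check_id": "example.hypothetical-rule",
            "path": "app/views.py",
            "start": {"line": 42, "col": 5},
            "extra": {"message": "Example message.", "severity": "WARNING"},
        }
    ],
    "errors": [],
}

if __name__ == "__main__":
    for line in summarize(SAMPLE_REPORT):
        print(line)
```

In a real script, `summarize(run_semgrep(".", "path/to/rules.yaml"))` would replace the sample report.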

Where CodeAnt AI Goes Further

CodeAnt AI addresses several gaps that developers encounter as their security needs outgrow what pattern matching alone can detect, particularly around AI-native detection, evidence-based findings, auto-fix capabilities, and platform breadth beyond pure security scanning.

AI-Native Detection vs. Pattern Matching

Semgrep’s detection engine is built on pattern matching. The open-source engine performs single-file analysis, matching code against rule patterns. The commercial Pro Engine adds cross-file and cross-function analysis with semantic understanding, a meaningful upgrade, but the underlying approach is still pattern-driven: rules define what to find, and the engine matches against those patterns.

CodeAnt AI uses an AI-native detection engine where machine learning models are the primary analysis mechanism. The scanner reasons about what code does (how data flows, which functions are reachable, which inputs are user-controlled) rather than matching patterns against a rule library.

The practical difference is most visible in three scenarios. First, novel vulnerability patterns that no rule has been written for: CodeAnt AI’s engine can detect these, while Semgrep requires someone to write the rule first. Second, complex multi-file taint flows where the data path crosses many functions: the Pro Engine handles some of these, but pattern matching has inherent limits with deeply nested flows. Third, AI-generated code from Copilot, Cursor, or Claude Code that produces patterns rule databases have not cataloged.
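
To show what a multi-file taint flow means in practice, the sketch below models data flow as a small graph and searches for a path from a user-controlled source to a dangerous sink. It is a toy illustration, not how either tool's engine works, and every module, function and edge in it is hypothetical.

```python
from collections import deque

# Hypothetical data-flow edges: the value at the key can reach each listed value.
FLOWS = {
    "routes.get_user:request.args['id']": ["services.load_user:user_id"],
    "services.load_user:user_id": ["db.build_query:user_id"],
    "db.build_query:user_id": ["db.execute:sql"],
    "config.load:timeout": ["db.connect:timeout"],
}
SOURCES = {"routes.get_user:request.args['id']"}  # user-controlled input
SINKS = {"db.execute:sql"}  # dangerous operation


def taint_paths(flows, sources, sinks):
    """Breadth-first search from each source; collect paths that end at a sink."""
    found = []
    for src in sorted(sources):
        queue = deque([[src]])
        seen = {src}
        while queue:
            path = queue.popleft()
            node = path[-1]
            if node in sinks:
                found.append(path)
                continue
            for nxt in flows.get(node, []):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(path + [nxt])
    return found


if __name__ == "__main__":
    for path in taint_paths(FLOWS, SOURCES, SINKS):
        print(" -> ".join(path))
```

The path here crosses three hypothetical modules (routes, services, db). The hard part in a real scanner is building the edges accurately across files, which is where single-file analysis runs out.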

CodeAnt AI’s AI-native approach is more powerful for novel detection but less transparent: the model’s reasoning is not a readable YAML file. Teams that value rule transparency above detection breadth may prefer Semgrep’s approach.

Steps of Reproduction vs. Rule-Based Alerts

When Semgrep flags a vulnerability, it provides the rule ID, the matched code location, the rule’s message (which describes the vulnerability pattern), and a severity rating. The quality of context depends on the rule author: well-written rules include detailed messages and references, while community rules vary in quality.

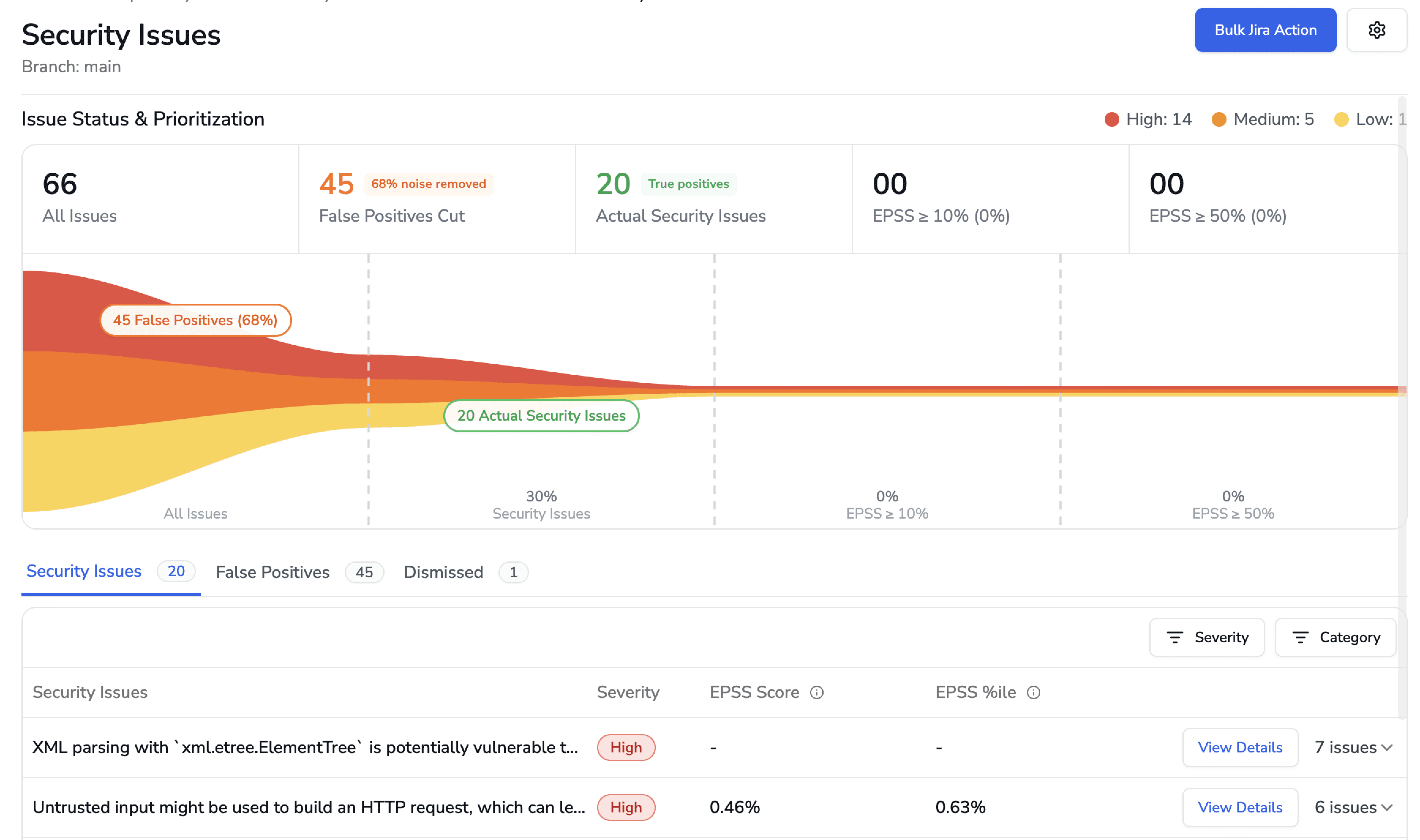

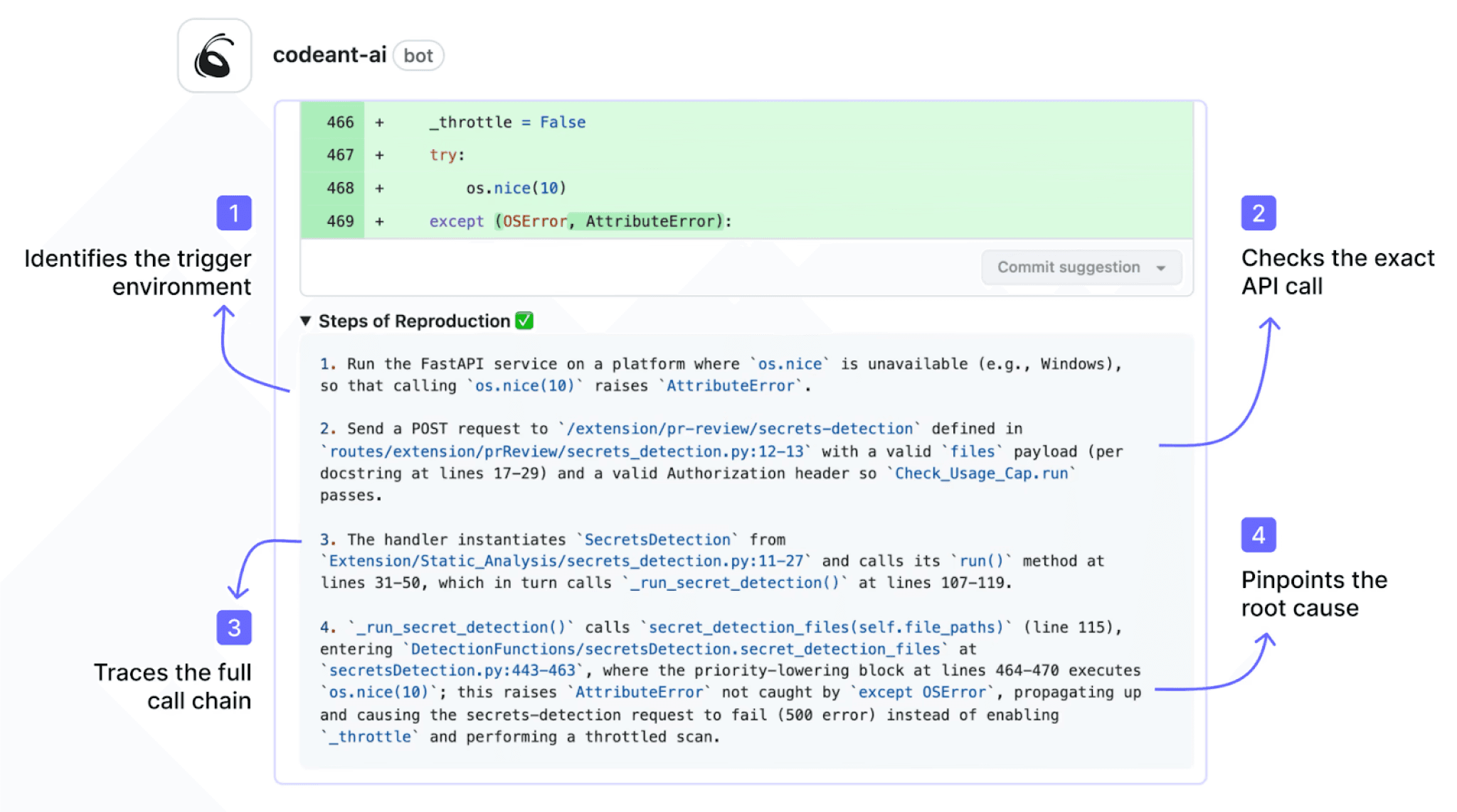

CodeAnt AI generates Steps of Reproduction for every finding: the exact entry point, the complete taint flow through each intermediate step, the vulnerable sink, and a concrete exploitation scenario.

The developer does not need to interpret a pattern match; they review a step-by-step proof that the vulnerability is triggerable.

This difference compounds at scale. When a developer receives 50 findings from a Semgrep scan, they must investigate each one to determine if the pattern match represents a real vulnerability in their specific context. When they receive 50 findings from CodeAnt AI, each comes with reproduction evidence that transforms “investigate whether this is real” into “review the evidence and decide on a fix.” For more on how evidence quality affects developer trust in SAST findings, see why Steps of Reproduction change how developers trust findings.
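
One way to picture evidence of this kind is as a small record with the four parts listed above: entry point, taint flow, sink, and exploitation scenario. The sketch below is a hypothetical data structure for illustration only; it is not CodeAnt AI's schema or output format, and the example values are invented.

```python
from dataclasses import dataclass


@dataclass
class ReproductionSteps:
    """Hypothetical container for the evidence attached to one finding."""

    entry_point: str
    taint_flow: list[str]
    sink: str
    exploit_scenario: str

    def render(self) -> str:
        """Format the evidence as a numbered, reviewable list."""
        lines = [f"1. Entry point: {self.entry_point}"]
        for i, step in enumerate(self.taint_flow, start=2):
            lines.append(f"{i}. Flows through: {step}")
        n = len(self.taint_flow) + 2
        lines.append(f"{n}. Sink: {self.sink}")
        lines.append(f"{n + 1}. Exploit: {self.exploit_scenario}")
        return "\n".join(lines)


# Invented example values.
EXAMPLE = ReproductionSteps(
    entry_point="GET /users?id=<user input>",
    taint_flow=["services.load_user(user_id)", "db.build_query(user_id)"],
    sink="db.execute(sql)",
    exploit_scenario="an id value containing SQL alters the executed query",
)

if __name__ == "__main__":
    print(EXAMPLE.render())
```

The reviewer's job changes from reconstructing this chain by hand to checking whether each numbered step is true in their codebase.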

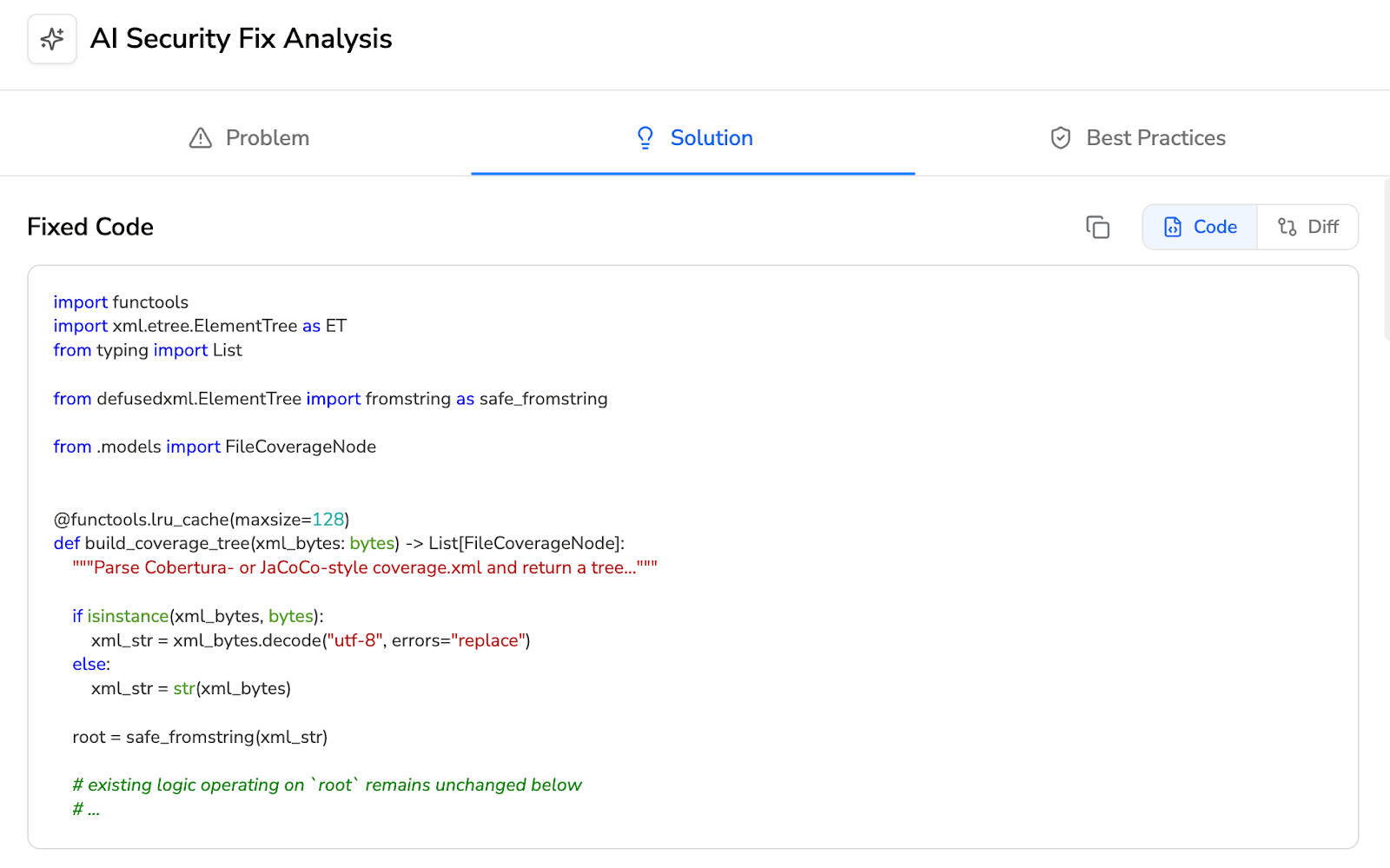

Auto-Fix Quality (AI-Generated vs. Template)

Semgrep does not generate code-level fix suggestions. The tool identifies the vulnerability and provides the rule message (which may include remediation guidance written by the rule author), but it does not produce a code patch that the developer can apply. Developers must write the fix themselves.

CodeAnt AI generates AI-powered fix suggestions for every finding: a concrete code change that the developer can apply with one click directly in the PR. The fix is presented as a committable suggestion: the developer clicks “Apply Fix,” and the change is committed to the PR branch. No separate PR, no manual coding. Across dozens of findings per sprint, the difference between “here’s what’s wrong, fix it yourself” and “here’s the fix, click to apply” is substantial.

Code Quality and Review (Not Just Security)

Semgrep focuses on security scanning (SAST, SCA, secrets detection). For code quality analysis (code smells, duplication, dead code, complexity) and code review, teams using Semgrep add separate tools: SonarQube for quality, and GitHub reviews or a dedicated review tool for code review.

CodeAnt AI consolidates security scanning, AI code review, code quality analysis (code smells, duplication, dead code, cyclomatic complexity, DORA metrics), and SBOM generation into a single platform.

This means one PR comment thread covers security findings, code quality issues, and code review observations, rather than three separate tools posting three separate sets of comments.

Feature-by-Feature Comparison

Feature | Semgrep | CodeAnt AI |

Detection Accuracy | ||

SAST (first-party code) | ✓ (pattern matching; cross-file via Pro Engine) | ✓ (AI-native with semantic analysis) |

SCA (open-source dependencies) | ✓ (Semgrep Supply Chain) | ✓ (with EPSS scoring) |

Secrets detection | ✓ | ✓ |

IaC scanning | Partial (via community rules) | ✓ (AWS, GCP, Azure) |

SBOM generation | ✗ | ✓ |

Steps of Reproduction | ✗ | ✓ (every finding) |

Custom rule authoring | ✓ (YAML pattern syntax, best-in-class) | Custom policies in plain English |

AI Capabilities | ||

AI tier | AI-Assisted (Pro Engine semantic analysis) | AI-Native (Tier 3) |

AI code review | ✗ | ✓ (line-by-line PR review) |

AI auto-fix | ✗ (no code patch generation) | ✓ (one-click committable fixes in PR) |

AI triage / false positive reduction | Partial (confidence scoring on Pro rules) | ✓ (AI-native detection + reachability analysis) |

PR summaries | ✗ | ✓ |

Batch auto-fix | ✗ | ✓ (resolve hundreds of findings at once) |

Developer Experience | ||

Primary interface | CLI-first + Semgrep AppSec Platform dashboard | PR-native (inline comments) |

CLI scanning | ✓ (best-in-class, fast, lightweight, local) | ✓ |

Pre-commit hooks (secret/credential/SAST blocking) | ✗ (CLI can scan, but doesn’t block commits) | ✓ (blocks before commit) |

IDE integration | VS Code (Semgrep extension) | VS Code, JetBrains, Visual Studio, Cursor, Windsurf |

AI prompt generation for IDE fixes | ✗ | ✓ (generates prompts for Claude Code/Cursor) |

Inline PR comments | ✓ (via CI integration, scan results) | ✓ (review comments + findings + Steps of Reproduction + fix suggestions) |

One-click fix application | ✗ | ✓ (committable suggestions in existing PR) |

Integrations | ||

GitHub | ✓ | ✓ |

GitLab | ✓ | ✓ |

Bitbucket | ✓ | ✓ |

Azure DevOps | ✗ | ✓ |

CI/CD pipelines | ✓ (GitHub Actions, GitLab CI, Jenkins, CircleCI) | ✓ (GitHub Actions, Jenkins, GitLab CI, Bitbucket Pipelines, Azure DevOps Pipelines) |

Jira integration | ✗ (via third-party or API) | ✓ (native) |

Pricing | ||

Free tier | ✓ (up to 10 contributors, with Team features) | 14-day free trial |

Open-source option | ✓ (core engine is Apache 2.0) | ✗ |

Pricing model | Per contributor | Per user |

Starter price | Free (10 contributors); Team/Enterprise pricing per contributor | $20/user/month (Code Security) |

Enterprise | Custom | Custom |

Enterprise Readiness | ||

Deployment options | CLI runs locally; dashboard is cloud-based (no formal air-gapped offering) | Customer DC (air-gapped), Customer Cloud (AWS/GCP/Azure), CodeAnt Cloud |

SOC 2 | ✓ (Enterprise tier) | ✓ (Type II) |

HIPAA | ✗ | ✓ |

Zero data retention | N/A (CLI is local; dashboard stores findings) | ✓ (across all deployment models) |

SecOps dashboard | Semgrep AppSec Platform (findings, triage, policy management) | ✓ (vulnerability trends, fix rates, team risk, OWASP/CWE/CVE mapping) |

Ticketing integration | ✗ (via API or third-party) | ✓ (Jira, Azure Boards — native) |

Audit-ready reporting | Limited | ✓ (PDF/CSV exports for SOC 2, ISO 27001) |

Attribution / risk distribution | ✗ | ✓ (repo-level and developer-level risk) |

Code quality analysis | ✗ | ✓ (code smells, duplication, dead code, complexity) |

Developer productivity metrics | ✗ | ✓ (DORA metrics, PR cycle time, SLA tracking) |

Custom Rules: Semgrep YAML vs. AI-Driven Detection

The most fundamental difference between Semgrep and CodeAnt AI is how each tool decides what to look for.

Semgrep: Rule-Driven Detection

Semgrep is built on explicit rules.

Every finding maps back to a specific YAML rule that defines:

The pattern to match

The severity

The message

Fix guidance

The open-source engine includes community rules, while the Pro tier adds curated rules maintained by Semgrep’s security team.

The core advantage: teams control detection.

If no rule exists, Semgrep will not detect the pattern.

If you write a new rule, Semgrep detects it immediately.

For teams with mature security engineering capabilities, this is powerful. You can encode organization-specific risks such as:

Internal API misuse

Authorization bypass patterns

Framework-specific anti-patterns

Semgrep rules are readable and reviewable. A custom rule looks like code, which means developers can understand and contribute to the rule library.

CodeAnt AI: AI-Driven Detection

CodeAnt AI takes a different approach.

Instead of matching patterns, it uses machine learning models to reason about:

Data flow

Reachability

Input validation

Output encoding

Code intent

This allows detection of vulnerability classes where no predefined rule exists, including:

Novel patterns in AI-generated code

Complex multi-file taint flows

Business-logic vulnerabilities

The scanner is not limited to what someone explicitly defined in advance.

The Tradeoff: Transparency vs. Breadth

Semgrep offers full transparency.

You can read the rule that generated the finding.

You can debug false positives by editing YAML.

Detection is deterministic and customizable.

CodeAnt AI offers broader coverage.

It can detect patterns no one anticipated.

It is not constrained by rule libraries.

The model’s reasoning is not a human-readable rule file.

Operationally:

If a Semgrep rule causes false positives, you edit the rule.

If an AI-driven finding is incorrect, you suppress or adjust policy thresholds.

Which Approach Fits Your Team?

Choose Semgrep if:

Your security team can write and maintain custom rules

You value deterministic, transparent detection

Policy enforcement is your priority

Choose CodeAnt AI if:

You want detection beyond predefined patterns

Your team is generating AI-assisted code

You value breadth of discovery over rule-level transparency

In Practice: Many Teams Use Both

The approaches are often complementary:

Semgrep enforces organization-specific policies through custom rules.

CodeAnt AI detects broader vulnerability classes that rules may miss.

This combination, deterministic policy enforcement plus AI-native detection, is not theoretical. It is a model that works in real-world teams.

End-to-End Workflow Comparison (CLI → IDE → PR → CI/CD → SecOps)

Both Semgrep and CodeAnt AI position themselves as developer-first tools, but they cover different stages of the developer workflow, and at different depths.

Workflow Stage | Semgrep | CodeAnt AI |

CLI + Pre-Commit | ✓ | ✓ CLI blocks secrets, credentials, API keys, tokens, and high-risk SAST/SCA issues before commit. |

IDE | Partial. VS Code extension provides inline findings from Semgrep rules. No support for JetBrains, Visual Studio, or AI coding environments (Cursor, Windsurf). | ✓ VS Code, JetBrains (IntelliJ, PyCharm, WebStorm), Visual Studio, Cursor, Windsurf. In-context scanning with guided remediation. AI prompt generation triggers Claude Code or Cursor to auto-fix vulnerabilities. |

Pull Request | ✓ Semgrep can post findings as PR comments via CI integration. Findings include rule ID, matched code, and rule message. No AI code review. No fix suggestions. No PR summaries. | ✓ AI code review + security analysis on every PR. Line-by-line review. Steps of Reproduction for every security finding. One-click AI-generated fixes committed directly in the PR. PR summaries. |

CI/CD | ✓ GitHub Actions, GitLab CI, Jenkins, CircleCI. | ✓ GitHub Actions, Jenkins, GitLab CI, Bitbucket Pipelines, Azure DevOps Pipelines. Configurable policy gates by severity, CWE category, OWASP classification, and custom rules. |

SecOps / Compliance | Semgrep AppSec Platform provides a findings dashboard with triage workflows, policy management, and rule performance metrics. No native ticketing integration (Jira/Azure Boards via API or third-party). Limited audit-ready reporting. | ✓ Unified SecOps dashboard: vulnerability trends, TP/FP rates, fix rates, EPSS scoring, OWASP/CWE/CVE mapping, team/repo risk distribution. Native Jira and Azure Boards. Audit-ready PDF/CSV for SOC 2, ISO 27001. Attribution reporting. |
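
The CI/CD row mentions policy gates by severity and CWE category. As a rough sketch of what such a gate does, the Python below fails a build when any finding meets a severity threshold or belongs to a blocked CWE. The `Finding` shape, the severity scale and the rule IDs are assumptions made for illustration; neither tool's configuration format is shown here.

```python
from dataclasses import dataclass

# Assumed severity scale for this sketch.
SEVERITY_RANK = {"LOW": 1, "MEDIUM": 2, "HIGH": 3, "CRITICAL": 4}


@dataclass(frozen=True)
class Finding:
    rule_id: str   # hypothetical rule identifier
    severity: str  # one of the SEVERITY_RANK keys
    cwe: str       # e.g. "CWE-89" (SQL injection)


def gate(findings, min_severity="HIGH", blocked_cwes=frozenset()):
    """Return the findings that should fail the pipeline under this policy."""
    threshold = SEVERITY_RANK[min_severity]
    return [
        f
        for f in findings
        if SEVERITY_RANK[f.severity] >= threshold or f.cwe in blocked_cwes
    ]


FINDINGS = [
    Finding("sql-injection", "HIGH", "CWE-89"),
    Finding("weak-hash", "LOW", "CWE-328"),
    Finding("hardcoded-credential", "MEDIUM", "CWE-798"),
]

if __name__ == "__main__":
    blocking = gate(FINDINGS, min_severity="HIGH", blocked_cwes={"CWE-798"})
    for f in blocking:
        print(f"BLOCK {f.rule_id} ({f.severity}, {f.cwe})")
    print("gate:", "fail" if blocking else "pass")
```

A CI job would typically exit non-zero when the returned list is not empty, which is what stops the merge.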

Semgrep’s coverage thins out at three stages:

The IDE experience is limited to a VS Code extension. Developers using JetBrains, Visual Studio, or AI coding environments like Cursor and Windsurf do not have native integration. The PR experience reports rule matches but does not provide AI code review, fix suggestions, or evidence-backed findings. The SecOps layer includes a functional triage dashboard, but lacks deeper compliance workflows such as native ticketing, audit-ready exports, and attribution reporting.

CodeAnt AI extends coverage across all five stages.

Pre-commit: Blocks high-risk issues before they enter Git. Semgrep scans and reports; CodeAnt AI scans and blocks.

IDE: Supports a broader range of environments, including AI coding tools where increasing volumes of code are written.

Pull Request: Provides AI code review, Steps of Reproduction, and one-click fixes, not just scan results.

SecOps: Adds analytics, native ticketing, and compliance-ready reporting to close the loop from detection to audit evidence.
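
The difference between scanning and blocking at pre-commit comes down to the hook's exit code: Git aborts the commit when a pre-commit hook exits non-zero. The sketch below shows that mechanism with two illustrative secret patterns. It is a simplified stand-in, not CodeAnt AI's or Semgrep's implementation, and a real secret scanner uses far more patterns than these.

```python
import re
import subprocess
import sys

# Two illustrative patterns only; real scanners ship many more.
SECRET_PATTERNS = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private-key-block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern name) for every suspicious line in text."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


def staged_added_lines() -> str:
    """Collect the lines added in the staged diff (requires a Git repository)."""
    diff = subprocess.run(
        ["git", "diff", "--cached", "--unified=0"],
        capture_output=True,
        text=True,
        check=True,
    ).stdout
    added = [
        line[1:]
        for line in diff.splitlines()
        if line.startswith("+") and not line.startswith("+++")
    ]
    return "\n".join(added)


def main() -> int:
    """Return 1 (block the commit) if any staged line looks like a secret."""
    hits = find_secrets(staged_added_lines())
    for lineno, name in hits:
        print(f"blocked: {name} in staged changes (added line {lineno})", file=sys.stderr)
    return 1 if hits else 0
```

To use it as a hook, the script would end with `sys.exit(main())` and be saved as an executable `.git/hooks/pre-commit`. A non-zero return is what turns a report into a block.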

The honest summary is simple:

Semgrep has the stronger CLI. CodeAnt AI has the broader workflow.

If your team lives in the terminal and prioritizes fast local scanning, Semgrep is hard to beat. If you need end-to-end workflow coverage, from pre-commit enforcement to PR-native remediation to audit reporting, CodeAnt AI covers stages Semgrep does not.

Deployment and Data Residency

For teams with strict data residency requirements, deployment architecture determines where code and findings data live.

Semgrep’s model has a clear split.

The Semgrep CLI runs entirely locally. Code never leaves the developer’s machine, and no cloud connectivity is required. For teams that only need local scanning, this is a genuine data-residency advantage.

However, the Semgrep AppSec Platform, including the dashboard, triage workflows, policy management, and Pro rules, is cloud-based. Findings metadata, rule configurations, and scan results are stored in Semgrep’s cloud infrastructure. There is currently no formally air-gapped or fully self-hosted version of the complete platform. Teams that need centralized dashboard capabilities must accept cloud-based processing of findings data.

For organizations comfortable with CLI-only scanning and no centralized dashboard, Semgrep offers one of the simplest data-residency models in the SAST market: nothing leaves the machine.

CodeAnt AI provides three deployment options:

Customer Data Center (Air-Gapped): Fully deployed within on-prem infrastructure, with zero external network connectivity. No code, metadata, or telemetry leaves the environment. The full platform (CLI, IDE, PR workflow, CI/CD gates, and SecOps dashboard) runs locally.

Customer Cloud (AWS, GCP, Azure): Deployed inside the customer’s VPC, with full control over infrastructure and network boundaries.

CodeAnt Cloud: Hosted by CodeAnt, SOC 2 Type II certified and HIPAA compliant. Fastest deployment option.

Across all models, CodeAnt AI offers zero data retention: code is analyzed in memory and not persisted to disk.

The practical comparison is straightforward:

If you only need local scanning and no dashboard, Semgrep’s CLI-only model is the simplest option.

If you need a full platform (dashboard, triage, compliance reporting, ticketing) in a self-hosted or air-gapped environment, CodeAnt AI offers deployment flexibility that Semgrep’s cloud-based platform does not currently provide.

Pricing Comparison

Dimension | Semgrep | CodeAnt AI |

Pricing model | Per contributor | Per user |

Free option | ✓ Open-source engine (free forever); AppSec Platform free for up to 10 contributors | 14-day free trial |

Paid Plan | $100/user/month ($40 for SAST, $40 for SCA, $20 for Secrets) | $44/user/month ($20 for SAST + SCA + IaC + Secrets + SBOMs, $24 for AI Security Review) |

Includes AI code review | ✗ | ✓ |

Includes code quality | ✗ | ✓ |

Includes auto-fix | ✗ | ✓ |

Includes SecOps Dashboard + Jira/Azure Boards | Dashboard yes; Jira/Azure Boards via API only | ✓ (native) |

Pricing page | semgrep.dev/pricing |
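
To turn the per-seat figures in the table into a budget number, the arithmetic is simply the monthly total times seats times twelve. The sketch below uses the list prices quoted above and a hypothetical team of 25. It assumes both vendors count the same number of seats and ignores the free tier, trials and any negotiated discounts, so treat the result as a rough upper bound rather than a quote.

```python
# Per-seat monthly list prices in USD, taken from the table above.
SEMGREP = {"SAST": 40, "SCA": 40, "Secrets": 20}
CODEANT = {"Code Security": 20, "AI Security Review": 24}


def annual_cost(per_seat_monthly: dict, seats: int) -> int:
    """Annual list cost: sum of monthly per-seat prices x seats x 12 months."""
    return sum(per_seat_monthly.values()) * seats * 12


if __name__ == "__main__":
    seats = 25  # hypothetical team size
    print("Semgrep:", annual_cost(SEMGREP, seats))
    print("CodeAnt AI:", annual_cost(CODEANT, seats))
```

Because Semgrep bills per contributor and CodeAnt AI per user, the seat counts may differ for the same team. Confirm how each vendor counts seats before comparing totals.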

Final Verdict: Semgrep vs CodeAnt AI for SAST in 2026

Semgrep is built for control. CodeAnt AI is built for automation.

If your security team wants full visibility into every rule, the ability to write YAML-based policies, and an open-source scanning engine that runs locally without vendor dependency, Semgrep remains one of the strongest developer-first SAST tools available.

If your team wants AI-native detection, evidence-backed findings, one-click fixes inside pull requests, and a unified platform that combines SAST, SCA, code review, and compliance reporting, CodeAnt AI provides a broader workflow.

The decision ultimately comes down to what you value more:

Deterministic rule transparency

Or AI-driven detection breadth and automated remediation

The fastest way to evaluate both is not feature comparison tables.

Run both tools on the same repository. Compare findings. Compare false positives. Compare remediation time.

Start a 14-day CodeAnt AI trial and compare it directly with Semgrep on your next PR.

FAQs

What is the main difference between Semgrep and CodeAnt AI for SAST?

Is Semgrep better for custom security policies?

Does CodeAnt AI provide auto-fix for SAST findings?

Which SAST tool is better for AI-generated code?

Can Semgrep and CodeAnt AI be used together?