GitHub processed 43.2 million pull requests per month in 2025, up 23% year-over-year. Meanwhile, Greptile's State of AI Coding report found median PR size jumped 33% between March and November 2025 alone, from 57 to 76 lines changed per PR. The bottleneck isn't writing code anymore. It's everything that happens after git push.

Pull request automation is the category of tools that accelerate the lifecycle of a PR, from creation through review assignment, testing, merging, and deployment. It spans far more than AI code review. It includes merge queues, stacked PRs, automated reviewer assignment, PR analytics, dependency updates, and yes, AI-powered review as one critical layer.

This guide covers the full PR automation stack: what each category does, which platforms lead in each, how they complement each other, and how to assemble the right combination for your team.

TL;DR

PR automation is a stack, not a single tool: merge queues, stacked PRs, smart review assignment, AI code review, PR analytics, and dependency bots each solve different bottlenecks.

Graphite (now owned by Cursor) leads stacked PRs and developer workflow; Aviator and Mergify lead merge queues; LinearB leads PR analytics and DORA metrics.

CodeAnt AI fills the AI-native review + security layer at $24/user/month across all four git platforms, the layer that merge queues and analytics tools don't touch.

AI-generated PRs wait 4.6x longer for review pickup and have a 32.7% acceptance rate vs. 84.4% for human PRs, making automated quality gates non-negotiable.

The best stack for most teams in 2026: a merge queue (Aviator, Trunk, or GitHub native) + AI review layer (CodeAnt AI) + dependency automation (Renovate or Dependabot) + optional analytics (LinearB or Swarmia).

What "PR Automation" Actually Means in 2026

Most articles treat pull request automation as synonymous with AI code review.

That’s incomplete.

AI review is only one component of a much larger system. Treating PR automation as just “AI reviewing code” is similar to describing CI/CD as “running Jenkins.”

Pull request automation is better understood as workflow orchestration across the entire lifecycle of a code change.

It is the set of systems that manage what happens after a developer runs git push and before that code reaches production.

That lifecycle includes:

• deciding who should review the change

• determining when the change is safe to merge

• ensuring multiple changes do not break the main branch

• detecting security vulnerabilities and logic bugs

• keeping dependencies patched and up to date

• measuring how efficiently the system is operating

Modern engineering teams no longer treat these steps as ad-hoc human processes. They treat them as automatable infrastructure.

In practice, PR automation is not a single tool. It is a stack of specialized systems, each solving a different bottleneck in the code review pipeline.

The modern PR automation stack typically includes six layers.

1. Automated Review Assignment

The first step in the PR lifecycle is determining who should review a change.

GitHub’s CODEOWNERS feature provides a basic mechanism by assigning reviewers based on file paths. While this works for small repositories, it breaks down as teams scale. It cannot balance reviewer load, account for expertise differences, or adapt based on PR complexity.

Newer systems treat review assignment as a scheduling problem. Platforms such as Aviator’s FlexReview or LinearB’s gitStream dynamically route pull requests based on domain knowledge, reviewer availability, timezone coverage, and response time targets.

Instead of static ownership rules, the system continuously decides who is best positioned to review a change right now.
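To make the scheduling framing concrete, here is a minimal sketch of load-aware reviewer selection. It is not how FlexReview or gitStream work internally; the `Reviewer` fields, the path-prefix expertise model, and the scoring rule are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Reviewer:
    name: str
    expertise: set[str] = field(default_factory=set)  # path prefixes this person knows well
    open_reviews: int = 0                              # current review load
    available: bool = True                             # e.g. in working hours, not on leave


def pick_reviewer(changed_paths: list[str], reviewers: list[Reviewer]) -> Optional[Reviewer]:
    """Pick the available reviewer with the most matching paths; ties go to the lighter load."""
    def score(r: Reviewer) -> tuple[int, int]:
        matches = sum(1 for p in changed_paths if any(p.startswith(e) for e in r.expertise))
        return (matches, -r.open_reviews)

    candidates = [r for r in reviewers if r.available]
    return max(candidates, key=score, default=None)


# Hypothetical team: cy knows the API but is currently unavailable.
team = [
    Reviewer("ana", {"billing/"}, open_reviews=4),
    Reviewer("ben", {"billing/", "api/"}, open_reviews=1),
    Reviewer("cy", {"api/"}, open_reviews=0, available=False),
]
chosen = pick_reviewer(["billing/invoice.py", "api/routes.py"], team)
print(chosen.name if chosen else "no reviewer available")
```

A static CODEOWNERS file can express the path matching but not the `open_reviews` or `available` inputs, which is the gap the dynamic tools aim to close.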

2. PR Size Management and Stacked Changes

Another structural bottleneck in code review is PR size.

Large pull requests take longer to understand, introduce more bugs, and delay feedback loops. PR automation tools address this by encouraging smaller, incremental changes.

Stacked pull request systems allow developers to create chains of dependent PRs. Each change builds on the previous one, enabling parallel review even while earlier changes are still pending.

When the base PR merges, the remaining stack automatically rebases and updates. This approach reduces merge wait time and keeps review units small enough for fast feedback.
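The retargeting step can be sketched as a small data-structure operation. This is a simplified model, not Graphite's or Aviator's implementation: the `StackedPR` class, branch names, and PR numbers are hypothetical, and a real tool would also rebase the underlying Git branches.

```python
from dataclasses import dataclass


@dataclass
class StackedPR:
    number: int
    branch: str
    base: str            # the branch this PR targets
    merged: bool = False


def merge_bottom(stack: list[StackedPR], trunk: str = "main") -> list[StackedPR]:
    """Merge the lowest PR in the stack and retarget its direct child onto trunk."""
    if not stack:
        return stack
    bottom, rest = stack[0], stack[1:]
    bottom.merged = True
    for pr in rest:
        if pr.base == bottom.branch:
            pr.base = trunk   # a real tool would also rebase the branch here
    return rest


stack = [
    StackedPR(101, "feat-schema", base="main"),
    StackedPR(102, "feat-api", base="feat-schema"),
    StackedPR(103, "feat-ui", base="feat-api"),
]
remaining = merge_bottom(stack)
print([(pr.number, pr.base) for pr in remaining])
```

After the call, PR 102 targets `main` directly while PR 103 still sits on top of 102, so the stack stays reviewable in the same order.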

3. Merge Queue Management

Once a pull request passes review and CI checks, another risk appears: the merge race condition.

Two PRs may pass tests independently but break the main branch when merged together. Merge queues solve this problem by testing changes against the combined state of the branch before merging. Pull requests enter a queue where they are validated alongside other pending changes.

Only when the combined build succeeds does the system merge the change. This mechanism allows teams to maintain trunk-based development without destabilizing the main branch.
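The merge race is easy to reproduce in a toy model. The sketch below assumes a made-up repository state (a worker count and memory per worker) and a made-up CI invariant; it only illustrates the principle of testing each change on top of everything queued before it, not any vendor's queue.

```python
from typing import Callable

State = dict[str, int]
Change = Callable[[State], State]


def ci_passes(state: State) -> bool:
    """Hypothetical CI check: total memory must stay within a 16 GB budget."""
    return state["workers"] * state["memory_per_worker_gb"] <= 16


def process_queue(main: State, queue: list[tuple[str, Change]]) -> tuple[State, list[str]]:
    """Test each queued change against the current main, including earlier merges."""
    merged: list[str] = []
    for name, change in queue:
        candidate = change(dict(main))   # apply to a copy of the current main
        if ci_passes(candidate):
            main = candidate
            merged.append(name)
        # otherwise the change is ejected and main is left untouched
    return main, merged


def double_workers(s: State) -> State:
    return {**s, "workers": s["workers"] * 2}


def double_memory(s: State) -> State:
    return {**s, "memory_per_worker_gb": s["memory_per_worker_gb"] * 2}


main = {"workers": 4, "memory_per_worker_gb": 2}

# Each change passes CI on its own against the original main...
assert ci_passes(double_workers(main)) and ci_passes(double_memory(main))

# ...but the second is rejected in the queue, because 8 workers * 4 GB exceeds 16.
final, merged = process_queue(main, [("pr-1", double_workers), ("pr-2", double_memory)])
print(merged)
```

Without the queue, both PRs would merge on green checks and main would break on the next build.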

4. AI Code Review and Security Analysis

Even with human reviewers, many defects slip through code review. AI code review tools analyze pull requests automatically to detect patterns associated with bugs, security vulnerabilities, and architectural problems.

Unlike traditional static analysis tools that rely purely on rules, newer systems analyze the semantic context of the change, including control flow, dependency usage, and infrastructure configuration. This layer acts as an automated quality gate that runs on every pull request before it reaches production.

5. PR Analytics and Engineering Metrics

Automation without visibility quickly becomes blind automation.

PR analytics platforms track the health of the review system itself. They measure metrics such as cycle time, review pickup time, deployment frequency, and change failure rate.

These metrics help engineering leaders identify where the workflow slows down, whether the bottleneck is slow review pickup, large PRs, or CI delays. Without these insights, teams often optimize the wrong parts of their development process.
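As a rough illustration of how such metrics are derived, the sketch below computes pickup time and cycle time from three hypothetical PR records. The timestamps are invented, and cycle time is measured from PR open to merge as a simplifying assumption; analytics platforms typically start the clock earlier, at the first commit.

```python
from datetime import datetime
from statistics import quantiles


def hours_between(start: str, end: str) -> float:
    """Elapsed hours between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 3600


def p75(values: list[float]) -> float:
    """75th percentile: the third of the three quartile cut points."""
    return quantiles(values, n=4)[2]


# Hypothetical PR records; a real pipeline would pull these from the Git host's API.
prs = [
    {"opened": "2026-01-05T09:00", "first_review": "2026-01-05T13:00", "merged": "2026-01-06T09:00"},
    {"opened": "2026-01-05T10:00", "first_review": "2026-01-07T10:00", "merged": "2026-01-08T10:00"},
    {"opened": "2026-01-06T08:00", "first_review": "2026-01-06T09:00", "merged": "2026-01-06T20:00"},
]

pickup_hours = [hours_between(pr["opened"], pr["first_review"]) for pr in prs]
cycle_hours = [hours_between(pr["opened"], pr["merged"]) for pr in prs]

print("pickup hours:", pickup_hours)
print("cycle time P75 (hours):", p75(cycle_hours))
```

Separating pickup time from total cycle time is what lets a team tell "nobody looked at it" apart from "the review itself was slow".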

6. Dependency and Supply Chain Automation

The final layer of PR automation deals with software supply chain maintenance. Modern applications depend on hundreds or thousands of third-party packages. Manually tracking updates and vulnerabilities is impractical.

Dependency automation tools continuously scan projects for outdated or vulnerable libraries and automatically generate pull requests with updates. These automated updates ensure that security patches and version upgrades are applied regularly instead of accumulating as technical debt.
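The core loop of a dependency bot can be sketched in a few lines. This is a simplification, not Renovate's or Dependabot's logic: it assumes plain three-part numeric versions, and the package names and version numbers are hypothetical.

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Turn '1.4.2' into (1, 4, 2). Assumes purely numeric, dot-separated parts."""
    return tuple(int(part) for part in version.split("."))


def plan_updates(installed: dict[str, str], latest: dict[str, str]) -> list[dict[str, str]]:
    """List the update PRs a bot would open, classified as major, minor, or patch."""
    updates = []
    for name, current in sorted(installed.items()):
        newest = latest.get(name, current)
        cur, new = parse_version(current), parse_version(newest)
        if new <= cur:
            continue
        if new[0] > cur[0]:
            kind = "major"
        elif new[1] > cur[1]:
            kind = "minor"
        else:
            kind = "patch"
        updates.append({"package": name, "from": current, "to": newest, "kind": kind})
    return updates


# Hypothetical lockfile contents and registry answers.
installed = {"libalpha": "1.4.2", "libbeta": "2.0.0", "libgamma": "0.9.1"}
latest = {"libalpha": "1.4.5", "libbeta": "3.1.0", "libgamma": "0.9.1"}

for update in plan_updates(installed, latest):
    print(f"{update['package']}: {update['from']} -> {update['to']} ({update['kind']})")
```

The major/minor/patch classification matters because it is usually the input to automerge policy: patch updates that pass CI are the common candidates for merging without a human.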

The Real Goal of PR Automation

Taken together, these layers transform pull request management from a manual coordination task into an automated pipeline for safely integrating code changes.

The goal is not just faster merges.

The goal is a system where:

• code changes are reviewed quickly

• security issues are detected early

• the main branch remains stable

• dependency risks are continuously addressed

• engineering leaders can measure the entire process

Graphite: The Stacked-PR Standard, Now Inside Cursor

Graphite is the platform that brought stacked PRs from Meta's internal tooling to the broader industry. Founded in 2020, it now serves 100,000+ developers across 500+ companies including Shopify, Snowflake, Figma, and Robinhood.

The core workflow: create dependent PRs as a stack using Graphite's CLI or VS Code extension. Each PR builds on the one below it. Reviewers can approve each independently. When lower PRs merge, higher ones auto-rebase. The PR inbox centralizes all pending reviews into a single prioritized dashboard.

In December 2025, Cursor acquired Graphite for a reported price "way over" its $290M Series B valuation. The Graphite brand and product continue independently, but the integration roadmap is clear: Cursor wants to own the full loop from AI code generation in the editor to review and merge. They plan to combine Graphite's AI Reviewer (called Graphite Agent) with Cursor's Bugbot into a unified AI review system.

Graphite Agent, launched in October 2025, provides instant AI code reviews with an "unhelpful comment rate under 3%." Developers change their code 55% of the time when Agent flags an issue, a higher action rate than human reviewers at 49%. The merge queue is stack-aware, batching and testing multiple stacked PRs in parallel.

The biggest limitation: Graphite is GitHub-only. If your team uses GitLab, Bitbucket, or Azure DevOps, Graphite isn't an option. And at $40/user/month for the Team plan (which unlocks unlimited AI reviews and merge queue), it's the most expensive platform in this comparison. The free Hobby tier works for personal repos; the $20/user/month Starter tier unlocks org repos but lacks AI reviews and merge queue.

| Tier | Price | Includes |
|---|---|---|
| Hobby | Free | CLI, VS Code, limited AI reviews |
| Starter | $20/user/mo | Org repos, Slack notifications, insights |
| Team | $40/user/mo | Unlimited AI reviews, merge queue, automations |
| Enterprise | Custom | GHES, SAML/SSO, audit logs, custom SLAs |

Best for: Teams on GitHub that want stacked PRs as a core workflow and can justify $40/user/month.

Merge Queues: Aviator, Mergify, Trunk, and the Native Options

Merge queues became table stakes in 2025. As teams adopted trunk-based development and monorepos, the merge race problem escalated: two passing PRs that break main when combined. The solution is a queue that tests the combined state before merging.

Aviator: the enterprise merge queue

Aviator started as a merge queue and expanded into a broader PR automation platform. MergeQueue supports parallel, optimistic, and batched modes. For monorepos, it routes PRs to disjoint parallel queues based on impacted targets, so unrelated changes don't block each other. Priority queuing lets hotfixes jump the line.
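The idea of disjoint parallel queues can be illustrated with a small grouping function. This is a sketch of the general technique, not Aviator's algorithm; the PR names and Bazel-style target labels are hypothetical.

```python
def partition_into_queues(impacted: dict[str, set[str]]) -> list[list[str]]:
    """Group PRs so that any two PRs sharing a build target end up in the same queue."""
    queues: list[tuple[set[str], list[str]]] = []
    for pr, targets in impacted.items():
        hit = [q for q in queues if q[0] & targets]
        keep = [q for q in queues if not (q[0] & targets)]
        # Merge every queue this PR overlaps with into one, then append the PR.
        merged_targets = set(targets).union(*(t for t, _ in hit))
        merged_prs = [p for _, prs in hit for p in prs] + [pr]
        queues = keep + [(merged_targets, merged_prs)]
    return [prs for _, prs in queues]


# Hypothetical mapping from PR to the build targets it affects.
impacted = {
    "pr-1": {"//web"},
    "pr-2": {"//api"},
    "pr-3": {"//api", "//db"},
    "pr-4": {"//web", "//ui"},
}
for queue in partition_into_queues(impacted):
    print(queue)
```

Here the web changes and the API changes never wait on each other, while PRs that touch the same target are still validated in order.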

FlexReview is Aviator's standout feature for review assignment. It replaces CODEOWNERS with dynamic assignment based on reviewer domain expertise, code complexity, and SLO management. If a reviewer misses their response time target, FlexReview auto-assigns an alternate. It's timezone-aware and supports on-call rotation integration. For teams frustrated with CODEOWNERS' limitations (no load balancing, no conditional logic, no expertise weighting), FlexReview is the most sophisticated alternative available.

Aviator also ships a free, open-source stacked PRs CLI with no user limits. Pricing is per active GitHub collaborator: free for teams under 15 collaborators, Pro beyond that (contact Aviator for per-seat pricing, which isn't publicly listed). Self-hosted deployment is available for enterprises.

Mergify: rule-based PR automation

Mergify takes a different approach: YAML-based rules that trigger actions when PR conditions are met. Want to auto-merge Dependabot patches that pass CI? Write a rule. Want to auto-label PRs by size and assign reviewers by directory? Write a rule. Want to freeze merges during an incident? One rule.
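The condition-then-action model behind this style of automation can be sketched in Python. To be clear, this is not Mergify's YAML syntax or its engine; the `PullRequest` fields, rule names, action strings, and the 400-line threshold are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PullRequest:
    author: str
    ci_passed: bool
    lines_changed: int
    labels: list[str] = field(default_factory=list)


@dataclass
class Rule:
    name: str
    condition: Callable[[PullRequest], bool]
    action: str   # hypothetical action identifier


RULES = [
    Rule("automerge bot patches",
         lambda pr: pr.author == "dependabot[bot]" and pr.ci_passed,
         "merge"),
    Rule("label large PRs",
         lambda pr: pr.lines_changed > 400,
         "label:size/large"),
    Rule("incident freeze",
         lambda pr: "incident-freeze" in pr.labels,
         "block"),
]


def evaluate(pr: PullRequest, rules: list[Rule]) -> list[str]:
    """Return the actions of every rule whose condition matches the PR."""
    return [rule.action for rule in rules if rule.condition(pr)]


print(evaluate(PullRequest("dependabot[bot]", ci_passed=True, lines_changed=12), RULES))
print(evaluate(PullRequest("ana", ci_passed=True, lines_changed=950), RULES))
```

Keeping rules declarative, as data rather than scattered scripts, is what makes them reviewable and easy to change during an incident.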

The merge queue supports parallel checks (speculative execution), configurable batching, priority lanes, and two-step CI: lightweight tests on PR creation, heavy tests at merge time. At $21/seat/month for the Max plan (free for up to 5 users or unlimited open-source repos), Mergify is the most affordable dedicated merge queue. It is SOC 2 Type 2 certified on paid plans. It is primarily a GitHub tool, though CI status reporting works with GitLab CI.

Trunk: merge queue meets code quality

Trunk.io, backed by $28.5M from Andreessen Horowitz, bundles three products: Merge (merge queue), Check (metalinter managing 100+ linters), and flaky test detection with AI-powered root cause analysis. The merge queue uses build system integration (Bazel, Nx, Gradle) to create parallel queues based on impacted targets, similar to Aviator's approach.

Trunk's differentiator is flaky test management. It automatically detects, quarantines, and reports on flaky tests across 25+ frameworks, preventing false failures from blocking merges. Case studies are strong: Faire saved 9.4 hours of engineering productivity per day; Caseware cut merge time from 6 hours to 90 minutes.
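A common heuristic for spotting flakiness is a test that both passes and fails on the same commit. The sketch below implements only that heuristic on hypothetical run records; it is not Trunk's detection logic, which the source describes only at a high level.

```python
from collections import defaultdict


def find_flaky(runs: list[tuple[str, str, bool]]) -> set[str]:
    """runs holds (test_name, commit_sha, passed). A test is flagged as flaky
    if the same commit produced both a pass and a fail."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test, sha, passed in runs:
        outcomes[(test, sha)].add(passed)
    return {test for (test, _), seen in outcomes.items() if seen == {True, False}}


# Hypothetical CI history: test_checkout is retried on commit abc123 and flips.
runs = [
    ("test_login", "abc123", True),
    ("test_checkout", "abc123", False),
    ("test_checkout", "abc123", True),
    ("test_search", "abc123", False),
    ("test_search", "def456", True),   # different commit, so this is a fix, not a flake
]
print(find_flaky(runs))
```

Keying on the commit is the important design choice: a test that fails on one commit and passes on the next may simply have been fixed.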

Pricing is notable: the Team tier currently shows $0/committer/month on their pricing page, which may indicate a growth-stage pricing strategy. Free tier supports up to 5 repo committers. Enterprise adds SAML SSO and on-premise deployment.

GitHub's native merge queue

GitHub's built-in merge queue is free for public repos but requires GitHub Enterprise Cloud for private repos; it's not available on Free, Pro, or Team plans. It supports batching (up to 100 parallel pipelines), configurable merge methods, and requires CI to trigger on merge_group events. For teams already on Enterprise Cloud ($21/user/month), it's the zero-setup option. For everyone else, Aviator, Mergify, or Trunk fill the gap.

| Platform | Free tier | Paid | Platform support | Key strength |
|---|---|---|---|---|
| Aviator | <15 collaborators | Per-collaborator (contact) | GitHub | FlexReview smart assignment |
| Mergify | Up to 5 users | $21/seat/mo | GitHub | Rule-based automation |
| Trunk.io | Up to 5 committers | $0 displayed (Team) | GitHub | Flaky test management |
| GitHub native | Public repos | Enterprise Cloud ($21/user/mo) | GitHub | Zero-setup for Enterprise |
| GitLab merge trains | None | Premium ($29/user/mo) | GitLab | Native to GitLab |

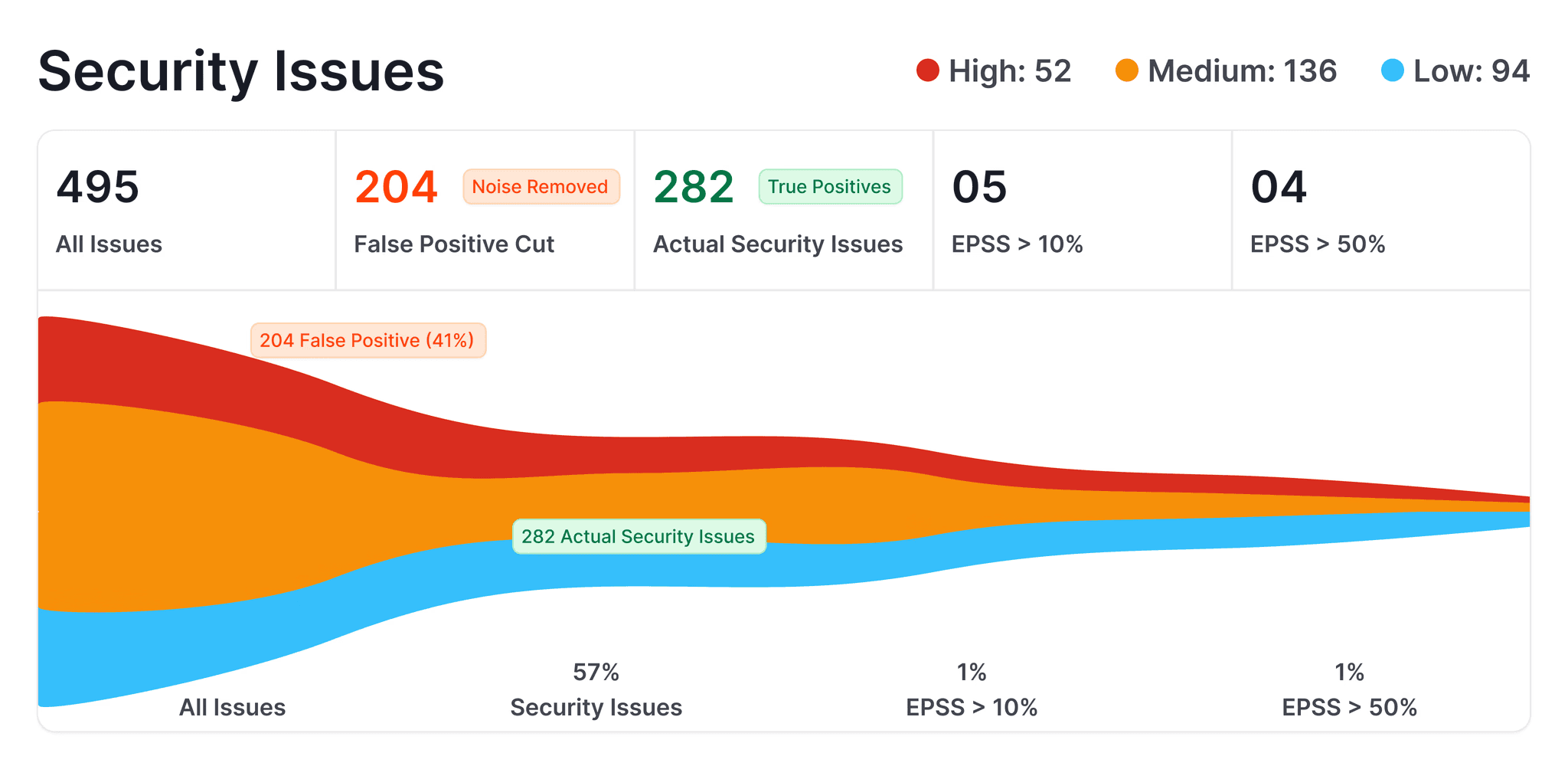

AI Code Review: The Security and Quality Layer Most Stacks Miss

Merge queues keep main green. Stacked PRs keep developers unblocked. But neither catches a hardcoded AWS key, a SQL injection, or a logic bug in business-critical code. That's the AI code review layer, and the data shows it's now non-negotiable.

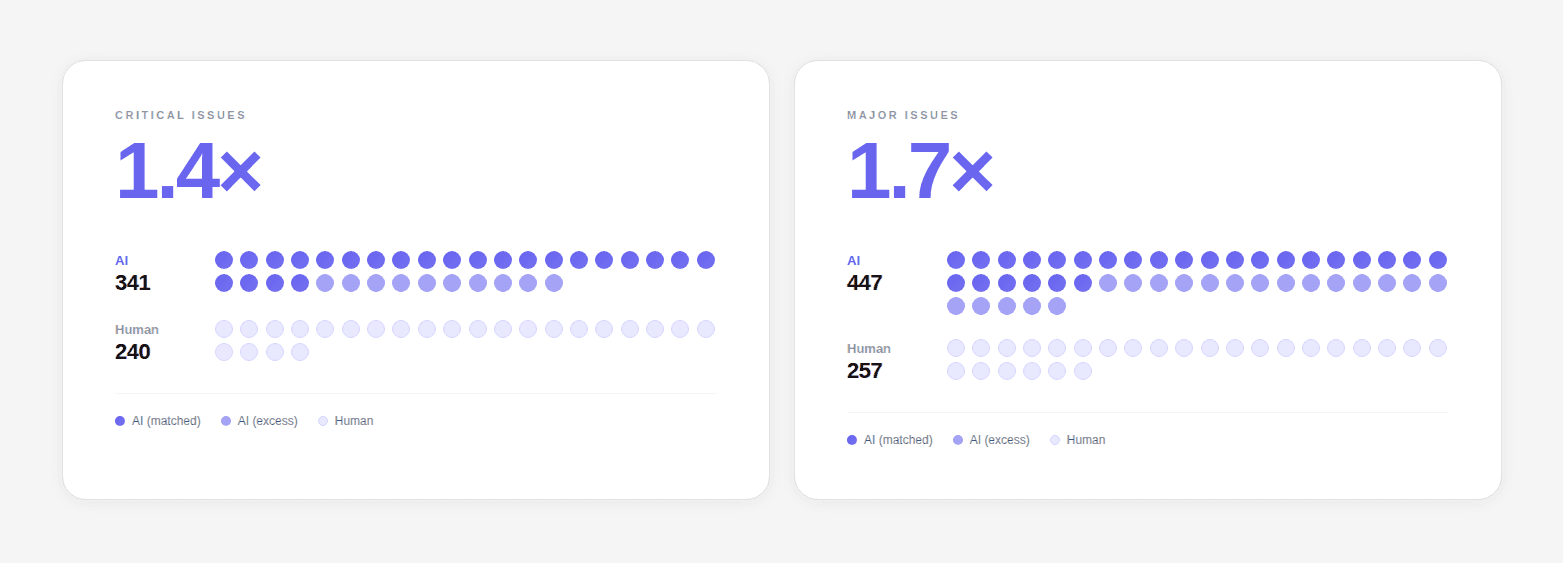

AI-generated code contains 1.7x more issues than human-written code: logic errors 1.75x more frequent, security vulnerabilities 1.57x more, maintainability issues 1.64x more.

AI PRs have a 32.7% acceptance rate compared to 84.4% for human PRs. And they wait 4.6x longer for review pickup because reviewers don't trust them as much.

This is the quality gap that AI code review tools are built to close. If your team uses Copilot or Cursor to generate code, you need automated quality gates that catch what AI-generated code introduces.

CodeAnt AI: AI-Native Review + Security Across All Platforms

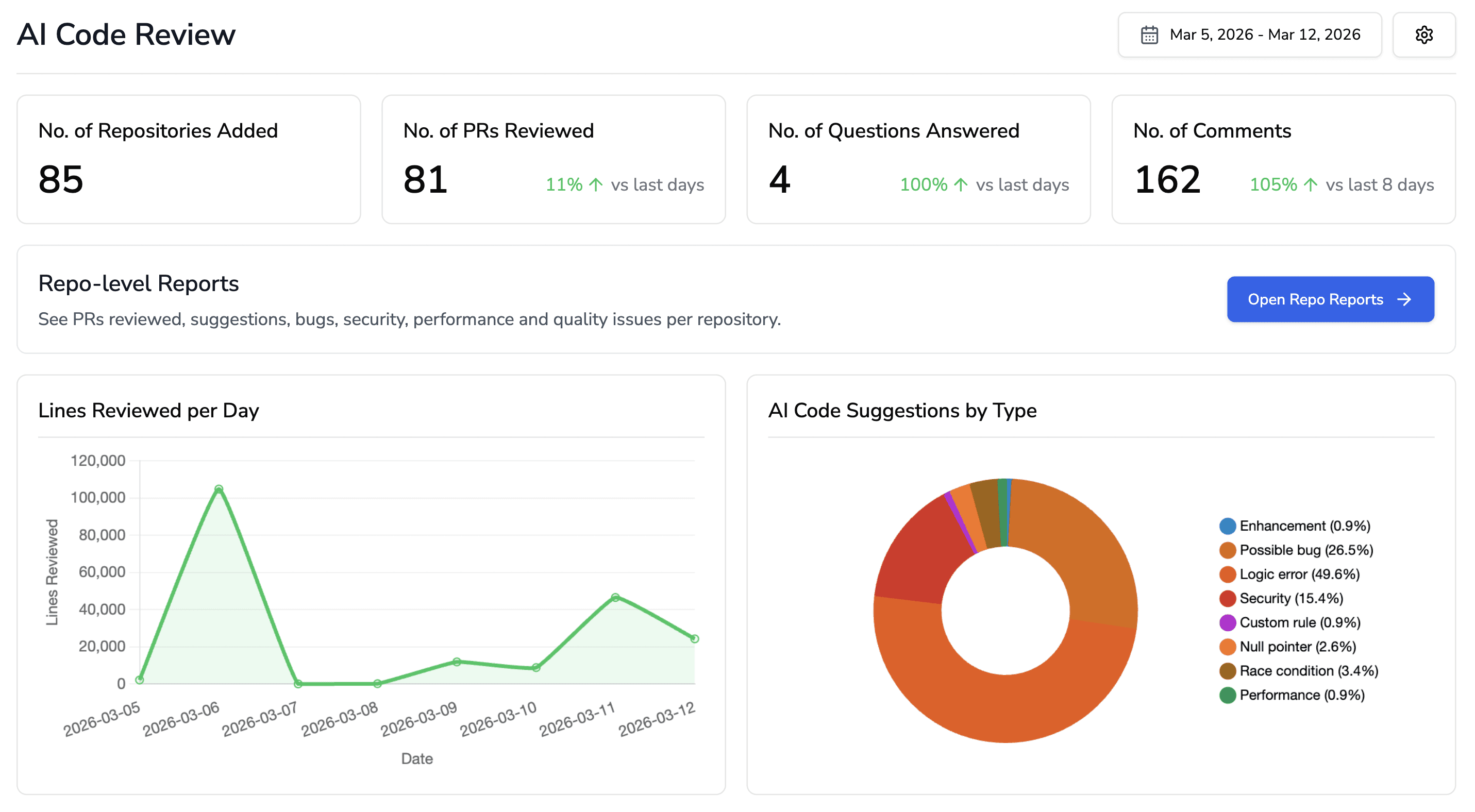

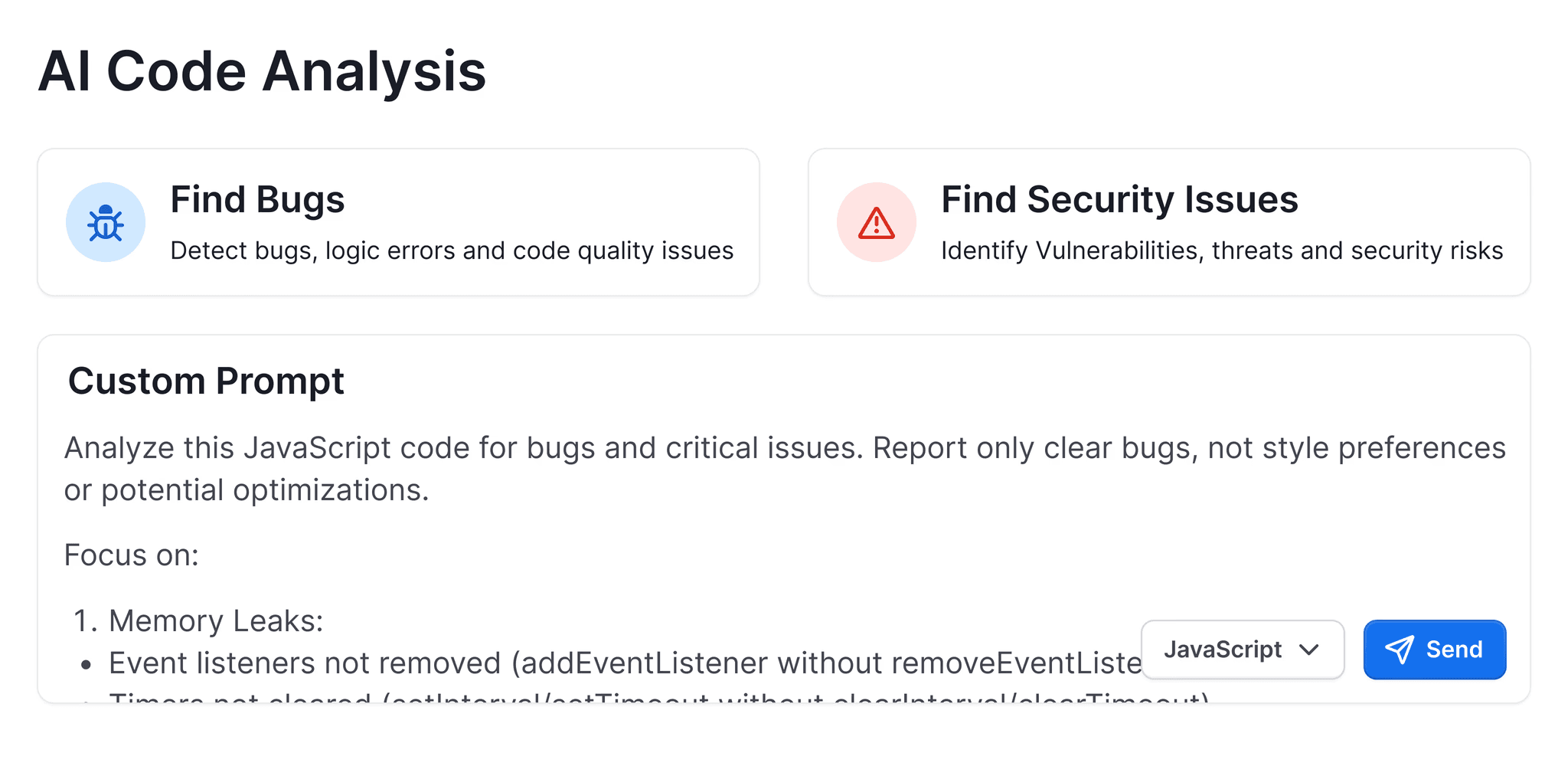

CodeAnt AI operates as the AI-native code review and security layer, the piece that merge queues, stacked PR tools, and analytics platforms don't cover.

At $24/user/month, it runs on all four major git platforms: GitHub, GitLab, Bitbucket, and Azure DevOps.

That multi-platform support is a genuine differentiator: Graphite, Aviator, Mergify, and Trunk are all GitHub-only or GitHub-primary.

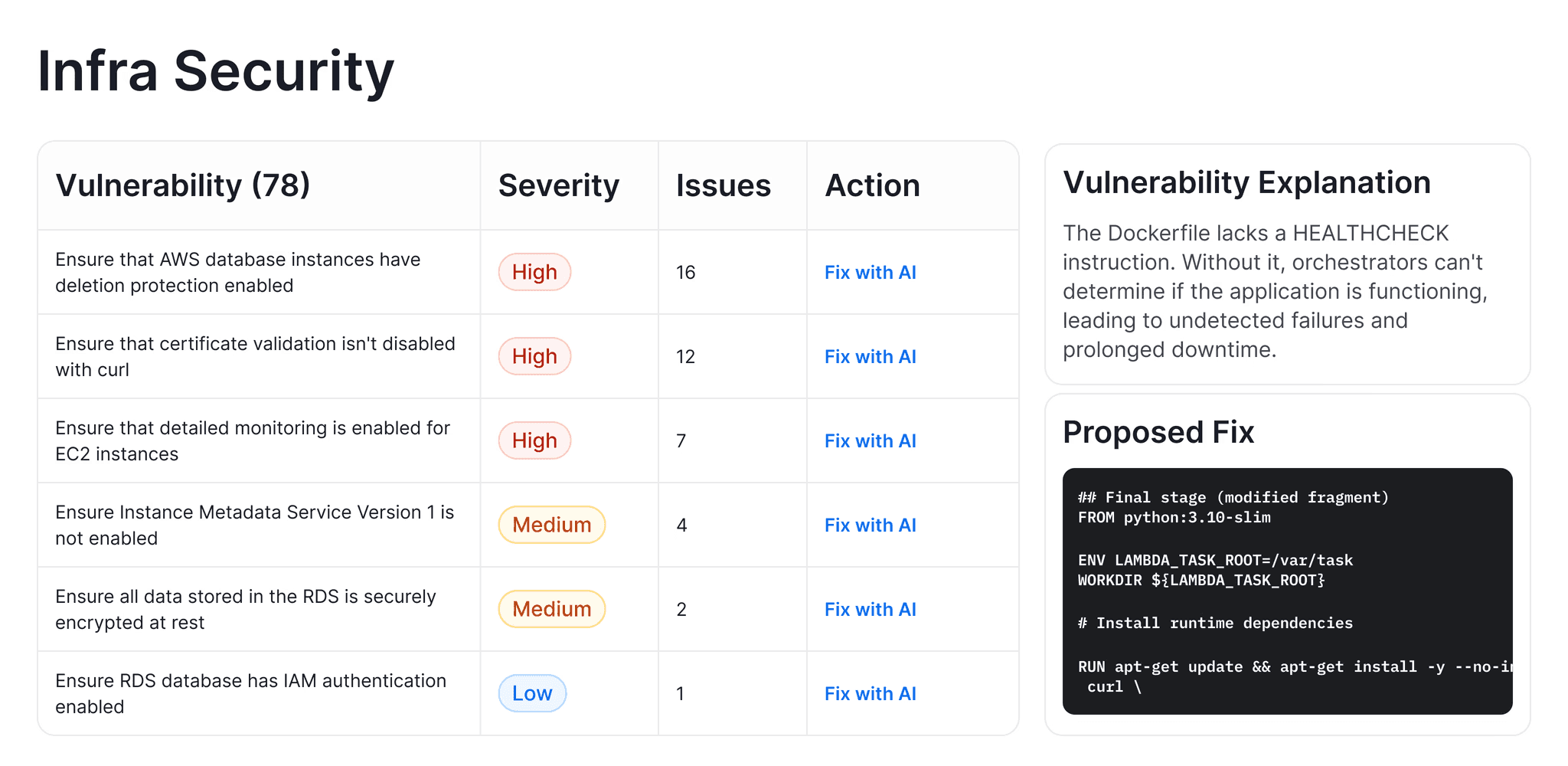

The platform bundles capabilities that typically require separate tools:

• AI code review (line-by-line PR analysis with one-click auto-fix)

• SAST (semantic, not just rule-based)

• Secrets detection (hardcoded credentials, API keys)

• IaC security (Kubernetes, Docker, YAML misconfigurations)

• SCA (software composition analysis for vulnerable dependencies)

• Code quality (dead code, duplicates, complexity)

It supports 30+ languages, monorepos, and custom rule enforcement.

What makes CodeAnt AI distinct from surface-level AI reviewers is depth. It doesn't just flag "this function is complex." It identifies privilege escalation in Kubernetes manifests, catches missing resource limits in Docker configs, and surfaces CVEs in transitive dependencies with fix suggestions.

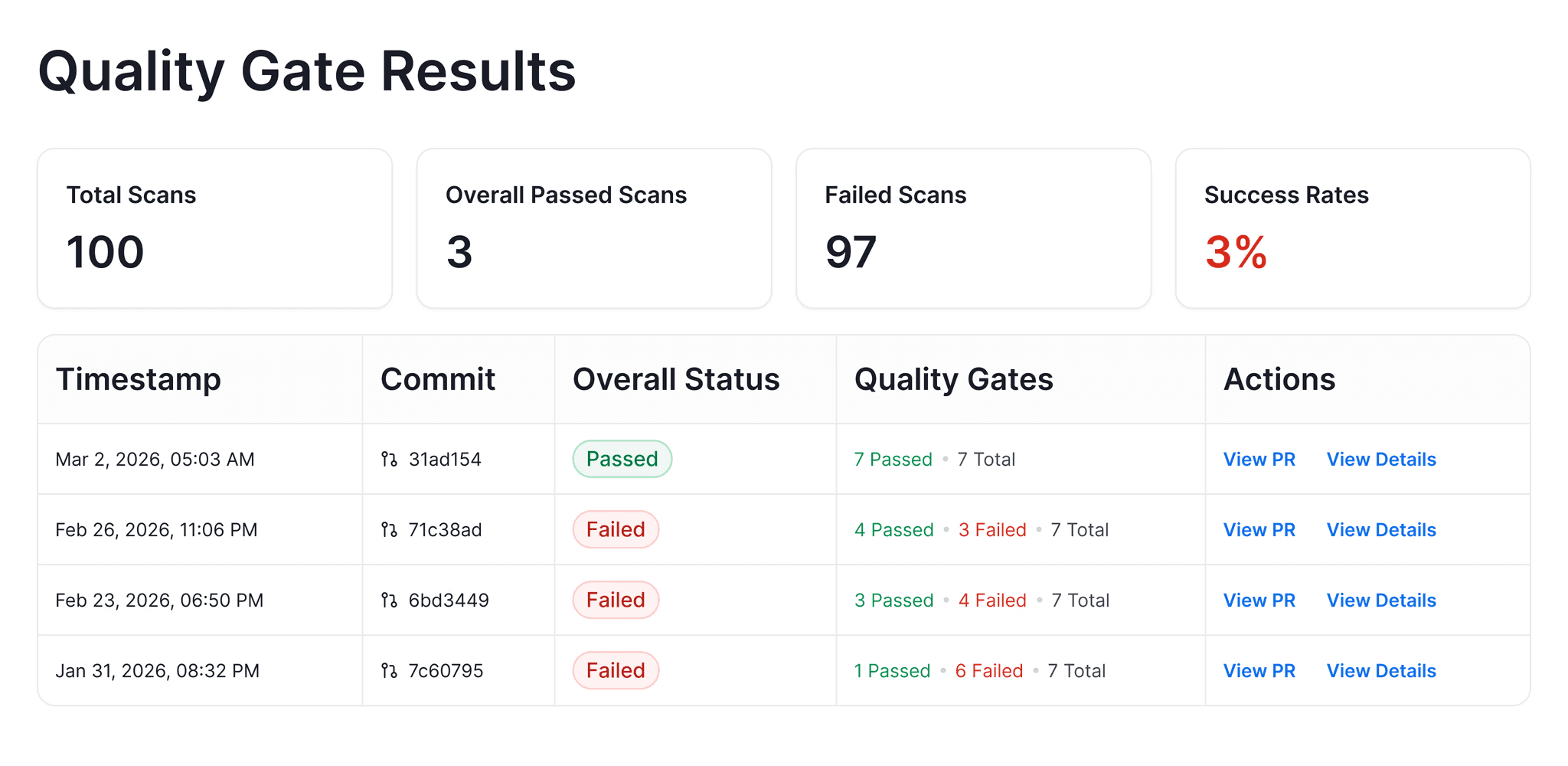

Quality gates block builds that fail security or quality thresholds. Audit reports export as PDF or Excel for compliance teams.
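A quality gate reduces to "scan the change, count findings, compare to a threshold". The sketch below shows that shape for a single check, hardcoded AWS access key IDs on added diff lines. It is a generic illustration rather than CodeAnt AI's implementation; the function names and the zero-findings threshold are assumptions, and the key shown is the placeholder from AWS's own documentation.

```python
import re

# AWS access key IDs for long-term credentials start with "AKIA" followed by 16 characters.
AWS_ACCESS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def added_lines(diff: str) -> list[str]:
    """Return lines added by a unified diff, skipping the '+++' file header."""
    return [line[1:] for line in diff.splitlines()
            if line.startswith("+") and not line.startswith("+++")]


def gate(diff: str, max_findings: int = 0) -> tuple[bool, list[str]]:
    """Pass only if the number of suspicious added lines is within the threshold."""
    findings = [line.strip() for line in added_lines(diff) if AWS_ACCESS_KEY_ID.search(line)]
    return len(findings) <= max_findings, findings


sample_diff = "\n".join([
    "+++ b/config.py",
    '+AWS_KEY = "AKIAIOSFODNN7EXAMPLE"',   # documented placeholder, not a real secret
    '+REGION = "us-east-1"',
    "-DEBUG = True",
])

passed, findings = gate(sample_diff)
print("gate passed:", passed)
print("findings:", findings)
```

Scanning only added lines keeps the gate focused on what the PR introduces, so pre-existing issues do not block unrelated changes.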

For a deep dive on measuring the ROI of AI code review, we've published a separate analysis.

The customer evidence is concrete.

Commvault (800+ developers, NASDAQ-listed) deployed CodeAnt AI in a fully air-gapped on-premise environment and achieved a 98% reduction in time to first review: review cycles that took days collapsed to minutes.

Bajaj Finserv (300+ developers) replaced SonarCloud entirely, processing 8,000+ PRs with sub-minute turnaround. Their CISO noted review time dropped "from hours to seconds."

Akasa Air scanned 1 million+ lines of code, flagging 900+ security issues and 100,000+ quality issues across their platform engineering stack.

CodeAnt AI is SOC 2 Type II and HIPAA compliant, with zero data retention: code is analyzed in memory and never persisted.

On-premise, VPC, and air-gapped deployment options are available for enterprise teams with strict requirements.

GitHub Copilot Code Review

Copilot code review is GitHub's native AI review layer. It works: request a review from Copilot on any PR and it leaves comments with suggested fixes. Custom instructions via copilot-instructions.md tune it to your standards. Since late 2025, it can hand off fixes to Copilot's coding agent to auto-generate fix PRs.

The critical limitation: Copilot never approves or requests changes, only comments. It doesn't count toward required approvals. Each review consumes one "premium request" from your Copilot plan (50/month on Free, 300 on Pro at $10/month, 1,500 on Pro+ at $39/month). For organizations, Copilot Business ($19/user/month) or Enterprise ($39/user/month) provides pooled allowances. It also lacks dedicated SAST, secrets detection, IaC scanning, and SCA; it's a general-purpose reviewer, not a security tool.

Check out this GitHub Copilot alternative.

CodeRabbit

CodeRabbit ($24/user/month Pro) focuses on AI PR review with sequence diagrams, file walkthroughs, and integrations with 40+ linters. It supports GitHub, GitLab, Bitbucket, and Azure DevOps. At 13 million+ PRs processed, it has significant scale. The free tier includes PR summarization with rate limits. Check out this CodeRabbit alternative.

Qodo

Qodo (formerly CodiumAI, ~$19–30/user/month Teams) emphasizes "code integrity": testing, quality, and compliance. Its Context Engine claims 80% codebase understanding accuracy. Qodo 2.0 (February 2026) introduced a multi-agent architecture. It supports all four git platforms. Check out this Qodo alternative.

For a full comparison of these tools and how to separate signal from noise in AI code review, see our detailed breakdown.

PR Analytics: Measuring What Matters with LinearB and Peers

You can't fix what you can't measure. PR analytics platforms show where time goes, and the answers usually surprise engineering leaders.

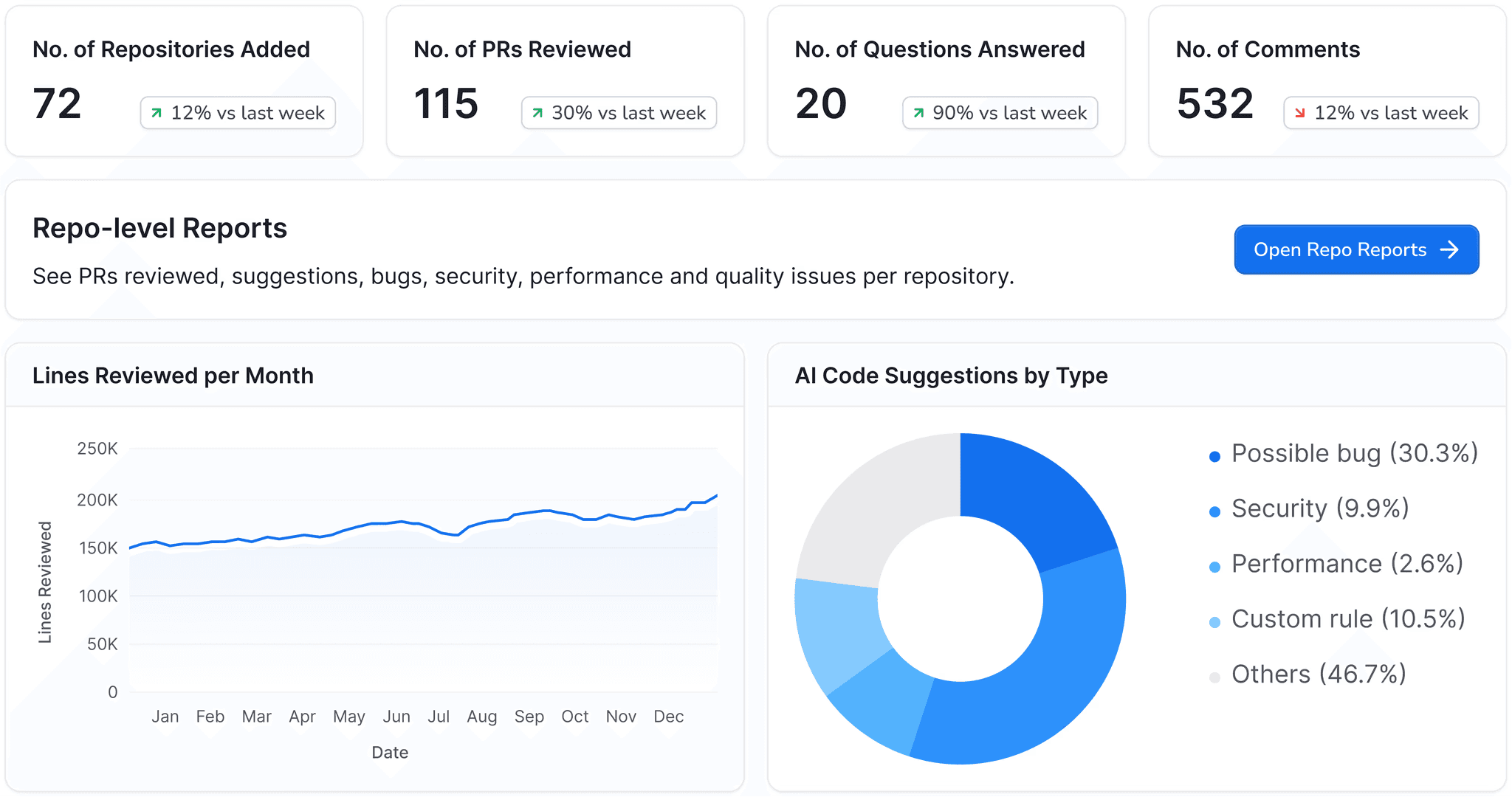

LinearB

LinearB is the most established platform in this category, with data from 8.1 million+ PRs across 4,800+ organizations. The free tier (up to 8 contributors) includes DORA metrics, a meaningful differentiator. The Pro plan runs $420/contributor/year (~$35/month); Enterprise is $549/contributor/year (~$46/month).

The platform measures the full cycle: coding time, pickup time, review time, and deploy time. It decomposes PR bottlenecks so you can see if your problem is slow review pickup (team culture), slow review itself (PR too large), or slow deployment (CI/CD pipeline issues).

gitStream, LinearB's workflow automation engine, is where the platform gets hands-on. Using YAML configuration, gitStream auto-assigns reviewers by code expertise, auto-merges low-risk changes, labels PRs by estimated review time, and skips unnecessary CI pipelines. It works across GitHub, GitLab, and Bitbucket, which is broader platform support than most competitors offer. Think of it as a smarter, programmable replacement for CODEOWNERS.

LinearB's 2026 benchmarks set the bar for what "good" looks like:

| Performance level | Cycle time (P75) |
|---|---|
| Elite | <25 hours |
| Good | 25–72 hours |
| Fair | 73–161 hours |
| Needs focus | >161 hours |
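To check your own numbers against these tiers, the thresholds translate directly into a small helper. The function name is hypothetical, and because the published ranges leave a gap between 72 and 73 hours, the sketch assumes values in that gap count as "Fair".

```python
def classify_cycle_time(p75_hours: float) -> str:
    """Map a P75 cycle time in hours to the benchmark tiers listed above."""
    if p75_hours < 25:
        return "Elite"
    if p75_hours <= 72:
        return "Good"
    if p75_hours <= 161:
        return "Fair"
    return "Needs focus"


for hours in (18, 48, 120, 200):
    print(f"{hours}h -> {classify_cycle_time(hours)}")
```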

Faros AI

Faros AI positions as an engineering intelligence platform for large organizations (starting ~$29/contributor). Its AI Productivity Paradox research — the study showing 98% more PRs but 91% longer reviews — established it as a credible voice on AI's real impact. The platform tracks all five DORA metrics (including the newer rework rate) and integrates with 25+ tools.

Swarmia

Swarmia (€20–39/developer/month) focuses on the SPACE framework and developer experience surveys alongside DORA metrics. Strong Slack integration for working agreements and automated reminders. GitHub-primary.

Haystack

Haystack ($25–45/user/month) takes a DORA-first approach with sprint summaries, anomaly detection, and burnout alerts. Y Combinator-backed, GitHub and Jira integration, but limited platform support.

Dependency Automation: Renovate vs. Dependabot

Dependency updates generate the highest volume of automated PRs on most codebases. Both major options are free.

Dependabot

Dependabot is built into GitHub: zero setup, 30+ package ecosystems, and automatic vulnerability alerts from GitHub's Advisory Database. It creates PRs for security patches and version updates on a configurable schedule. The limitations: it is GitHub-only, offers limited customization and no shared config presets across repos, and handles monorepos weakly. It has also been criticized for alert noise, flagging vulnerabilities in dependencies even when the impacted code paths aren't used.

Renovate

Renovate is open source (AGPL-3.0) and runs on GitHub, GitLab, Bitbucket, Azure DevOps, Gitea, and Forgejo. It supports 90+ package managers (3x Dependabot), regex managers for custom file formats, shared config presets across an entire org, a dependency dashboard issue for at-a-glance status, intelligent monorepo grouping, and built-in automerge rules. Merge confidence badges (Age, Adoption, Passing, Confidence) provide richer context than Dependabot's single compatibility score.

The practical recommendation: Use Renovate if you're on multiple platforms, have monorepos, or need organizational standardization. Use Dependabot if you're GitHub-only and want zero-config simplicity. Some teams run both: Dependabot for security alerts, Renovate for version management.

How to Assemble a Complete PR Automation Stack

No single platform does everything well. The most effective stacks combine tools from different layers. Here's the framework.

Layer 1: Merge queue (pick one). If you're on GitHub Enterprise Cloud, start with the native merge queue. If you're not on Enterprise, or need features like FlexReview or flaky test handling, choose Aviator, Mergify, or Trunk. GitLab teams already have merge trains on Premium.

Layer 2: AI review and security (pick one). This layer catches bugs, vulnerabilities, and quality issues that merge queues don't see. CodeAnt AI covers the broadest surface (AI review + SAST + secrets + IaC + SCA) at $24/user/month across all platforms. CodeRabbit and Copilot are alternatives if your needs are lighter. For teams still running SonarQube or SonarCloud, CodeAnt AI is the modern replacement. You can see why here.

Layer 3: Dependency automation (pick one). Renovate for multi-platform or complex setups. Dependabot for GitHub-only simplicity. This is the lowest-effort, highest-ROI layer: just turn it on.

Layer 4: PR analytics (optional but valuable for managers). LinearB's free tier gives you DORA metrics at no cost. The paid tiers add gitStream automation and deeper cycle-time analysis. Engineering managers and VPs need this data to identify bottlenecks and justify tooling investments.

Layer 5: Stacked PRs (optional, high impact). If your team's median PR merge time exceeds 24 hours and reviewers consistently complain about PR size, stacked PRs are the structural fix. Graphite leads here (GitHub only). Aviator offers a free open-source CLI alternative.

Decision Framework: Match Your Situation to the Right Tools

| Situation | Recommended stack | Monthly cost estimate (20 devs) |
|---|---|---|
| GitHub-only, want everything integrated | Graphite (Team) + Dependabot | ~$800 |
| GitHub, need security + quality gates | Mergify (Max) + CodeAnt AI + Dependabot | ~$900 |
| Multi-platform (GitLab/Bitbucket/Azure DevOps) | CodeAnt AI + Renovate + LinearB (Free) | ~$480 |
| Enterprise, need compliance + on-prem | CodeAnt AI (Enterprise) + Aviator (Enterprise) + LinearB (Enterprise) | Custom |
| Budget-constrained startup (<20 devs) | GitHub native merge queue + CodeAnt AI + Renovate | ~$480 |
| Monorepo with flaky test problems | Trunk.io + CodeAnt AI + Renovate | ~$480+ |
| Already using SonarQube, want to modernize | CodeAnt AI (replaces SonarQube) + existing merge queue | ~$480 |

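Note: estimates use base paid tiers and exclude the cost of the Git hosting plan itself (GitHub's native merge queue requires Enterprise Cloud for private repos). Free tiers are counted as $0 even where seat caps apply, such as LinearB's 8-contributor limit. Enterprise pricing varies.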
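The estimates above are simple per-seat arithmetic, which you can rerun for your own headcount. The prices below are the per-user monthly figures quoted in this article; the dictionary and function names are just illustrative.

```python
# Per-user monthly list prices as quoted in this article (USD); free tools are 0.
PRICE_PER_USER = {
    "Graphite Team": 40,
    "Mergify Max": 21,
    "CodeAnt AI": 24,
    "Dependabot": 0,
    "Renovate": 0,
    "LinearB Free": 0,
}


def monthly_cost(stack: list[str], developers: int = 20) -> int:
    """Sum the per-user prices of every tool in the stack, times the team size."""
    return sum(PRICE_PER_USER[tool] for tool in stack) * developers


print(monthly_cost(["Graphite Team", "Dependabot"]))                 # 40 * 20
print(monthly_cost(["Mergify Max", "CodeAnt AI", "Dependabot"]))     # (21 + 24) * 20
print(monthly_cost(["CodeAnt AI", "Renovate", "LinearB Free"]))      # 24 * 20
```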

Pull Request Automation Is Now a Development Requirement

Software teams are shipping more code than ever. AI coding tools have dramatically increased the number of pull requests flowing through repositories every day. But the real bottleneck has shifted from writing code to reviewing, testing, and merging it safely.

That is why pull request automation is no longer optional.

The most effective engineering teams now rely on a layered automation stack:

• Merge queues to keep the main branch stable

• AI code review to detect security issues and logic bugs

• Dependency automation to eliminate supply chain risk

• PR analytics to measure developer productivity and DORA metrics

• Stacked PR workflows to reduce review friction

When these layers work together, teams achieve:

• Faster pull request cycle times

• Fewer production incidents

• Higher developer productivity

• Stronger security posture

The most important layer for modern teams is AI-assisted code review. As AI-generated code becomes more common, automated quality gates are essential to prevent security vulnerabilities and logic errors from reaching production.

👉 Start a free trial of CodeAnt AI to add automated AI code review, security scanning, and quality checks to every pull request.

Because the real goal of pull request automation is simple: Ship code faster without shipping more bugs.
