CODEANT AI · BENCHMARK ANALYSIS

Logging is one of the most overlooked sources of security risk in modern software systems. Developers rely on logs to debug production issues, monitor application behavior, and diagnose failures. But the same logs can unintentionally expose sensitive data such as:

authentication tokens

passwords

API keys

session identifiers

personally identifiable information (PII)

These leaks often occur silently and go undetected until a security audit or an incident brings them to light.

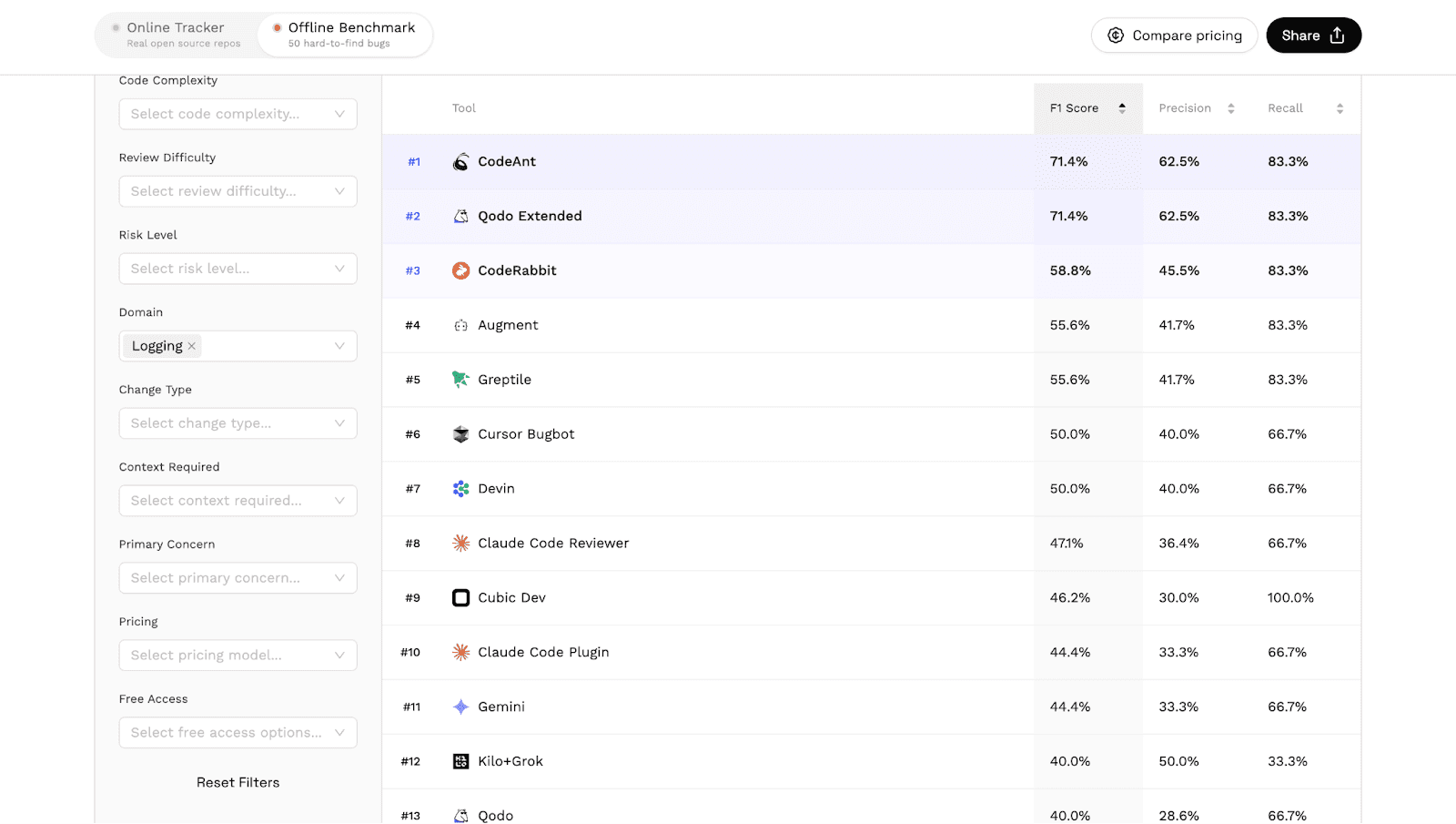

In Martian’s independent AI code review benchmark, which evaluated 17 AI code review tools across real pull requests, CodeAnt AI ranked #1 in detecting logging-related issues and potential PII leaks.

The benchmark results for this category are available here: https://codereview.withmartian.com/?model=anthropic_claude-opus-4-5-20251101&mode=offline&domain=logging

This article explores:

why logging mistakes are a major security risk

how PII leaks occur in production systems

how the benchmark evaluates logging issues

why CodeAnt ranked first in this category

Why Logging Can Become a Security Risk

Logs are often treated as internal diagnostic tools. Developers assume they will only be accessed by trusted engineers. In practice, logs frequently travel far beyond their original destination. Modern systems often forward logs to:

centralized logging platforms

monitoring tools

third-party observability services

long-term storage systems

This means that a sensitive value logged in one service may end up replicated across multiple systems.

Once the data enters these pipelines, removing it becomes extremely difficult. A single careless log statement can therefore expose sensitive information across an entire infrastructure.

How PII and Secrets Leak Through Logs

Sensitive data leaks usually occur when developers log entire objects or request payloads without filtering. A typical case is a handler that writes the whole incoming request to the log. For example:
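A minimal Python sketch of this pattern, in which the `handle_signup` handler, the dict-shaped payload, and the field values are all assumptions made for illustration:

```python
import io
import logging

# Hypothetical setup: capture log output in memory so it can be inspected.
log_stream = io.StringIO()
logger = logging.getLogger("signup")
logger.setLevel(logging.INFO)
logger.addHandler(logging.StreamHandler(log_stream))
logger.propagate = False


def handle_signup(request: dict) -> None:
    # Risky: the entire payload, secrets included, is written to the log.
    logger.info("Received signup request: %s", request)


# Made-up payload for illustration.
handle_signup({
    "email": "user@example.com",
    "password": "hunter2",
    "auth_token": "tok_abc123",
})

# The captured log text now contains the password and token verbatim.
leaked = log_stream.getvalue()
```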

If the request object contains fields such as:

email

password

authentication token

the log entry may expose those values directly.

Another common example occurs during debugging, when a developer temporarily logs a credential to check its value.
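A hypothetical debugging statement of this kind might look like the following Python sketch; the `login` function, the logger name, and the placeholder password check are assumptions for illustration:

```python
import io
import logging

# Hypothetical setup: capture debug output in memory for inspection.
debug_stream = io.StringIO()
debug_logger = logging.getLogger("auth.debug")
debug_logger.setLevel(logging.DEBUG)
debug_logger.addHandler(logging.StreamHandler(debug_stream))
debug_logger.propagate = False


def login(username: str, password: str) -> bool:
    # Added while chasing a login failure; risky if it ships to production.
    debug_logger.debug("login attempt user=%s password=%s", username, password)
    return password == "correct horse"  # placeholder check for the sketch


login("alice", "correct horse")
debug_output = debug_stream.getvalue()
```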

This statement may appear harmless during development, but if it reaches production logs it could expose credentials.

Even worse, logs are often retained for months or years. This means leaked data can remain accessible long after the original bug is fixed.

Logging Bugs Are Hard for Static Analysis Tools

Traditional static analysis tools detect many security vulnerabilities, but logging issues often fall outside their scope. There are several reasons for this.

Context matters

Whether a log statement is dangerous depends on the data being logged. A static rule cannot easily determine whether a variable contains sensitive information.
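To make the limitation concrete, here is a rough Python sketch of a name-based rule. The regular expressions and the `naive_rule_flags` helper are hypothetical and do not represent any particular tool's implementation:

```python
import re

# Hypothetical rule: flag a logging call if its argument text mentions
# an obviously sensitive name.
SENSITIVE_NAME = re.compile(r"password|token|secret|api_key", re.IGNORECASE)
LOG_CALL = re.compile(r"\blog(?:ger)?\.\w+\((.*)\)")


def naive_rule_flags(line: str) -> bool:
    """Return True when the rule would report this line of source code."""
    match = LOG_CALL.search(line)
    return bool(match and SENSITIVE_NAME.search(match.group(1)))


# Flagged: the variable name gives the secret away.
caught = naive_rule_flags('logger.info("pw=%s", password)')

# Not flagged: `body` may hold a password, but nothing in its name says so.
missed = naive_rule_flags('logger.info("payload=%s", body)')

# Flagged although harmless: the word "token" appears only in the message.
false_alarm = naive_rule_flags('logger.info("token refresh completed")')
```

The rule sees only names, so it both misses the risky payload log and raises a warning on a harmless message.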

Data flows across multiple layers

Sensitive values may pass through several functions before reaching a logging statement. Tracking this data flow requires analyzing the entire code path.
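A small Python sketch of such a path, using hypothetical `parse_headers`, `build_context`, and `audit` functions. The logging call itself mentions only `ctx`; the token entered two calls earlier:

```python
import io
import logging

# Hypothetical setup: capture log output in memory for inspection.
flow_stream = io.StringIO()
flow_logger = logging.getLogger("orders")
flow_logger.setLevel(logging.INFO)
flow_logger.addHandler(logging.StreamHandler(flow_stream))
flow_logger.propagate = False


def parse_headers(headers: dict) -> dict:
    # Layer 1: the bearer token is copied into a generic dict.
    return {"auth": headers["Authorization"]}


def build_context(headers: dict) -> dict:
    # Layer 2: the dict is enriched and passed along.
    ctx = parse_headers(headers)
    ctx["path"] = "/orders"
    return ctx


def audit(ctx: dict) -> None:
    # Layer 3: nothing on this line hints that `ctx` carries a credential.
    flow_logger.info("request context: %s", ctx)


audit(build_context({"Authorization": "Bearer s3cr3t"}))
flow_output = flow_stream.getvalue()
```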

Logging frameworks differ

Different languages and frameworks handle logging in different ways. Detecting risky patterns requires understanding how the logging system works.

These factors make logging security issues a challenging problem for automated tools.

Benchmark Results: Logging and PII Detection

Martian’s benchmark includes a domain specifically focused on logging issues and sensitive data exposure.

Tools are evaluated on their ability to identify problematic log statements inside pull requests.

The leaderboard for this category shows a clear result.

CodeAnt AI ranked #1 in detecting logging issues and potential PII leaks.

The benchmark evaluates tools using standard metrics such as:

precision

recall

F1 score

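Precision is the share of a tool's reported issues that are genuine, recall is the share of genuine issues that the tool reports, and the F1 score is the harmonic mean of the two. Together they measure how accurately a tool identifies real issues without overwhelming developers with excessive warnings.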
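As a short Python sketch, the three metrics can be computed from counts of true positives, false positives, and false negatives. The counts below are hypothetical and are not taken from the benchmark:

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 from raw detection counts."""
    # Precision: fraction of reported issues that are real.
    precision = tp / (tp + fp) if tp + fp else 0.0
    # Recall: fraction of real issues that were reported.
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1: harmonic mean of precision and recall.
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Hypothetical counts for illustration only; not benchmark data.
precision, recall, f1 = precision_recall_f1(tp=8, fp=2, fn=4)
```

A tool that flags everything scores high recall but low precision, and a tool that flags almost nothing does the reverse, which is why F1 is commonly reported alongside both.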

Because the benchmark draws on pull requests from actual repositories, the results are more likely to reflect how tools behave in day-to-day development than results from synthetic test cases would be.

Why Logging Security Matters More Than Ever

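Logging mistakes have led to publicly disclosed security incidents. In 2018, for example, both Twitter and GitHub reported bugs that had written plaintext passwords to internal logs.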

Sensitive data appearing in logs can expose:

authentication tokens

user credentials

personal information

payment details

Once logged, this data may become accessible to:

internal employees

third-party vendors

attackers who gain access to log storage systems

Even organizations with strong security practices sometimes overlook logging risks because the exposure is indirect: the data leaks through a diagnostic side channel rather than through the application's main data path.

For this reason, many security guidelines recommend strict controls on what information can appear in logs. Automated review tools can help enforce these controls during the development process.
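One way such a control can be enforced at runtime is a redaction filter attached to the log handler. The following is a minimal sketch using Python's standard `logging` module; the `RedactingFilter` class, the `SECRET_PAIR` pattern, and the list of sensitive key names are assumptions for illustration, not a complete policy:

```python
import io
import logging
import re

# Hypothetical pattern: key=value pairs whose key looks sensitive.
SECRET_PAIR = re.compile(r"(?i)\b(password|token|api_key)=(\S+)")


class RedactingFilter(logging.Filter):
    """Mask sensitive key=value pairs before a record is emitted."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Render the final message, mask secrets, and clear the args so
        # the handler does not try to format the message a second time.
        record.msg = SECRET_PAIR.sub(r"\1=[REDACTED]", record.getMessage())
        record.args = ()
        return True  # keep the record, now redacted


# Hypothetical setup: capture output in memory for inspection.
stream = io.StringIO()
handler = logging.StreamHandler(stream)
handler.addFilter(RedactingFilter())

safe_logger = logging.getLogger("payments")
safe_logger.setLevel(logging.INFO)
safe_logger.addHandler(handler)
safe_logger.propagate = False

safe_logger.info("charge failed user=%s token=%s", "alice", "tok_live_123")
redacted_output = stream.getvalue()
```

A filter like this only catches secrets that match its pattern, so it would miss, for instance, a token buried in a logged dictionary. It complements review-time detection rather than replacing it.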

How AI Code Review Helps Detect Logging Risks

AI code review systems can analyze code patterns that traditional tools struggle to interpret. For example, an AI system can reason about:

whether a variable likely contains sensitive information

whether a request object includes authentication data

whether logging a particular value could expose user data

This type of analysis requires understanding both code structure and application context. AI systems trained on large codebases can often identify these patterns more effectively than rule-based analyzers.

Why CodeAnt Performed Strongly in This Category

In the logging benchmark category, CodeAnt ranked ahead of all other evaluated tools. While the benchmark does not reveal implementation details, the results suggest strong capabilities in areas such as:

Contextual data flow analysis

Tracing how sensitive values propagate through application logic.

Security-aware pattern recognition

Identifying variables likely to contain credentials or personal data.

Intent-aware code analysis

Recognizing when logging statements expose sensitive information unintentionally.

These capabilities allow the system to surface issues that might otherwise pass unnoticed during code review.

Logging Detection Is Part of a Broader Benchmark

The Martian Code Review Bench evaluates several categories of automated code review performance.

These include:

security patch detection

testing issue detection

logging and PII detection

large pull request review

Each category highlights different strengths of AI code review systems.

For the full benchmark overview, see:

→ AI Code Review Benchmark Overview

Additional analyses:

→ Security Patch Detection Benchmark

→ Testing Issue Detection Benchmark

→ Logging and PII Leak Detection Benchmark

→ Large Pull Request Review Benchmark

Together these categories provide a more complete picture of how automated review systems perform in real engineering workflows.

What This Benchmark Reveals About Secure Development

Secure software development increasingly depends on catching mistakes early.

Logging issues demonstrate how easily sensitive data can be exposed by seemingly harmless code changes.

Benchmarks like Martian’s show that modern AI code review systems are beginning to detect these risks more reliably. As organizations continue adopting AI-assisted development tools, capabilities like logging risk detection will likely become a critical component of automated code review pipelines.

Sensitive Data Leaks Often Start With a Single Log Statement

Logging is essential for debugging and monitoring, but it can also become a hidden source of security vulnerabilities. A single log statement exposing a token, password, or personal identifier can spread sensitive data across monitoring systems, log storage platforms, and analytics pipelines.

Detecting these risks during pull request review is far easier than removing leaked data after deployment. In Martian’s independent AI code review benchmark evaluating 17 tools across real pull requests, CodeAnt AI ranked #1 in detecting logging issues and potential PII leaks.

The result highlights how modern AI code review systems are beginning to address security risks that traditional tools often overlook. If you want to see how CodeAnt analyzes logging behavior and sensitive data exposure in your own repositories, you can install it and start reviewing pull requests within minutes.

FAQs

What are logging security issues in software development?

What is PII leakage in logs?

Why are logging issues difficult for automated tools to detect?

How did CodeAnt rank #1 in the logging benchmark?

How can teams prevent sensitive data leaks in logs?