When a penetration testing firm sends you an intake form, one of the first questions is always some variation of: "What level of access are you willing to provide?"

Most teams answer based on gut feel. They pick "black box" because it sounds like the hardest test: the attacker gets nothing, so surely if they fail to break in, you're secure. Or they pick "white box" because it sounds most thorough: give them everything, find everything. Or they pick "gray box" because it sounds balanced.

All three instincts are wrong, for different reasons. The correct answer isn't about which test sounds most rigorous. It's about which threat model you're most exposed to, which questions you need answered, and what attack surface each methodology can actually reach.

This guide goes deep on all three. Not surface-level definitions, but the actual methodology inside each test type, the vulnerabilities each one finds and misses, code-level examples of what gets caught and why, and a decision framework for choosing correctly given your specific situation.

By the end of this, you'll know exactly what your pentest vendor is actually doing (or not doing) when they check one of those three boxes.

Related: What Is AI Penetration Testing? The Complete Deep-Dive Guide (covers how AI reasoning engines power all three test types)

First: Why the Three Categories Exist

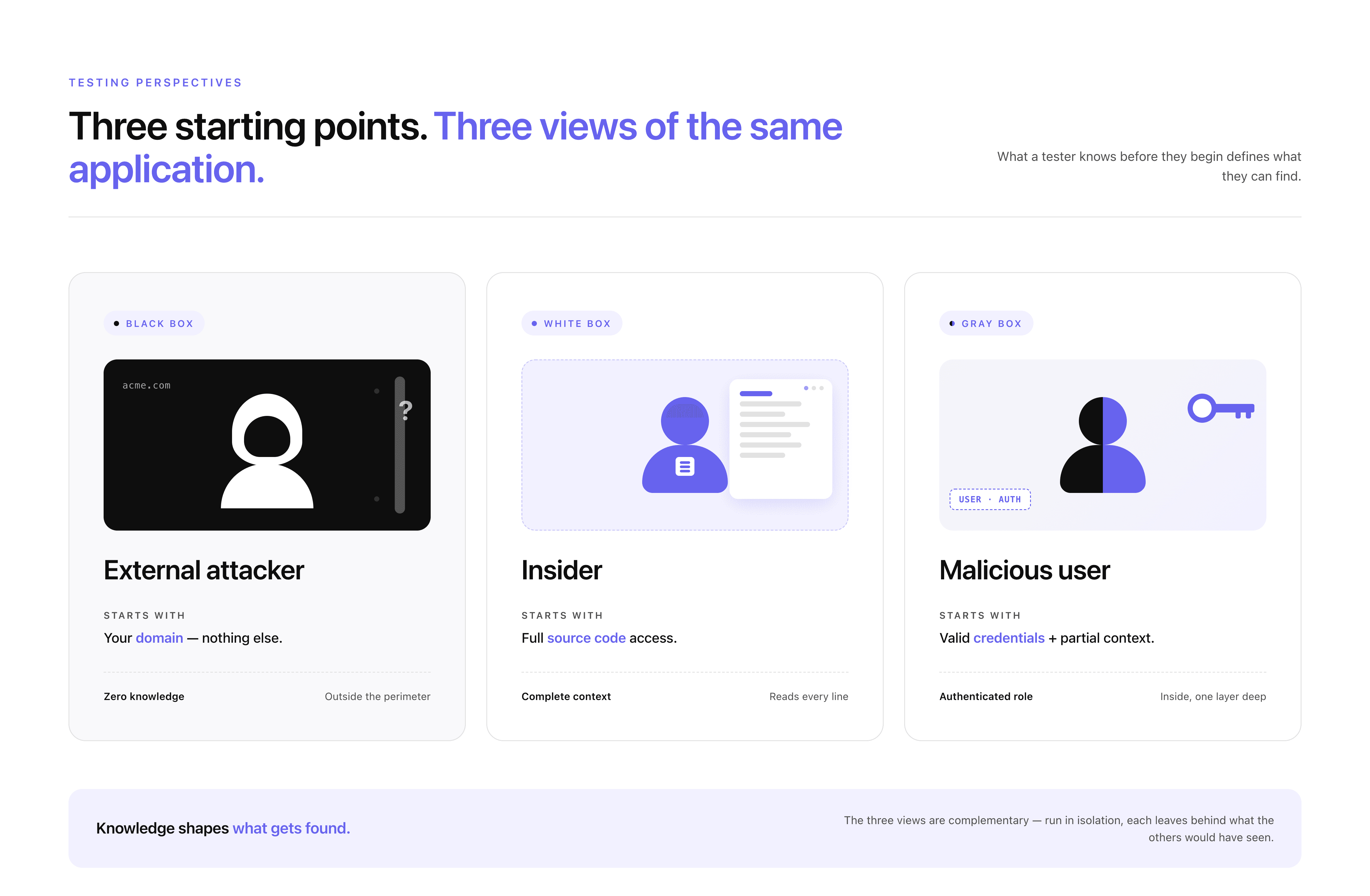

The categories aren't arbitrary. They map to three distinct threat models, three fundamentally different ways a real-world attacker might approach your system.

Black box maps to the external threat actor: someone who found your domain, has no inside knowledge, no credentials, no source code, and is trying to extract value from the outside in. This is the opportunistic attacker, the automated scanner, the financially motivated hacker working from a list of target companies.

White box maps to the insider threat or the attacker who has obtained your source code: a disgruntled employee, a contractor with repository access, a developer who was phished and had their GitHub credentials stolen, a CI/CD pipeline that accidentally published your private repository. If someone motivated has your code, what's the worst they can do with it?

Gray box maps to the legitimate user gone malicious: a customer who decides to systematically probe what they can access beyond their own account, a low-privilege employee trying to access data outside their role, a business account that has been compromised and is now being used as a pivot point for further access.

Each threat model is real. Each one has produced significant data breaches. The question of which test you run is really a question of which threat you're most worried about and which question you need answered most urgently.


How Penetration Testing Types Map to Defensive and Offensive Security

The three penetration testing types are not just technical approaches. They map directly to how modern security is structured across defensive and offensive systems.

White box testing operates closest to defensive security. It works at the code level, analyzing authentication logic, data flows, and configurations before or alongside deployment. It answers the question: what vulnerabilities exist in the system as it is built.

Black box testing represents offensive security. It approaches the system from the outside, with no internal knowledge, simulating how an external attacker discovers and exploits exposed assets. It answers the question: what can be reached and exploited from the outside.

Gray box testing sits between the two. It uses authenticated access to simulate a real user or compromised account, focusing on access control, business logic, and privilege boundaries. It answers the question: what can someone inside the system do beyond their intended permissions.

Each of these maps to a different layer of risk. When used in isolation, each one leaves blind spots that the others would have covered.

Real security is not about choosing between them. It is about combining them into a unified system where code-level understanding informs external testing, and exploit findings feed back into how the code is reviewed.

👉 For a deeper breakdown of how these layers work together in practice, see: Defensive vs Offensive Security

Black Box Penetration Testing: Every Technique, Explained

What Black Box Actually Means in Practice

"Black box" means the tester knows nothing about the internals of the system they're testing. It is a black box, opaque, sealed, unknowable except through what it reveals from the outside. The tester's starting point is identical to an external attacker's: your domain name, accessible from the public internet.

From that single starting point, a thorough black box engagement works through a defined sequence: map the external surface, fingerprint every service, analyze client-side code, probe authentication, test for known vulnerability classes, and chain every finding together into the highest-impact exploit path possible.

Let's walk through each phase in detail.

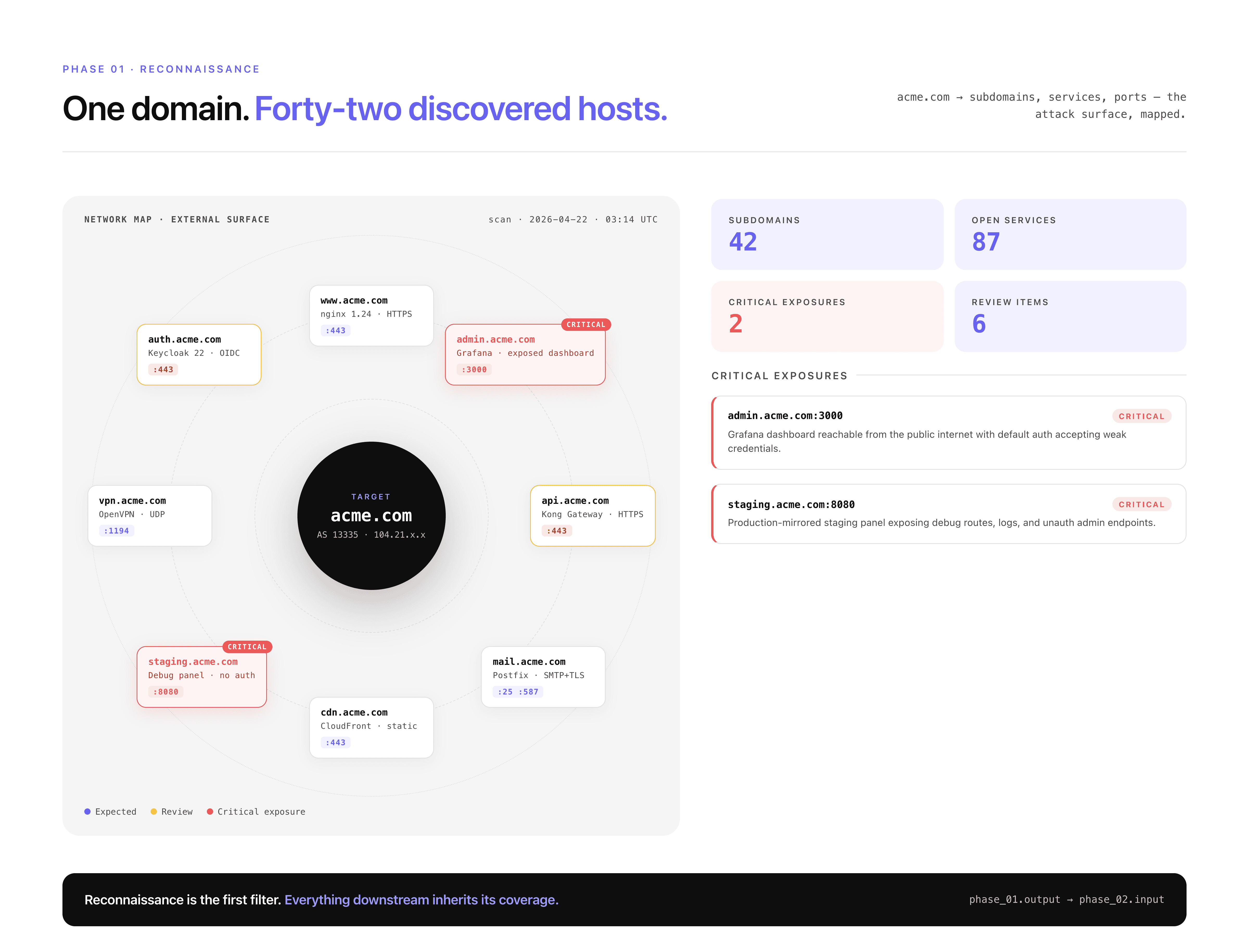

Phase 1: Reconnaissance and External Surface Mapping

Before a single vulnerability is tested, the tester builds the most complete picture possible of everything externally visible. This reconnaissance phase is where many traditional pentesters go shallow, and where AI-powered approaches go significantly deeper.

Subdomain enumeration is the process of discovering every domain and subdomain that your organization operates. This matters because:

Development and staging environments almost always have weaker security than production

Legacy subdomains for old products or features may still be running on outdated infrastructure

Acquired company domains may be connected to your infrastructure but maintained separately with less scrutiny

Internal tools accidentally exposed to the internet (Grafana, Jenkins, Kibana, internal wikis) often live on subdomains

The methodology uses brute-force DNS resolution against a wordlist of common prefixes, not just the obvious ones.

Each prefix is queried against the target domain. Responses that resolve to an IP address are added to scope.
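The brute-force step can be sketched in a few lines of Python. This is a minimal illustration, not the tooling any particular vendor uses: the wordlist is a hypothetical sample, and `enumerate_subdomains` and `resolve` are names made up for this example. The resolver is injectable so the logic can be exercised without touching real DNS.

```python
import socket
from typing import Callable, Iterable, Optional


def resolve(hostname: str) -> Optional[str]:
    """Return the IPv4 address for hostname, or None if it does not resolve."""
    try:
        return socket.gethostbyname(hostname)
    except OSError:  # socket.gaierror is a subclass of OSError
        return None


def enumerate_subdomains(
    domain: str,
    prefixes: Iterable[str],
    resolver: Callable[[str], Optional[str]] = resolve,
) -> dict[str, str]:
    """Query each prefix against the domain; keep only names that resolve."""
    in_scope = {}
    for prefix in prefixes:
        hostname = f"{prefix}.{domain}"
        address = resolver(hostname)
        if address is not None:
            in_scope[hostname] = address
    return in_scope


# Hypothetical sample wordlist; real engagements use far larger lists.
WORDLIST = ["www", "api", "staging", "dev", "grafana", "jenkins", "kibana"]
```

Calling `enumerate_subdomains("example.com", WORDLIST)` would perform live lookups, so it should only be pointed at domains that are in scope for the engagement.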

Certificate Transparency (CT) log queries add a second layer. Every TLS certificate issued for any domain is publicly logged in CT logs. Querying these logs reveals subdomains that DNS brute-forcing might miss, including historical subdomains that had certificates issued years ago and may still be running a server.

CNAME resolution identifies underlying infrastructure. A subdomain that CNAMEs to company.azurewebsites.net tells you it's hosted on Azure App Service. One pointing to company.s3.amazonaws.com tells you it's an S3-hosted site. Infrastructure identification informs the attack surface: Azure App Service deployments have different vulnerability profiles than Kubernetes-hosted services.

Port scanning runs across all discovered hosts. Full port scan, not just 80 and 443. This finds services that have no business being internet-accessible:

| Port | Service | Why It's a Finding When Exposed |
|---|---|---|
| 6379 | Redis | Usually no authentication by default, full read/write access |
| 9200 | Elasticsearch | Often no auth, complete index access |
| 27017 | MongoDB | Pre-4.0 installs often had no auth by default |
| 5432 | PostgreSQL | Direct database access from internet |
| 8080 / 8443 | Internal APIs | Admin interfaces, management APIs not meant to be public |
| 9090 | Prometheus | Exposes all application metrics including error rates, DB queries |
| 3000 | Grafana | Dashboard access, often with default credentials |
| 8888 | Jupyter Notebook | Code execution environment |
| 9229 | Node.js Inspector | Remote debugger, allows arbitrary code execution |
| 4040 | ngrok / Localtunnel | Exposed development tunnels |

Finding an Elasticsearch instance on port 9200 with no authentication on a subdomain is a critical finding. The entire search index, which may contain user data, internal documents, application logs with sensitive information, is readable by anyone who hits that port.

Phase 2: JavaScript Bundle Analysis: The Most Underused Technique

Modern web applications are largely built as Single Page Applications (SPAs). React, Vue, and Angular all compile your application code into JavaScript bundles that are served to every visitor's browser. And those bundles contain more sensitive information than most engineering teams realize.

Every bundle is downloaded and statically analyzed. Typical bundle sizes range from 5–20 MB of minified, compiled JavaScript. Inside:

API endpoint extraction: The compiled JavaScript contains every API call the frontend makes. Endpoints that don't appear in documentation, aren't in public API specs, and aren't tested in standard black box approaches are all in the JavaScript.
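As a rough sketch of what endpoint extraction looks like, the snippet below pulls quoted path literals out of bundle source. The `/api/` prefix and the `extract_endpoints` name are assumptions for illustration; a real bundle may use other prefixes, template strings, or concatenated paths that a single regex will miss.

```python
import re

# Matches quoted string literals that look like API paths, e.g. "/api/v2/users".
# The "/api/" prefix is an assumption; adjust it to the target's routing scheme.
ENDPOINT_PATTERN = re.compile(r"""["'`](/api/[A-Za-z0-9_\-./{}:$]*)["'`]""")


def extract_endpoints(bundle_source: str) -> list[str]:
    """Return the unique API paths referenced in a JavaScript bundle, sorted."""
    return sorted(set(ENDPOINT_PATTERN.findall(bundle_source)))
```

The output of a pass like this becomes the input list for the authentication tests described later.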

Hardcoded secret detection runs across 30+ pattern types.

Every finding is verified before being reported. An AWS access key found in a bundle is tested against the AWS API to determine what it actually grants access to (S3 read, EC2 describe, IAM permissions) before being assigned a severity rating.
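Pattern-based secret detection can be illustrated with a small subset of patterns. The three below are widely documented key formats; the dictionary keys and the `find_secrets` name are labels chosen for this sketch, and a production scanner would carry many more patterns plus the verification step described above.

```python
import re

# A small illustrative subset; the pattern names are labels chosen for this sketch.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "stripe_live_secret_key": re.compile(r"\bsk_live_[0-9a-zA-Z]{24,}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def find_secrets(text: str) -> list[tuple[str, str]]:
    """Return (pattern_name, matched_text) pairs for every candidate secret."""
    hits = []
    for name, pattern in SECRET_PATTERNS.items():
        for match in pattern.finditer(text):
            hits.append((name, match.group(0)))
    return hits
```

A match is only a candidate. Whether it is a finding depends on whether the credential is live and what it grants.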

Staging vs. production bundle comparison surfaces a specific category of finding that's unique to JavaScript analysis: endpoints that were removed from the production frontend but are still reachable on non-production URLs. A feature removed from app.company.com but still accessible on staging.company.com, using the same production database backend.

Phase 3: API Authentication Testing

Every endpoint discovered from the JavaScript bundle, from Swagger/OpenAPI exposure, from GraphQL introspection, from direct observation, is tested for authentication enforcement.

The initial test is simple: hit every endpoint without any credentials and classify the response.
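One way to express that classification step is sketched below. The category labels and function names are assumptions made for this example, not a standard taxonomy. The only rule taken from the text is that anything other than a clean 401 goes on to bypass testing.

```python
def classify_unauthenticated_response(status_code: int, body_length: int) -> str:
    """Label what an endpoint did when called with no credentials."""
    if status_code == 401:
        return "auth-enforced"
    if status_code == 403:
        return "forbidden"  # blocked, but worth probing with bypass patterns
    if 200 <= status_code < 300:
        # A success status with a body means data came back without credentials.
        return "unauthenticated-access" if body_length > 0 else "empty-success"
    if 300 <= status_code < 400:
        return "redirect"  # often a login redirect; follow it before concluding
    if status_code >= 500:
        return "server-error"  # unhandled errors can hint at missing auth checks
    return "other"


def needs_bypass_testing(label: str) -> bool:
    """Everything except a clean 401 moves on to bypass testing."""
    return label != "auth-enforced"
```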

For every endpoint that doesn't return a clean 401, authentication bypass patterns are tested systematically:

JWT algorithm confusion attack: JWT tokens have a header that specifies the signing algorithm. Some implementations accept the none algorithm, which means "no signature required." Forging a token with alg: none and any payload you want bypasses signature validation entirely.
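To make the mechanics concrete, here is a minimal sketch of how an unsigned token is assembled using only the standard library. The function names and the example claims are hypothetical. The token structure (base64url header, base64url payload, empty signature segment) follows the JWT format.

```python
import base64
import json


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, as JWTs require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def forge_alg_none_token(payload: dict) -> str:
    """Build an unsigned JWT: header says alg=none, signature segment is empty."""
    header = {"alg": "none", "typ": "JWT"}
    segments = [
        b64url(json.dumps(header, separators=(",", ":")).encode()),
        b64url(json.dumps(payload, separators=(",", ":")).encode()),
        "",  # empty signature segment
    ]
    return ".".join(segments)
```

A server that validates tokens correctly rejects this outright. The finding exists only when the API accepts the token and honors the forged claims.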

CORS misconfiguration testing: Cross-Origin Resource Sharing misconfigurations allow attacker-controlled websites to make authenticated requests to your API and read the responses. The test sends requests with 7+ different Origin header values.
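The sketch below shows the kind of Origin values such a test might send and how a response could be judged. The specific list, the attacker.example domain, and both function names are assumptions for illustration, not the exact set any tool uses.

```python
def candidate_origins(target_domain: str, attacker_domain: str = "attacker.example") -> list[str]:
    """Build Origin values that commonly slip past weak allowlist checks."""
    return [
        f"https://{attacker_domain}",                  # arbitrary untrusted origin
        "null",                                        # sandboxed iframes, local files
        f"https://{target_domain}.{attacker_domain}",  # defeats prefix matching
        f"https://evil{target_domain}",                # defeats suffix matching
        f"http://{target_domain}",                     # scheme downgrade
        f"https://anything.{target_domain}",           # wildcard subdomain trust
    ]


def is_exploitable(sent_origin: str, response_headers: dict[str, str]) -> bool:
    """True when the API echoes the sent Origin and also allows credentials."""
    headers = {k.lower(): v for k, v in response_headers.items()}
    allowed = headers.get("access-control-allow-origin", "")
    credentials = headers.get("access-control-allow-credentials", "").lower() == "true"
    return allowed == sent_origin and credentials
```

An echoed origin without credentials, or a bare `*`, is a weaker condition, since browsers do not send cookies on such requests. Whether an echoed subdomain origin is a problem depends on whether that subdomain is actually trusted.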

API documentation exposure: Many applications expose their API documentation publicly, often unintentionally, through Swagger/OpenAPI pages, GraphQL introspection, or framework management endpoints.

A publicly accessible /actuator/heapdump is a critical finding. The heap dump contains the complete in-memory state of the running application, including database credentials, API keys, and active session tokens stored as string objects in memory.

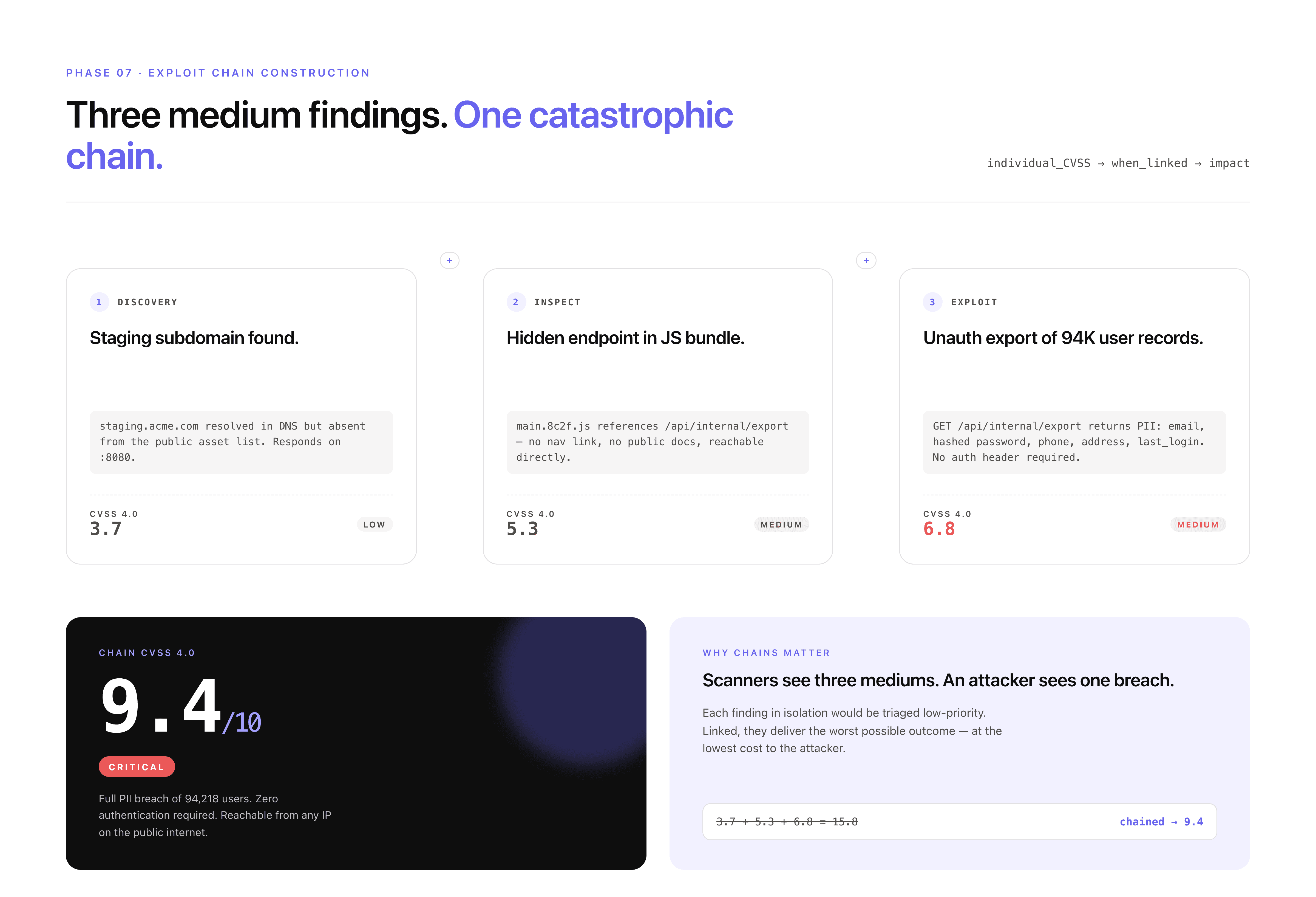

Phase 4: Exploit Chain Construction

This is the phase that separates real penetration testing from running a checklist. Every confirmed finding is evaluated against every other finding. The question is not "what does this finding mean in isolation?" but "what does this finding enable when combined with what I've found elsewhere?"

A real chain example:

None of the individual steps above is a CVSS 9+ finding. The subdomain is a medium. The JS endpoint reference is informational. The unauthenticated endpoint is high. The chain is critical.

What Black Box Definitively Cannot Find

Being precise about limitations is as important as being precise about capabilities. Black box testing cannot find:

Invisible auth bypasses: A Spring Security configuration that excludes /api/v2/** from all security filters produces normal HTTP responses. There's no anomalous response for external testing to detect. The bypass is in the code, invisible from the outside.

Git history secrets: A password committed and deleted three months ago is still in version control. Black box has no access to version control history.

Internal service vulnerabilities: Microservices that communicate over an internal network are completely invisible to external testing.

Business logic flaws requiring authentication: You can't test the checkout flow's payment manipulation if you can't authenticate to reach the checkout flow.

Reachability analysis: A vulnerable dependency flagged by SCA tooling might be a critical finding or might be dead code that's never called. Black box can't determine which without the source.

White Box Penetration Testing: The Source Code Audit

What White Box Actually Means in Practice

White box testing gives the tester complete visibility into the system's internals: source code, configuration files, infrastructure definitions, and version control history. Everything is visible. The question shifts from "what can I see from the outside?" to "what can I find when I can read everything?"

The threat model: someone who has obtained your repository. This happens more often than most teams assume, through leaked CI/CD logs containing GitHub tokens, compromised developer laptops, misconfigured public repositories, or insider access. The white box test answers the question: if this happened, how bad is it?

White box testing is also the only methodology that can find the most dangerous category of vulnerability: authentication bypasses that produce no external signal whatsoever.

Authentication Configuration Analysis: Where the Real Bypasses Live

The first thing a white box engagement reads is every authentication and authorization configuration in the codebase. Not skimmed, read end to end, with the goal of finding every location where the expected security enforcement might break.

Spring Security: the most commonly misconfigured Java auth framework:

Spring Security uses filter chains to enforce authentication. The configuration defines which URL patterns require authentication, which roles are required for specific paths, and crucially, which URLs are excluded from security enforcement entirely.

The webSecurityCustomizer().ignoring() call completely excludes matched paths from the Spring Security filter chain. This means the .hasRole("ADMIN") check on /api/admin/** does not apply to /api/v2/admin/**. The endpoint responds with real data to any unauthenticated request.

From the outside, this endpoint looks like it's working normally: 200 OK with data. There's no authentication error to detect. The vulnerability is entirely in the configuration and is only findable through a code read.

Express.js: middleware ordering vulnerabilities:

In Express, middleware is applied in the order it's registered. Authentication middleware added after route handlers doesn't protect those routes, ever.

This is an extremely common pattern in Express applications, especially those that grew organically. New routes get added by developers who assume the auth middleware is applied globally. They don't realize it was added to the router after the admin routes.

Django: missing decorators on class-based views:

The class-based view without the decorator is publicly accessible. The function-based view above it is protected. The difference is a single decorator that's easy to omit when a developer converts a view from one style to another.

Secrets and Credential Scanning: In Code, In Config, In History

White box secret scanning goes three layers deep: current code and configuration, version control history, and dependency reachability.

Layer 1: Current codebase and configuration

Every file type that commonly contains credentials is scanned:

A particularly common finding in Kubernetes configurations:

Layer 2: Git history

This is the layer most teams don't think about and most pentest firms don't check. Every commit that ever touched the repository is part of the history. Deleted files, replaced secrets, removed configuration: all of it is recoverable.

A deleted secret in Git history is still an active credential if it was never rotated after deletion. The finding isn't just "here's a historical secret," it's "this production Stripe API key was committed, deleted, but never rotated. It still works. Here is the API call that confirms it."
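A history scan can be approximated by walking the patch output of `git log -p --all` and matching secret patterns on added and removed lines. The sketch below assumes the log text has already been captured as a string and uses a single illustrative pattern. `secrets_in_history` is a name invented for this example.

```python
import re

SECRET = re.compile(r"\bAKIA[0-9A-Z]{16}\b")  # one illustrative pattern


def secrets_in_history(git_log_patch: str) -> list[tuple[str, str, str]]:
    """Scan `git log -p` output; return (commit, change, secret) for each hit.

    change is "added" or "removed"; a removed secret is still in history.
    """
    findings = []
    commit = ""
    for line in git_log_patch.splitlines():
        if line.startswith("commit "):
            commit = line.split()[1]
        elif line.startswith(("+++", "---")):
            continue  # file headers, not content
        elif line.startswith(("+", "-")):
            change = "added" if line[0] == "+" else "removed"
            for match in SECRET.finditer(line):
                findings.append((commit, change, match.group(0)))
    return findings
```

A "removed" hit is the interesting case: it marks a credential someone tried to clean up, which is exactly the one to test for rotation.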

Layer 3: Dependency reachability

Software Composition Analysis (SCA) tools flag every dependency with a known CVE. The problem: not every vulnerable dependency is actually exploitable in your application. A vulnerable image processing library is critical if your application processes user-uploaded images and passes them to that library. It's not a finding if the library is installed as an indirect dependency of a testing framework and is never called in production code paths.

White box reachability analysis traces whether the vulnerable function in the dependency is called, directly or transitively, from production code. This reduces SCA noise dramatically and produces a prioritized list of dependencies that actually present risk.

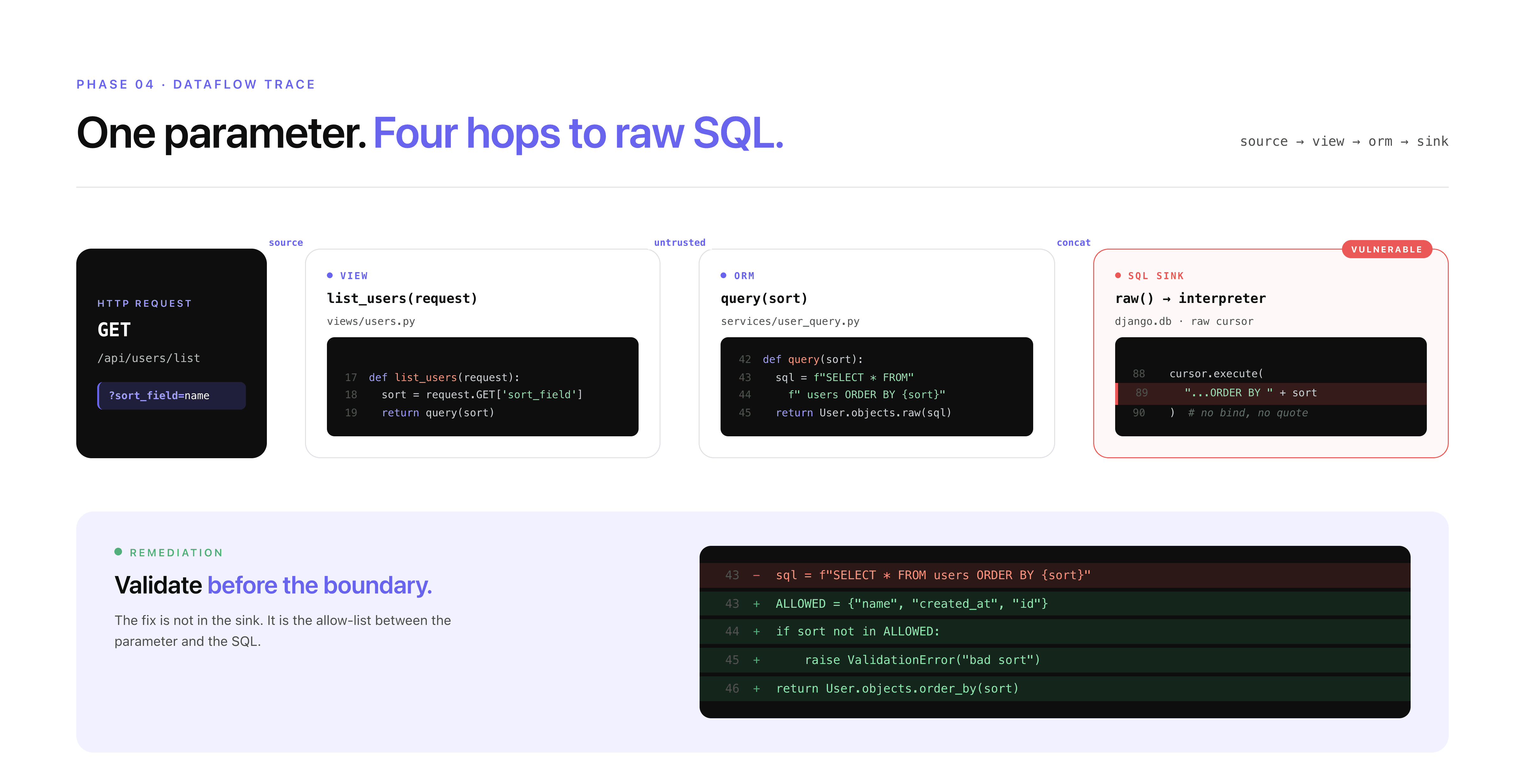

Dataflow Tracing: Following Input From Entry to Impact

The most technically sophisticated part of white box testing is dataflow tracing: following user-controlled input from where it enters the application to every place it's used.

The finding maps the exact path of the input:

Infrastructure Code Review

Modern applications define their infrastructure as code: Terraform, Kubernetes manifests, Dockerfiles, Helm charts. All of it is in the repository and all of it is in scope for a white box engagement.

Common infrastructure findings:

What White Box Definitively Cannot Find

White box is the deepest methodology, but it has its own blind spots:

Runtime-only vulnerabilities: Code that looks safe statically but behaves differently under specific runtime conditions. Race conditions in concurrent request handling. Vulnerabilities that only manifest with specific database states or specific sequences of API calls.

Configuration drift: The deployed configuration may differ from what's in the repository. Environment variables overriding config files, Kubernetes secrets overlaying hardcoded values, infrastructure configuration managed outside version control.

Third-party service vulnerabilities: APIs your application calls. SaaS integrations. CDN configurations. These are outside the repository and invisible to static analysis.

Gray Box Penetration Testing: The Insider Threat Simulation

What Gray Box Actually Means in Practice

Gray box testing gives the tester authenticated access (real credentials for one or more user roles) and sometimes additional context: architecture documentation, API specs, information about what the application is designed to do. The inside is partially visible.

The threat model: a legitimate user who has decided to systematically probe the limits of their access. This is your most dangerous and most common threat in SaaS applications. A customer who changes user IDs. An employee who tries to access files outside their department. A compromised account being used as a pivot.

Gray box testing answers the question: what can someone who already has valid credentials actually do beyond what they're supposed to?

Access Control Testing: The Server Must Enforce What the UI Hides

The foundational principle of access control testing: anything enforced only in the UI is not enforced at all.

If the admin panel is hidden from non-admin users in the interface but the underlying API endpoints have no server-side role check, any user who knows the endpoint path has admin access. This is the most consistently found vulnerability category across gray box engagements.

IDOR: The Vulnerability That Exposes the Most Data

Insecure Direct Object References (IDOR) occur when an application uses a user-supplied identifier to retrieve a record without verifying that the requesting user is authorized to access that specific record.

It sounds simple. The impact is consistently massive.

The cross-tenant IDOR is particularly devastating in B2B SaaS applications. Every customer's data is in the same database. If the API doesn't filter records by the authenticated user's tenant at the query level, if it relies on the client sending the correct tenant ID, then any authenticated user can access any other customer's complete data set.

Tenant isolation needs to be verified at the data layer, not just the API layer. The test confirms that the SQL query itself contains a WHERE tenant_id = [authenticated_user_tenant] clause, not just that the API returns a 403 for an obvious cross-tenant request.
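The difference between an IDOR-prone lookup and a tenant-scoped one is easiest to see side by side. The following is a self-contained sketch using an in-memory SQLite database. The invoices table, the tenant names, and the function names are all hypothetical.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create a shared table holding records for two tenants."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE invoices (id INTEGER PRIMARY KEY, tenant_id TEXT, amount INTEGER)")
    db.executemany(
        "INSERT INTO invoices VALUES (?, ?, ?)",
        [(1, "tenant-a", 100), (2, "tenant-b", 250)],
    )
    return db


def get_invoice_vulnerable(db: sqlite3.Connection, invoice_id: int):
    """IDOR: trusts the user-supplied id and never checks ownership."""
    return db.execute(
        "SELECT id, tenant_id, amount FROM invoices WHERE id = ?",
        (invoice_id,),
    ).fetchone()


def get_invoice_scoped(db: sqlite3.Connection, invoice_id: int, session_tenant_id: str):
    """Tenant comes from the authenticated session and is enforced in the query."""
    return db.execute(
        "SELECT id, tenant_id, amount FROM invoices WHERE id = ? AND tenant_id = ?",
        (invoice_id, session_tenant_id),
    ).fetchone()
```

With the scoped version, a tenant-a session asking for invoice 2 gets no row back at all, regardless of what the API layer does with the result.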

JWT Manipulation: Privilege Escalation in Tokens

JWTs are the authentication mechanism of choice for modern APIs. They're also a consistently productive testing surface.

Business Logic Testing: What No Scanner Can Touch

Business logic vulnerabilities require understanding what the application is intended to do and then systematically finding where it doesn't enforce that intent.

Test Category | What's Being Tested | Example Finding |

|---|---|---|

Workflow bypass | Can step N be called without completing steps 1 through N-1? | POST /checkout/confirm succeeds without POST /checkout/payment |

Price manipulation | Can price fields be modified before server-side calculation? | Changing total_price in request body before order confirmation |

Discount abuse | Can single-use codes be reused? Stacked beyond policy? | Replay of discount validation request with same code |

Quantity manipulation | Can negative quantities reduce total? | quantity: -1 in cart reduces total below zero → negative charge |

Rate limit evasion | Do rate limits apply consistently across all parameters? | Rotating X-Forwarded-For header bypasses IP-based rate limit |

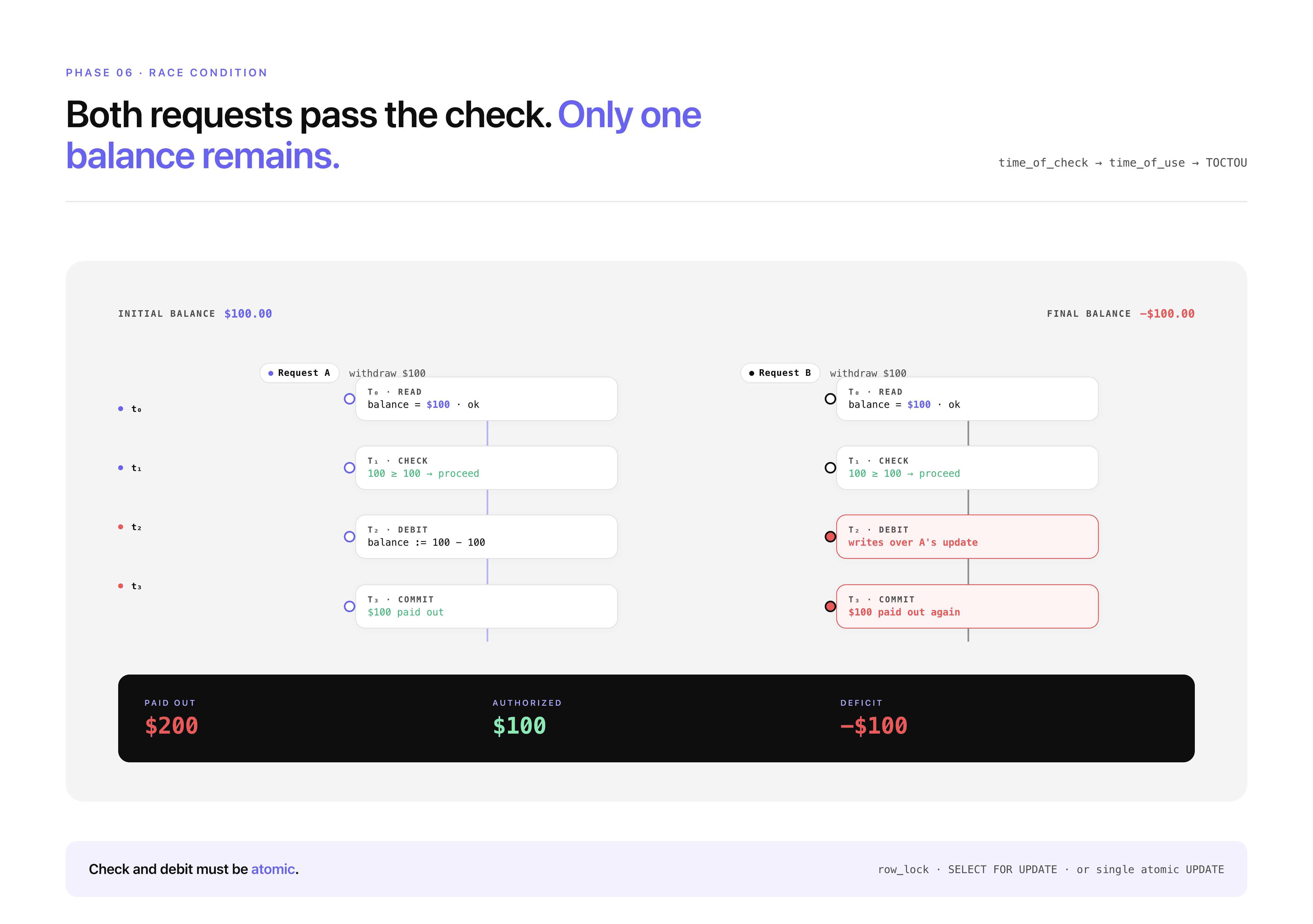

Concurrent request race | Do simultaneous requests exploit time-of-check-time-of-use gaps? | Two simultaneous withdraw requests both pass balance check |

Subscription abuse | Can paid features be accessed via direct API call as free user? | GET /api/premium/export works for free-tier users |

Privilege persistence | Does role downgrade immediately invalidate tokens with old role? | Admin→User demotion doesn't invalidate admin-capability token |

The concurrent request race condition deserves specific attention. It's a category that's consistently underestimated.

A successful race condition on a withdrawal endpoint is a direct financial loss vulnerability. It's not theoretical; it's reproducible with a script and a real account.
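The time-of-check-time-of-use gap can be reproduced locally with threads. The sketch below models an account in memory rather than a real endpoint. The `Account` class, the 50 ms sleep standing in for a slow database round trip, and the `race` helper are all assumptions for illustration.

```python
import threading
import time


class Account:
    def __init__(self, balance: int) -> None:
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw_unsafe(self, amount: int) -> bool:
        """Check-then-act with a gap: concurrent calls can both pass the check."""
        if self.balance >= amount:
            time.sleep(0.05)  # stands in for a slow downstream call
            self.balance -= amount
            return True
        return False

    def withdraw_safe(self, amount: int) -> bool:
        """Hold a lock so the check and the debit happen as one step."""
        with self._lock:
            if self.balance >= amount:
                time.sleep(0.05)
                self.balance -= amount
                return True
            return False


def race(withdraw, amount: int, workers: int = 2) -> list[bool]:
    """Fire `workers` simultaneous withdrawals and collect their results."""
    results: list[bool] = []
    barrier = threading.Barrier(workers)

    def attempt() -> None:
        barrier.wait()  # release all threads at the same moment
        results.append(withdraw(amount))

    threads = [threading.Thread(target=attempt) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

Running `race` against `withdraw_unsafe` on a balance of 100 will typically let both withdrawals of 100 succeed and leave the balance negative, while `withdraw_safe` allows only one. In a real service the equivalent of the lock is usually a database-level row lock or an atomic conditional update.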

Black Box vs White Box vs Gray Box Comparison

| Type | Access Level | Strength | Limitation |
|---|---|---|---|
| Black box | No access, external only | Identifies exposed assets, infrastructure risks, and external attack paths | Cannot detect internal vulnerabilities, code flaws, or hidden logic issues |
| White box | Full access to code and config | Finds deep vulnerabilities, authentication flaws, and root causes at code level | Cannot simulate real-world external attack conditions or runtime behavior |
| Gray box | Authenticated user access | Detects IDOR, privilege escalation, and business logic vulnerabilities | Limited visibility into infrastructure and full code-level context |

Each type answers a different security question. Black box asks what is exposed, white box asks what is broken internally, and gray box asks what can be abused once access is gained.

Running only one answers only part of the problem.

Choosing the Right Test: The Decision Framework

Now that you understand what each methodology actually does, here is the decision framework:

| Scenario | Recommended Test | Core Reason |
|---|---|---|
| First pentest, no baseline | Full Assessment (all three) | Don't guess at your threat model; map the full surface first |
| Pre-launch with customer data | Gray Box + White Box | Business logic and auth config issues are highest priority |
| SOC 2 Type II audit | Full Assessment | Auditors want external, code, and authenticated coverage |
| Post-acquisition security review | Full Assessment | Unknown codebase history, cover all angles |
| Regression after major feature release | White Box | Fastest check for new code introducing auth or injection issues |
| Continuous ongoing validation | Continuous (monthly) | Attack surface changes constantly, testing should match |
| "Clean last pentest, want deeper" | White Box | Most prior tests are black box, code level is likely untouched |
| Compliance-only, limited budget | Black Box | Most compliance frameworks are satisfied by external surface coverage |
| SaaS B2B with multi-tenant data | Gray Box priority | IDOR and tenant isolation are the highest-impact category |

The optimal security posture runs all three, which is exactly what a Full Assessment delivers: black box external surface coverage, white box source code depth, gray box insider threat simulation, unified into a single report delivered in 48–96 hours.

What to Demand From the Report, Per Test Type

The test type determines what the report should contain. Here's the minimum acceptable bar:

| Report Element | Black Box | White Box | Gray Box |
|---|---|---|---|
| Working proof-of-exploit (curl / script) | Required for every finding | Required for every finding | Required for every finding |
| Root cause to file + line number | Not possible (no code access) | Required | Required if code provided |
| Remediation diff | Not possible | Required | Required if code provided |
| Exploit chain documentation | Required | Required | Required |
| CVSS 4.0 per finding | Required | Required | Required |
| JS bundle analysis results | Required | N/A | N/A |
| Auth config findings | N/A | Required | N/A |
| Git history scan results | N/A | Required | N/A |
| IDOR test results by identifier type | N/A | N/A | Required |
| Business logic test results | N/A | N/A | Required |
| Compliance mapping (SOC 2 / PCI / HIPAA) | Required | Required | Required |
| Retest verification | Included | Included | Included |

If a black box report doesn't include JavaScript bundle analysis results, the tester didn't do it. If a white box report doesn't include a Git history scan, same conclusion. If a gray box report doesn't document every identifier type tested for IDOR, the IDOR testing wasn't systematic.

These aren't nice-to-haves. They're the minimum evidence that the engagement covered what it claimed to cover.

Conclusion

Black box, white box, and gray box are not levels of rigor. They are different lenses, each revealing a different category of vulnerability, each simulating a different threat model.

Black box tells you what a complete outsider can do.

White box tells you what happens if your code is obtained.

Gray box tells you what a legitimate user can do if they decide to go malicious.

All three are real threats. The most dangerous breaches often start with black box reconnaissance, pivot through a credential leak (white box territory), and escalate through an IDOR or privilege escalation (gray box territory). The chain crosses all three.

Running all three in a single engagement, a Full Assessment, is how you get the complete picture. At CodeAnt AI, that's a 48–96 hour engagement with a unified report, working proof-of-exploit for every finding, root cause to file and line for everything the code access allows, and a retest included.

If no CVSS 9+ critical vulnerability or active data leak is found, you pay nothing.

→ Book a 30-minute scoping call. Testing starts within 24 hours.

FAQs

What is the difference between black box, white box, and gray box penetration testing?

Which type of penetration testing is best for SaaS applications?

Can I run black box and white box simultaneously, or do they need to be sequential?

Do you need all three types of penetration testing?

If we do gray box, does the tester need production credentials or test credentials?