Here is the situation most engineering leaders are in right now. Your last penetration test was six months ago. Since then your team has shipped 47 deployments. Three new API endpoints are in production. Your authentication flow was refactored in February. A third-party SDK processing payment metadata was added in March.

Your penetration test report says your systems are secure. It is describing a system that no longer exists in its tested form.

This is not a failure of the penetration test that was run. It is a structural failure of the model that runs one test per year against an application that changes every week. The deployment velocity gap, the time between when a vulnerability enters production and when security testing detects it, stretches to an average of 180 days in an annual testing program. For a SaaS team shipping code weekly, 180 days is not an acceptable detection window.

PTaaS, penetration testing as a service, was built to close that gap. Not as a better version of the annual test. As a fundamentally different operating model where testing cadence matches deployment cadence, findings land in real time, and the evidence trail is continuous across the full compliance observation period rather than a single point-in-time snapshot.

This guide covers exactly:

what PTaaS is at a technical level

how it relates to agentic pentesting and autonomous testing

what the major platforms actually deliver

what it costs

what your SOC 2 auditor will and will not accept from a PTaaS provider

But first, get familiar with the leading PTaaS vendors.

Who Are the Leading PTaaS Providers in 2026?

The PTaaS market in 2026 has two distinct tiers: established crowdsourced platforms that have been operating for 5–10 years, and newer AI-native platforms that have changed the methodology depth available to mid-market buyers.

Established crowdsourced PTaaS leaders: Cobalt (credit-based, fastest launch, best for DevSecOps-integrated first PTaaS engagement), HackerOne (largest researcher pool, bug bounty + PTaaS combination, FedRAMP capable), Synack (highest-vetting crowdsourced model, government and defense focus), Bugcrowd (broadest researcher community, flexible engagement models), NetSPI (enterprise consulting depth, complex multi-service environments), BreachLock (mid-market focus, fast delivery).

AI-native PTaaS platforms: CodeAnt AI (unified defensive + offensive on shared code intelligence, the only platform where the same system reviewing pull requests conducts PTaaS cycles), Pentera (automated security validation for internal network infrastructure), NodeZero/Horizon3.ai (autonomous network attack path chaining).

For B2B SaaS companies under 500 employees, the right PTaaS provider depends on one question: do you need human-validated crowdsourced testing, or AI-driven testing with deeper application-layer coverage? Cobalt is the most cost-efficient entry into crowdsourced PTaaS at this company size. CodeAnt AI is the right choice when white box source code analysis, gray box business logic testing, and a complete SOC 2 evidence package are required, and when pay-per-confirmed-high/critical-finding pricing matters to a growth-stage company that cannot absorb a $65K–$100K annual commitment regardless of findings.

What Is PTaaS (Penetration Testing as a Service)?

PTaaS is a delivery model for penetration testing that replaces the project-based annual engagement with continuous or on-demand testing delivered through a platform. The testing methodology (reconnaissance, exploitation, chain construction, reporting) does not change. What changes is how frequently testing runs, how findings flow to the teams who need to act on them, and what the output looks like for compliance.

Three things define a real PTaaS engagement and separate it from everything marketed as PTaaS that is not:

Continuous or cadenced testing. Rather than a fixed two-week window once a year, tests run on a defined schedule: monthly, quarterly, after major deployments, or continuously. New attack surface introduced by each deployment gets assessed within weeks, not the following year.

Real-time finding delivery. Findings surface during the test window, not in a PDF delivered three weeks after the engagement closes. A critical vulnerability confirmed on day two of a testing cycle is in the engineering team's remediation queue by day three.

Integrated remediation workflows. PTaaS platforms connect directly to Jira, GitHub Issues, Linear, and Slack. A confirmed finding creates a ticket automatically. Remediation is tracked inside the platform. Retesting is scheduled without a separate engagement or additional cost.

What PTaaS is not: a rebranded vulnerability scanner. This distinction matters because SOC 2 auditors, PCI-DSS assessors, and ISO 27001 reviewers are trained to identify the difference. A scanner identifies potential vulnerabilities through CVE signature matching and version detection; it does not confirm exploitation.

Real PTaaS delivers confirmed exploitation with working proof-of-concept, exploit chain construction across findings, business logic testing that requires understanding what the application is designed to do, and authenticated gray box testing across all role boundaries. If a provider cannot show you a finding with working proof-of-exploit, they are selling a scanner subscription with a PTaaS label.

PTaaS vs Traditional Penetration Testing: The Operational Difference

| Aspect | Traditional pentest | PTaaS |
|---|---|---|
| Testing cadence | Annual or semi-annual fixed window | Continuous, monthly, or deployment-triggered |
| Finding delivery | Static PDF 2–4 weeks post-engagement | Real-time dashboard during engagement |
| Remediation workflow | Email, spreadsheet tracking | Integrated ticketing: Jira, GitHub, Linear |
| Retest scheduling | Separate engagement, additional cost | Built into platform, on-demand |
| SOC 2 Type II evidence | Single point-in-time report | Continuous evidence trail across full audit period |
| Attack surface coverage | System as it existed on test date | System as it evolves across deployment cadence |
| Deployment velocity gap | Up to 365 days, average 180 days | 15–30 days depending on cadence |
| New asset detection | None; scope is fixed at engagement start | Fresh reconnaissance each cycle catches new subdomains, endpoints, cloud assets |
| Compliance report generation | Manual assembly per engagement | On-demand from platform, mapped to TSC/PCI/ISO controls |
| Cost model | Fixed per engagement | Subscription or credit-based annual |

The deployment velocity gap is the most operationally significant number in this table. For a SaaS team shipping code every week, closing detection time from 180 days to 15 days is the difference between finding a critical authentication bypass before adversaries discover it and finding it six months after it shipped. For context: the average cost of a data breach in 2024 was $4.88 million. The vulnerability most likely to cause a breach is the one introduced after the last test, exactly what annual testing misses by design.
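
The gap figures can be reproduced with simple arithmetic. The sketch below assumes vulnerabilities enter production at a uniformly random point between two tests, so the expected wait is half the test interval. That assumption and the function name `detection_gap_days` are illustrative, not a measured statistic.

```python
def detection_gap_days(test_interval_days: float) -> tuple[float, float]:
    """Return (average, worst-case) days a new vulnerability waits for the next test.

    Assumes vulnerabilities ship at a uniformly random point between two
    tests, so the expected wait is half the interval. This is a
    simplification for illustration only.
    """
    if test_interval_days <= 0:
        raise ValueError("test interval must be positive")
    return test_interval_days / 2, test_interval_days


# Compare three testing cadences under the same assumption.
for label, interval in [("annual", 365), ("quarterly", 90), ("monthly", 30)]:
    average, worst = detection_gap_days(interval)
    print(f"{label:>9}: average {average:.1f} days, worst case {worst} days")
```

Under this assumption an annual cadence averages 182.5 days, which rounds to the 180-day figure above, and a monthly cadence averages 15 days with a 30-day worst case.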

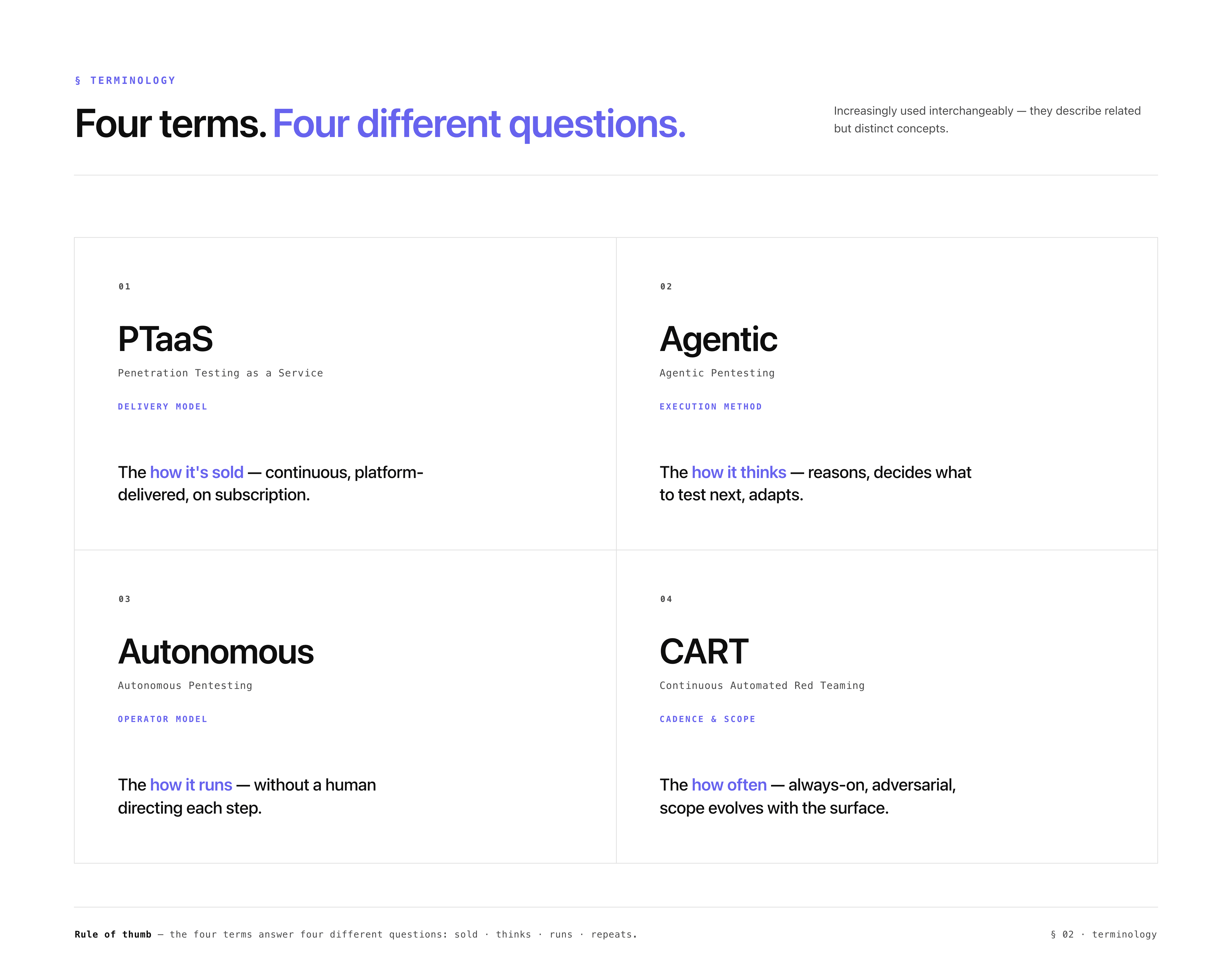

PTaaS, Agentic Pentesting, Autonomous Pentesting, and CART: What Each Term Means

These four terms are increasingly used interchangeably in vendor marketing. They describe related but distinct concepts, and understanding the differences is important when evaluating platforms and reading compliance requirements.

PTaaS is the delivery model: continuous, platform-driven, subscription-based penetration testing, as opposed to project-based annual engagements. PTaaS describes how testing is delivered, not how it is executed technically.

Agentic pentesting describes the execution methodology: AI-driven testing where an agent reasons about the attack surface and decides what to test next based on what it finds, rather than following a fixed signature-based script. An agentic pentesting platform does not run a predetermined checklist. It maps the attack surface, identifies what reconnaissance reveals, constructs exploit chains from combinations of findings, and adapts its approach based on what each phase surfaces. This is categorically different from a scanner that runs 50,000 known-vulnerability checks in sequence.

Autonomous pentesting extends the agentic concept to describe testing that runs without human operator involvement at the execution level. Autonomous pentesting platforms, like Pentera's automated security validation or Horizon3.ai's NodeZero, operate independently against defined targets and report findings without requiring a human researcher to direct each test step.

Continuous automated red teaming (CART) is the most aggressive implementation: adversarial simulation running continuously rather than on a cadence, with findings streaming in real time as the system evolves. CART treats the attack surface as a living target rather than a fixed scope: new assets get added automatically, new findings surface as they are confirmed, and the engagement never formally closes.

PTaaS is the delivery wrapper that enables all three. The platform infrastructure, real-time reporting, and compliance evidence generation are PTaaS. The methodology running inside that platform (agentic reasoning, autonomous execution, or continuous red teaming) determines the depth and accuracy of what gets found.

The most sophisticated implementations combine all four: a PTaaS delivery model with agentic AI execution that includes autonomous testing cycles and continuous red teaming against the external surface. This is where the gap between platforms becomes most visible, and where the difference between finding your most critical vulnerability and missing it entirely lives in methodology rather than marketing.

How PTaaS Works: The Technical Phases

PTaaS delivers the same testing phases as a full penetration engagement. The delivery model differs, not the methodology depth. Every cycle covers:

Scoping and asset registration

The first engagement establishes complete scope: every domain, subdomain, API endpoint, cloud infrastructure component, and repository. In subsequent cycles, scope updates flow through the platform: new subdomains, new API versions, and new cloud assets are added to the test registry before the next cycle begins. This is the mechanism that catches attack surface expansion between cycles. In a traditional annual test, scope is fixed at engagement start and stale by the end of the test window.

Reconnaissance and external surface mapping

Every cycle opens with fresh reconnaissance. DNS enumeration runs across 150+ subdomain prefix patterns. Certificate Transparency log queries surface any new subdomains that received TLS certificates since the last cycle, including subdomains that exist in CT logs but have been removed from active DNS, which may still be running servers with outdated software. Full TCP port scanning covers all discovered hosts. Cloud asset enumeration checks for new S3 buckets, Azure Blob containers, GCP storage, exposed CI/CD dashboards, and container registries. Any new asset discovered that was not present in the previous cycle is flagged immediately and tested in the current cycle, not deferred to the next.
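
The "new since the previous cycle" check comes down to comparing two reconnaissance snapshots. The following is a minimal sketch of that comparison. The `Asset` type, the `diff_cycles` helper, and the example hostnames are hypothetical, and real snapshots would come from DNS enumeration, CT log queries, and cloud enumeration rather than hard-coded sets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    kind: str  # e.g. "subdomain", "bucket", "port"
    name: str  # e.g. "api-v2.example.com"


def _sort_key(asset: Asset) -> tuple[str, str]:
    return (asset.kind, asset.name)


def diff_cycles(previous: set[Asset], current: set[Asset]) -> dict[str, list[Asset]]:
    """Compare two reconnaissance snapshots.

    'new' assets were absent last cycle and should be tested now;
    'gone' assets disappeared and may deserve a check for stale hosts.
    """
    return {
        "new": sorted(current - previous, key=_sort_key),
        "gone": sorted(previous - current, key=_sort_key),
    }


# Hypothetical snapshots from two consecutive cycles.
previous_cycle = {
    Asset("subdomain", "app.example.com"),
    Asset("subdomain", "legacy.example.com"),
}
current_cycle = {
    Asset("subdomain", "app.example.com"),
    Asset("subdomain", "api-v2.example.com"),
    Asset("bucket", "example-exports"),
}

changes = diff_cycles(previous_cycle, current_cycle)
for asset in changes["new"]:
    print(f"NEW  {asset.kind}: {asset.name} -> add to current cycle scope")
for asset in changes["gone"]:
    print(f"GONE {asset.kind}: {asset.name} -> check for a still-running host")
```

Tracking the "gone" set alongside the "new" set reflects the point about subdomains that have left active DNS but may still have a server running behind them.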

JavaScript bundle analysis

Every JS file served by the application is downloaded and analyzed every cycle. This catches secrets introduced since the last cycle: a developer who hardcoded a live API key in a new feature, an internal endpoint reference embedded in a frontend configuration file, a database connection string in a build artifact. Secret detection runs 30+ pattern types. Every confirmed secret is verified against the live service before reporting; for example, a Stripe key is tested against the Stripe API to confirm whether it is active and what permissions it grants. This phase is why PTaaS is not simply running the same test more frequently. New code ships every deployment. New JS bundles ship with it. The secrets and endpoint references change with every release and require fresh analysis every cycle.
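
Pattern-based secret detection can be sketched with a few regular expressions. The three patterns below are illustrative stand-ins for the larger pattern set described above; `scan_bundle` and the sample bundle are hypothetical, and the key in the sample is an obviously fake placeholder. The live verification step is deliberately left out of this sketch.

```python
import re

# Illustrative patterns only; a real engagement would use a much larger,
# maintained set of patterns.
SECRET_PATTERNS = {
    "stripe_live_secret_key": re.compile(r"\bsk_live_[0-9A-Za-z]{24,}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "internal_endpoint": re.compile(
        r"https?://[a-z0-9.-]+\.internal(?::\d+)?(?:/[\w./-]*)?"
    ),
}


def scan_bundle(source: str) -> list[tuple[str, str]]:
    """Return (pattern_name, matched_text) for every candidate secret in a JS bundle."""
    findings = []
    for name, pattern in SECRET_PATTERNS.items():
        for match in pattern.finditer(source):
            findings.append((name, match.group(0)))
    return findings


# Fake bundle content built from placeholders, not real credentials.
bundle = (
    'const cfg = {stripeKey: "' + "sk_live_" + "X" * 24 + '", '
    'exportUrl: "https://billing.internal:8443/v1/export"};'
)

for name, value in scan_bundle(bundle):
    # Print only a prefix so candidate secrets are not echoed in full.
    print(f"{name}: {value[:12]}... (candidate, verify before reporting)")
```

A regex match is only a candidate. As the text notes, it becomes a finding once it has been verified against the live service.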

Source code analysis (white box track)

In PTaaS engagements with repository access, the white box analysis runs incrementally. Rather than reading the entire codebase from scratch each cycle, the analysis focuses on code changed since the last engagement: new files, modified authentication configurations, new API controllers, changed middleware ordering. Changed authentication configurations receive the deepest scrutiny. A modification to a Spring Security filter chain configuration or Express.js middleware ordering that introduces an authentication bypass produces no external signal; an external scanner will see a 200 response and flag nothing. Reading the changed configuration catches the bypass before the first real request reaches it.
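
One way to picture the incremental track is a triage step that routes changed files into a deep-review queue or a standard queue. This is a sketch under assumptions: the path patterns and the `triage_changed_files` helper are hypothetical, and the list of changed paths is assumed to come from something like `git diff --name-only` between the last cycle's commit and the current one.

```python
from fnmatch import fnmatch

# Hypothetical path patterns for files that configure authentication or
# middleware ordering; a real project would tune these to its own layout.
HIGH_SCRUTINY_PATTERNS = [
    "*securityconfig*.java",  # e.g. Spring Security filter chain configuration
    "*middleware*",           # e.g. Express.js middleware registration
    "*auth*",                 # broad on purpose; expect some false positives
]


def triage_changed_files(changed_paths: list[str]) -> tuple[list[str], list[str]]:
    """Split changed files into (deep_review, standard_review) queues."""
    deep, standard = [], []
    for path in changed_paths:
        lowered = path.lower()
        if any(fnmatch(lowered, pattern) for pattern in HIGH_SCRUTINY_PATTERNS):
            deep.append(path)
        else:
            standard.append(path)
    return deep, standard


# Hypothetical set of files changed since the previous cycle.
changed = [
    "src/main/java/com/example/SecurityConfig.java",
    "server/middleware/index.js",
    "web/components/Button.tsx",
    "api/controllers/InvoiceController.ts",
]

deep_review, standard_review = triage_changed_files(changed)
print("deep review:", deep_review)
print("standard review:", standard_review)
```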

Authenticated gray box testing

Every cycle runs the full gray box track with test credentials for each role. IDOR testing covers every identifier-accepting endpoint systematically. Privilege escalation testing covers every admin endpoint with standard user credentials. JWT manipulation tests every token for signature validation failures. Business logic testing covers every critical workflow for step-bypass attacks, concurrent request race conditions, subscription tier abuse, and price manipulation. This is the category most completely absent from external-only testing, and the category where most critical findings in SaaS applications originate. PTaaS ensures IDOR vulnerabilities introduced in new features are found within 30 days of shipping, not in the following year's annual engagement.
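
Systematic IDOR testing means requesting every object as every user who does not own it. The toy example below uses in-memory handlers in place of real HTTP calls so it stays self-contained. The handlers, data, and the `find_idor` helper are all hypothetical.

```python
from typing import Callable, Hashable

# Toy in-memory "API" standing in for real HTTP endpoints.
INVOICES = {101: "alice", 202: "bob"}            # invoice id -> owner
DOCUMENTS = {"doc-a": "alice", "doc-b": "bob"}   # document id -> owner


def get_invoice(user: str, invoice_id: int) -> int:
    """Correct handler: checks ownership before returning data."""
    if invoice_id not in INVOICES:
        return 404
    return 200 if INVOICES[invoice_id] == user else 403


def get_document(user: str, doc_id: str) -> int:
    """Vulnerable handler: any authenticated user can read any document."""
    return 200 if doc_id in DOCUMENTS else 404


def find_idor(
    endpoint: Callable[[str, Hashable], int],
    owners: dict,
    users: list[str],
) -> list[tuple[str, Hashable]]:
    """Request every object as every non-owner; a 200 response is a confirmed IDOR."""
    hits = []
    for object_id, owner in owners.items():
        for user in users:
            if user != owner and endpoint(user, object_id) == 200:
                hits.append((user, object_id))
    return hits


users = ["alice", "bob"]
print("invoices:", find_idor(get_invoice, INVOICES, users))
print("documents:", find_idor(get_document, DOCUMENTS, users))
```

The same loop extends to privilege escalation testing by replaying admin-only requests with standard user credentials and treating any success as a finding.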

Exploit chain construction

Every confirmed finding from every track is cross-referenced for chain potential. Three medium-severity findings that individually get deprioritized combine into a critical chain when one provides the identifier another needs to exploit, and the third removes the final access control. PTaaS platforms that report findings in isolation, without evaluating chain potential, miss the attack paths that represent the most serious real-world risk and that sophisticated adversaries systematically construct. For more on how chain construction works technically, see the complete AI penetration testing methodology.
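
Chain evaluation can be modeled as forward chaining over what each finding requires and what it provides. The sketch below is a simplified illustration, not any platform's actual algorithm. The `Finding` fields, capability names, and `build_chain` helper are hypothetical, and the greedy loop may include steps a minimal chain would not need.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    name: str
    severity: str
    requires: frozenset = frozenset()  # capabilities needed to exploit it
    provides: frozenset = frozenset()  # capabilities gained by exploiting it


def build_chain(findings: list[Finding], goal: str) -> list[str]:
    """Greedy forward chaining: apply any finding whose requirements are met
    until the goal capability is reached or no further progress is possible.
    Returns the ordered finding names, or [] if the goal is unreachable."""
    have: set[str] = set()
    chain: list[str] = []
    remaining = list(findings)
    progress = True
    while goal not in have and progress:
        progress = False
        for finding in list(remaining):
            if finding.requires <= have:
                have |= finding.provides
                chain.append(finding.name)
                remaining.remove(finding)
                progress = True
                if goal in have:
                    break
    return chain if goal in have else []


# Three hypothetical medium-severity findings, listed out of exploit order.
findings = [
    Finding("IDOR on export endpoint", "medium",
            requires=frozenset({"victim_user_id", "export_access"}),
            provides=frozenset({"customer_data"})),
    Finding("User ID leak in public profile API", "medium",
            provides=frozenset({"victim_user_id"})),
    Finding("Missing role check on export route", "medium",
            provides=frozenset({"export_access"})),
]

print(" -> ".join(build_chain(findings, goal="customer_data")))
```

Here the ID leak supplies the identifier, the missing role check removes the access control, and only then does the IDOR reach customer data, which mirrors the three-finding chain described above.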

Real-time reporting and remediation integration

Critical and high findings surface immediately, not at cycle close. The platform creates tickets in the engineering workflow, assigns ownership, and tracks remediation status. On-demand retesting confirms fixes against the production environment and marks findings remediated or still open in the platform dashboard.

Compliance report generation

At cycle close, the platform generates compliance-ready documentation. For SOC 2: findings mapped to specific TSC control IDs (CC6.1, CC6.6, CC7.1), timeline documentation (discovery date, remediation date, verification date per finding), and a data deletion certificate. For PCI-DSS: requirement-specific mapping including Requirement 11.4 (penetration testing at least annually and after significant changes). For ISO 27001: Annex A control mapping. Reports are generated from the platform, not assembled manually from notes and screenshots after the fact.
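
A single row of that SOC 2 documentation might be assembled as in the sketch below. The control IDs are the ones named in this guide; the category labels, the `evidence_row` helper, and the example dates are hypothetical, and an auditor may expect a different mapping for a given finding.

```python
from datetime import date

# Control IDs are those named in this guide; the category labels are
# hypothetical and would be defined by the testing platform.
TSC_CONTROLS = {
    "authentication": ["CC6.1", "CC7.1"],
    "external_exposure": ["CC6.6", "CC7.1"],
}


def evidence_row(title: str, category: str,
                 discovered: date, remediated: date, verified: date) -> dict:
    """Build one finding row with control mapping and the three timeline dates."""
    if not discovered <= remediated <= verified:
        raise ValueError("expected discovered <= remediated <= verified")
    return {
        "finding": title,
        # Fall back to CC7.1 when the category has no specific mapping.
        "tsc_controls": TSC_CONTROLS.get(category, ["CC7.1"]),
        "discovered": discovered.isoformat(),
        "remediated": remediated.isoformat(),
        "verified": verified.isoformat(),
        "days_to_remediate": (remediated - discovered).days,
    }


row = evidence_row(
    "JWT signature not validated on /api/session",
    "authentication",
    discovered=date(2026, 3, 2),
    remediated=date(2026, 3, 9),
    verified=date(2026, 3, 11),
)
print(row)
```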

The Major PTaaS Platforms Compared (2026)

This is the section most PTaaS guides skip. Understanding what each platform actually delivers, not what the marketing says, determines whether you get findings your auditor accepts or a scanner report with a PTaaS label.

| Platform | Model | Confirmed exploitation | White box / source code | Gray box / authenticated | SOC 2 evidence package | Defensive code review | Best for |
|---|---|---|---|---|---|---|---|
| CodeAnt AI | Agentic AI + defensive platform | Yes, working PoC per finding | Yes, full dataflow tracing | Yes, systematic IDOR, privilege escalation, business logic | Yes, complete (retest, timeline, data deletion cert) | Yes, same platform reviews PRs in CI/CD | SaaS teams needing unified offensive + defensive coverage |
| Cobalt | Crowdsourced, credit-based | Yes, human-validated | No | Partial | Partial, verify before assuming SOC 2 acceptance | No | Fast-moving DevSecOps teams, first PTaaS engagement |
| HackerOne | Crowdsourced + bug bounty | Yes, human-validated | No | Partial | Partial, variable quality, verify explicitly | No | Organizations wanting researcher scale and bug bounty flexibility |
| Synack | AI-assisted crowdsource (SRT) | Yes, human-validated | No | Partial | Yes, strong compliance focus | No | Government, defense, highly regulated enterprises |
| Pentera | Automated security validation | Partial, automated confirmation | No | Limited | Partial | No | Continuous network/infrastructure validation |
| NetSPI | Enterprise PTaaS + consulting | Yes, deep manual | No | Yes | Yes, strong compliance mapping | No | Enterprise-scale, complex multi-service environments |
| Intruder | Continuous ASM + scanning | No, scanner-based | No | No | No, scanner output only | No | Continuous external surface monitoring, not PTaaS |

The critical difference that no comparison table fully captures: every other platform in the table operates exclusively on the offensive side. CodeAnt AI is the only platform where the same code intelligence reviewing your pull requests in CI/CD is also conducting reconnaissance against your external attack surface. This matters for PTaaS depth in a specific way: the offensive testing cycle is informed by months of defensive code review history. The platform already knows your authentication patterns, your middleware configuration, your data flows, and your insecure API call patterns before the first reconnaissance probe of the PTaaS cycle is sent. An adversary with persistent inside knowledge of your codebase testing your external surface is the most accurate simulation of how sophisticated real-world attacks operate. No external-only PTaaS platform can replicate this, because they have never seen your code.

For a deeper technical comparison of how these platforms handle specific vulnerability classes, see best AI penetration testing tools in 2026.

Top Alternatives to HackerOne PTaaS in 2026

Alternatives to HackerOne PTaaS are the most-searched PTaaS alternative query in 2026. Organizations evaluating alternatives typically cite cost at scale, inconsistent tester quality across engagements, and the absence of source code analysis as a standard service.

The most frequently recommended alternatives by category:

Closest crowdsourced alternatives (direct HackerOne replacements): Cobalt offers a credit-based model, faster test launch (24 hours), real-time reporting, and strong DevSecOps integrations, and is the most commonly recommended first alternative. Synack offers more rigorous tester vetting than HackerOne and is FedRAMP authorized, at premium pricing. Bugcrowd combines structured PTaaS with bug bounty flexibility and a broad researcher pool.

Enterprise methodology alternatives: NetSPI offers consulting-led PTaaS with deep manual testing and is strongest for complex enterprise environments. BreachLock has a mid-market focus, with AI-assisted testing, human validation, and fast delivery.

Structurally different alternative (not a direct replacement, a better architecture): CodeAnt AI addresses the problems that drive HackerOne alternative evaluations in the first place:

| Why teams leave HackerOne | What Cobalt/Synack/Bugcrowd offer | What CodeAnt AI offers |
|---|---|---|
| High cost at scale ($100K+/yr) | Similar cost range | Pay only for confirmed high/critical findings. $0 if only low/medium found. |
| No source code analysis | No source code analysis standard | Full white box source code analysis standard |
| Different tester each engagement, no accumulated context | Different tester each engagement | Same AI system accumulating code context across every PTaaS cycle |
| SOC 2 report requires post-processing for auditor acceptance | Partial, verify explicitly before assuming acceptance | Complete 8-document SOC 2 package: retest report, data deletion certificate, TSC control mapping (CC6.1, CC6.6, CC7.1) |
| No defensive code review | No defensive code review | CI/CD integrated code review on every pull request |

The right HackerOne alternative depends on what is actually driving the evaluation. If the requirement is human researcher scale with bug bounty integration: Cobalt or Bugcrowd. If the requirement is application-layer depth, code-informed PTaaS cycles, and a complete compliance evidence package without assembling it manually: CodeAnt AI is the only platform on this list built for that.

What PTaaS Costs in 2026

PTaaS pricing has standardized around scope and cadence rather than time-and-materials. Real market ranges based on what is publicly available and verifiable:

| Model | Annual cost | What is included | Best for |
|---|---|---|---|
| Entry PTaaS | $8,000–$15,000/yr | Single web app or API, quarterly cycles, platform access, basic compliance reports | Early-stage SaaS, SOC 2 Type I evidence |
| Mid-market PTaaS | $15,000–$40,000/yr | Full stack (web + API + cloud), monthly cycles, gray box, SOC 2 evidence package | SaaS teams shipping weekly, SOC 2 Type II |
| Enterprise PTaaS | $40,000–$100,000/yr | Multiple applications, continuous testing, white box, custom compliance mapping | Enterprise SaaS, PCI-DSS, complex infrastructure |
| Credit-based | $1,500–$3,000/credit | One credit = one test cycle on one asset, on-demand | Variable testing needs, supplemental coverage |

The cost comparison against traditional testing is significant. A semi-annual program with two traditional engagements and two retests costs $20,000–$60,000 plus manual evidence assembly, scheduling overhead, and scope renegotiation for each cycle. Mid-market PTaaS at $15,000–$40,000 annually delivers monthly testing, real-time findings, integrated remediation, and automatic compliance report generation, at comparable or lower total cost. For a full breakdown of what drives penetration testing pricing at each tier, see how much does a penetration test cost.

PTaaS Pricing Models Explained: What You're Actually Paying For

The pricing table above shows ranges. What it doesn't show is the structural difference between pricing models — which matters as much as the number itself.

Subscription/annual model (Cobalt, HackerOne, Synack, NetSPI): You pay a fixed annual fee regardless of how many findings are confirmed. A year with zero critical findings costs the same as a year with ten. For organizations that need guaranteed test cadence and budget predictability, this model works. The risk: you are paying for testing cycles, not confirmed risk.

Credit-based model (Cobalt, xBow): You purchase credits and redeem them per test cycle or engagement. More flexible than pure annual subscription, useful for variable testing needs across the year. Same underlying dynamic: cost is tied to testing activity, not to findings severity.

Outcome-based model (CodeAnt AI): You pay only when high or critical findings are confirmed. Low and medium findings are always free. Unlimited retests included until every finding is confirmed remediated. This aligns cost directly to actual risk found, not to time spent testing. For a growth-stage SaaS company, this model means the cost of a clean engagement is $0, and the cost of an engagement that finds a critical authentication bypass is the cost of knowing about it before an adversary does.
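
The structural difference between the three models shows up when each is written as a cost function. In the sketch below the annual fee and credit price are taken from the ranges in the pricing table. The per-finding price is a made-up placeholder, since no per-finding price is stated here, and all function names are hypothetical.

```python
def subscription_cost(annual_fee: int, confirmed_high_critical: int) -> int:
    """Fixed fee: the number of confirmed findings does not change the cost."""
    return annual_fee


def credit_cost(price_per_credit: int, test_cycles: int) -> int:
    """Cost scales with testing activity (one credit per cycle per asset)."""
    return price_per_credit * test_cycles


def outcome_cost(price_per_finding: int, confirmed_high_critical: int) -> int:
    """Cost scales with confirmed high/critical findings; low/medium are free."""
    return price_per_finding * confirmed_high_critical


ANNUAL_FEE = 15_000        # low end of the mid-market range above
PRICE_PER_CREDIT = 1_500   # low end of the credit range above
PRICE_PER_FINDING = 2_000  # placeholder assumption, not a published price

for findings in (0, 3):
    print(
        f"{findings} high/critical findings: "
        f"subscription ${subscription_cost(ANNUAL_FEE, findings):,}, "
        f"credits (4 cycles) ${credit_cost(PRICE_PER_CREDIT, 4):,}, "
        f"outcome-based ${outcome_cost(PRICE_PER_FINDING, findings):,}"
    )
```

Only the outcome-based function takes the finding count as a real input, which is why a clean engagement costs $0 under that model and the same fixed amount under the other two.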

What drives PTaaS cost across all models:

Number of applications, APIs, and IP ranges in scope

Testing frequency (quarterly vs. monthly vs. continuous)

Gray box testing (requires test credentials for each role, more scope)

White box/source code analysis (requires repository access, significantly deeper)

Compliance reporting format (SOC 2 vs. PCI-DSS vs. ISO 27001 mapping)

Retest included vs. charged separately

What SOC 2 Auditors Actually Accept from PTaaS

This is where most PTaaS evaluations go wrong, and where organizations make expensive mistakes that surface at audit time rather than during vendor selection.

SOC 2 does not explicitly mandate penetration testing in its written criteria. In 2026, however, 94% of SOC 2 auditors expect penetration testing evidence for CC7.1 (vulnerability management and monitoring) and CC4.1 (ongoing risk identification). Showing up to a SOC 2 Type II audit without penetration testing evidence is a risk most SaaS companies cannot afford: it triggers auditor questions that delay the audit and may result in a qualified opinion on CC7.1.

What auditors require, specifically:

Working proof-of-exploit per finding. Auditors look for evidence of "exploitable vulnerabilities," which means confirmed exploitation, not a scanner finding that a CVE matches your software version. A finding is only exploitable when someone exploited it and documented the exact request, the response showing unauthorized access, and the CVSS score with metric justification. Scanner output will not satisfy this requirement.

Findings mapped to specific TSC control IDs. Not "SOC 2" as a general reference. Specific control mappings: CC6.1 for authentication failures, CC6.6 for external threat protection findings, CC7.1 for the complete finding and remediation evidence set. Auditors will ask which specific criteria each finding addresses.

Retest report confirming verification in the production environment. This is the most commonly missing document in every testing program, not just PTaaS. A retest conducted against staging and not production leaves the audited system without verification evidence. The retest report must state explicitly that testing was conducted against the production environment. For the complete SOC 2 evidence package requirement, see SOC 2 penetration testing requirements.

Continuous evidence for Type II. A single annual pentest report is a point-in-time snapshot. SOC 2 Type II evaluates controls over a 6–12 month observation period, and auditors increasingly expect evidence of ongoing security monitoring throughout that window. Multiple PTaaS cycle reports across the observation period satisfy that expectation directly. This is the strongest argument for PTaaS over annual testing for Type II compliance specifically.

Data deletion certificate. Formal confirmation that all data accessed during testing, including customer records accessed during exploitation, was permanently destroyed. Enterprise customers and auditors reviewing vendor management controls (CC9.2) require this document. Many PTaaS providers do not issue it. Ask explicitly before signing.

What auditors will not accept: automated scanner output presented as penetration testing evidence. Auditors are trained to distinguish between a scan report and a penetration test report. The presence of working proof-of-exploit is the clearest distinguishing signal.
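
The requirements above can be turned into a pre-audit gap check. This is a sketch under assumptions: the data model, field names, and `audit_gaps` helper are hypothetical, and the threshold of two cycle reports is only a stand-in for "more than a single point-in-time report".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    title: str
    has_proof_of_exploit: bool
    tsc_controls: list[str]
    retest_environment: Optional[str]  # "production", "staging", or None


@dataclass
class EvidencePackage:
    findings: list[Finding]
    cycle_reports_in_period: int
    has_data_deletion_certificate: bool


def audit_gaps(package: EvidencePackage) -> list[str]:
    """List what is missing relative to the auditor expectations described above."""
    gaps = []
    for finding in package.findings:
        if not finding.has_proof_of_exploit:
            gaps.append(f"{finding.title}: no working proof-of-exploit")
        if not finding.tsc_controls:
            gaps.append(f"{finding.title}: not mapped to a TSC control ID")
        if finding.retest_environment != "production":
            gaps.append(f"{finding.title}: no retest against production")
    if package.cycle_reports_in_period < 2:
        gaps.append("only a single point-in-time report for the Type II period")
    if not package.has_data_deletion_certificate:
        gaps.append("data deletion certificate missing")
    return gaps


# Hypothetical package with one well-documented finding and one weak one.
package = EvidencePackage(
    findings=[
        Finding("Auth bypass on /admin", True, ["CC6.1", "CC7.1"], "production"),
        Finding("Outdated TLS library (CVE match)", False, [], "staging"),
    ],
    cycle_reports_in_period=4,
    has_data_deletion_certificate=False,
)

for gap in audit_gaps(package):
    print("GAP:", gap)
```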

You can also check out this guide on "The Question Every SOC 2 Auditor Will Ask That Most Engineering Teams Can't Answer"

The Gap PTaaS Alone Does Not Close

PTaaS is not a complete security program on its own. The most sophisticated PTaaS platforms deliver excellent continuous offensive coverage. What they do not do is review the code that ships between cycles.

A vulnerability introduced on day two of a monthly PTaaS cycle sits in production for 28 days before the next cycle detects it. For a team shipping multiple deployments per week, that is dozens of commits' worth of untested code in production at any given moment, a continuous stream of potential new findings between cycles.

The only program that closes this gap structurally is one where continuous offensive PTaaS testing is paired with continuous defensive code review on the same platform. When the same system analyzing every pull request for insecure patterns also conducts the reconnaissance and exploit chain construction in the PTaaS cycle, two things happen that neither program achieves independently.

First, the PTaaS cycle is deeper. It arrives with code intelligence: authentication patterns already mapped, data flows already traced, middleware configurations already understood. The offensive testing targets precisely the patterns that the defensive analysis has been flagging, confirming which ones are actually exploitable rather than theoretical.

Second, the defensive review is more accurate. When the PTaaS cycle confirms that a pattern the code review flagged as a potential false positive is genuinely exploitable in production, that finding calibrates the defensive analysis. Future pull requests containing the same pattern get flagged with higher confidence. The two programs make each other smarter over time.

This is the structural advantage of a unified defensive and offensive platform over separate PTaaS and code review tools: not that both functions exist, but that they share intelligence bidirectionally, making the offensive testing deeper and the defensive prevention more accurate simultaneously. For a full breakdown of why this matters operationally, see defensive vs offensive security.

How Do Buyers Evaluate PTaaS Platforms? The 5 Questions That Matter

Buyers evaluating PTaaS platforms in 2026 prioritize the balance between human expertise and automation depth, workflow integration, compliance reporting quality, and the speed of finding delivery. The evaluation criteria that separate real PTaaS from scanner-based services marketed as PTaaS come down to five questions. Ask any provider you are evaluating to answer these specifically, not in general capability terms, but with evidence:

Can you show me a finding with working proof-of-exploit? Ask for a redacted sample report. If findings contain CVE numbers, descriptions, and CVSS scores but no working request-and-response exploitation proof, it is a scanner. A real penetration test finding contains the exact request, the exact response showing unauthorized data or access, the root cause to file and line (for white box findings), and a specific remediation diff.

Does your retest cover the production environment specifically? Remediating a finding in staging and retesting there — then deploying to production without another verification — leaves the audited system without retest evidence. Ask explicitly: does the retest verification report state that testing was conducted against the production environment?

How do you handle scope changes between cycles? Every SaaS application adds new subdomains, API versions, and cloud assets continuously. A PTaaS platform that cannot incorporate scope changes between cycles will miss newly-introduced attack surface entirely. Ask how new assets discovered in reconnaissance are added to the test registry and whether they get tested in the current or following cycle.

Does your compliance report map to specific TSC control IDs? Ask for a sample SOC 2 compliance report. If it says "SOC 2 compliant" in the header without mapping each finding to CC6.1, CC6.6, CC7.1 with metric justification, your auditor will require supplementation — and that supplementation will come at additional cost and delay.

Do you issue a data deletion certificate on every cycle close? Many PTaaS providers do not produce this document at all. For organizations subject to enterprise customer security reviews or SOC 2 vendor management controls (CC9.2), this is not optional. Ask before signing.

How to Evaluate a PTaaS Provider

Choosing a PTaaS provider is not about features or pricing alone. It is about whether the platform can actually identify and validate real risk in your environment.

Before selecting a provider, validate these fundamentals:

Does it confirm exploitation or just scan? A real PTaaS platform delivers working proof-of-exploit, not just CVE matches or potential findings from automated scans.

Does it include retesting in production? Retest verification must be performed against the production environment and included as part of the engagement, not treated as a separate paid activity.

Does it map findings to SOC 2 controls? Reports should map vulnerabilities to specific control IDs such as CC6.1, CC6.6, and CC7.1, with clear evidence suitable for audit review.

Does it track new assets between cycles? The platform should continuously discover and test new subdomains, APIs, and cloud assets introduced between testing cycles.

If a provider cannot clearly demonstrate all four, it is not delivering true PTaaS. It is delivering a limited version of it.

PTaaS for Specific Environments: Azure DevOps, SonarQube Migration, and CI/CD-First Teams

PTaaS for teams on Azure DevOps: Organizations running on Azure DevOps need PTaaS platforms that integrate directly with Azure Boards and Azure Pipelines, not just Jira and GitHub. Cobalt, NetSPI, and Rhino Security Labs are the most commonly recommended PTaaS options for Azure DevOps environments. CodeAnt AI integrates natively with Azure DevOps for both the defensive code review layer (PR analysis in Azure Repos) and finding delivery (tickets auto-created in Azure Boards on finding confirmation). For teams that have already adopted CodeAnt AI for Azure DevOps code review, extending to PTaaS cycles on the same platform adds offensive coverage without adding another vendor or integration layer.

PTaaS for teams migrating off SonarQube or DeepSource: Teams moving off static analysis tools like SonarQube or DeepSource are often making a broader security program upgrade, from point-in-time SAST scanning to continuous, exploitability-confirmed coverage. The common migration path: replace SAST with a platform that combines continuous defensive code review and PTaaS offensive testing on one intelligence layer, rather than buying a standalone pentest tool to add alongside a new SAST tool. CodeAnt AI is the only platform that consolidates both: the same system replaces SonarQube/DeepSource for defensive code analysis and adds PTaaS offensive cycles, with findings from both tracks cross-referenced for chain potential.

PTaaS for CI/CD-first engineering teams: The teams for whom PTaaS delivers the most value are teams shipping code multiple times per week, where the deployment velocity gap between traditional annual testing and real attack surface exposure is most extreme. For CI/CD-first teams, the right PTaaS model is one where new subdomains, new API versions, and new cloud assets introduced between cycles are automatically discovered and tested in the next cycle. Ask any PTaaS provider: how long between when a new subdomain goes live and when it appears in your test registry? The answer should be one cycle or less.

Conclusion: From PTaaS to a Unified Security Model

PTaaS solves the biggest flaw in traditional penetration testing: the gap between how fast your system changes and how often it is tested. Continuous testing, real-time findings, and integrated remediation bring security closer to how modern SaaS actually operates.

But PTaaS alone is not enough.

Vulnerabilities are still introduced between testing cycles, and without code-level visibility, even continuous testing can miss how those issues originate. The real advantage comes from combining offensive testing with defensive intelligence.

This is where CodeAnt AI changes the model. By pairing continuous PTaaS with SAST-driven code analysis in the same platform, it not only finds vulnerabilities in production but also traces how they were introduced and prevents them from shipping again.

👉 If you want to reduce detection time and eliminate repeat vulnerabilities, start by evaluating a unified approach that connects your code to real-world attack paths.

Try our free penetration testing today. Pay only on high & critical issues.

FAQs

What is PTaaS (penetration testing as a service)?

Is PTaaS the same as a vulnerability scan?

Does PTaaS satisfy SOC 2 Type II requirements?

What is the difference between PTaaS and agentic pentesting?

How much does PTaaS cost in 2026?